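Introduction

AWS offers EKS, a managed Kubernetes service.

This article shares a procedure that lets you bring up an EKS cluster by copy and paste alone.

I scoped the IAM permissions as narrowly as I could, but some are granted broadly, so review them before use.

Everything is configured with awscli, so complete the setup described in the following article beforehand.

Prerequisites for EKS

Configuring policies for the IAM groups

Grant permissions as needed. None of the policies provided by default included the permission to create an EKS cluster, so we write our own policy.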

- Policy Name : AmazonEKSManagementPolicy

First, write the policy document to a file.

mkdir ~/policies

cd ~/policies

cat <<'EOF' > AmazonEKSManagementPolicy.json

{

"Version": "2012-10-17",

"Statement": [

{

"Sid": "VisualEditor0",

"Effect": "Allow",

"Action": [

"iam:ListServerCertificates",

"iam:ListPoliciesGrantingServiceAccess",

"iam:ListServiceSpecificCredentials",

"iam:ListMFADevices",

"iam:ListSigningCertificates",

"iam:ListVirtualMFADevices",

"iam:ListInstanceProfilesForRole",

"iam:ListSSHPublicKeys",

"iam:ListAttachedRolePolicies",

"iam:ListAttachedUserPolicies",

"iam:ListAttachedGroupPolicies",

"iam:ListRolePolicies",

"iam:ListAccessKeys",

"eks:CreateCluster",

"iam:ListPolicies",

"iam:ListSAMLProviders",

"iam:ListGroupPolicies",

"iam:ListEntitiesForPolicy",

"iam:ListRoles",

"iam:ListUserPolicies",

"iam:ListInstanceProfiles",

"eks:DeleteCluster",

"iam:ListPolicyVersions",

"iam:ListOpenIDConnectProviders",

"iam:ListGroupsForUser",

"iam:ListAccountAliases",

"iam:ListUsers",

"eks:DescribeCluster",

"iam:ListGroups",

"eks:ListClusters",

"iam:GetLoginProfile",

"iam:GetAccountSummary",

"iam:PassRole",

"iam:CreateRole",

"iam:AttachRolePolicy",

"iam:DetachRolePolicy",

"iam:CreateInstanceProfile",

"iam:DeleteRole",

"iam:AddRoleToInstanceProfile",

"iam:RemoveRoleFromInstanceProfile",

"iam:DeleteInstanceProfile",

"iam:GetRole",

"cloudformation:*",

"iam:CreateServiceLinkedRole"

],

"Resource": "*"

}

]

}

EOF

Create the policy with the aws command, using the profile set up for policy management.

aws iam --profile managepolicy create-policy --policy-name AmazonEKSManagementPolicy --policy-document file://AmazonEKSManagementPolicy.json

Memo: deleting the policy you created. Use this when you need to change the policy contents.

arn_AmazonEKSManagementPolicy=$(aws --output json --profile managepolicy iam list-policies | jq -r '.Policies | map(select(.PolicyName == "AmazonEKSManagementPolicy"))[].Arn')

aws iam --profile managepolicy detach-group-policy --group-name awscli-group --policy-arn $arn_AmazonEKSManagementPolicy

aws iam --profile managepolicy detach-group-policy --group-name gui-group --policy-arn $arn_AmazonEKSManagementPolicy

aws iam --profile managepolicy delete-policy --policy-arn $arn_AmazonEKSManagementPolicy

Attach the policies to the groups.

arn_AmazonEKSManagementPolicy=$(aws --output json --profile managepolicy iam list-policies | jq -r '.Policies | map(select(.PolicyName == "AmazonEKSManagementPolicy"))[].Arn')

aws --profile managepolicy iam attach-group-policy --policy-arn $arn_AmazonEKSManagementPolicy --group-name awscli-group

aws --profile managepolicy iam attach-group-policy --policy-arn $arn_AmazonEKSManagementPolicy --group-name gui-group

arn_AmazonEKSClusterPolicy=$(aws --output json --profile managepolicy iam list-policies | jq -r '.Policies | map(select(.PolicyName == "AmazonEKSClusterPolicy"))[].Arn')

aws --profile managepolicy iam attach-group-policy --policy-arn $arn_AmazonEKSClusterPolicy --group-name awscli-group

aws --profile managepolicy iam attach-group-policy --policy-arn $arn_AmazonEKSClusterPolicy --group-name gui-group

arn_AmazonEKSWorkerNodePolicy=$(aws --output json --profile managepolicy iam list-policies | jq -r '.Policies | map(select(.PolicyName == "AmazonEKSWorkerNodePolicy"))[].Arn')

aws --profile managepolicy iam attach-group-policy --policy-arn $arn_AmazonEKSWorkerNodePolicy --group-name awscli-group

aws --profile managepolicy iam attach-group-policy --policy-arn $arn_AmazonEKSWorkerNodePolicy --group-name gui-group

arn_AmazonEKSServicePolicy=$(aws --output json --profile managepolicy iam list-policies | jq -r '.Policies | map(select(.PolicyName == "AmazonEKSServicePolicy"))[].Arn')

aws --profile managepolicy iam attach-group-policy --policy-arn $arn_AmazonEKSServicePolicy --group-name awscli-group

aws --profile managepolicy iam attach-group-policy --policy-arn $arn_AmazonEKSServicePolicy --group-name gui-group

arn_AmazonEKS_CNI_Policy=$(aws --output json --profile managepolicy iam list-policies | jq -r '.Policies | map(select(.PolicyName == "AmazonEKS_CNI_Policy"))[].Arn')

aws --profile managepolicy iam attach-group-policy --policy-arn $arn_AmazonEKS_CNI_Policy --group-name awscli-group

aws --profile managepolicy iam attach-group-policy --policy-arn $arn_AmazonEKS_CNI_Policy --group-name gui-group

arn_AmazonEC2FullAccess=$(aws --output json --profile managepolicy iam list-policies | jq -r '.Policies | map(select(.PolicyName == "AmazonEC2FullAccess"))[].Arn')

aws --profile managepolicy iam attach-group-policy --policy-arn $arn_AmazonEC2FullAccess --group-name awscli-group

aws --profile managepolicy iam attach-group-policy --policy-arn $arn_AmazonEC2FullAccess --group-name gui-group

A VPC also has to be created, so attach the VPC policy as well.

arn_AmazonVPCFullAccess=$(aws --output json --profile managepolicy iam list-policies | jq -r '.Policies | map(select(.PolicyName == "AmazonVPCFullAccess"))[].Arn')

aws --profile managepolicy iam attach-group-policy --policy-arn $arn_AmazonVPCFullAccess --group-name awscli-group

aws --profile managepolicy iam attach-group-policy --policy-arn $arn_AmazonVPCFullAccess --group-name gui-group
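The attach commands above repeat the same lookup and two attach calls for every policy. As a rough sketch, they could be folded into a loop like the one below. The function names `attach_policy_to_groups` and `attach_all_policies` are hypothetical; the profile, group names, and policy names are the ones used above. The ARN lookup here uses the CLI's own `--query` option rather than jq, which is a substitution on my part and not the method used in the rest of this article.

```shell
#!/usr/bin/env bash
# Sketch only: these helpers are hypothetical and not part of awscli.

# Look up a policy ARN by name and attach it to each of the given IAM groups.
attach_policy_to_groups() {
  local policy_name=$1
  shift
  local arn group
  # Filter by PolicyName, print as text, and keep the first line that is an ARN.
  arn=$(aws --profile managepolicy iam list-policies \
    --query "Policies[?PolicyName=='${policy_name}'].Arn" --output text |
    tr '\t' '\n' | grep -m1 '^arn:')
  if [ -z "$arn" ]; then
    echo "policy not found: ${policy_name}" >&2
    return 1
  fi
  for group in "$@"; do
    aws --profile managepolicy iam attach-group-policy \
      --policy-arn "$arn" --group-name "$group" || return 1
  done
}

# Attach every policy used in this article to both groups.
attach_all_policies() {
  local policy
  for policy in AmazonEKSManagementPolicy AmazonEKSClusterPolicy \
    AmazonEKSWorkerNodePolicy AmazonEKSServicePolicy AmazonEKS_CNI_Policy \
    AmazonEC2FullAccess AmazonVPCFullAccess; do
    attach_policy_to_groups "$policy" awscli-group gui-group || return 1
  done
}
```

Calling `attach_all_policies` after the custom policy has been created should have the same effect as the individual commands, and it stops at the first policy that cannot be found.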

Creating the IAM role

Create the IAM role that EKS needs in order to start. As one example of how the role is used: when you create a Service (type: LoadBalancer) on Kubernetes, the permissions of this role are used to create an Elastic Load Balancer.

I could not get this to work with awscli, so create it from the Management Console.

- On the IAM page, click "Create Role"

- Select the EKS service and click "Next: Permissions"

- Two policies are selected automatically; leave them as they are and click "Next: Review"

- AmazonEKSClusterPolicy

- AmazonEKSServicePolicy

- Enter EKSRole as the role name and click "Create role"
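For reference, the same role can in principle be created from the command line. The sketch below was not part of the original procedure and rests on assumptions: the trust policy lets the service principal `eks.amazonaws.com` assume the role, the helper names and the file path are hypothetical, and the two policies are attached by their AWS-managed ARNs.

```shell
#!/usr/bin/env bash
# Sketch only: an awscli alternative to the console steps. Helper names are hypothetical.

# Write the trust policy that lets the EKS service assume the role.
write_eks_trust_policy() {
  local out_file=$1
  cat <<'EOF' > "$out_file"
{
  "Version": "2012-10-17",
  "Statement": [
    {
      "Effect": "Allow",
      "Principal": { "Service": "eks.amazonaws.com" },
      "Action": "sts:AssumeRole"
    }
  ]
}
EOF
}

# Create the role and attach the two managed policies that the console selects.
create_eks_role() {
  local role_name=$1 trust_file=$2 policy
  aws iam create-role --role-name "$role_name" \
    --assume-role-policy-document "file://${trust_file}" || return 1
  for policy in AmazonEKSClusterPolicy AmazonEKSServicePolicy; do
    aws iam attach-role-policy --role-name "$role_name" \
      --policy-arn "arn:aws:iam::aws:policy/${policy}" || return 1
  done
}
```

A possible invocation would be `write_eks_trust_policy ~/policies/eks-trust.json && create_eks_role EKSRole ~/policies/eks-trust.json`. If it fails in your account, the console steps above remain the path this article has verified.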

Creating the VPC

AWS provides a CloudFormation template that creates the VPC, so we use it.

Download the CloudFormation template file.

mkdir ~/eks

cd ~/eks

rm -f amazon-eks-vpc-sample.yaml

wget https://amazon-eks.s3-us-west-2.amazonaws.com/cloudformation/2018-08-21/amazon-eks-vpc-sample.yaml

Optional (skip if not needed): first, prepare the parameters to pass to CloudFormation in JSON format.

cat <<'EOF' > ~/eks_cfn_vpc.json

[

{

"ParameterKey": "VpcBlock",

"ParameterValue": "10.10.0.0/16"

},

{

"ParameterKey": "Subnet01Block",

"ParameterValue": "10.10.0.0/24"

},

{

"ParameterKey": "Subnet02Block",

"ParameterValue": "10.10.1.0/24"

},

{

"ParameterKey": "Subnet03Block",

"ParameterValue": "10.10.2.0/24"

}

]

EOF

Create the stack.

vpcstack_arn=$(aws cloudformation create-stack --stack-name askboxvpc --template-body file://~/eks/amazon-eks-vpc-sample.yaml --capabilities CAPABILITY_IAM)

Optional (skip if not needed): the variant that specifies the subnet CIDRs.

vpcstack_arn=$(aws cloudformation create-stack --stack-name askboxvpc --template-body file://~/eks/amazon-eks-vpc-sample.yaml --parameters file://~/eks_cfn_vpc.json --capabilities CAPABILITY_IAM)

Check the status. Wait until it becomes CREATE_COMPLETE.

aws cloudformation describe-stacks --stack-name $vpcstack_arn --query Stacks[].StackStatus
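Rather than rerunning the status command by hand, the wait can be scripted. The following is a minimal sketch, assuming the stack name or ID is passed as the first argument; `wait_for_stack` is a hypothetical helper name, and the 15-second default interval is an arbitrary choice.

```shell
#!/usr/bin/env bash
# Sketch only: wait_for_stack is a hypothetical helper built on describe-stacks.

# Poll a CloudFormation stack until it reaches CREATE_COMPLETE.
# Arguments: stack name or ID, polling interval in seconds (default 15).
wait_for_stack() {
  local stack=$1 interval=${2:-15} status
  while true; do
    status=$(aws cloudformation describe-stacks --stack-name "$stack" \
      --query 'Stacks[0].StackStatus' --output text) || return 1
    echo "status: ${status}"
    case "$status" in
      CREATE_COMPLETE) return 0 ;;
      CREATE_IN_PROGRESS) sleep "$interval" ;;
      # Any other status (for example a rollback) is treated as a failure.
      *) echo "stack creation did not succeed: ${status}" >&2; return 1 ;;
    esac
  done
}
```

It would be called as `wait_for_stack "$vpcstack_arn"`. The CLI also ships a built-in waiter, `aws cloudformation wait stack-create-complete --stack-name <name>`, which may be enough if you do not need the intermediate status lines.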

Memo: deleting the VPC stack.

aws cloudformation delete-stack --stack-name $vpcstack_arn

Creating the key pair

Create a key pair; any key will do.

ssh-keygen

Example run

[root@sugi-awscli ~]# ssh-keygen

Generating public/private rsa key pair.

Enter file in which to save the key (/root/.ssh/id_rsa):

Enter passphrase (empty for no passphrase):

Enter same passphrase again:

Your identification has been saved in /root/.ssh/id_rsa.

Your public key has been saved in /root/.ssh/id_rsa.pub.

The key fingerprint is:

SHA256:V6w24pHZgrB0dtz+RUtw2JDYQmvS795axffPxtyaWYs root@sugi-awscli.localdomain

The key's randomart image is:

+---[RSA 2048]----+

| ..oo=. |

| . ooo+o. |

| o o + =.o o |

| . = o B + o o |

| . . S B . o +|

| . * + . .o|

| . o .o+|

| . o.=B|

| oE=+o|

+----[SHA256]-----+

Import the key pair into AWS.

aws ec2 import-key-pair --key-name "eks_keypair" --public-key-material file://~/.ssh/id_rsa.pub

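Installing the components needed to operate EKS on the workstation

kubectl

To operate the EKS cluster, install the command-line tool called kubectl.

First, download the kubectl binary from Amazon. (The binary differs for Linux, Mac, and Windows, so choose the correct one.)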

curl -o kubectl https://amazon-eks.s3-us-west-2.amazonaws.com/1.10.3/2018-07-26/bin/linux/amd64/kubectl

Make the downloaded binary executable.

chmod +x ./kubectl

Move the binary to /usr/local/bin.

mv ./kubectl /usr/local/bin

To verify the installation, display the kubectl version.

[root@sugi-awscli ~]# kubectl version --short --client

Client Version: v1.10.3

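aws-iam-authenticator (formerly Heptio Authenticator)

In standard Kubernetes, user authentication and authorization are handled on the Kubernetes side.

aws-iam-authenticator is a tool that lets EKS authenticate users with AWS IAM.

This has the advantage that users do not have to be managed in two places.

Download the binary.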

curl -o aws-iam-authenticator https://amazon-eks.s3-us-west-2.amazonaws.com/1.10.3/2018-07-26/bin/linux/amd64/aws-iam-authenticator

Make it executable.

chmod +x ./aws-iam-authenticator

Move the binary to /usr/local/bin.

mv ./aws-iam-authenticator /usr/local/bin

To verify the installation, display the help.

aws-iam-authenticator help

Example run

[root@sugi-awscli ~]# aws-iam-authenticator help

A tool to authenticate to Kubernetes using AWS IAM credentials

Usage:

heptio-authenticator-aws [command]

Available Commands:

help Help about any command

init Pre-generate certificate, private key, and kubeconfig files for the server.

server Run a webhook validation server suitable that validates tokens using AWS IAM

token Authenticate using AWS IAM and get token for Kubernetes

verify Verify a token for debugging purpose

Flags:

-i, --cluster-id ID Specify the cluster ID, a unique-per-cluster identifier for your heptio-authenticator-aws installation.

-c, --config filename Load configuration from filename

-h, --help help for heptio-authenticator-aws

Use "heptio-authenticator-aws [command] --help" for more information about a command.

Creating the EKS cluster

As of August 2018, EKS is supported in the following two regions.

This article uses us-west-2.

- US East (N. Virginia) : us-east-1

- US West (Oregon) : us-west-2

Get the ARN of the IAM role created for EKS.

eks_iamrole=$(aws --output json iam list-roles | jq -r '.Roles | map(select(.RoleName == "EKSRole"))[].Arn')

Get the IDs of the VPC subnets to assign.

vpcstack_arn=$(aws --output json cloudformation list-stacks --stack-status-filter CREATE_COMPLETE | jq -r '.StackSummaries | map(select(.StackName == "askboxvpc"))[].StackId')

subnetids=$(aws --output json cloudformation describe-stacks --stack-name $vpcstack_arn | jq -r '.Stacks[0].Outputs | map(select(.OutputKey == "SubnetIds"))[0].OutputValue')

arr=( `echo $subnetids | tr -s ',' ' '`)

subnet1=${arr[0]}

subnet2=${arr[1]}

subnet3=${arr[2]}
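The backtick, echo, and tr combination above works because the shell splits the result on whitespace. As an alternative sketch, bash can split on the comma directly with `read -a`, which avoids the extra processes. The helper name `split_csv` and the subnet IDs below are made-up sample values, not output from a real stack.

```shell
#!/usr/bin/env bash
# Sketch only: split_csv is a hypothetical helper and the IDs are sample values.

# Split a comma-separated string into the global array "parts".
split_csv() {
  IFS=',' read -r -a parts <<< "$1"
}

split_csv "subnet-0aaa,subnet-0bbb,subnet-0ccc"
subnet1=${parts[0]}
subnet2=${parts[1]}
subnet3=${parts[2]}
echo "found ${#parts[@]} subnets: ${subnet1} ${subnet2} ${subnet3}"
```

In the procedure above you would call `split_csv "$subnetids"` instead of passing the sample string.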

Get the VPC ID.

vpcid=$(aws --output json cloudformation describe-stacks --stack-name $vpcstack_arn | jq -r '.Stacks[0].Outputs | map(select(.OutputKey == "VpcId"))[0].OutputValue')

Get the ID of the ControlPlaneSecurityGroup security group that was created along with the VPC.

secgroup_id=$(aws --output json cloudformation describe-stacks --stack-name $vpcstack_arn | jq -r '.Stacks[0].Outputs | map(select(.OutputKey == "SecurityGroups"))[0].OutputValue')
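The three lookups above differ only in the output key (SubnetIds, VpcId, SecurityGroups). A small helper can capture that pattern. This is a sketch, not the method used in this article: `stack_output` is a hypothetical name, and it relies on the CLI's `--query` option with text output instead of jq.

```shell
#!/usr/bin/env bash
# Sketch only: stack_output is a hypothetical helper.

# Print the value of one named output of a CloudFormation stack.
# Arguments: stack name or ID, output key (for example VpcId).
stack_output() {
  local stack=$1 key=$2
  aws cloudformation describe-stacks --stack-name "$stack" \
    --query "Stacks[0].Outputs[?OutputKey=='${key}'].OutputValue" --output text
}
```

With it, the lookups could be written as `vpcid=$(stack_output "$vpcstack_arn" VpcId)` and `secgroup_id=$(stack_output "$vpcstack_arn" SecurityGroups)`. Selecting by key name also keeps working if the order of the outputs changes.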

Create the EKS cluster.

eks_clustername=askbox

aws eks create-cluster --name $eks_clustername --role-arn $eks_iamrole --resources-vpc-config subnetIds=$subnet1,$subnet2,$subnet3,securityGroupIds=$secgroup_id

You can confirm that the status is CREATING.

[root@sugi-awscli policies]# aws eks describe-cluster --name $eks_clustername --query cluster.status

CREATING

After about 10 minutes, the status changes to ACTIVE.

[root@sugi-awscli policies]# aws eks describe-cluster --name $eks_clustername --query cluster.status

ACTIVE
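Since cluster creation takes around ten minutes, it can be convenient to poll with an upper bound instead of checking by hand. The sketch below assumes the cluster name is passed as the first argument; `wait_for_cluster_active` is a hypothetical helper, and the interval and retry count are arbitrary defaults.

```shell
#!/usr/bin/env bash
# Sketch only: wait_for_cluster_active is a hypothetical helper.

# Poll the cluster status until it is ACTIVE, giving up after max_tries polls.
# Arguments: cluster name, interval in seconds (default 30), max tries (default 40).
wait_for_cluster_active() {
  local name=$1 interval=${2:-30} max_tries=${3:-40} status try
  for ((try = 1; try <= max_tries; try++)); do
    status=$(aws eks describe-cluster --name "$name" \
      --query cluster.status --output text) || return 1
    echo "try ${try}: ${status}"
    case "$status" in
      ACTIVE) return 0 ;;
      FAILED) return 1 ;;
    esac
    sleep "$interval"
  done
  echo "timed out waiting for ${name}" >&2
  return 1
}
```

Running `wait_for_cluster_active "$eks_clustername"` before moving on to the kubectl configuration makes sure the endpoint and certificate data are available when they are read.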

Configuring kubectl

Create a directory to hold the Kubernetes config.

mkdir -p ~/.kube

Generate the following file under ~/.kube.

cat <<'EOF' > ~/.kube/config-manual
apiVersion: v1
clusters:
- cluster:
    server: <endpoint-url>
    certificate-authority-data: <base64-encoded-ca-cert>
  name: kubernetes
contexts:
- context:
    cluster: kubernetes
    namespace: default
    user: aws
  name: aws
current-context: aws
kind: Config
preferences: {}
users:
- name: aws
  user:
    exec:
      apiVersion: client.authentication.k8s.io/v1alpha1
      command: aws-iam-authenticator
      args:
        - "token"
        - "-i"
        - "<cluster-name>"
        # - "-r"
        # - "<role-arn>"
      # env:
        # - name: AWS_PROFILE
        #   value: "<aws-profile>"
EOF

Store the endpoint and certificateAuthority.data in variables. kubectl uses them when it authenticates to the cluster.

eks_endpoint=$(aws eks describe-cluster --name $eks_clustername --query cluster.endpoint)

eks_certdata=$(aws eks describe-cluster --name $eks_clustername --query cluster.certificateAuthority.data)

Edit the file with sed.

sed -i -e "s#<endpoint-url>#$eks_endpoint#g" ~/.kube/config-manual

sed -i -e "s#<base64-encoded-ca-cert>#$eks_certdata#g" ~/.kube/config-manual

sed -i -e "s/<cluster-name>/$eks_clustername/g" ~/.kube/config-manual
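One detail matters when substituting the certificate data: base64 text can contain `/`, so a sed expression that uses `/` as its delimiter can break on it. The sketch below shows the three substitutions with `#` as the delimiter throughout. The helper name `fill_kubeconfig` is hypothetical, and the endpoint and certificate strings are made-up sample values.

```shell
#!/usr/bin/env bash
# Sketch only: fill_kubeconfig is hypothetical and the values are samples.

# Replace the three placeholders in a kubeconfig template read from stdin.
# '#' is the sed delimiter because base64 data can contain '/'.
fill_kubeconfig() {
  local endpoint=$1 certdata=$2 cluster=$3
  sed -e "s#<endpoint-url>#${endpoint}#g" \
      -e "s#<base64-encoded-ca-cert>#${certdata}#g" \
      -e "s#<cluster-name>#${cluster}#g"
}

# Demonstration on a three-line excerpt of the template.
printf '%s\n' 'server: <endpoint-url>' \
  'certificate-authority-data: <base64-encoded-ca-cert>' \
  '- "<cluster-name>"' |
  fill_kubeconfig "https://EXAMPLE.us-west-2.eks.amazonaws.com" \
    "LS0tLS1C/UdJTiBD+Q==" "askbox"
```

Because the function reads stdin and writes stdout, the result can be inspected before it overwrites anything, for example by redirecting it to a temporary file first.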

Set the environment variable.

export KUBECONFIG=$KUBECONFIG:~/.kube/config-manual

Add it to ~/.bashrc.

echo 'export KUBECONFIG=~/.kube/config-manual' >> ~/.bashrc

Check the Services that are created by default.

[root@sugi-awscli eks]# kubectl get svc --all-namespaces -o wide

NAMESPACE NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE SELECTOR

default kubernetes ClusterIP 10.100.0.1 <none> 443/TCP 3m <none>

kube-system kube-dns ClusterIP 10.100.0.10 <none> 53/UDP,53/TCP 3m k8s-app=kube-dns

Check the Pods as well.

[root@sugi-awscli eks]# kubectl get pod --all-namespaces -o wide

NAMESPACE NAME READY STATUS RESTARTS AGE IP NODE

kube-system kube-dns-64b69465b4-xw6hw 0/3 Pending 0 3m <none> <none>

Deployment

[root@sugi-awscli eks]# kubectl get deployment --all-namespaces -o wide

NAMESPACE NAME DESIRED CURRENT UP-TO-DATE AVAILABLE AGE CONTAINERS IMAGES SELECTOR

kube-system kube-dns 1 1 1 0 3m kubedns,dnsmasq,sidecar 602401143452.dkr.ecr.us-west-2.amazonaws.com/eks/kube-dns/kube-dns:1.14.10,602401143452.dkr.ecr.us-west-2.amazonaws.com/eks/kube-dns/dnsmasq-nanny:1.14.10,602401143452.dkr.ecr.us-west-2.amazonaws.com/eks/kube-dns/sidecar:1.14.10 k8s-app=kube-dns

Event

[root@sugi-awscli eks]# kubectl get events --all-namespaces

NAMESPACE LAST SEEN FIRST SEEN COUNT NAME KIND SUBOBJECT TYPE REASON SOURCE MESSAGE

kube-system 3m 3m 1 kube-controller-manager.154e6e1fb97c6e3f Endpoints Normal LeaderElection controller-manager ip-10-0-110-101.us-west-2.compute.internal_5c999f0b-a929-11e8-b246-06d410ffe9ec became leader

kube-system 25s 3m 15 kube-dns-7cc87d595-kt8wg.154e6e2174906809 Pod Warning FailedScheduling default-scheduler no nodes available to schedule pods

kube-system 3m 3m 1 kube-dns-7cc87d595.154e6e21747b0cf6 ReplicaSet Normal SuccessfulCreate replicaset-controller Created pod: kube-dns-7cc87d595-kt8wg

kube-system 3m 3m 1 kube-dns.154e6e2173b4273b Deployment Normal ScalingReplicaSet deployment-controller Scaled up replica set kube-dns-7cc87d595 to 1

kube-system 3m 3m 1 kube-scheduler.154e6e1fe6e4cb04 Endpoints Normal LeaderElection default-scheduler ip-10-0-110-101.us-west-2.compute.internal_58fe49b2-a929-11e8-bf2a-06d410ffe9ec became leader

Note that the master does not appear when you get the nodes.

[root@sugi-awscli ~]# kubectl get node

No resources found.

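Installing components that make Kubernetes easier to operate

Install some components that make operating Kubernetes more convenient. They are not required, so skip this section if you do not need them.

Enabling bash_completion

To enable completion of kubectl subcommands, install the following package.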

yum install -y bash-completion

Append the following line to ~/.bash_profile.

echo "source <(kubectl completion bash)" >> ~/.bash_profile

Exit the terminal and log in again, and kubectl completion is enabled.

Installing kubectx and kubens

Install tools that are handy when managing Kubernetes clusters.

- kubectx : makes it easy to switch the cluster that kubectl runs against

- kubens : makes it easy to switch the namespace that kubectl uses

sudo git clone https://github.com/ahmetb/kubectx /opt/kubectx

sudo ln -s /opt/kubectx/kubectx /usr/local/bin/kubectx

sudo ln -s /opt/kubectx/kubens /usr/local/bin/kubens

Installing kube-prompt-bash (CentOS 7)

Install a tool that shows the Kubernetes cluster and namespace in the bash prompt.

cd ~

git clone https://github.com/Sugi275/kube-prompt-bash.git

echo "source ~/kube-prompt-bash/kube-prompt-bash.sh" >> ~/.bashrc

echo 'export PS1='\''[\u@\h \W($(kube_prompt))]\$ '\' >> ~/.bashrc

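Adding EKS nodes

Launching the EC2 instances

You add nodes to EKS by adding EC2 instances.

AWS provides a CloudFormation template that launches the EC2 instances.

Define the parameters to pass to CloudFormation in the following JSON. Change the values as appropriate for your environment.

Setting BootstrapArguments apparently lets you specify things such as extra arguments for kubelet and the maximum number of Pods per node.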

https://github.com/awslabs/amazon-eks-ami/blob/master/files/bootstrap.sh

The AMIs available for nodes are listed at the following URL.

https://docs.aws.amazon.com/eks/latest/userguide/eks-optimized-ami.html

cat <<'EOF' > ~/eks/eks_cfn_node.json

[

{

"ParameterKey": "KeyName",

"ParameterValue": "eks_keypair"

},

{

"ParameterKey": "NodeImageId",

"ParameterValue": "ami-08cab282f9979fc7a"

},

{

"ParameterKey": "NodeInstanceType",

"ParameterValue": "t2.medium"

},

{

"ParameterKey": "NodeAutoScalingGroupMinSize",

"ParameterValue": "1"

},

{

"ParameterKey": "NodeAutoScalingGroupMaxSize",

"ParameterValue": "3"

},

{

"ParameterKey": "ClusterName",

"ParameterValue": "clustername_sed"

},

{

"ParameterKey": "BootstrapArguments",

"ParameterValue": ""

},

{

"ParameterKey": "NodeGroupName",

"ParameterValue": "eksnodegroup"

},

{

"ParameterKey": "ClusterControlPlaneSecurityGroup",

"ParameterValue": "secgroupid_sed"

},

{

"ParameterKey": "VpcId",

"ParameterValue": "vpcid_sed"

},

{

"ParameterKey": "Subnets",

"ParameterValue": "subnet1_sed,subnet2_sed,subnet3_sed"

}

]

EOF

Put the information needed to edit the JSON file into shell variables.

vpcstack_arn=$(aws --output json cloudformation list-stacks --stack-status-filter CREATE_COMPLETE | jq -r '.StackSummaries | map(select(.StackName == "askboxvpc"))[].StackId')

subnetids=$(aws --output json cloudformation describe-stacks --stack-name $vpcstack_arn | jq -r '.Stacks[0].Outputs | map(select(.OutputKey == "SubnetIds"))[0].OutputValue')

arr=( `echo $subnetids | tr -s ',' ' '`)

subnet1=${arr[0]}

subnet2=${arr[1]}

subnet3=${arr[2]}

vpcid=$(aws --output json cloudformation describe-stacks --stack-name $vpcstack_arn | jq -r '.Stacks[0].Outputs | map(select(.OutputKey == "VpcId"))[0].OutputValue')

secgroup_id=$(aws --output json cloudformation describe-stacks --stack-name $vpcstack_arn | jq -r '.Stacks[0].Outputs | map(select(.OutputKey == "SecurityGroups"))[0].OutputValue')
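If any of these variables is empty, the sed substitutions that use them silently write an empty value into the JSON file. Note in particular that `eks_clustername` was set in the cluster creation step and is not set again here, so it is empty in a fresh shell. A small guard can catch this before the file is edited. The following is a sketch; `require_vars` is a hypothetical helper name and the two demo variables are made up.

```shell
#!/usr/bin/env bash
# Sketch only: require_vars is a hypothetical helper.

# Return non-zero and name the culprit if any listed shell variable is empty.
require_vars() {
  local name missing=0
  for name in "$@"; do
    # ${!name} is bash indirect expansion: the value of the variable named by $name.
    if [ -z "${!name:-}" ]; then
      echo "variable is empty: ${name}" >&2
      missing=1
    fi
  done
  return "$missing"
}

# Demonstration with one set and one empty variable.
demo_set=value
demo_empty=
require_vars demo_set demo_empty || echo "fix the variables before running sed"
```

In this procedure the check would be `require_vars subnet1 subnet2 subnet3 vpcid secgroup_id eks_clustername`, run just before the sed commands.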

Edit the file with sed.

sed -i -e "s/subnet1_sed/$subnet1/g" ~/eks/eks_cfn_node.json

sed -i -e "s/subnet2_sed/$subnet2/g" ~/eks/eks_cfn_node.json

sed -i -e "s/subnet3_sed/$subnet3/g" ~/eks/eks_cfn_node.json

sed -i -e "s/vpcid_sed/$vpcid/g" ~/eks/eks_cfn_node.json

sed -i -e "s/secgroupid_sed/$secgroup_id/g" ~/eks/eks_cfn_node.json

sed -i -e "s/clustername_sed/$eks_clustername/g" ~/eks/eks_cfn_node.json

Deploy the EC2 instances with CloudFormation using the following command. It takes about 3 minutes.

nodestack_arn=$(aws cloudformation create-stack --stack-name askboxnodes --template-url https://amazon-eks.s3-us-west-2.amazonaws.com/cloudformation/2018-08-21/amazon-eks-nodegroup.yaml --parameters file://~/eks/eks_cfn_node.json --capabilities CAPABILITY_IAM)

Check the stack status.

aws cloudformation describe-stacks --stack-name $nodestack_arn --query Stacks[].StackStatus

Example run. Wait until CREATE_IN_PROGRESS becomes CREATE_COMPLETE.

[root@sugi-awscli eks]# aws cloudformation describe-stacks --stack-name $nodestack_arn --query Stacks[].StackStatus

CREATE_COMPLETE

Also confirm that the status checks of the EC2 instances reach 2/2 checks. Note that this happens later than the stack becoming complete.

Get the IDs of the EC2 instances created for the EKS cluster.

instanceids=$(aws --output json ec2 describe-instances | jq -r '.Reservations | map(select(.Instances[].Tags[].Key == "kubernetes.io/cluster/askbox"))[].Instances[].InstanceId')

arr=( `echo $instanceids | tr -s ',' ' '`)

instanceid1=${arr[0]}

instanceid2=${arr[1]}

instanceid3=${arr[2]}

All of the following must show a Status of passed.

aws --output json ec2 describe-instance-status --instance-ids $instanceid1

aws --output json ec2 describe-instance-status --instance-ids $instanceid2

aws --output json ec2 describe-instance-status --instance-ids $instanceid3
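Reading three JSON documents by eye is error-prone, so the check can be reduced to one line per instance. The sketch below assumes the statuses have already been flattened to text with one line per instance in the form "instance ID, system status, instance status"; `all_checks_ok` is a hypothetical helper name.

```shell
#!/usr/bin/env bash
# Sketch only: all_checks_ok is a hypothetical helper.

# Read lines of "<instance-id> <system-status> <instance-status>" from stdin
# and succeed only if at least one line was read and every line reports "ok"
# for both checks.
all_checks_ok() {
  local id system instance seen=0 failed=0
  while read -r id system instance; do
    [ -z "$id" ] && continue
    seen=1
    if [ "$system" != "ok" ] || [ "$instance" != "ok" ]; then
      echo "not ready: ${id} (system=${system}, instance=${instance})"
      failed=1
    fi
  done
  [ "$seen" -eq 1 ] && [ "$failed" -eq 0 ]
}

# Demonstration with made-up instance IDs.
printf 'i-0aaa\tok\tok\ni-0bbb\tok\tinitializing\n' | all_checks_ok ||
  echo "some instances are not ready yet"
```

One way to produce that input is `aws ec2 describe-instance-status --instance-ids $instanceid1 $instanceid2 $instanceid3 --query 'InstanceStatuses[].[InstanceId,SystemStatus.Status,InstanceStatus.Status]' --output text`, piped into `all_checks_ok`. The CLI also has a built-in waiter, `aws ec2 wait instance-status-ok --instance-ids ...`, which blocks until the checks pass.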

Example run

[root@sugi-awscli ~(default aws)]# aws --output json ec2 describe-instance-status --instance-ids $instanceid1

{

"InstanceStatuses": [

{

"InstanceId": "i-00ff0a999a7669c04",

"InstanceState": {

"Code": 16,

"Name": "running"

},

"AvailabilityZone": "us-west-2c",

"SystemStatus": {

"Status": "ok",

"Details": [

{

"Status": "passed",

"Name": "reachability"

}

]

},

"InstanceStatus": {

"Status": "ok",

"Details": [

{

"Status": "passed",

"Name": "reachability"

}

]

}

}

]

}

Memo: deleting the stack.

aws cloudformation delete-stack --stack-name $nodestack_arn

At this point, three instances are running.

aws ec2 --output table describe-instances

To ssh into them, use ec2-user.

ssh -i id_rsa ec2-user@XXX.XXX.XXX.XXX

Adding the EC2 instances to the EKS cluster

Download the ConfigMap manifest file provided by AWS.

cd ~/eks

rm -f aws-auth-cm.yaml

curl -O https://amazon-eks.s3-us-west-2.amazonaws.com/cloudformation/2018-08-21/aws-auth-cm.yaml

Check its contents.

When the EC2 instances were created with CloudFormation, an IAM role was automatically created and assigned to them.

The rolearn in the file must be set to the ARN of that IAM role.

[root@sugi-awscli ~]# cat ~/eks/aws-auth-cm.yaml

apiVersion: v1
kind: ConfigMap
metadata:
  name: aws-auth
  namespace: kube-system
data:
  mapRoles: |
    - rolearn: <ARN of instance role (not instance profile)>
      username: system:node:{{EC2PrivateDNSName}}
      groups:
        - system:bootstrappers
        - system:nodes

Get the ARN of the IAM role.

nodestack_arn=$(aws --output json cloudformation list-stacks --stack-status-filter CREATE_COMPLETE | jq -r '.StackSummaries | map(select(.StackName == "askboxnodes"))[].StackId')

node_iamrole_arn=$(aws cloudformation describe-stacks --stack-name $nodestack_arn --query Stacks[0].Outputs[0].OutputValue)

Edit the file with sed.

sed -i -e "s#<ARN of instance role (not instance profile)>#$node_iamrole_arn#g" ~/eks/aws-auth-cm.yaml

Create the ConfigMap with kubectl.

kubectl apply -f ~/eks/aws-auth-cm.yaml

Example run

[root@sugi-awscli ~]# kubectl apply -f ~/eks/aws-auth-cm.yaml

configmap "aws-auth" created

Wait until the EKS nodes show up as Ready in kubectl get nodes.

[root@sugi-awscli eks]# kubectl get nodes -o wide

NAME STATUS ROLES AGE VERSION EXTERNAL-IP OS-IMAGE KERNEL-VERSION CONTAINER-RUNTIME

ip-192-168-186-83.us-west-2.compute.internal Ready <none> 55s v1.10.3 <none> Amazon Linux 2 4.14.62-70.117.amzn2.x86_64 docker://17.6.2

ip-192-168-203-156.us-west-2.compute.internal Ready <none> 1m v1.10.3 <none> Amazon Linux 2 4.14.62-70.117.amzn2.x86_64 docker://17.6.2

ip-192-168-70-39.us-west-2.compute.internal Ready <none> 58s v1.10.3 <none> Amazon Linux 2 4.14.62-70.117.amzn2.x86_64 docker://17.6.2
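The wait for Ready nodes can also be expressed as a count. The sketch below reads node lines in the same column layout as the output above (name first, status second) and counts the nodes whose status is exactly Ready. The helper name `count_ready_nodes` is hypothetical, and the demo input is a shortened, made-up node list.

```shell
#!/usr/bin/env bash
# Sketch only: count_ready_nodes is a hypothetical helper.

# Read node lines (NAME STATUS ...) without a header from stdin and print how
# many have a STATUS column that is exactly "Ready".
count_ready_nodes() {
  awk '$2 == "Ready" { n++ } END { print n + 0 }'
}

# Demonstration with made-up node names.
printf '%s\n' \
  'node-a Ready <none> 55s v1.10.3' \
  'node-b NotReady <none> 1m v1.10.3' \
  'node-c Ready <none> 58s v1.10.3' | count_ready_nodes
```

Against a real cluster the input would come from `kubectl get nodes --no-headers`, for example `until [ "$(kubectl get nodes --no-headers | count_ready_nodes)" -ge 3 ]; do sleep 5; done`. A node reported as `Ready,SchedulingDisabled` is deliberately not counted by this exact match.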

Also check the other resources.

Service

[root@sugi-awscli eks]# kubectl get svc --all-namespaces -o wide

NAMESPACE NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE SELECTOR

default kubernetes ClusterIP 10.100.0.1 <none> 443/TCP 30m <none>

kube-system kube-dns ClusterIP 10.100.0.10 <none> 53/UDP,53/TCP 30m k8s-app=kube-dns

Pod

[root@sugi-awscli eks]# kubectl get pod --all-namespaces -o wide

NAMESPACE NAME READY STATUS RESTARTS AGE IP NODE

kube-system aws-node-8zsbf 1/1 Running 0 1m 192.168.70.39 ip-192-168-70-39.us-west-2.compute.internal

kube-system aws-node-fj28w 1/1 Running 0 1m 192.168.203.156 ip-192-168-203-156.us-west-2.compute.internal

kube-system aws-node-kk2w9 1/1 Running 1 1m 192.168.186.83 ip-192-168-186-83.us-west-2.compute.internal

kube-system kube-dns-7cc87d595-9qqfm 3/3 Running 0 30m 192.168.89.10 ip-192-168-70-39.us-west-2.compute.internal

kube-system kube-proxy-5rfs2 1/1 Running 0 1m 192.168.203.156 ip-192-168-203-156.us-west-2.compute.internal

kube-system kube-proxy-6vhzr 1/1 Running 0 1m 192.168.70.39 ip-192-168-70-39.us-west-2.compute.internal

kube-system kube-proxy-qf6l2 1/1 Running 0 1m 192.168.186.83 ip-192-168-186-83.us-west-2.compute.internal

Deployment

[root@sugi-awscli eks]# kubectl get deployment --all-namespaces -o wide

NAMESPACE NAME DESIRED CURRENT UP-TO-DATE AVAILABLE AGE CONTAINERS IMAGES SELECTOR

kube-system kube-dns 1 1 1 1 30m kubedns,dnsmasq,sidecar 602401143452.dkr.ecr.us-west-2.amazonaws.com/eks/kube-dns/kube-dns:1.14.10,602401143452.dkr.ecr.us-west-2.amazonaws.com/eks/kube-dns/dnsmasq-nanny:1.14.10,602401143452.dkr.ecr.us-west-2.amazonaws.com/eks/kube-dns/sidecar:1.14.10 k8s-app=kube-dns

Event

[root@sugi-awscli eks]# kubectl get events --all-namespaces

NAMESPACE LAST SEEN FIRST SEEN COUNT NAME KIND SUBOBJECT TYPE REASON SOURCE MESSAGE

default 1m 1m 1 ip-192-168-186-83.us-west-2.compute.internal.154f7fa6a4439f0d Node Normal Starting kube-proxy, ip-192-168-186-83.us-west-2.compute.internal Starting kube-proxy.

default 2m 2m 1 ip-192-168-203-156.us-west-2.compute.internal.154f7fa2df5d4ad4 Node Normal Starting kube-proxy, ip-192-168-203-156.us-west-2.compute.internal Starting kube-proxy.

default 2m 2m 1 ip-192-168-70-39.us-west-2.compute.internal.154f7fa2a5221c3c Node Normal Starting kube-proxy, ip-192-168-70-39.us-west-2.compute.internal Starting kube-proxy.

kube-system 2m 2m 1 aws-node-8zsbf.154f7fa1aafc3c79 Pod Normal SuccessfulMountVolume kubelet, ip-192-168-70-39.us-west-2.compute.internal MountVolume.SetUp succeeded for volume "log-dir"

kube-system 2m 2m 1 aws-node-8zsbf.154f7fa1ab00009b Pod Normal SuccessfulMountVolume kubelet, ip-192-168-70-39.us-west-2.compute.internal MountVolume.SetUp succeeded for volume "cni-bin-dir"

kube-system 2m 2m 1 aws-node-8zsbf.154f7fa1ab008ad9 Pod Normal SuccessfulMountVolume kubelet, ip-192-168-70-39.us-west-2.compute.internal MountVolume.SetUp succeeded for volume "cni-net-dir"

kube-system 2m 2m 1 aws-node-8zsbf.154f7fa1ab0ee5a2 Pod Normal SuccessfulMountVolume kubelet, ip-192-168-70-39.us-west-2.compute.internal MountVolume.SetUp succeeded for volume "dockersock"

kube-system 2m 2m 1 aws-node-8zsbf.154f7fa1abfacdb3 Pod Normal SuccessfulMountVolume kubelet, ip-192-168-70-39.us-west-2.compute.internal MountVolume.SetUp succeeded for volume "aws-node-token-5f8vf"

kube-system 2m 2m 1 aws-node-8zsbf.154f7fa1cf212a0c Pod spec.containers{aws-node} Normal Pulling kubelet, ip-192-168-70-39.us-west-2.compute.internal pulling image "602401143452.dkr.ecr.us-west-2.amazonaws.com/amazon-k8s-cni:1.1.0"

kube-system 2m 2m 1 aws-node-8zsbf.154f7fa507d9c382 Pod spec.containers{aws-node} Normal Pulled kubelet, ip-192-168-70-39.us-west-2.compute.internal Successfully pulled image "602401143452.dkr.ecr.us-west-2.amazonaws.com/amazon-k8s-cni:1.1.0"

kube-system 1m 1m 1 aws-node-8zsbf.154f7fa632fb4f13 Pod spec.containers{aws-node} Normal Created kubelet, ip-192-168-70-39.us-west-2.compute.internal Created container

kube-system 1m 1m 1 aws-node-8zsbf.154f7fa637a51b5e Pod spec.containers{aws-node} Normal Started kubelet, ip-192-168-70-39.us-west-2.compute.internal Started container

kube-system 2m 2m 1 aws-node-fj28w.154f7fa144eda7cc Pod Normal SuccessfulMountVolume kubelet, ip-192-168-203-156.us-west-2.compute.internal MountVolume.SetUp succeeded for volume "cni-bin-dir"

kube-system 2m 2m 1 aws-node-fj28w.154f7fa144f21da1 Pod Normal SuccessfulMountVolume kubelet, ip-192-168-203-156.us-west-2.compute.internal MountVolume.SetUp succeeded for volume "log-dir"

kube-system 2m 2m 1 aws-node-fj28w.154f7fa144fffda6 Pod Normal SuccessfulMountVolume kubelet, ip-192-168-203-156.us-west-2.compute.internal MountVolume.SetUp succeeded for volume "cni-net-dir"

kube-system 2m 2m 1 aws-node-fj28w.154f7fa145007864 Pod Normal SuccessfulMountVolume kubelet, ip-192-168-203-156.us-west-2.compute.internal MountVolume.SetUp succeeded for volume "dockersock"

kube-system 2m 2m 1 aws-node-fj28w.154f7fa146017bed Pod Normal SuccessfulMountVolume kubelet, ip-192-168-203-156.us-west-2.compute.internal MountVolume.SetUp succeeded for volume "aws-node-token-5f8vf"

kube-system 2m 2m 1 aws-node-fj28w.154f7fa1725c6eb1 Pod spec.containers{aws-node} Normal Pulling kubelet, ip-192-168-203-156.us-west-2.compute.internal pulling image "602401143452.dkr.ecr.us-west-2.amazonaws.com/amazon-k8s-cni:1.1.0"

kube-system 2m 2m 1 aws-node-fj28w.154f7fa433d9eea6 Pod spec.containers{aws-node} Normal Pulled kubelet, ip-192-168-203-156.us-west-2.compute.internal Successfully pulled image "602401143452.dkr.ecr.us-west-2.amazonaws.com/amazon-k8s-cni:1.1.0"

kube-system 1m 1m 1 aws-node-fj28w.154f7fa56082ec99 Pod spec.containers{aws-node} Normal Created kubelet, ip-192-168-203-156.us-west-2.compute.internal Created container

kube-system 1m 1m 1 aws-node-fj28w.154f7fa564e1cdb6 Pod spec.containers{aws-node} Normal Started kubelet, ip-192-168-203-156.us-west-2.compute.internal Started container

kube-system 2m 2m 1 aws-node-kk2w9.154f7fa2918ff4f7 Pod Normal SuccessfulMountVolume kubelet, ip-192-168-186-83.us-west-2.compute.internal MountVolume.SetUp succeeded for volume "cni-net-dir"

kube-system 2m 2m 1 aws-node-kk2w9.154f7fa291939085 Pod Normal SuccessfulMountVolume kubelet, ip-192-168-186-83.us-west-2.compute.internal MountVolume.SetUp succeeded for volume "log-dir"

kube-system 2m 2m 1 aws-node-kk2w9.154f7fa291945a5c Pod Normal SuccessfulMountVolume kubelet, ip-192-168-186-83.us-west-2.compute.internal MountVolume.SetUp succeeded for volume "dockersock"

kube-system 2m 2m 1 aws-node-kk2w9.154f7fa291982f8a Pod Normal SuccessfulMountVolume kubelet, ip-192-168-186-83.us-west-2.compute.internal MountVolume.SetUp succeeded for volume "cni-bin-dir"

kube-system 2m 2m 1 aws-node-kk2w9.154f7fa29288adce Pod Normal SuccessfulMountVolume kubelet, ip-192-168-186-83.us-west-2.compute.internal MountVolume.SetUp succeeded for volume "aws-node-token-5f8vf"

kube-system 2m 2m 1 aws-node-kk2w9.154f7fa2ba47017c Pod spec.containers{aws-node} Normal Pulling kubelet, ip-192-168-186-83.us-west-2.compute.internal pulling image "602401143452.dkr.ecr.us-west-2.amazonaws.com/amazon-k8s-cni:1.1.0"

kube-system 2m 2m 1 aws-node-kk2w9.154f7fa4e65bee09 Pod spec.containers{aws-node} Normal Pulled kubelet, ip-192-168-186-83.us-west-2.compute.internal Successfully pulled image "602401143452.dkr.ecr.us-west-2.amazonaws.com/amazon-k8s-cni:1.1.0"

kube-system 1m 1m 2 aws-node-kk2w9.154f7fa64d184a8b Pod spec.containers{aws-node} Normal Created kubelet, ip-192-168-186-83.us-west-2.compute.internal Created container

kube-system 1m 1m 2 aws-node-kk2w9.154f7fa653d8667e Pod spec.containers{aws-node} Normal Started kubelet, ip-192-168-186-83.us-west-2.compute.internal Started container

kube-system 1m 1m 1 aws-node-kk2w9.154f7fad8ee47cde Pod spec.containers{aws-node} Normal Pulled kubelet, ip-192-168-186-83.us-west-2.compute.internal Container image "602401143452.dkr.ecr.us-west-2.amazonaws.com/amazon-k8s-cni:1.1.0" already present on machine

kube-system 2m 2m 1 aws-node.154f7fa1397afed2 DaemonSet Normal SuccessfulCreate daemonset-controller Created pod: aws-node-fj28w

kube-system 2m 2m 1 aws-node.154f7fa19ad97010 DaemonSet Normal SuccessfulCreate daemonset-controller Created pod: aws-node-8zsbf

kube-system 2m 2m 1 aws-node.154f7fa2880bd662 DaemonSet Normal SuccessfulCreate daemonset-controller Created pod: aws-node-kk2w9

kube-system 30m 30m 1 kube-controller-manager.154f7e10cb9930e9 Endpoints Normal LeaderElection controller-manager ip-10-0-162-118.us-west-2.compute.internal_90e1fe0a-abe1-11e8-953c-0a12b25cd7ca became leader

kube-system 5m 30m 91 kube-dns-7cc87d595-9qqfm.154f7e11e2d0b696 Pod Warning FailedScheduling default-scheduler no nodes available to schedule pods

kube-system 1m 1m 1 kube-dns-7cc87d595-9qqfm.154f7faa307dc51a Pod Normal SuccessfulMountVolume kubelet, ip-192-168-70-39.us-west-2.compute.internal MountVolume.SetUp succeeded for volume "kube-dns-config"

kube-system 1m 1m 1 kube-dns-7cc87d595-9qqfm.154f7faa30badf04 Pod Normal SuccessfulMountVolume kubelet, ip-192-168-70-39.us-west-2.compute.internal MountVolume.SetUp succeeded for volume "kube-dns-token-x4c5c"

kube-system 1m 1m 1 kube-dns-7cc87d595-9qqfm.154f7faa46074644 Pod spec.containers{kubedns} Normal Pulling kubelet, ip-192-168-70-39.us-west-2.compute.internal pulling image "602401143452.dkr.ecr.us-west-2.amazonaws.com/eks/kube-dns/kube-dns:1.14.10"

kube-system 1m 1m 1 kube-dns-7cc87d595-9qqfm.154f7fab016ab6c3 Pod spec.containers{kubedns} Normal Pulled kubelet, ip-192-168-70-39.us-west-2.compute.internal Successfully pulled image "602401143452.dkr.ecr.us-west-2.amazonaws.com/eks/kube-dns/kube-dns:1.14.10"

kube-system 1m 1m 1 kube-dns-7cc87d595-9qqfm.154f7fab0b00b1c7 Pod spec.containers{kubedns} Normal Created kubelet, ip-192-168-70-39.us-west-2.compute.internal Created container

kube-system 1m 1m 1 kube-dns-7cc87d595-9qqfm.154f7fab10f70446 Pod spec.containers{kubedns} Normal Started kubelet, ip-192-168-70-39.us-west-2.compute.internal Started container

kube-system 1m 1m 1 kube-dns-7cc87d595-9qqfm.154f7fab1107b340 Pod spec.containers{dnsmasq} Normal Pulling kubelet, ip-192-168-70-39.us-west-2.compute.internal pulling image "602401143452.dkr.ecr.us-west-2.amazonaws.com/eks/kube-dns/dnsmasq-nanny:1.14.10"

kube-system 1m 1m 1 kube-dns-7cc87d595-9qqfm.154f7fab5688fea5 Pod spec.containers{dnsmasq} Normal Pulled kubelet, ip-192-168-70-39.us-west-2.compute.internal Successfully pulled image "602401143452.dkr.ecr.us-west-2.amazonaws.com/eks/kube-dns/dnsmasq-nanny:1.14.10"

kube-system 1m 1m 1 kube-dns-7cc87d595-9qqfm.154f7fab5ef0b6db Pod spec.containers{dnsmasq} Normal Created kubelet, ip-192-168-70-39.us-west-2.compute.internal Created container

kube-system 1m 1m 1 kube-dns-7cc87d595-9qqfm.154f7fab641ed9a4 Pod spec.containers{dnsmasq} Normal Started kubelet, ip-192-168-70-39.us-west-2.compute.internal Started container

kube-system 1m 1m 1 kube-dns-7cc87d595-9qqfm.154f7fab642f00ae Pod spec.containers{sidecar} Normal Pulling kubelet, ip-192-168-70-39.us-west-2.compute.internal pulling image "602401143452.dkr.ecr.us-west-2.amazonaws.com/eks/kube-dns/sidecar:1.14.10"

kube-system 1m 1m 1 kube-dns-7cc87d595-9qqfm.154f7fab99262eb9 Pod spec.containers{sidecar} Normal Pulled kubelet, ip-192-168-70-39.us-west-2.compute.internal Successfully pulled image "602401143452.dkr.ecr.us-west-2.amazonaws.com/eks/kube-dns/sidecar:1.14.10"

kube-system 1m 1m 1 kube-dns-7cc87d595-9qqfm.154f7faba082743c Pod spec.containers{sidecar} Normal Created kubelet, ip-192-168-70-39.us-west-2.compute.internal Created container

kube-system 1m 1m 1 kube-dns-7cc87d595-9qqfm.154f7faba460fc1d Pod spec.containers{sidecar} Normal Started kubelet, ip-192-168-70-39.us-west-2.compute.internal Started container

kube-system 30m 30m 1 kube-dns-7cc87d595.154f7e11e2bef990 ReplicaSet Normal SuccessfulCreate replicaset-controller Created pod: kube-dns-7cc87d595-9qqfm

kube-system 30m 30m 1 kube-dns.154f7e11e15bc6f9 Deployment Normal ScalingReplicaSet deployment-controller Scaled up replica set kube-dns-7cc87d595 to 1

kube-system 2m 2m 1 kube-proxy-5rfs2.154f7fa144eda7cc Pod Normal SuccessfulMountVolume kubelet, ip-192-168-203-156.us-west-2.compute.internal MountVolume.SetUp succeeded for volume "varlog"

kube-system 2m 2m 1 kube-proxy-5rfs2.154f7fa14500f6cd Pod Normal SuccessfulMountVolume kubelet, ip-192-168-203-156.us-west-2.compute.internal MountVolume.SetUp succeeded for volume "xtables-lock"

kube-system 2m 2m 1 kube-proxy-5rfs2.154f7fa145c45707 Pod Normal SuccessfulMountVolume kubelet, ip-192-168-203-156.us-west-2.compute.internal MountVolume.SetUp succeeded for volume "kubeconfig"

kube-system 2m 2m 1 kube-proxy-5rfs2.154f7fa145f52908 Pod Normal SuccessfulMountVolume kubelet, ip-192-168-203-156.us-west-2.compute.internal MountVolume.SetUp succeeded for volume "kube-proxy-token-qvmnc"

kube-system 2m 2m 1 kube-proxy-5rfs2.154f7fa1725bd118 Pod spec.containers{kube-proxy} Normal Pulling kubelet, ip-192-168-203-156.us-west-2.compute.internal pulling image "602401143452.dkr.ecr.us-west-2.amazonaws.com/eks/kube-proxy:v1.10.3"

kube-system 2m 2m 1 kube-proxy-5rfs2.154f7fa2052f0d04 Pod spec.containers{kube-proxy} Normal Pulled kubelet, ip-192-168-203-156.us-west-2.compute.internal Successfully pulled image "602401143452.dkr.ecr.us-west-2.amazonaws.com/eks/kube-proxy:v1.10.3"

kube-system 2m 2m 1 kube-proxy-5rfs2.154f7fa2c4b9d601 Pod spec.containers{kube-proxy} Normal Created kubelet, ip-192-168-203-156.us-west-2.compute.internal Created container

kube-system 2m 2m 1 kube-proxy-5rfs2.154f7fa2cce04913 Pod spec.containers{kube-proxy} Normal Started kubelet, ip-192-168-203-156.us-west-2.compute.internal Started container

kube-system 2m 2m 1 kube-proxy-6vhzr.154f7fa1aafcb50f Pod Normal SuccessfulMountVolume kubelet, ip-192-168-70-39.us-west-2.compute.internal MountVolume.SetUp succeeded for volume "xtables-lock"

kube-system 2m 2m 1 kube-proxy-6vhzr.154f7fa1ab0df8b5 Pod Normal SuccessfulMountVolume kubelet, ip-192-168-70-39.us-west-2.compute.internal MountVolume.SetUp succeeded for volume "varlog"

kube-system 2m 2m 1 kube-proxy-6vhzr.154f7fa1ab2c4d1f Pod Normal SuccessfulMountVolume kubelet, ip-192-168-70-39.us-west-2.compute.internal MountVolume.SetUp succeeded for volume "kubeconfig"

kube-system 2m 2m 1 kube-proxy-6vhzr.154f7fa1ac005d32 Pod Normal SuccessfulMountVolume kubelet, ip-192-168-70-39.us-west-2.compute.internal MountVolume.SetUp succeeded for volume "kube-proxy-token-qvmnc"

kube-system 2m 2m 1 kube-proxy-6vhzr.154f7fa1cdc633ec Pod spec.containers{kube-proxy} Normal Pulling kubelet, ip-192-168-70-39.us-west-2.compute.internal pulling image "602401143452.dkr.ecr.us-west-2.amazonaws.com/eks/kube-proxy:v1.10.3"

kube-system 2m 2m 1 kube-proxy-6vhzr.154f7fa2668c3bd5 Pod spec.containers{kube-proxy} Normal Pulled kubelet, ip-192-168-70-39.us-west-2.compute.internal Successfully pulled image "602401143452.dkr.ecr.us-west-2.amazonaws.com/eks/kube-proxy:v1.10.3"

kube-system 2m 2m 1 kube-proxy-6vhzr.154f7fa28e21dc4d Pod spec.containers{kube-proxy} Normal Created kubelet, ip-192-168-70-39.us-west-2.compute.internal Created container

kube-system 2m 2m 1 kube-proxy-6vhzr.154f7fa292998fc3 Pod spec.containers{kube-proxy} Normal Started kubelet, ip-192-168-70-39.us-west-2.compute.internal Started container

kube-system 2m 2m 1 kube-proxy-qf6l2.154f7fa291940b11 Pod Normal SuccessfulMountVolume kubelet, ip-192-168-186-83.us-west-2.compute.internal MountVolume.SetUp succeeded for volume "varlog"

kube-system 2m 2m 1 kube-proxy-qf6l2.154f7fa2919fb313 Pod Normal SuccessfulMountVolume kubelet, ip-192-168-186-83.us-west-2.compute.internal MountVolume.SetUp succeeded for volume "xtables-lock"

kube-system 2m 2m 1 kube-proxy-qf6l2.154f7fa291ce3d66 Pod Normal SuccessfulMountVolume kubelet, ip-192-168-186-83.us-west-2.compute.internal MountVolume.SetUp succeeded for volume "kubeconfig"

kube-system 2m 2m 1 kube-proxy-qf6l2.154f7fa292426765 Pod Normal SuccessfulMountVolume kubelet, ip-192-168-186-83.us-west-2.compute.internal MountVolume.SetUp succeeded for volume "kube-proxy-token-qvmnc"

kube-system 2m 2m 1 kube-proxy-qf6l2.154f7fa2bb6cce24 Pod spec.containers{kube-proxy} Normal Pulling kubelet, ip-192-168-186-83.us-west-2.compute.internal pulling image "602401143452.dkr.ecr.us-west-2.amazonaws.com/eks/kube-proxy:v1.10.3"

kube-system 1m 1m 1 kube-proxy-qf6l2.154f7fa6826182cf Pod spec.containers{kube-proxy} Normal Pulled kubelet, ip-192-168-186-83.us-west-2.compute.internal Successfully pulled image "602401143452.dkr.ecr.us-west-2.amazonaws.com/eks/kube-proxy:v1.10.3"

kube-system 1m 1m 1 kube-proxy-qf6l2.154f7fa68edd4211 Pod spec.containers{kube-proxy} Normal Created kubelet, ip-192-168-186-83.us-west-2.compute.internal Created container

kube-system 1m 1m 1 kube-proxy-qf6l2.154f7fa694787812 Pod spec.containers{kube-proxy} Normal Started kubelet, ip-192-168-186-83.us-west-2.compute.internal Started container

kube-system 2m 2m 1 kube-proxy.154f7fa139e436df DaemonSet Normal SuccessfulCreate daemonset-controller Created pod: kube-proxy-5rfs2

kube-system 2m 2m 1 kube-proxy.154f7fa19afc239b DaemonSet Normal SuccessfulCreate daemonset-controller Created pod: kube-proxy-6vhzr

kube-system 2m 2m 1 kube-proxy.154f7fa2883ae96f DaemonSet Normal SuccessfulCreate daemonset-controller Created pod: kube-proxy-qf6l2

kube-system 30m 30m 1 kube-scheduler.154f7e10df006956 Endpoints Normal LeaderElection default-scheduler ip-10-0-162-118.us-west-2.compute.internal_8f6303d3-abe1-11e8-a575-0a12b25cd7ca became leader

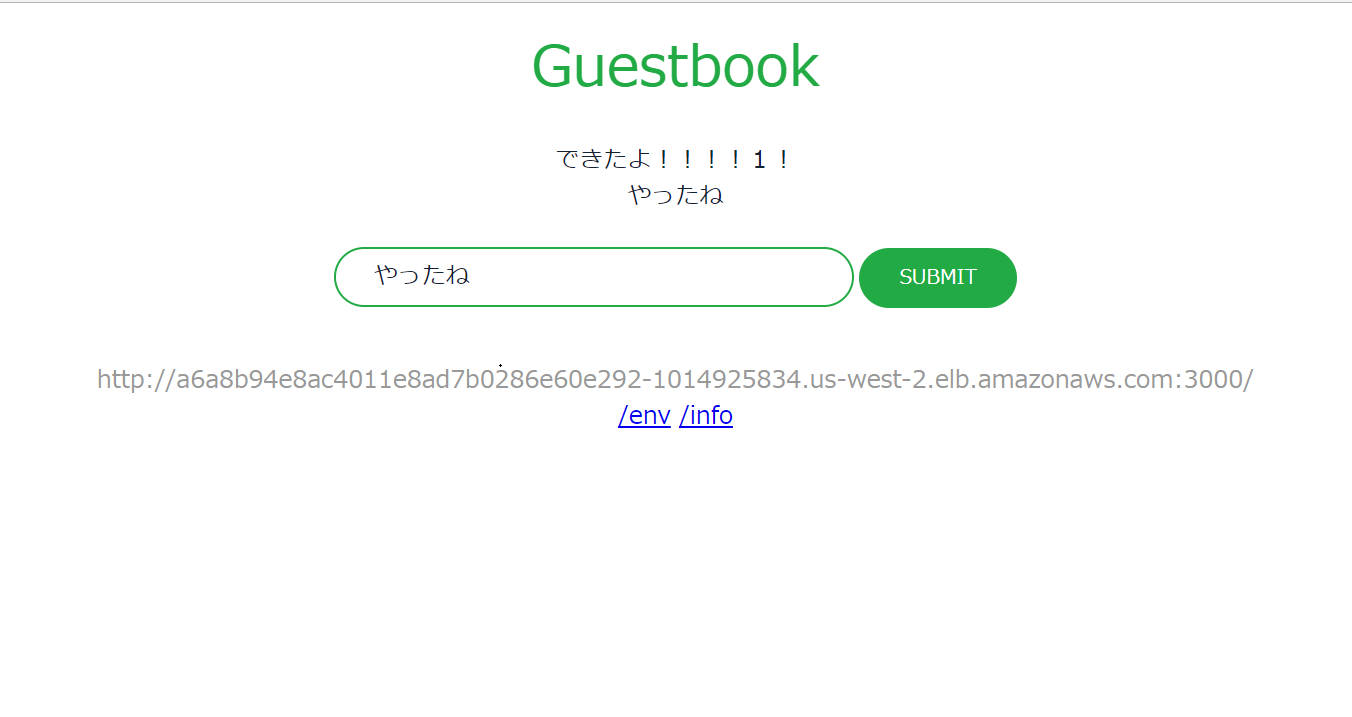

Running a test application

Create the Replication Controller for the Redis master.

kubectl apply -f https://raw.githubusercontent.com/kubernetes/kubernetes/v1.10.3/examples/guestbook-go/redis-master-controller.json

Confirm that the pod was created.

[root@sugi-awscli eks]# kubectl get pods -o wide

NAME READY STATUS RESTARTS AGE IP NODE

redis-master-4rglw 1/1 Running 0 11s 10.10.1.211 ip-10-10-1-141.us-west-2.compute.internal
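Instead of re-running `kubectl get pods` by hand, the check can be scripted. The following is a minimal sketch, assuming bash and a `kubectl` already pointed at the cluster. `wait_for_running` is a hypothetical helper name, and the retry count and interval are arbitrary defaults. The label selector `app=redis,role=master` is the one the Redis master Service uses in this walkthrough.

```shell
#!/usr/bin/env bash
# Sketch: poll until every pod matching a label selector reports phase "Running".
# wait_for_running is a hypothetical helper, not part of kubectl.
wait_for_running() {
  local selector="$1" tries="${2:-30}" interval="${3:-5}"
  local phases i
  for ((i = 0; i < tries; i++)); do
    # One phase per matching pod, space separated (for example "Running Pending").
    phases=$(kubectl get pods -l "$selector" -o jsonpath='{.items[*].status.phase}')
    # Succeed only when at least one pod exists and no pod is in another phase.
    if [ -n "$phases" ] && ! printf '%s\n' $phases | grep -qvx 'Running'; then
      return 0
    fi
    sleep "$interval"
  done
  return 1
}

# Usage:
#   wait_for_running 'app=redis,role=master' && echo "redis-master is up"
```

The function returns non-zero when the pods are still not running after the last attempt, so it can gate the next `kubectl apply` in a script.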

Create the Service (ClusterIP) for the Redis master.

kubectl apply -f https://raw.githubusercontent.com/kubernetes/kubernetes/v1.10.3/examples/guestbook-go/redis-master-service.json

Check the result.

[root@sugi-awscli eks]# kubectl get svc -o wide

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE SELECTOR

kubernetes ClusterIP 172.20.0.1 <none> 443/TCP 1h <none>

redis-master ClusterIP 172.20.251.243 <none> 6379/TCP 7s app=redis,role=master

Create the Replication Controller for the Redis slaves.

kubectl apply -f https://raw.githubusercontent.com/kubernetes/kubernetes/v1.10.3/examples/guestbook-go/redis-slave-controller.json

Check the result.

[root@sugi-awscli eks]# kubectl get pod -o wide

NAME READY STATUS RESTARTS AGE IP NODE

redis-master-4rglw 1/1 Running 0 1m 10.10.1.211 ip-10-10-1-141.us-west-2.compute.internal

redis-slave-7s748 1/1 Running 0 54s 10.10.0.204 ip-10-10-0-95.us-west-2.compute.internal

redis-slave-ncqwp 1/1 Running 0 54s 10.10.1.90 ip-10-10-1-141.us-west-2.compute.internal

Create the Service (ClusterIP) for the Redis slaves.

kubectl apply -f https://raw.githubusercontent.com/kubernetes/kubernetes/v1.10.3/examples/guestbook-go/redis-slave-service.json

Check the result.

[root@sugi-awscli eks]# kubectl get svc -o wide

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE SELECTOR

kubernetes ClusterIP 172.20.0.1 <none> 443/TCP 1h <none>

redis-master ClusterIP 172.20.251.243 <none> 6379/TCP 1m app=redis,role=master

redis-slave ClusterIP 172.20.76.31 <none> 6379/TCP 14s app=redis,role=slave

Create the Guest Book application (Replication Controller).

kubectl apply -f https://raw.githubusercontent.com/kubernetes/kubernetes/v1.10.3/examples/guestbook-go/guestbook-controller.json

Check the result.

[root@sugi-awscli eks]# kubectl get pod -o wide

NAME READY STATUS RESTARTS AGE IP NODE

guestbook-7d77j 1/1 Running 0 5s 10.10.0.133 ip-10-10-0-95.us-west-2.compute.internal

guestbook-dqz4w 1/1 Running 0 5s 10.10.1.208 ip-10-10-1-141.us-west-2.compute.internal

guestbook-sjdhl 1/1 Running 0 5s 10.10.2.253 ip-10-10-2-118.us-west-2.compute.internal

redis-master-4rglw 1/1 Running 0 5m 10.10.1.211 ip-10-10-1-141.us-west-2.compute.internal

redis-slave-7s748 1/1 Running 0 4m 10.10.0.204 ip-10-10-0-95.us-west-2.compute.internal

redis-slave-ncqwp 1/1 Running 0 4m 10.10.1.90 ip-10-10-1-141.us-west-2.compute.internal

Create the Guest Book Service (LoadBalancer).

kubectl apply -f https://raw.githubusercontent.com/kubernetes/kubernetes/v1.10.3/examples/guestbook-go/guestbook-service.json

A Service of type LoadBalancer has been created.

On the AWS side, a Classic Load Balancer is created automatically.

[root@sugi-awscli eks]# kubectl get svc -o wide

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE SELECTOR

guestbook LoadBalancer 172.20.143.119 a6a8b94e8ac4011e8ad7b0286e60e292-1014925834.us-west-2.elb.amazonaws.com 3000:32290/TCP 7s app=guestbook

kubernetes ClusterIP 172.20.0.1 <none> 443/TCP 1h <none>

redis-master ClusterIP 172.20.251.243 <none> 6379/TCP 5m app=redis,role=master

redis-slave ClusterIP 172.20.76.31 <none> 6379/TCP 3m app=redis,role=slave

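Open the EXTERNAL-IP shown above in a browser to reach the Guestbook. As the PORT(S) column shows, the Service listens on port 3000.

Note: if your corporate network or PC firewall blocks TCP ports other than 80 and 443, you will not be able to connect.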
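The URL can also be assembled from the Service object instead of copying it from the table. This is a rough sketch, assuming bash and the `guestbook` Service created above. `guestbook_url` is a hypothetical helper name. It reads the load balancer hostname and the Service port with `kubectl -o jsonpath`.

```shell
#!/usr/bin/env bash
# Sketch: print the external URL of the guestbook Service.
# guestbook_url is a hypothetical helper, not part of kubectl.
guestbook_url() {
  local host port
  # Hostname of the load balancer; empty while EXTERNAL-IP is still pending.
  host=$(kubectl get svc guestbook -o jsonpath='{.status.loadBalancer.ingress[0].hostname}')
  # Port exposed by the Service (3000 in this walkthrough).
  port=$(kubectl get svc guestbook -o jsonpath='{.spec.ports[0].port}')
  [ -n "$host" ] || return 1
  printf 'http://%s:%s/\n' "$host" "$port"
}

# Usage:
#   curl -sI "$(guestbook_url)"
```

A new load balancer's DNS name can take a few minutes to resolve, so a first request that fails is not necessarily a configuration error.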

Deleting the test application

kubectl delete rc/redis-master rc/redis-slave rc/guestbook svc/redis-master svc/redis-slave svc/guestbook

Deleting the EKS cluster

List the EKS clusters.

[root@sugi-awscli eks(default aws)]# aws eks list-clusters

CLUSTERS askbox

Delete the EKS cluster.

aws eks delete-cluster --name askbox

Confirm that the deletion is in progress.

[root@sugi-awscli policies]# aws eks describe-cluster --name askbox --query cluster.status

DELETING
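To block until the cluster is really gone, the same `describe-cluster` call can be polled. The sketch below assumes bash. `wait_cluster_deleted` is a hypothetical helper name, and the retry count and interval are arbitrary defaults. It treats a `ResourceNotFoundException` from the AWS CLI as "deleted" and reports any other error instead of swallowing it.

```shell
#!/usr/bin/env bash
# Sketch: poll describe-cluster until the cluster no longer exists.
# wait_cluster_deleted is a hypothetical helper, not an AWS CLI command.
wait_cluster_deleted() {
  local name="$1" tries="${2:-60}" interval="${3:-10}" out i
  for ((i = 0; i < tries; i++)); do
    if out=$(aws eks describe-cluster --name "$name" --query cluster.status --output text 2>&1); then
      echo "status: $out"          # for example DELETING
      sleep "$interval"
    elif [[ "$out" == *ResourceNotFoundException* ]]; then
      return 0                     # the cluster no longer exists
    else
      echo "$out" >&2              # some other failure (credentials, region, ...)
      return 2
    fi
  done
  return 1                         # still present after all attempts
}

# Usage:
#   wait_cluster_deleted askbox
```

Recent AWS CLI versions also provide `aws eks wait cluster-deleted --name askbox`, which does the same job without a hand-written loop.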

Delete the EC2 instances (the worker node stack).

nodestack_arn=$(aws --output json cloudformation list-stacks --stack-status-filter CREATE_COMPLETE | jq -r '.StackSummaries | map(select(.StackName == "askboxnodes"))[].StackId')

aws cloudformation delete-stack --stack-name "$nodestack_arn"

Delete the VPC.

vpcstack_arn=$(aws --output json cloudformation list-stacks --stack-status-filter CREATE_COMPLETE | jq -r '.StackSummaries | map(select(.StackName == "askboxvpc"))[].StackId')

aws cloudformation delete-stack --stack-name "$vpcstack_arn"
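`delete-stack` returns immediately, so the VPC stack deletion can start while the worker nodes still exist inside that VPC. One way to serialize the two steps is sketched below, assuming bash and the stack names `askboxnodes` and `askboxvpc` used in this article. `delete_stack_and_wait` is a hypothetical helper name. It passes the stack name directly, since `--stack-name` accepts either a name or a stack ID.

```shell
#!/usr/bin/env bash
# Sketch: delete a CloudFormation stack and block until the deletion finishes.
# delete_stack_and_wait is a hypothetical helper, not an AWS CLI command.
delete_stack_and_wait() {
  local stack="$1"
  aws cloudformation delete-stack --stack-name "$stack" || return 1
  # Built-in waiter: returns non-zero if the deletion fails or times out.
  aws cloudformation wait stack-delete-complete --stack-name "$stack"
}

# Usage (worker nodes first, then the VPC they live in):
#   delete_stack_and_wait askboxnodes && delete_stack_and_wait askboxvpc
```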

memo: when the EXTERNAL-IP of a type LoadBalancer Service is never assigned

If it shows Pending for a long time, IAM permissions are probably missing.

In my case, iam:CreateServiceLinkedRole was missing.

kubectl get events --all-namespaces

default 37s 5m 7 guestbook.154f82ecec90bd64 Service Normal EnsuringLoadBalancer service-controller Ensuring load balancer

default 5m 5m 1 guestbook.154f82ed4b148cd7 Service Warning CreatingLoadBalancerFailed service-controller Error creating load balancer (will retry): failed to ensure load balancer for service default/guestbook: AccessDenied: User: arn:aws:sts::539926008561:assumed-role/EKSRole/1535584612921554373 is not authorized to perform: iam:CreateServiceLinkedRole on resource: arn:aws:iam::539926008561:role/aws-service-role/elasticloadbalancing.amazonaws.com/AWSServiceRoleForElasticLoadBalancing

status code: 403, request id: 0c551a55-abee-11e8-a988-ed172e0e9ec0

default 5m 5m 1 guestbook.154f82ee9b4f6455 Service Warning CreatingLoadBalancerFailed service-controller Error creating load balancer (will retry): failed to ensure load balancer for service default/guestbook: AccessDenied: User: arn:aws:sts::539926008561:assumed-role/EKSRole/1535584612921554373 is not authorized to perform: iam:CreateServiceLinkedRole on resource: arn:aws:iam::539926008561:role/aws-service-role/elasticloadbalancing.amazonaws.com/AWSServiceRoleForElasticLoadBalancing

status code: 403, request id: 0fae7e82-abee-11e8-a540-136874c5f026

default 5m 5m 1 guestbook.154f82f10ed1cb8c Service Warning CreatingLoadBalancerFailed service-controller Error creating load balancer (will retry): failed to ensure load balancer for service default/guestbook: AccessDenied: User: arn:aws:sts::539926008561:assumed-role/EKSRole/1535584612921554373 is not authorized to perform: iam:CreateServiceLinkedRole on resource: arn:aws:iam::539926008561:role/aws-service-role/elasticloadbalancing.amazonaws.com/AWSServiceRoleForElasticLoadBalancing

status code: 403, request id: 15f739fd-abee-11e8-afcd-0b16639b5fa6

default 5m 5m 1 guestbook.154f82f5d70547c5 Service Warning CreatingLoadBalancerFailed service-controller Error creating load balancer (will retry): failed to ensure load balancer for service default/guestbook: AccessDenied: User: arn:aws:sts::539926008561:assumed-role/EKSRole/1535584612921554373 is not authorized to perform: iam:CreateServiceLinkedRole on resource: arn:aws:iam::539926008561:role/aws-service-role/elasticloadbalancing.amazonaws.com/AWSServiceRoleForElasticLoadBalancing

status code: 403, request id: 22356196-abee-11e8-b046-6bd5485f5242

default 4m 4m 1 guestbook.154f82ff4657970d Service Warning CreatingLoadBalancerFailed service-controller Error creating load balancer (will retry): failed to ensure load balancer for service default/guestbook: AccessDenied: User: arn:aws:sts::539926008561:assumed-role/EKSRole/1535584612921554373 is not authorized to perform: iam:CreateServiceLinkedRole on resource: arn:aws:iam::539926008561:role/aws-service-role/elasticloadbalancing.amazonaws.com/AWSServiceRoleForElasticLoadBalancing

status code: 403, request id: 3a5d2895-abee-11e8-b026-1b13d9bfa3e3

default 3m 3m 1 guestbook.154f83121bc74118 Service Warning CreatingLoadBalancerFailed service-controller Error creating load balancer (will retry): failed to ensure load balancer for service default/guestbook: AccessDenied: User: arn:aws:sts::539926008561:assumed-role/EKSRole/1535584612921554373 is not authorized to perform: iam:CreateServiceLinkedRole on resource: arn:aws:iam::539926008561:role/aws-service-role/elasticloadbalancing.amazonaws.com/AWSServiceRoleForElasticLoadBalancing

status code: 403, request id: 6a75c855-abee-11e8-a988-ed172e0e9ec0

default 36s 36s 1 guestbook.154f833788d26851 Service Warning CreatingLoadBalancerFailed service-controller Error creating load balancer (will retry): failed to ensure load balancer for service default/guestbook: AccessDenied: User: arn:aws:sts::539926008561:assumed-role/EKSRole/1535584612921554373 is not authorized to perform: iam:CreateServiceLinkedRole on resource: arn:aws:iam::539926008561:role/aws-service-role/elasticloadbalancing.amazonaws.com/AWSServiceRoleForElasticLoadBalancing

status code: 403, request id: ca5e8c5c-abee-11e8-b323-317336a5de97
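The event list repeats the same error many times, so it helps to reduce it to the distinct IAM actions that were denied. This is a small sketch, assuming bash with `grep`, `awk`, and `sort` available. `denied_actions` is a hypothetical helper name. It only matches the "is not authorized to perform:" wording seen in the messages above.

```shell
#!/usr/bin/env bash
# Sketch: read event text on stdin and print each denied IAM action once.
# denied_actions is a hypothetical helper, not part of kubectl.
denied_actions() {
  # Keep only the "is not authorized to perform: <action>" fragment,
  # take its last field (the action name), and de-duplicate.
  grep -o 'is not authorized to perform: [^ ]*' | awk '{print $NF}' | sort -u
}

# Usage:
#   kubectl get events --all-namespaces | denied_actions
```

For the events shown above, this would leave a single action, `iam:CreateServiceLinkedRole`, which is the permission to add to the policy.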

Reference URLs

Quick Start Guide

Configuration guide