Notes on running Apache Flink on the Hadoop installation built in "Running Apache Hadoop 2.7.0 in pseudo-distributed mode on OSX".

What is Flink?

Download & extract

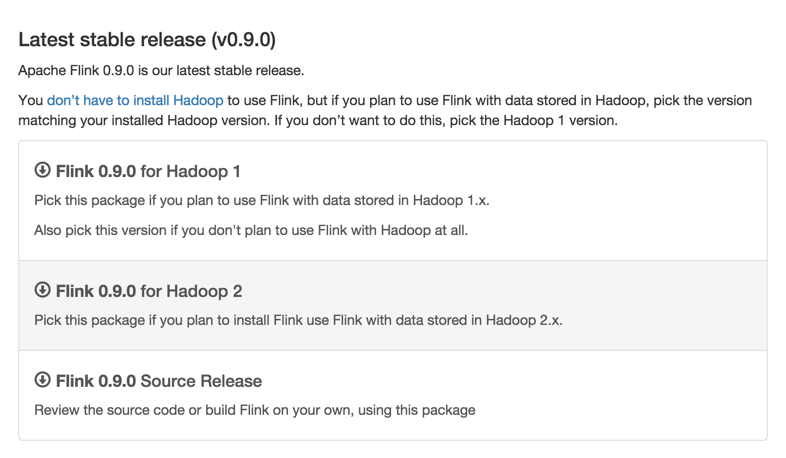

- Select Flink 0.9.0 for Hadoop2

Extract it under /usr/local2/

$ tar zxvf ~/Download/flink-0.9.0-bin-hadoop2.tgz -C /usr/local2/

Start Flink

Set the HADOOP_CONF_DIR environment variable when starting it

$ HADOOP_CONF_DIR=/usr/local2/hadoop-2.7.0/etc/hadoop /usr/local2/flink-0.9.0/bin/yarn-session.sh -n 1 -jm 1024 -tm 1024

A long stream of log output follows

20:15:47,636 WARN org.apache.hadoop.util.NativeCodeLoader - Unable to load native-hadoop library for your platform... using builtin-java classes where applicable

20:15:47,739 INFO org.apache.hadoop.yarn.client.RMProxy - Connecting to ResourceManager at /0.0.0.0:8032

20:15:47,759 INFO org.apache.flink.yarn.FlinkYarnClient - Using values:

20:15:47,760 INFO org.apache.flink.yarn.FlinkYarnClient - TaskManager count = 1

20:15:47,760 INFO org.apache.flink.yarn.FlinkYarnClient - JobManager memory = 1024

20:15:47,760 INFO org.apache.flink.yarn.FlinkYarnClient - TaskManager memory = 1024

20:15:48,040 INFO org.apache.flink.yarn.Utils - Copying from file:/usr/local2/flink-0.9.0/lib/flink-dist-0.9.0.jar to hdfs://localhost:9000/user/kodai_abe/.flink/application_1435403663845_0002/flink-dist-0.9.0.jar

20:15:48,966 INFO org.apache.flink.yarn.Utils - Copying from /usr/local2/flink-0.9.0/conf/flink-conf.yaml to hdfs://localhost:9000/user/kodai_abe/.flink/application_1435403663845_0002/flink-conf.yaml

20:15:48,980 INFO org.apache.flink.yarn.Utils - Copying from file:/usr/local2/flink-0.9.0/lib/flink-python-0.9.0.jar to hdfs://localhost:9000/user/kodai_abe/.flink/application_1435403663845_0002/flink-python-0.9.0.jar

20:15:48,990 INFO org.apache.flink.yarn.Utils - Copying from file:/usr/local2/flink-0.9.0/conf/logback.xml to hdfs://localhost:9000/user/kodai_abe/.flink/application_1435403663845_0002/logback.xml

20:15:49,000 INFO org.apache.flink.yarn.Utils - Copying from file:/usr/local2/flink-0.9.0/conf/log4j.properties to hdfs://localhost:9000/user/kodai_abe/.flink/application_1435403663845_0002/log4j.properties

20:15:49,018 INFO org.apache.flink.yarn.FlinkYarnClient - Submitting application master application_1435403663845_0002

20:15:49,036 INFO org.apache.hadoop.yarn.client.api.impl.YarnClientImpl - Submitted application application_1435403663845_0002 to ResourceManager at /0.0.0.0:8032

20:15:49,036 INFO org.apache.flink.yarn.FlinkYarnClient - Waiting for the cluster to be allocated

20:15:49,038 INFO org.apache.flink.yarn.FlinkYarnClient - Deploying cluster, current state ACCEPTED

20:15:50,042 INFO org.apache.flink.yarn.FlinkYarnClient - Deploying cluster, current state ACCEPTED

20:15:51,047 INFO org.apache.flink.yarn.FlinkYarnClient - Deploying cluster, current state ACCEPTED

20:15:52,051 INFO org.apache.flink.yarn.FlinkYarnClient - Deploying cluster, current state ACCEPTED

20:15:53,055 INFO org.apache.flink.yarn.FlinkYarnClient - YARN application has been deployed successfully.

20:15:53,232 INFO org.apache.flink.yarn.FlinkYarnCluster - Start actor system.

20:15:53,236 INFO org.apache.flink.runtime.net.NetUtils - Determined /192.168.100.101 as the machine's own IP address

20:15:53,465 INFO akka.event.slf4j.Slf4jLogger - Slf4jLogger started

20:15:53,495 INFO Remoting - Starting remoting

20:15:53,606 INFO Remoting - Remoting started; listening on addresses :[akka.tcp://flink@192.168.100.101:62167]

20:15:53,610 INFO org.apache.flink.yarn.FlinkYarnCluster - Start application client.

Flink JobManager is now running on 192.168.100.101:62160

JobManager Web Interface: http://frkout-mbp15-l13.local:8088/proxy/application_1435403663845_0002/

Number of connected TaskManagers changed to 1. Slots available: 1
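The "Using values:" lines in the log above line up with the options passed to yarn-session.sh: -n is the TaskManager count, -jm the JobManager memory and -tm the TaskManager memory (both in MB). A minimal sketch that gives those three values names might look like this. `yarn_session_cmd` is a hypothetical helper, not part of Flink, and it only prints the command line rather than running it; the default paths are the ones used in this memo.

```shell
#!/usr/bin/env bash
# Sketch only: defaults are the install paths used in this memo.
FLINK_HOME="${FLINK_HOME:-/usr/local2/flink-0.9.0}"
export HADOOP_CONF_DIR="${HADOOP_CONF_DIR:-/usr/local2/hadoop-2.7.0/etc/hadoop}"

# yarn_session_cmd (hypothetical helper): print the yarn-session.sh command line.
#   $1 = number of TaskManagers (-n)
#   $2 = JobManager memory in MB (-jm)
#   $3 = TaskManager memory in MB (-tm)
yarn_session_cmd() {
  local task_managers="$1" jm_mb="$2" tm_mb="$3"
  printf '%s -n %s -jm %s -tm %s\n' \
    "$FLINK_HOME/bin/yarn-session.sh" "$task_managers" "$jm_mb" "$tm_mb"
}

# Print the command for the settings used in this memo.
yarn_session_cmd 1 1024 1024
```

Printing the command instead of executing it keeps the sketch safe to try on a machine without YARN running; once the line looks right it can be pasted into the terminal as is.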

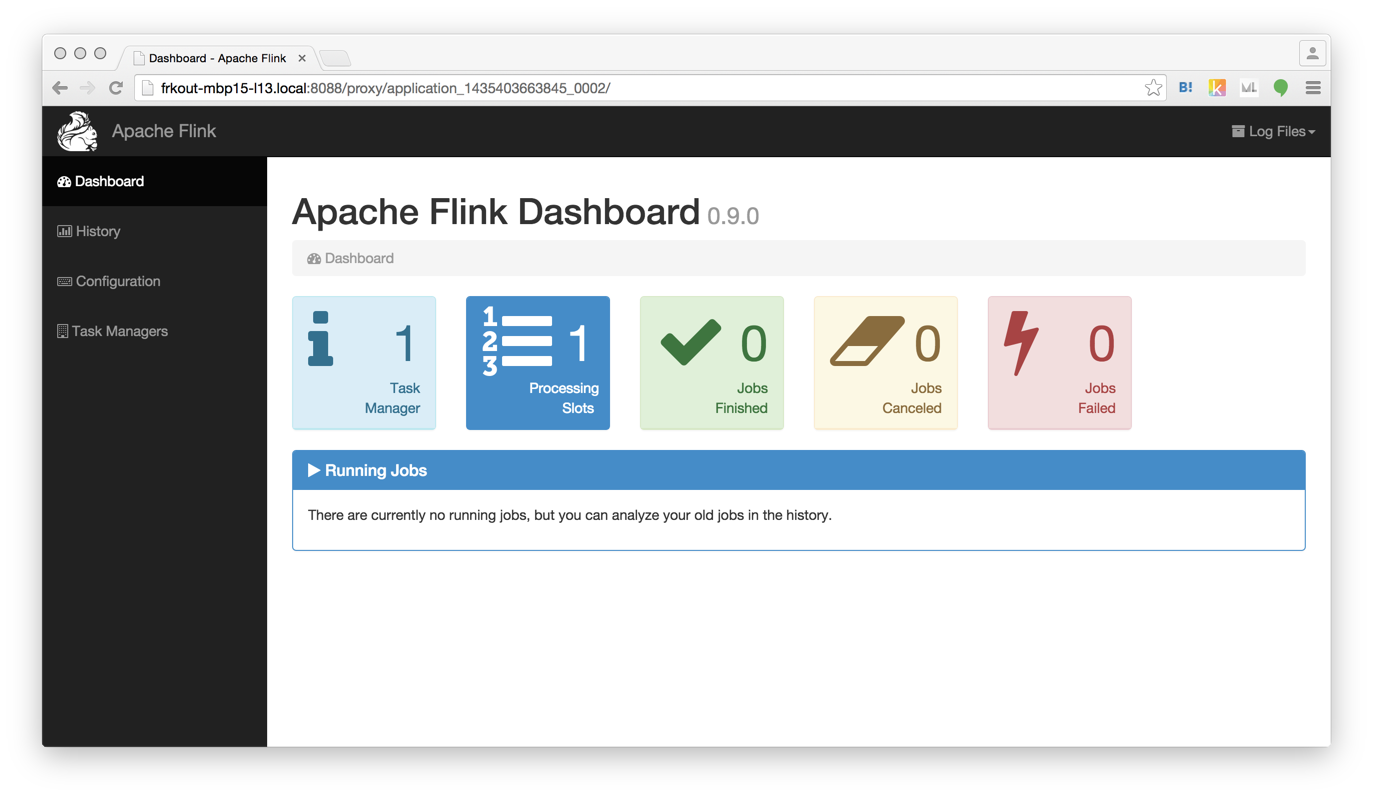

Open the Flink JobManager Web Interface URL that appears in the output.

JobManager Web Interface: http://frkout-mbp15-l13.local:8088/proxy/application_1435403663845_0002/
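If the session output has been saved somewhere, the URL can also be pulled out with sed instead of copying it by hand. This is a rough sketch: `extract_web_ui_url` is a hypothetical helper, and it assumes the line keeps the "JobManager Web Interface: " prefix seen in the output above.

```shell
#!/usr/bin/env bash
# extract_web_ui_url (hypothetical helper): read yarn-session.sh output on stdin
# and print the URL from the first "JobManager Web Interface:" line.
extract_web_ui_url() {
  sed -n 's/^JobManager Web Interface: *//p' | head -n 1
}

# Example using the line from the output above.
echo 'JobManager Web Interface: http://frkout-mbp15-l13.local:8088/proxy/application_1435403663845_0002/' \
  | extract_web_ui_url
```

On OSX the result can then be handed to `open` to bring it up in the default browser.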

Submit a Flink job

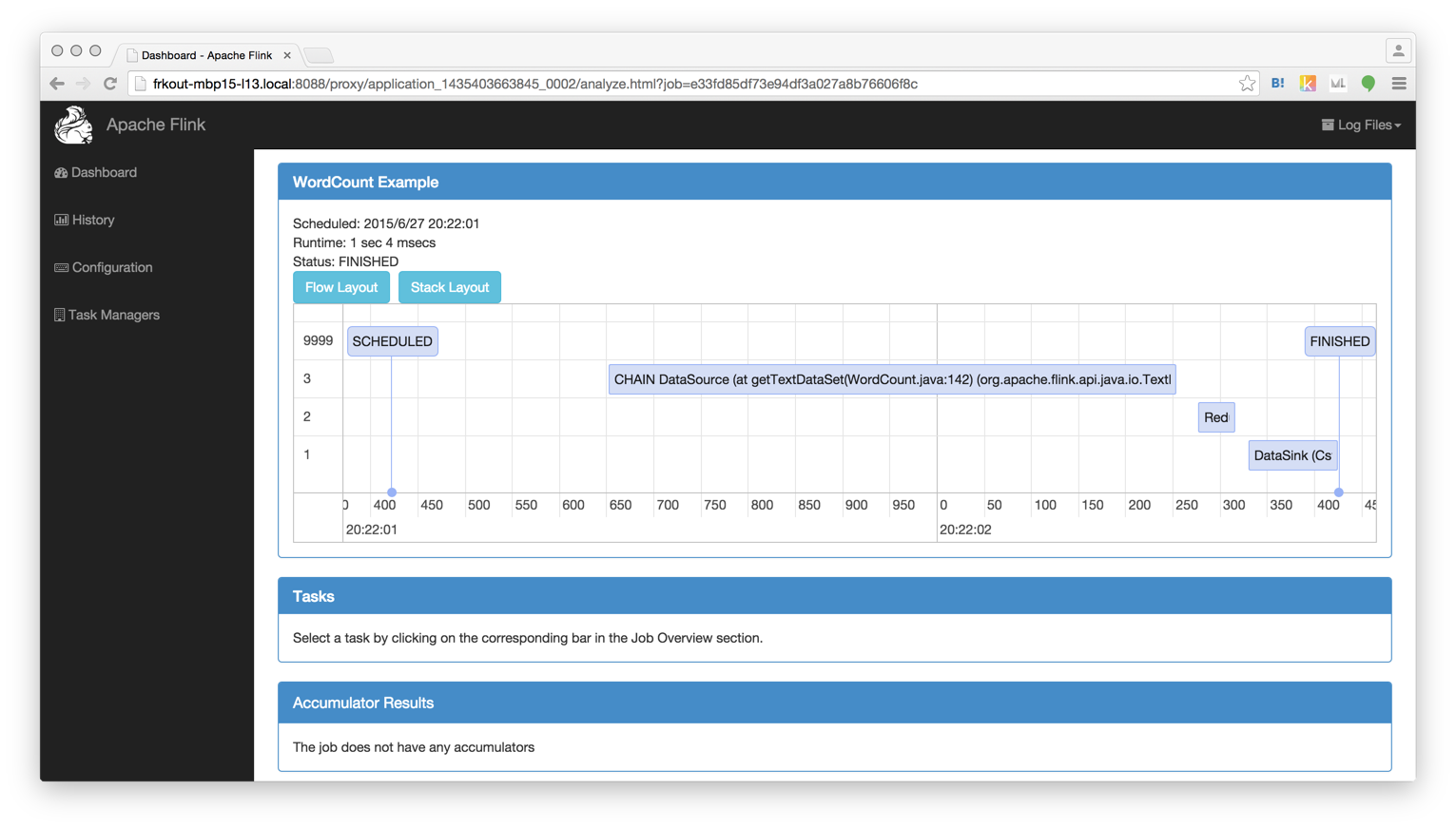

Run the WordCount example that ships with Flink against a file on HDFS

$ /usr/local2/flink-0.9.0/bin/flink run /usr/local2/flink-0.9.0/examples/flink-java-examples-0.9.0-WordCount.jar hdfs://localhost:9000/user/kodai_abe/hadoop-env.sh hdfs://localhost:9000/user/kodai_abe/flink_wordcount_out
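To sanity-check the result, the same count can be approximated locally with standard tools. This is a sketch under an assumption: as far as I can tell, the bundled WordCount lowercases each line, splits it on non-word characters and writes "word count" pairs, so the pipeline below mimics that. `local_wordcount` is a hypothetical helper, not something Flink provides.

```shell
#!/usr/bin/env bash
# local_wordcount (hypothetical helper): count words read from stdin.
# Assumption: mirrors the example's behaviour (lowercase, split on anything
# that is not a letter, digit or underscore, print "word count").
local_wordcount() {
  tr '[:upper:]' '[:lower:]' |
    tr -cs 'a-z0-9_' '\n' |   # turn every run of non-word characters into a newline
    grep -v '^$' |            # drop the empty token a leading separator can produce
    sort | uniq -c |          # group identical words and count them
    awk '{print $2, $1}'      # reorder "count word" to "word count"
}

printf 'To be or not to be\n' | local_wordcount
# be 2
# not 1
# or 1
# to 2
```

Running this over a local copy of hadoop-env.sh and comparing it with the sorted output of `/usr/local2/hadoop-2.7.0/bin/hdfs dfs -cat hdfs://localhost:9000/user/kodai_abe/flink_wordcount_out` should show the same pairs. Depending on the parallelism the output path may be a directory of part files rather than a single file, in which case the files inside it need to be read instead.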