In part 1 I classified two women; this time I classified one man and one woman, using 100 images of each.

Data collection

Used the Bing Search v7 APIs.

Results

- Images of Satomi Ishihara (石原さとみ): 283

- Images of Hidetoshi Nishijima (西島秀俊): 245

bing_api.py

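# -*- coding: utf-8 -*-
import configparser  # for Python3
import hashlib
import http.client
import json
import math
import os
import pickle
import re
import urllib.parse

import requests

try:
    import sha3  # pysha3 backport; only needed for hashlib.sha3_256 on Python < 3.6
except ImportError:
    pass  # Python 3.6+ provides hashlib.sha3_256 in the standard library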

def make_dir(path):
    if not os.path.isdir(path):
        os.mkdir(path)

def make_correspondence_table(correspondence_table, original_url, hashed_url):
    """Create reference table of hash value and original URL."""
    correspondence_table[original_url] = hashed_url

def make_img_path(save_dir_path, url):
    """Hash the image URL and create the path.

    Args:
        save_dir_path (str): Path to save image dir.
        url (str): A URL of an image.

    Returns:
        Path of hashed image URL.
    """
    save_img_path = os.path.join(save_dir_path, 'imgs')
    make_dir(save_img_path)

    file_extension = os.path.splitext(url)[-1]
    if file_extension.lower() in ('.jpg', '.jpeg', '.gif', '.png', '.bmp'):
        encoded_url = url.encode('utf-8')  # hashing requires bytes
        hashed_url = hashlib.sha3_256(encoded_url).hexdigest()
        full_path = os.path.join(save_img_path, hashed_url + file_extension.lower())
        # correspondence_table is the module-level dict created in __main__
        make_correspondence_table(correspondence_table, url, hashed_url)
        return full_path
    else:
        raise ValueError('Not applicable file extension')

def download_image(url, timeout=10):
    response = requests.get(url, allow_redirects=True, timeout=timeout)
    if response.status_code != 200:
        # status_code is an int, so convert it before concatenating
        raise Exception("HTTP status: " + str(response.status_code))

    content_type = response.headers.get("content-type", "")
    if 'image' not in content_type:
        raise Exception("Content-Type: " + content_type)

    return response.content

def save_image(filename, image):
    with open(filename, "wb") as fout:
        fout.write(image)

if __name__ == '__main__':
    config = configparser.ConfigParser()
    config.read('authentication.ini')
    bing_api_key = config['auth']['bing_api_key']

    save_dir_path = '/Users/yuni/project/tensorflow/test/test1/nishijima_hidetoshi'
    make_dir(save_dir_path)

    num_imgs_required = 300  # Number of images you want.
    num_imgs_per_transaction = 150  # default 30, Max 150 images
    offset_count = math.floor(num_imgs_required / num_imgs_per_transaction)

    url_list = []
    correspondence_table = {}

    headers = {
        # Request headers
        # 'Content-Type': 'multipart/form-data',
        'Ocp-Apim-Subscription-Key': bing_api_key  # API key
    }

    for offset in range(offset_count):
        params = urllib.parse.urlencode({
            # Request parameters
            'q': '西島秀俊',
            'mkt': 'ja-JP',
            'count': num_imgs_per_transaction,
            # increment offset by 'num_imgs_per_transaction' (for example 0, 150, 300)
            'offset': offset * num_imgs_per_transaction
        })

        try:
            conn = http.client.HTTPSConnection('api.cognitive.microsoft.com')
            conn.request("GET", "/bing/v7.0/images/search?%s" % params, "{body}", headers)
            response = conn.getresponse()
            data = response.read()

            # Keep the raw response of every request for later inspection
            save_res_path = os.path.join(save_dir_path, 'pickle_files')
            make_dir(save_res_path)
            with open(os.path.join(save_res_path, '{}.pickle'.format(offset)), mode='wb') as f:
                pickle.dump(data, f)

            conn.close()
        except Exception as err:
            # print("[Errno {0}] {1}".format(err.errno, err.strerror))
            print("%s" % (err))
        else:
            decode_res = data.decode('utf-8')
            data = json.loads(decode_res)

            pattern = r"&r=(http.+)&p="  # extract an URL of image

            for values in data['value']:
                unquoted_url = urllib.parse.unquote(values['contentUrl'])
                img_url = re.search(pattern, unquoted_url)
                # if img_url:
                #     url_list.append(img_url.group(1))
                url_list.append(unquoted_url)

    for url in url_list:
        try:
            img_path = make_img_path(save_dir_path, url)
            image = download_image(url)
            save_image(img_path, image)
            print('saved image... {}'.format(url))
        except KeyboardInterrupt:
            break
        except Exception as err:
            print("%s" % (err))

    # Save the mapping from original URL to hashed file name
    correspondence_table_path = os.path.join(save_dir_path, 'corr_table')
    make_dir(correspondence_table_path)

    with open(os.path.join(correspondence_table_path, 'corr_table.json'), mode='w') as f:
        json.dump(correspondence_table, f)
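One thing to watch in the paging logic of bing_api.py: `math.floor(num_imgs_required / num_imgs_per_transaction)` drops the last, partially filled page whenever the target is not a multiple of the page size (400 images at 150 per request would give only 2 requests). Below is a minimal sketch of an alternative that rounds up. `page_offsets` is a hypothetical helper for illustration and is not part of the original script.

```python
import math


def page_offsets(num_required, per_page):
    """Return the offset for every request needed to cover num_required items.

    Hypothetical helper (not in the original script): it rounds up so that a
    final, partially filled page is still requested.
    """
    if num_required <= 0 or per_page <= 0:
        return []
    num_pages = math.ceil(num_required / per_page)
    return [page * per_page for page in range(num_pages)]


if __name__ == '__main__':
    print(page_offsets(300, 150))  # the same two requests as the script makes
    print(page_offsets(400, 150))  # one extra request for the remaining images
```

For the 300 images used here the result is the same as the original loop, so this only matters if the target count changes.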

bing_api.py reads a file named authentication.ini when it runs.

authentication.ini

[auth]

bing_api_key = XXXXX
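`ConfigParser.read()` silently skips files it cannot find, so a missing authentication.ini only shows up later as a `KeyError` on `config['auth']`. Here is a small sketch of a more explicit check, assuming the same `[auth]` section and `bing_api_key` option as above. `load_bing_api_key` is a hypothetical helper name, and it takes the INI text as a string so that it can be tried without a file.

```python
import configparser


def load_bing_api_key(ini_text):
    """Parse INI text and return the value of [auth] bing_api_key.

    Hypothetical helper: raises KeyError with a readable message when the
    section or option is missing, or when the value is empty.
    """
    config = configparser.ConfigParser()
    config.read_string(ini_text)
    if not config.has_option('auth', 'bing_api_key'):
        raise KeyError('authentication.ini needs an [auth] section with bing_api_key')
    key = config.get('auth', 'bing_api_key').strip()
    if not key:
        raise KeyError('bing_api_key is empty')
    return key


if __name__ == '__main__':
    sample = "[auth]\nbing_api_key = XXXXX\n"
    print(load_bing_api_key(sample))
```

In the script itself, the text would come from reading authentication.ini with `open(...).read()`.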

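Cropping just the faces

Used OpenCV (the same face_trim.py as last time) to cut out the face regions.

Results

- Images of Satomi Ishihara: 139

- Images of Hidetoshi Nishijima: 137

Why the counts dropped

- Face detection errors (Satomi Ishihara: 94, Hidetoshi Nishijima: 51)

- Removal of junk data (checked one image at a time by eye)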
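The numbers above can be broken down per person. This is a small sketch of the arithmetic, assuming each downloaded image yields at most one cropped face, so that whatever is missing beyond the detection errors was removed by hand. The `removed_by_hand` figures are derived under that assumption and were not reported directly.

```python
# Counts reported in this post: downloaded, face-detection errors, final faces.
counts = {
    'Satomi Ishihara': {'downloaded': 283, 'detect_errors': 94, 'kept': 139},
    'Hidetoshi Nishijima': {'downloaded': 245, 'detect_errors': 51, 'kept': 137},
}


def removed_by_hand(c):
    """Images dropped in manual cleanup, assuming one face per image."""
    detected = c['downloaded'] - c['detect_errors']
    return detected - c['kept']


if __name__ == '__main__':
    for name, c in counts.items():
        print(name, removed_by_hand(c))
```

Under that assumption, roughly 50 to 60 images per person were discarded during the manual check.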

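Preparation (splitting the train/test data and creating the label text files)

- train data: Satomi Ishihara (100 images), Hidetoshi Nishijima (100 images)

- test data: Satomi Ishihara (30 images), Hidetoshi Nishijima (30 images)

I wanted to sort out the contents of face_only (the folder of cropped face images), but the shell script below did not run for me, so I executed it by hand one line at a time.

It is not written in the script, but I split the data into train/test by taking the first 100 images and then images 101-130.

The split is not random, so I wonder whether that is okay.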

label.sh

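#!/bin/bash

# List the cropped face images, one file name per line
ls face_only > train0.txt

# Append the label " 0" to the end of every line
sed -i -e "s/\$/ 0/g" train0.txt

# Prepend "./data/" to the start of every line
sed -i -e "s@^@./data/@g" train0.txt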
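Since the split above was done by file order, here is a rough sketch of how a random but reproducible split could be done in Python, producing lines in the same `./data/<filename> <label>` form that label.sh writes. The helper name `split_and_label`, the `seed` parameter, and the placeholder file names are all assumptions for illustration, not what was actually used.

```python
import random


def split_and_label(filenames, label, n_train=100, n_test=30, seed=0,
                    prefix='./data/'):
    """Shuffle filenames reproducibly and return (train_lines, test_lines).

    Each line has the form '<prefix><filename> <label>'. The helper and its
    seed parameter are illustrative assumptions.
    """
    if len(filenames) < n_train + n_test:
        raise ValueError('not enough images for the requested split')

    shuffled = sorted(filenames)           # fixed starting order
    random.Random(seed).shuffle(shuffled)  # same shuffle for the same seed

    def to_line(name):
        return '{}{} {}'.format(prefix, name, label)

    train = [to_line(n) for n in shuffled[:n_train]]
    test = [to_line(n) for n in shuffled[n_train:n_train + n_test]]
    return train, test


if __name__ == '__main__':
    # Placeholder names; in practice this would be os.listdir('face_only').
    names = ['face_{:03d}.jpg'.format(i) for i in range(139)]
    train, test = split_and_label(names, label=0)
    print(len(train), len(test))
    print(train[0])
```

Fixing the seed keeps the split identical between runs, which makes results comparable while still avoiding any ordering effect from the download.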

Running the machine learning

Ran the same testFaceType.py as last time.

Training took maybe 5 to 10 minutes or so.

Results

$ python3 testFaceType.py

step 0, training accuracy 0.5

step 1, training accuracy 0.785

step 2, training accuracy 0.78

step 3, training accuracy 0.9

step 4, training accuracy 0.865

step 5, training accuracy 0.92

step 6, training accuracy 0.93

step 7, training accuracy 0.93

step 8, training accuracy 0.945

step 9, training accuracy 0.94

step 10, training accuracy 0.955

step 11, training accuracy 0.955

step 12, training accuracy 0.96

step 13, training accuracy 0.955

step 14, training accuracy 0.975

step 15, training accuracy 0.985

step 16, training accuracy 0.985

step 17, training accuracy 0.985

step 18, training accuracy 0.985

step 19, training accuracy 0.99

step 20, training accuracy 0.995

step 21, training accuracy 0.995

step 22, training accuracy 0.995

step 23, training accuracy 0.99

step 24, training accuracy 0.995

step 25, training accuracy 0.995

step 26, training accuracy 0.995

step 27, training accuracy 0.995

step 28, training accuracy 0.995

step 29, training accuracy 0.995

step 30, training accuracy 1

step 31, training accuracy 0.995

step 32, training accuracy 1

step 33, training accuracy 0.995

step 34, training accuracy 1

step 35, training accuracy 0.995

step 36, training accuracy 1

step 37, training accuracy 0.995

step 38, training accuracy 1

step 39, training accuracy 1

step 40, training accuracy 1

step 41, training accuracy 1

step 42, training accuracy 1

step 43, training accuracy 1

step 44, training accuracy 1

step 45, training accuracy 1

step 46, training accuracy 1

step 47, training accuracy 1

step 48, training accuracy 1

step 49, training accuracy 1

step 50, training accuracy 1

step 51, training accuracy 1

step 52, training accuracy 1

step 53, training accuracy 1

step 54, training accuracy 1

step 55, training accuracy 1

step 56, training accuracy 1

step 57, training accuracy 1

step 58, training accuracy 1

step 59, training accuracy 1

step 60, training accuracy 1

step 61, training accuracy 1

step 62, training accuracy 1

step 63, training accuracy 1

step 64, training accuracy 1

step 65, training accuracy 1

step 66, training accuracy 1

step 67, training accuracy 1

step 68, training accuracy 1

step 69, training accuracy 1

step 70, training accuracy 1

step 71, training accuracy 1

step 72, training accuracy 1

step 73, training accuracy 1

step 74, training accuracy 1

step 75, training accuracy 1

step 76, training accuracy 1

step 77, training accuracy 1

step 78, training accuracy 1

step 79, training accuracy 1

step 80, training accuracy 1

step 81, training accuracy 1

step 82, training accuracy 1

step 83, training accuracy 1

step 84, training accuracy 1

step 85, training accuracy 1

step 86, training accuracy 1

step 87, training accuracy 1

step 88, training accuracy 1

step 89, training accuracy 1

step 90, training accuracy 1

step 91, training accuracy 1

step 92, training accuracy 1

step 93, training accuracy 1

step 94, training accuracy 1

step 95, training accuracy 1

step 96, training accuracy 1

step 97, training accuracy 1

step 98, training accuracy 1

step 99, training accuracy 1

step 100, training accuracy 1

step 101, training accuracy 1

step 102, training accuracy 1

step 103, training accuracy 1

step 104, training accuracy 1

step 105, training accuracy 1

step 106, training accuracy 1

step 107, training accuracy 1

step 108, training accuracy 1

step 109, training accuracy 1

step 110, training accuracy 1

step 111, training accuracy 1

step 112, training accuracy 1

step 113, training accuracy 1

step 114, training accuracy 1

step 115, training accuracy 1

step 116, training accuracy 1

step 117, training accuracy 1

step 118, training accuracy 1

step 119, training accuracy 1

step 120, training accuracy 1

step 121, training accuracy 1

step 122, training accuracy 1

step 123, training accuracy 1

step 124, training accuracy 1

step 125, training accuracy 1

step 126, training accuracy 1

step 127, training accuracy 1

step 128, training accuracy 1

step 129, training accuracy 1

step 130, training accuracy 1

step 131, training accuracy 1

step 132, training accuracy 1

step 133, training accuracy 1

step 134, training accuracy 1

step 135, training accuracy 1

step 136, training accuracy 1

step 137, training accuracy 1

step 138, training accuracy 1

step 139, training accuracy 1

step 140, training accuracy 1

step 141, training accuracy 1

step 142, training accuracy 1

step 143, training accuracy 1

step 144, training accuracy 1

step 145, training accuracy 1

step 146, training accuracy 1

step 147, training accuracy 1

step 148, training accuracy 1

step 149, training accuracy 1

step 150, training accuracy 1

step 151, training accuracy 1

step 152, training accuracy 1

step 153, training accuracy 1

step 154, training accuracy 1

step 155, training accuracy 1

step 156, training accuracy 1

step 157, training accuracy 1

step 158, training accuracy 1

step 159, training accuracy 1

step 160, training accuracy 1

step 161, training accuracy 1

step 162, training accuracy 1

step 163, training accuracy 1

step 164, training accuracy 1

step 165, training accuracy 1

step 166, training accuracy 1

step 167, training accuracy 1

step 168, training accuracy 1

step 169, training accuracy 1

step 170, training accuracy 1

step 171, training accuracy 1

step 172, training accuracy 1

step 173, training accuracy 1

step 174, training accuracy 1

step 175, training accuracy 1

step 176, training accuracy 1

step 177, training accuracy 1

step 178, training accuracy 1

step 179, training accuracy 1

step 180, training accuracy 1

step 181, training accuracy 1

step 182, training accuracy 1

step 183, training accuracy 1

step 184, training accuracy 1

step 185, training accuracy 1

step 186, training accuracy 1

step 187, training accuracy 1

step 188, training accuracy 1

step 189, training accuracy 1

step 190, training accuracy 1

step 191, training accuracy 1

step 192, training accuracy 1

step 193, training accuracy 1

step 194, training accuracy 1

step 195, training accuracy 1

step 196, training accuracy 1

step 197, training accuracy 1

step 198, training accuracy 1

step 199, training accuracy 1

test accuracy 0.983333

The accuracy came out at 98%. Is that a good result?
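To put the number in context: the test set has 60 images (30 per person), so 0.983333 corresponds to 59 correct and 1 wrong. With so few test images the estimate is coarse. Here is a rough sketch of that arithmetic, using a Wilson score interval as one common way to express the uncertainty. The choice of interval is an assumption made here and is not something testFaceType.py computes.

```python
import math


def wilson_interval(correct, total, z=1.96):
    """Wilson score interval for a proportion (z=1.96 is roughly 95%)."""
    p = correct / total
    denom = 1 + z * z / total
    center = (p + z * z / (2 * total)) / denom
    half = z * math.sqrt(p * (1 - p) / total + z * z / (4 * total * total)) / denom
    return center - half, center + half


if __name__ == '__main__':
    total = 60                          # 30 test images per person
    correct = round(0.983333 * total)   # number of correctly classified images
    low, high = wilson_interval(correct, total)
    print(correct, total, round(low, 3), round(high, 3))
```

The interval is fairly wide for a test set of this size, so a larger test set would be needed before reading much into the second decimal place.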

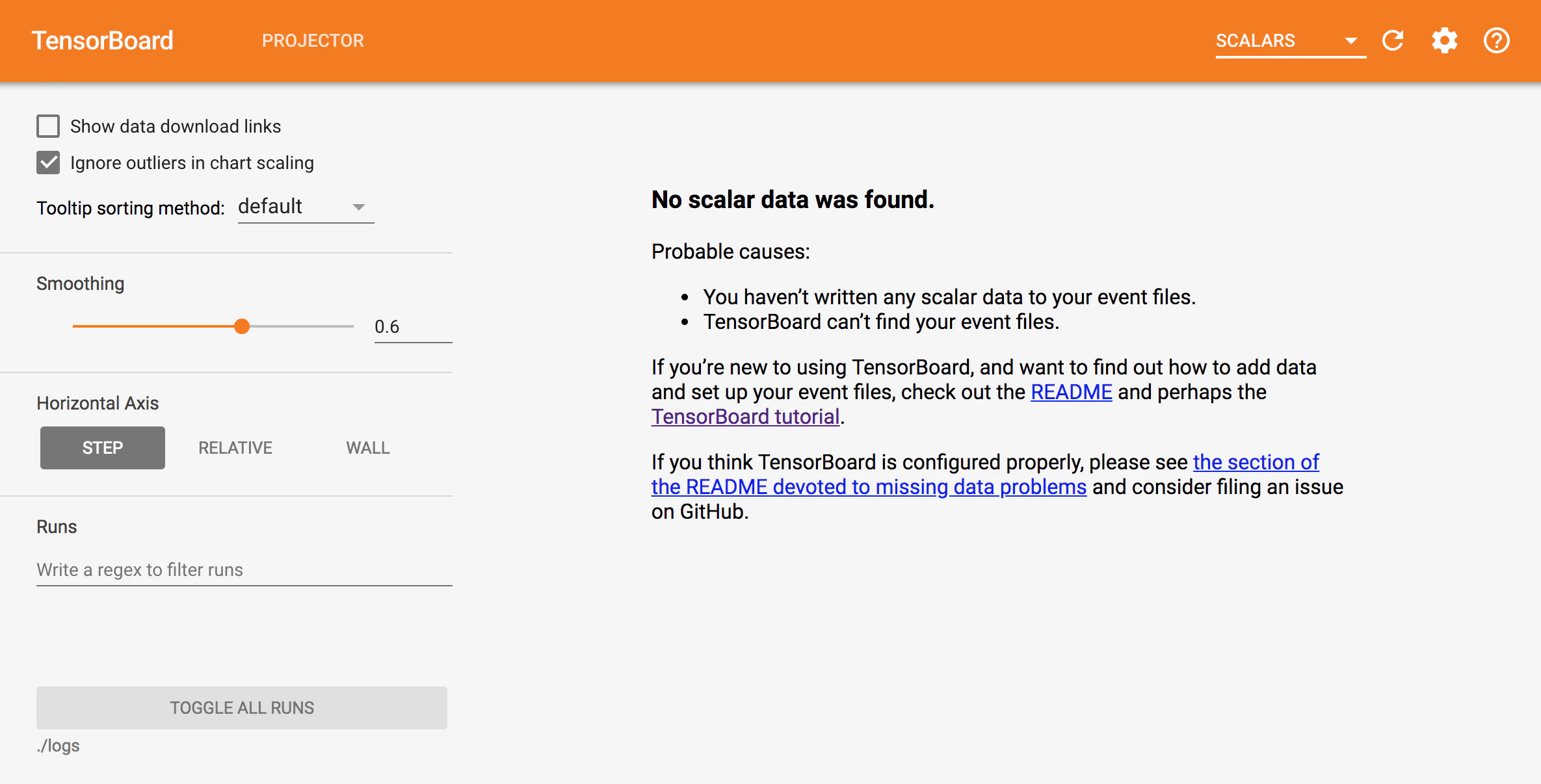

I want to look at TensorBoard, but it won't display...

I entered tensorboard --logdir=./logs in the console,

and the screenshot above is what I got when I opened localhost:6006 in the browser.
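One thing that might be worth checking when TensorBoard shows nothing is whether the directory passed to --logdir actually contains event files. TensorBoard reads files whose names start with `events.out.tfevents.`, and a relative path like ./logs is resolved from the directory where the command is run. This is a minimal sketch, assuming the training script was meant to write its summaries to ./logs. `find_event_files` is a hypothetical helper.

```python
import os


def find_event_files(logdir):
    """Return the paths of TensorBoard event files found under logdir."""
    found = []
    for root, _dirs, files in os.walk(logdir):
        for name in files:
            if name.startswith('events.out.tfevents.'):
                found.append(os.path.join(root, name))
    return sorted(found)


if __name__ == '__main__':
    files = find_event_files('./logs')
    if files:
        print('\n'.join(files))
    else:
        print('no event files under ./logs')
```

If nothing is found, either the script is not writing summaries at all or it is writing them somewhere other than the directory given to --logdir.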

I can't figure it out, so I'll call it a day for now.