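■ Introduction

Continuing from the previous post, this article summarizes decision trees, random forests, and gradient boosting.

The data is the breast cancer dataset bundled with scikit-learn, loaded with "load_breast_cancer".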

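[Intended readers]

・Those who want to learn or review the basics of these three modeling methods

・Those who do not know the theory in detail but want to build intuition by looking at an implementation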

[Outline]

・Importing the modules

・Preparing the data

・Decision tree

・Random forest

・Gradient boosting

■ Importing the modules

First, import the modules we need.

import numpy as np

import pandas as pd

import matplotlib.pyplot as plt

import graphviz

import mglearn

from sklearn.datasets import load_breast_cancer

from sklearn.model_selection import train_test_split

from sklearn.tree import DecisionTreeClassifier

from sklearn.ensemble import RandomForestClassifier

from sklearn.ensemble import GradientBoostingClassifier

from sklearn.tree import plot_tree

from sklearn.tree import export_graphviz

■ Preparing the data

We use the breast cancer dataset.

cancer = load_breast_cancer()

X, y = cancer.data, cancer.target

print(X.shape)

print(y.shape)

# (569, 30)

# (569,)

X_train, X_test, y_train, y_test = train_test_split(X, y, test_size=0.3, random_state=123)

print(X_train.shape)

print(y_train.shape)

print(X_test.shape)

print(y_test.shape)

# (398, 30)

# (398,)

# (171, 30)

# (171,)
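Since accuracy is the only metric used in this article, it can help to know how the two classes are distributed before reading the scores. The snippet below is a small sketch of that check; the variable name class_counts is introduced here for illustration and is not part of the original code.

```python
import numpy as np
from sklearn.datasets import load_breast_cancer

cancer = load_breast_cancer()

# Count the samples per class and key the counts by class name.
class_counts = {
    str(name): int(count)
    for name, count in zip(cancer.target_names, np.bincount(cancer.target))
}
print(class_counts)
```

A classifier that always predicted the larger class would already be right on a majority of the samples, which gives a baseline for the accuracies reported later.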

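A decision tree handles each feature independently, and its splits do not depend on the scale of the features, so neither normalization nor standardization is required.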
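The scale-independence claim can be checked on a small example. The sketch below uses hypothetical toy data (not the breast cancer dataset) and rescales each column by a different positive factor; powers of two are chosen as an assumption so that the floating-point arithmetic stays exact. The names X_toy, y_toy and scale are made up for this illustration.

```python
import numpy as np
from sklearn.tree import DecisionTreeClassifier

rng = np.random.default_rng(0)

# Hypothetical toy data: 4 integer-valued features, label depends on the first two.
X_toy = rng.integers(0, 100, size=(300, 4)).astype(float)
y_toy = (X_toy[:, 0] + X_toy[:, 1] > 100).astype(int)

# Rescale every column by a different positive factor.
scale = np.array([1.0, 1024.0, 1.0 / 1024.0, 2.0 ** 20])
X_scaled = X_toy * scale

# Fit one tree on the raw features and one on the rescaled features.
tree_raw = DecisionTreeClassifier(random_state=0).fit(X_toy[:200], y_toy[:200])
tree_scaled = DecisionTreeClassifier(random_state=0).fit(X_scaled[:200], y_toy[:200])

# Compare predictions on the 100 held-out rows.
pred_raw = tree_raw.predict(X_toy[200:])
pred_scaled = tree_scaled.predict(X_scaled[200:])
same = np.array_equal(pred_raw, pred_scaled)
print(same)
```

Both trees choose their splits from the ordering of the values within each feature, and rescaling a column by a positive factor leaves that ordering unchanged.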

1. Decision tree

We compare accuracy on the training and test sets as the tree depth (max_depth) changes.

tree = DecisionTreeClassifier(max_depth=None, random_state=0)  # max_depth=None (the default): no depth limit

tree.fit(X_train, y_train)

print('Accuracy on training set:{:.3f}'.format(tree.score(X_train, y_train)))

print('Accuracy on test set:{:.3f}'.format(tree.score(X_test, y_test)))

# Accuracy on training set:1.000

# Accuracy on test set:0.947

tree = DecisionTreeClassifier(max_depth=2, random_state=0)

tree.fit(X_train, y_train)

print('Accuracy on training set:{:.3f}'.format(tree.score(X_train, y_train)))

print('Accuracy on test set:{:.3f}'.format(tree.score(X_test, y_test)))

# Accuracy on training set:0.937

# Accuracy on test set:0.947

tree = DecisionTreeClassifier(max_depth=4, random_state=0)

tree.fit(X_train, y_train)

print('Accuracy on training set:{:.3f}'.format(tree.score(X_train, y_train)))

print('Accuracy on test set:{:.3f}'.format(tree.score(X_test, y_test)))

# Accuracy on training set:0.987

# Accuracy on test set:0.965
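The runs above differ only in max_depth, so the comparison can also be written as a loop. This is a minimal sketch that re-creates the same split; the list of depths (including None for no depth limit) and the dictionary name depth_scores are illustrative choices, not part of the original code.

```python
from sklearn.datasets import load_breast_cancer
from sklearn.model_selection import train_test_split
from sklearn.tree import DecisionTreeClassifier

cancer = load_breast_cancer()
X_train, X_test, y_train, y_test = train_test_split(
    cancer.data, cancer.target, test_size=0.3, random_state=123)

depth_scores = {}
for depth in [1, 2, 4, None]:  # None means the tree is grown without a depth limit
    tree = DecisionTreeClassifier(max_depth=depth, random_state=0)
    tree.fit(X_train, y_train)
    # Store (training accuracy, test accuracy) for this depth.
    depth_scores[depth] = (tree.score(X_train, y_train), tree.score(X_test, y_test))
    print('max_depth={}: train={:.3f}, test={:.3f}'.format(depth, *depth_scores[depth]))
```

A large gap between the training and test accuracy at a given depth is the usual sign that the tree is overfitting.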

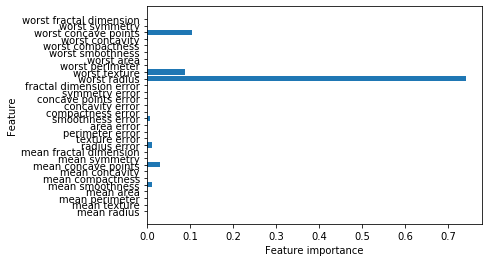

Next, we check the feature importances.

print('Feature importances:\n{}'.format(tree.feature_importances_))

'''

Feature importances:

[0. 0. 0. 0. 0.01265269 0.

0. 0.03105661 0. 0. 0.01105151 0.

0. 0. 0.00854057 0. 0. 0.

0. 0. 0.74107768 0.08863705 0. 0.

0. 0. 0.00275035 0.10423353 0. 0. ]

'''

We also plot them.

def plot_feature_importances_cancer(model):
    # Draw a horizontal bar chart with one bar per feature.
    n_features = cancer.data.shape[1]  # number of features
    plt.barh(range(n_features), model.feature_importances_, align='center')
    plt.yticks(np.arange(n_features), cancer.feature_names)
    plt.xlabel('Feature importance')
    plt.ylabel('Feature')

plot_feature_importances_cancer(tree)
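The raw importance array is hard to read without feature names. As a complement to the plot, the following sketch lists the most important features by name; it refits the depth-4 tree on the same split, and the names order and top5 are introduced here for illustration.

```python
import numpy as np
from sklearn.datasets import load_breast_cancer
from sklearn.model_selection import train_test_split
from sklearn.tree import DecisionTreeClassifier

cancer = load_breast_cancer()
X_train, X_test, y_train, y_test = train_test_split(
    cancer.data, cancer.target, test_size=0.3, random_state=123)
tree = DecisionTreeClassifier(max_depth=4, random_state=0).fit(X_train, y_train)

# Feature indices sorted from most to least important.
order = np.argsort(tree.feature_importances_)[::-1]
top5 = [(str(cancer.feature_names[i]), float(tree.feature_importances_[i]))
        for i in order[:5]]
for name, importance in top5:
    print('{:<25s} {:.3f}'.format(name, importance))
```

The importances sum to 1, so each value can be read as that feature's share of the total impurity reduction in the tree.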

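2. Random forest

A random forest classifies using multiple decision trees.

Specifically, it builds many different trees by giving each tree a different sample of the training data and by changing which features are considered at each node.

Finally, it averages the class probabilities predicted by the trees and outputs the class with the highest average.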

forest = RandomForestClassifier(n_estimators=5, random_state=0)

forest.fit(X_train, y_train)

print('Accuracy on training set: {:.3f}'.format(forest.score(X_train, y_train)))

print('Accuracy on test set: {:.3f}'.format(forest.score(X_test, y_test)))

# Accuracy on training set: 0.992

# Accuracy on test set: 0.959

forest = RandomForestClassifier(n_estimators=7, random_state=0)

forest.fit(X_train, y_train)

print('Accuracy on training set: {:.3f}'.format(forest.score(X_train, y_train)))

print('Accuracy on test set: {:.3f}'.format(forest.score(X_test, y_test)))

# Accuracy on training set: 0.997

# Accuracy on test set: 0.982

forest = RandomForestClassifier(n_estimators=10, random_state=0)

forest.fit(X_train, y_train)

print('Accuracy on training set: {:.3f}'.format(forest.score(X_train, y_train)))

print('Accuracy on test set: {:.3f}'.format(forest.score(X_test, y_test)))

# Accuracy on training set: 0.997

# Accuracy on test set: 0.982

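n_estimators: the number of decision trees

Test accuracy (generalization performance) improves over the single decision tree: 0.982 with 7 or 10 trees, compared with 0.965 for the depth-4 tree.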
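The averaging step described at the start of this section can be verified directly from a fitted forest. The sketch below, which assumes the same split as before, averages the per-tree probabilities by hand and compares the result with the forest's own output; mean_proba and manual_pred are names made up for this check.

```python
import numpy as np
from sklearn.datasets import load_breast_cancer
from sklearn.ensemble import RandomForestClassifier
from sklearn.model_selection import train_test_split

cancer = load_breast_cancer()
X_train, X_test, y_train, y_test = train_test_split(
    cancer.data, cancer.target, test_size=0.3, random_state=123)
forest = RandomForestClassifier(n_estimators=10, random_state=0).fit(X_train, y_train)

# Average the class probabilities predicted by the individual trees.
tree_probas = np.stack([t.predict_proba(X_test) for t in forest.estimators_])
mean_proba = tree_probas.mean(axis=0)

# Compare the hand-computed average with the forest's probabilities.
matches_average = np.allclose(mean_proba, forest.predict_proba(X_test))

# Pick the class with the highest averaged probability and compare with predict().
manual_pred = forest.classes_[np.argmax(mean_proba, axis=1)]
matches_predict = np.array_equal(manual_pred, forest.predict(X_test))
print(matches_average, matches_predict)
```

The individual trees are available through the estimators_ attribute, so each one can also be inspected or plotted on its own.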

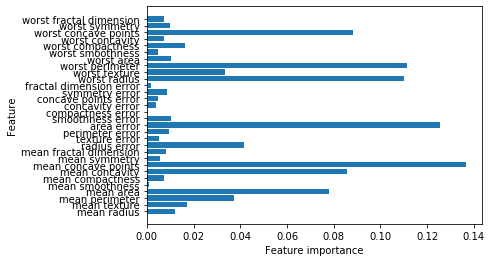

We check the feature importances.

plot_feature_importances_cancer(forest)

Because the forest combines many different decision trees, it draws on more features than a single tree does.

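3. Gradient boosting

Whereas a random forest averages the predictions of many decision trees, gradient boosting builds its trees one after another, with each new tree correcting the errors left by the trees before it.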

gbrt = GradientBoostingClassifier(random_state=0, max_depth=3, learning_rate=0.1)

gbrt.fit(X_train, y_train)

print('Accuracy Training set score: {:.3f}'.format(gbrt.score(X_train, y_train)))

print('Accuracy Test set score: {:.3f}'.format(gbrt.score(X_test, y_test)))

# Accuracy Training set score: 1.000

# Accuracy Test set score: 0.965

gbrt = GradientBoostingClassifier(random_state=0, max_depth=1, learning_rate=0.7)

gbrt.fit(X_train, y_train)

print('Training set score: {:.3f}'.format(gbrt.score(X_train, y_train)))

print('Test set score: {:.3f}'.format(gbrt.score(X_test, y_test)))

# Training set score: 1.000

# Test set score: 0.977

gbrt = GradientBoostingClassifier(random_state=0, max_depth=1, learning_rate=0.1)

gbrt.fit(X_train, y_train)

print('Accuracy Training set score: {:.3f}'.format(gbrt.score(X_train, y_train)))

print('Accuracy Test set score: {:.3f}'.format(gbrt.score(X_test, y_test)))

# Accuracy Training set score: 0.992

# Accuracy Test set score: 0.982

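learning_rate: the learning rate, which controls how strongly each tree corrects the errors of the trees built before it

Gradient boosting is sensitive to its parameters, but when they are set well it tends to perform strongly.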
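Because the trees are added one at a time, the test accuracy can be tracked as the ensemble grows. The following sketch uses scikit-learn's staged_predict for this, on the same split and with the max_depth=1, learning_rate=0.1 setting from above; the checkpoints printed (1, 10, 50 and 100 trees) are an arbitrary choice for illustration.

```python
import numpy as np
from sklearn.datasets import load_breast_cancer
from sklearn.ensemble import GradientBoostingClassifier
from sklearn.model_selection import train_test_split

cancer = load_breast_cancer()
X_train, X_test, y_train, y_test = train_test_split(
    cancer.data, cancer.target, test_size=0.3, random_state=123)

gbrt = GradientBoostingClassifier(random_state=0, max_depth=1, learning_rate=0.1)
gbrt.fit(X_train, y_train)

# staged_predict yields the ensemble's predictions after 1, 2, ..., n_estimators trees.
staged_acc = [float(np.mean(pred == y_test)) for pred in gbrt.staged_predict(X_test)]
for n_trees in (1, 10, 50, 100):
    print('{:3d} trees: test accuracy {:.3f}'.format(n_trees, staged_acc[n_trees - 1]))
```

A smaller learning_rate generally needs more trees to reach the same accuracy, so the two parameters are usually tuned together.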

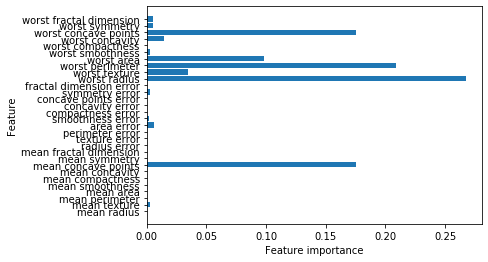

We also look at the feature importances.

gbrt = GradientBoostingClassifier(random_state=0, max_depth=1, learning_rate=0.1)

gbrt.fit(X_train, y_train)

plot_feature_importances_cancer(gbrt)

The result resembles the random forest, but some features are ignored entirely: their importance is exactly zero.
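To put a number on how many features each model actually uses, one option is to count the non-zero entries of feature_importances_. This is a rough sketch on the same split, using the model settings from this article; the dictionary names models and used are introduced only for this comparison.

```python
import numpy as np
from sklearn.datasets import load_breast_cancer
from sklearn.ensemble import GradientBoostingClassifier, RandomForestClassifier
from sklearn.model_selection import train_test_split
from sklearn.tree import DecisionTreeClassifier

cancer = load_breast_cancer()
X_train, X_test, y_train, y_test = train_test_split(
    cancer.data, cancer.target, test_size=0.3, random_state=123)

models = {
    'decision tree': DecisionTreeClassifier(max_depth=4, random_state=0),
    'random forest': RandomForestClassifier(n_estimators=10, random_state=0),
    'gradient boosting': GradientBoostingClassifier(
        random_state=0, max_depth=1, learning_rate=0.1),
}

used = {}
for name, model in models.items():
    model.fit(X_train, y_train)
    # A feature counts as "used" when its importance is non-zero.
    used[name] = int(np.count_nonzero(model.feature_importances_))
    print('{:<18s} uses {:2d} of {} features'.format(
        name, used[name], cancer.data.shape[1]))
```

A depth-4 tree has at most 15 internal nodes, so it can never use more than 15 of the 30 features, whereas the two ensembles are not limited in this way.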

■ Conclusion

When modeling with decision trees, it is a good idea to try a random forest first and then move on to gradient boosting.

■ Closing

In this article we compared decision trees, random forests, and gradient boosting.

I hope it is useful to as many readers as possible.