Reference

A Recipe for a Hot Dog Detector with Deep Learning

Details of the Food / Non-Food Classification Model in 料理きろく (Ryori Kiroku)

Library

import os

import numpy as np

import matplotlib.pyplot as plt

%matplotlib inline

from PIL import Image

from keras.preprocessing.image import ImageDataGenerator

from keras.applications.resnet50 import ResNet50

from keras.models import Model

from keras.layers import Conv2D, SpatialDropout2D, GlobalAveragePooling2D

from keras.layers import Activation

from keras.regularizers import l2

from keras.callbacks import EarlyStopping

Data

train_path = '../input/hot-dog-not-hot-dog/seefood/train'

test_path = '../input/hot-dog-not-hot-dog/seefood/test'

train_hot_dog_path = '../input/hot-dog-not-hot-dog/seefood/train/hot_dog'

train_not_hot_dog_path = '../input/hot-dog-not-hot-dog/seefood/train/not_hot_dog'

train_data_hd = [os.path.join(train_hot_dog_path, filename)

                 for filename in os.listdir(train_hot_dog_path)]

train_data_nhd = [os.path.join(train_not_hot_dog_path, filename)

                  for filename in os.listdir(train_not_hot_dog_path)]

test_hot_dog_path = '../input/hot-dog-not-hot-dog/seefood/test/hot_dog'

test_not_hot_dog_path = '../input/hot-dog-not-hot-dog/seefood/test/not_hot_dog'

test_data_hd = [os.path.join(test_hot_dog_path, filename)

                for filename in os.listdir(test_hot_dog_path)]

test_data_nhd = [os.path.join(test_not_hot_dog_path, filename)

                 for filename in os.listdir(test_not_hot_dog_path)]
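The four list comprehensions above repeat one pattern: list a class directory and join each file name onto its path. A minimal sketch of a helper that does the same thing follows. The name `list_images` is hypothetical, and the sketch assumes every regular file in a class directory is an image. It also sorts the names, which the notebook does not do, so that the lists are reproducible across runs.

```python
import os


def list_images(directory):
    """Return the sorted full paths of the regular files in `directory`."""
    paths = []
    for filename in sorted(os.listdir(directory)):
        path = os.path.join(directory, filename)
        # Skip sub-directories so only files end up in the list.
        if os.path.isfile(path):
            paths.append(path)
    return paths
```

With this helper, a call such as `list_images(train_hot_dog_path)` would stand in for the corresponding comprehension.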

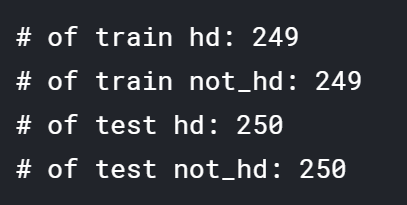

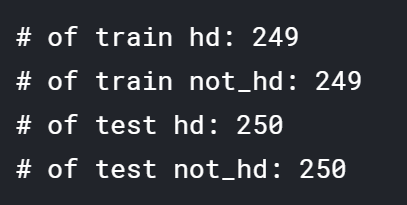

print ('# of train hd:', len(train_data_hd))

print ('# of train not_hd:', len(train_data_nhd))

print ('# of test hd:', len(test_data_hd))

print ('# of test not_hd:', len(test_data_nhd))

img_size = 224

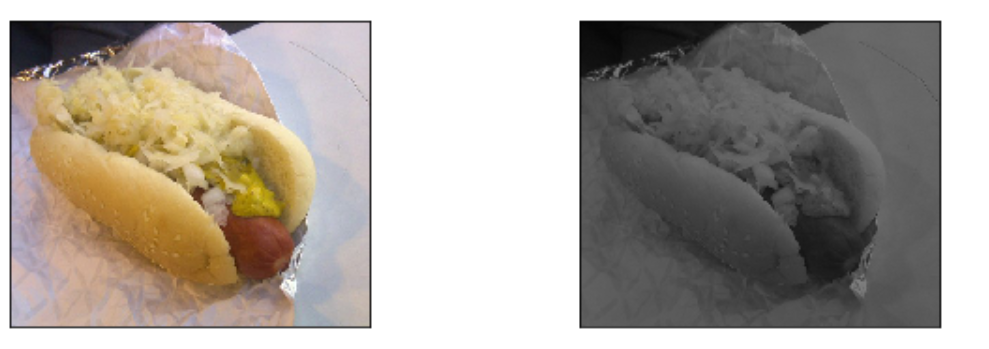

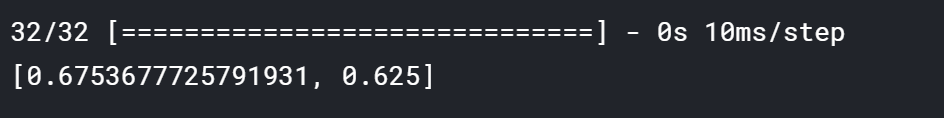

index = np.random.randint(len(train_data_hd))

print (train_data_hd[index])

img_hd = Image.open(train_data_hd[index]).resize((img_size, img_size))

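# Draw a separate index for the second class: the two lists may differ in length.

index_nhd = np.random.randint(len(train_data_nhd))

print (train_data_nhd[index_nhd])

img_nhd = Image.open(train_data_nhd[index_nhd]).resize((img_size, img_size))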

fig = plt.figure(figsize = (10, 3))

ax1 = fig.add_subplot(1, 2, 1)

ax1.imshow(img_hd)

ax1.set_xticks([])

ax1.set_yticks([])

ax1.set_title('Hot Dog')

ax2 = fig.add_subplot(1, 2, 2)

ax2.imshow(img_nhd)

ax2.set_xticks([])

ax2.set_yticks([])

ax2.set_title('Not Hot Dog')

plt.show()

img_size = 224

batch_size = 32

train_datagen = ImageDataGenerator(

    rescale=1./255,

    shear_range=0.2,

    zoom_range=0.2,

    horizontal_flip=True)

test_datagen = ImageDataGenerator(rescale=1./255)

train_generator = train_datagen.flow_from_directory(

    train_path,

    target_size=(img_size, img_size),

    batch_size=batch_size,

    class_mode='categorical',

    classes=['not_hot_dog', 'hot_dog']

)

validation_generator = test_datagen.flow_from_directory(

    test_path,

    target_size=(img_size, img_size),

    batch_size=batch_size,

    class_mode='categorical',

    classes=['not_hot_dog', 'hot_dog']

)
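Because `classes=['not_hot_dog', 'hot_dog']` is passed explicitly, the position in that list becomes the class index, and `class_mode='categorical'` turns each index into a one-hot row. The sketch below reproduces that mapping in plain NumPy to make the label layout concrete. The helper `one_hot` is a hypothetical name for illustration, not part of Keras.

```python
import numpy as np

# Same order as the `classes` argument passed to flow_from_directory above.
classes = ['not_hot_dog', 'hot_dog']
class_indices = {name: i for i, name in enumerate(classes)}


def one_hot(labels, num_classes):
    """Turn a list of integer class indices into one-hot rows."""
    encoded = np.zeros((len(labels), num_classes), dtype=np.float32)
    encoded[np.arange(len(labels)), labels] = 1.0
    return encoded


labels = [class_indices['hot_dog'], class_indices['not_hot_dog']]
print(one_hot(labels, len(classes)))
```

A hot dog image is therefore labelled `[0, 1]` and any other image `[1, 0]`, so column 1 of the model output is the hot dog score.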

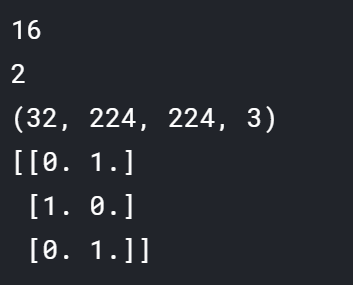

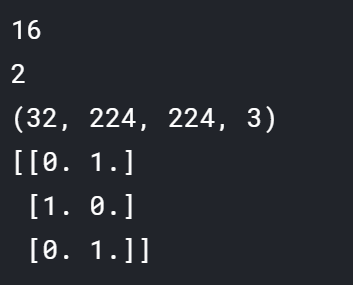

print (len(train_generator))

print (len(train_generator[0]))

print (train_generator[0][0].shape)

print (train_generator[0][1][:3])
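The first value printed above, `len(train_generator)`, is the number of batches per epoch, not the number of images. Each `train_generator[i]` is an `(images, labels)` pair, which is why its length is 2. A small sketch of the batch-count arithmetic follows, using a hypothetical sample count rather than the real size of this dataset.

```python
import math


def batches_per_epoch(num_samples, batch_size):
    """Number of batches needed to see every sample once (the last one may be partial)."""
    return math.ceil(num_samples / batch_size)


# Hypothetical sample count, chosen only for illustration.
print(batches_per_epoch(100, 32))  # 4 batches: 32 + 32 + 32 + 4
```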

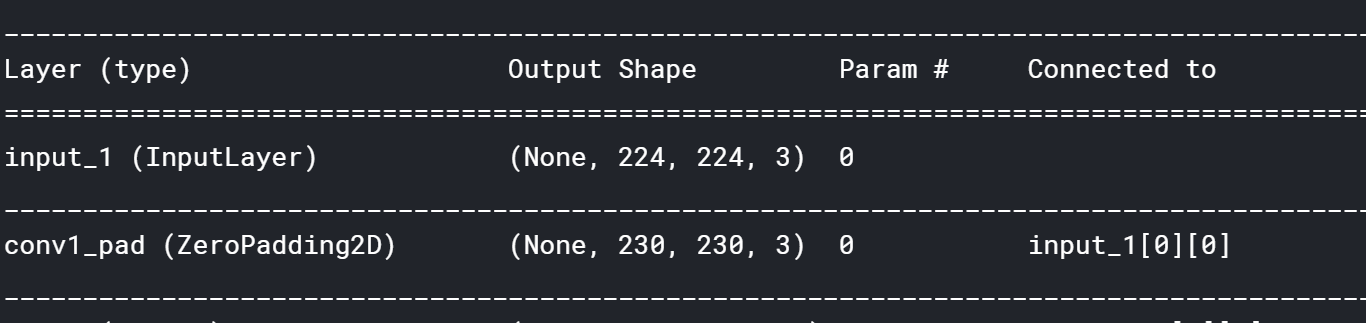

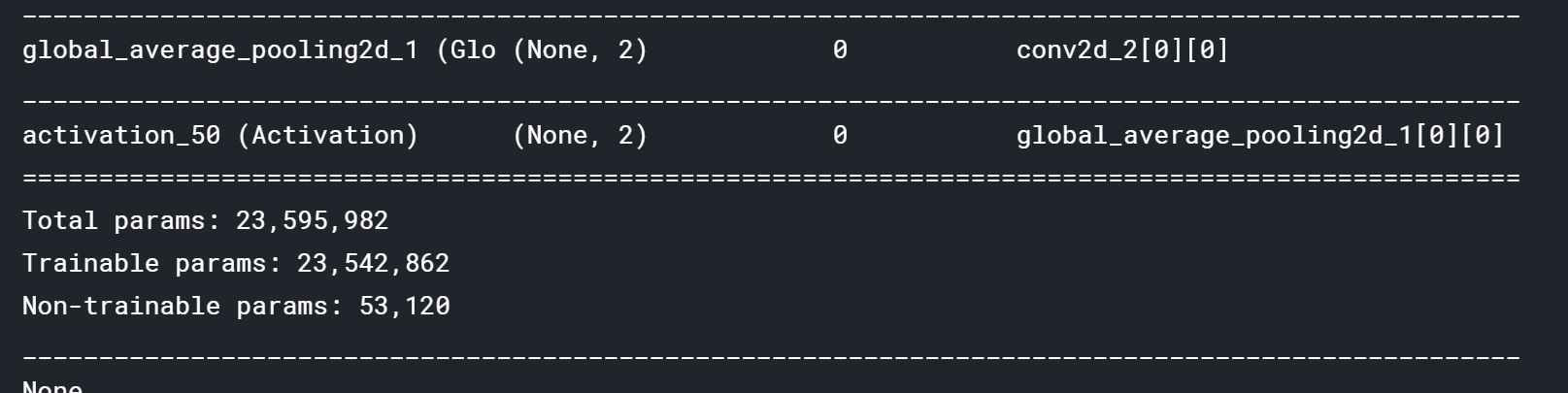

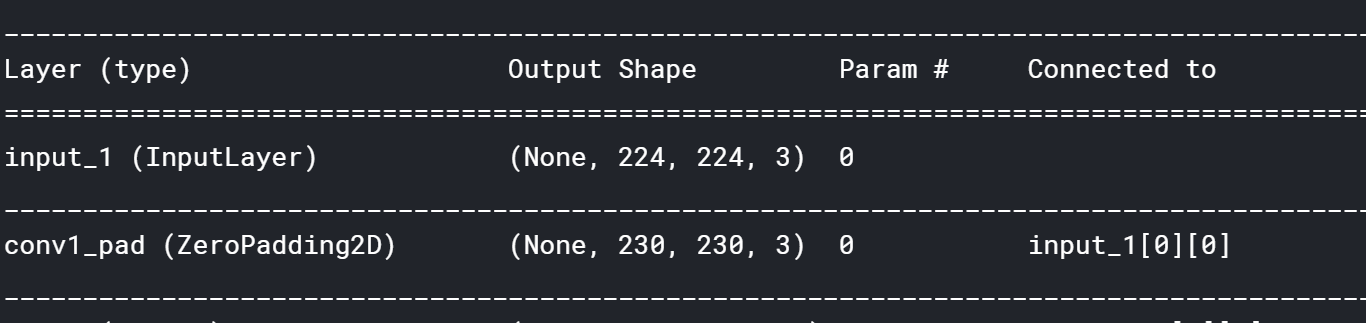

ResNet

weights = '../input/resnet50/resnet50_weights_tf_dim_ordering_tf_kernels_notop.h5'

base_model = ResNet50(input_shape=(img_size, img_size, 3),

                      weights=weights,

                      include_top=False)

x = base_model.output

x = SpatialDropout2D(0.3)(x)

x = Conv2D(4, (1,1), activation="relu", use_bias=True,

           kernel_regularizer=l2(0.001))(x)

x = SpatialDropout2D(0.3)(x)

patch = Conv2D(2, (3, 3), activation="softmax", use_bias=True,

               padding="same",

               kernel_regularizer=l2(0.001))(x)

patch_model = Model(inputs=base_model.input, outputs=patch)
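For a 224×224 input, ResNet50 without its top layers downsamples by a factor of 32, so `base_model.output` is a 7×7 grid with 2048 channels. The head above then classifies each grid cell separately. The NumPy sketch below walks through the shapes only: the weights are random stand-ins, dropout is omitted because it is inactive at inference, and `conv3x3_same` is a hypothetical helper written for illustration.

```python
import numpy as np

rng = np.random.default_rng(0)

# Stand-in for the ResNet50 feature map of one image: 7x7 positions, 2048 channels.
features = rng.standard_normal((7, 7, 2048))

# A 1x1 convolution is the same dense layer applied at every spatial position.
kernel1 = rng.standard_normal((2048, 4)) * 0.01   # random weights, illustration only
reduced = np.maximum(features @ kernel1, 0.0)     # ReLU, shape (7, 7, 4)


def conv3x3_same(x, kernel, bias):
    """3x3 convolution with zero padding so the output keeps the input's height and width."""
    h, w, _ = x.shape
    padded = np.pad(x, ((1, 1), (1, 1), (0, 0)))
    out = np.zeros((h, w, kernel.shape[-1]))
    for i in range(h):
        for j in range(w):
            window = padded[i:i + 3, j:j + 3, :]              # (3, 3, c_in)
            out[i, j] = np.tensordot(window, kernel, axes=3) + bias
    return out


def softmax(x, axis=-1):
    """Numerically stable softmax along `axis`."""
    exp = np.exp(x - x.max(axis=axis, keepdims=True))
    return exp / exp.sum(axis=axis, keepdims=True)


kernel3 = rng.standard_normal((3, 3, 4, 2))       # random weights, illustration only
scores = conv3x3_same(reduced, kernel3, np.zeros(2))
patch = softmax(scores, axis=-1)                  # two class probabilities per grid cell

print(reduced.shape, patch.shape)
```

Each of the 49 cells ends up with a pair of probabilities that sums to 1, which is what `patch_model` returns for one image.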

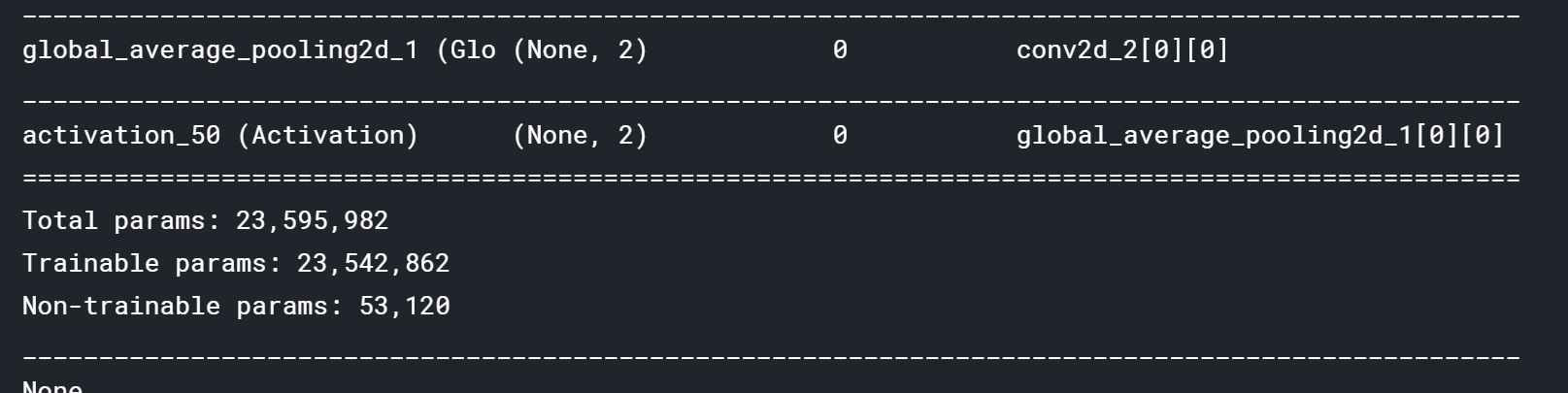

x = GlobalAveragePooling2D()(patch)

logits = Activation("softmax")(x)

classifier = Model(inputs=base_model.input, outputs=logits)

# summary() prints the table itself and returns None, so wrapping it in print() also prints "None".

patch_model.summary()

classifier.summary()

# Freeze the pretrained ResNet50 layers so that only the new head is trained.

for layer in base_model.layers:

    layer.trainable = False

classifier.compile(optimizer="adam",

                   loss="categorical_crossentropy", metrics=["accuracy"])
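The classifier averages the per-patch probabilities over the 7×7 grid and then applies a second softmax. Since the pooled values already lie in [0, 1] and sum to 1, that second softmax compresses them. The sketch below works through the extreme case in NumPy. `classify_from_patches` is a hypothetical helper that mirrors the two layers, not code taken from Keras.

```python
import numpy as np


def softmax(x):
    """Numerically stable softmax of a 1-D vector."""
    exp = np.exp(x - np.max(x))
    return exp / exp.sum()


def classify_from_patches(patch):
    """Average the per-patch probabilities, then apply softmax once more."""
    pooled = patch.mean(axis=(0, 1))      # GlobalAveragePooling2D over the 7x7 grid
    return pooled, softmax(pooled)


# Extreme case: every patch is certain the image is a hot dog (class index 1).
patch = np.zeros((7, 7, 2))
patch[..., 1] = 1.0
pooled, probs = classify_from_patches(patch)
print(pooled)   # [0. 1.]
print(probs)    # roughly [0.269 0.731]
```

Even when every patch is fully confident, the final hot dog score stays near 0.731, so classifier outputs in this design range between about 0.269 and 0.731 rather than 0 and 1.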

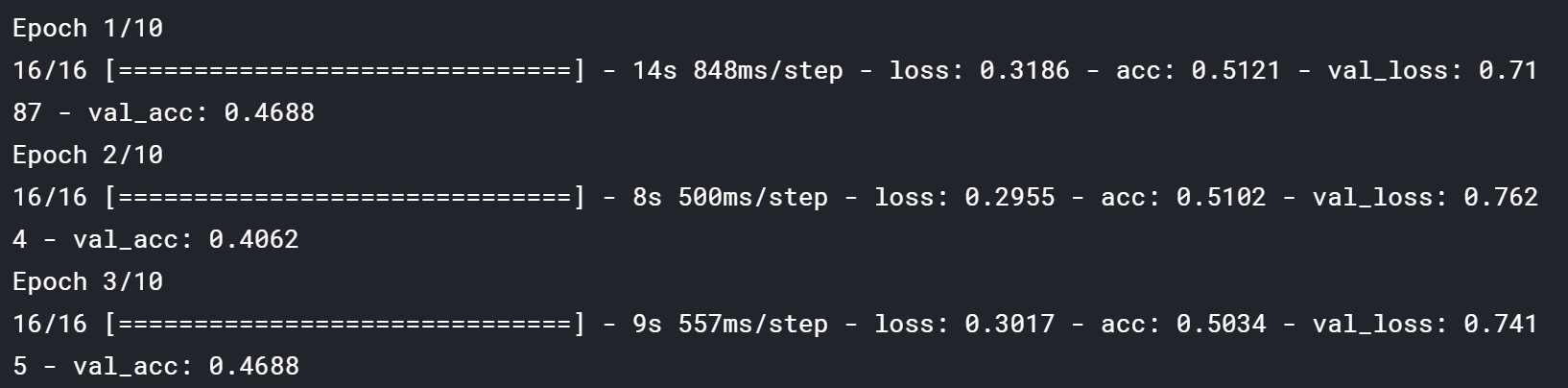

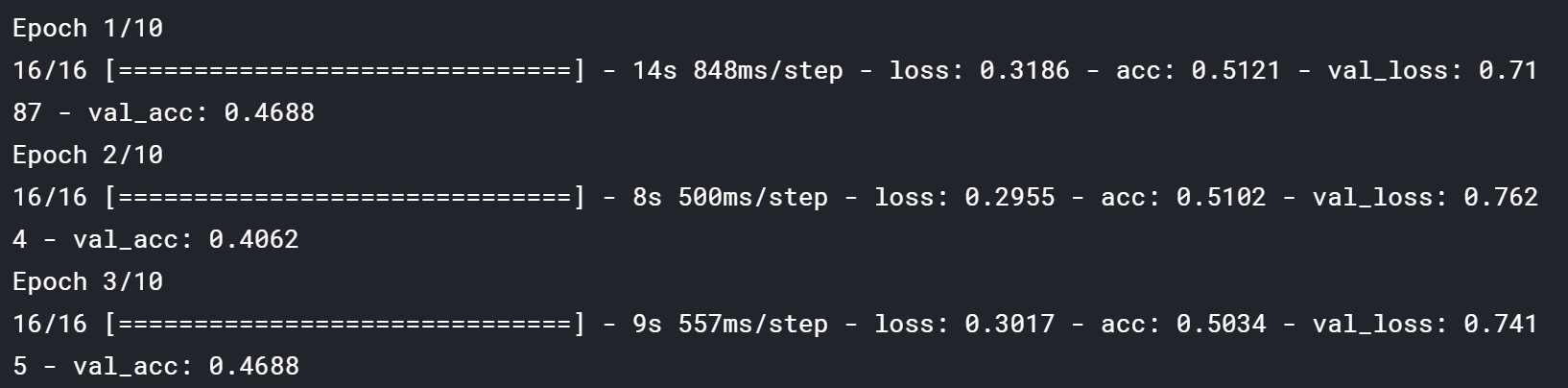

%%time

epochs = 10

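# The monitored name depends on the Keras version: older releases log 'val_acc', newer ones 'val_accuracy'.

earlystop = EarlyStopping(monitor='val_acc', mode='max', patience=3, verbose=1)

# class_weight keys follow the `classes` order: 0 = not_hot_dog, 1 = hot_dog.

# validation_steps=1 scores a single batch per epoch; len(validation_generator) would cover the whole validation set.

history = classifier.fit_generator(train_generator,

                                   steps_per_epoch=len(train_generator),

                                   class_weight={0: .75, 1: .25},

                                   epochs=epochs,

                                   validation_data=validation_generator,

                                   validation_steps=1,

                                   callbacks=[earlystop],

                                   verbose=1)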
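The `EarlyStopping` callback above stops training once the monitored value has failed to improve for `patience` consecutive epochs. The following is a simplified sketch of that rule for a metric that should increase (`mode='max'`). It assumes no minimum improvement threshold, and `early_stop_epoch` is a hypothetical name rather than a Keras function.

```python
def early_stop_epoch(values, patience):
    """Return the 0-based epoch at which training would stop, or None if it never stops."""
    best = float("-inf")
    wait = 0
    for epoch, value in enumerate(values):
        if value > best:
            # Strict improvement resets the counter.
            best = value
            wait = 0
        else:
            wait += 1
            if wait >= patience:
                return epoch
    return None


# Hypothetical validation accuracies, one per epoch.
val_acc = [0.80, 0.85, 0.84, 0.85, 0.83, 0.90]
print(early_stop_epoch(val_acc, patience=3))  # 4
```

In this made-up run a tie with the best value does not count as an improvement, so training stops at epoch 4 and never reaches the better epoch 5. That is one reason a noisy validation metric can end training early.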

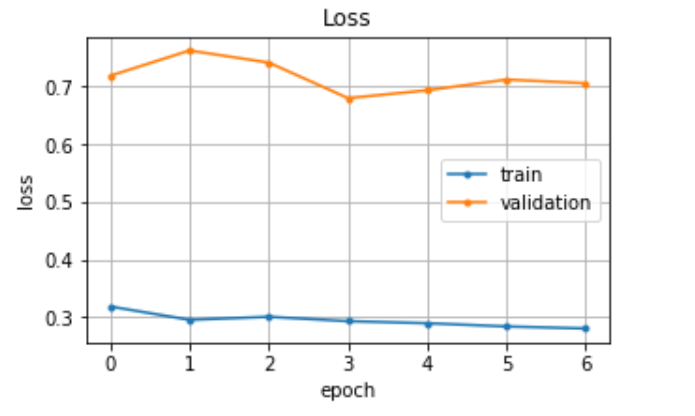

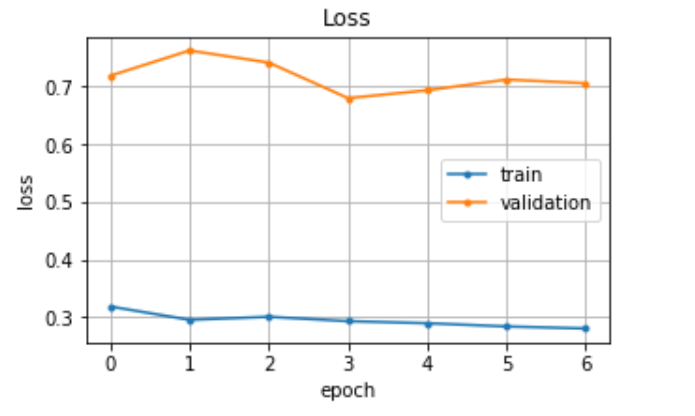

plt.figure(figsize =(5,3))

plt.plot(history.history['loss'], marker='.', label='train')

plt.plot(history.history['val_loss'], marker='.', label='validation')

plt.title('Loss')

plt.grid(True)

plt.xlabel('epoch')

plt.ylabel('loss')

plt.legend(loc='best')

plt.show()

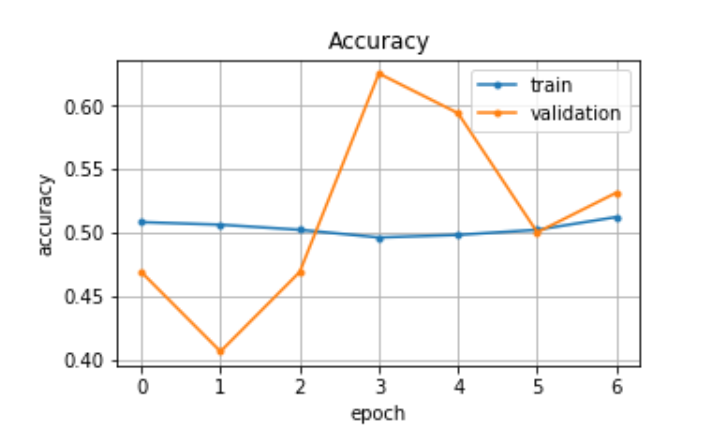

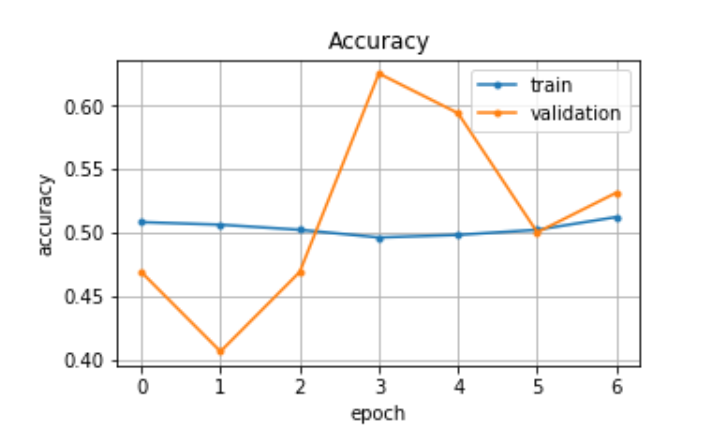

plt.figure(figsize =(5,3))

plt.plot(history.history['acc'], marker='.', label='train')

plt.plot(history.history['val_acc'], marker='.', label='validation')

plt.title('Accuracy')

plt.grid(True)

plt.xlabel('epoch')

plt.ylabel('accuracy')

plt.legend(loc='best')

plt.show()

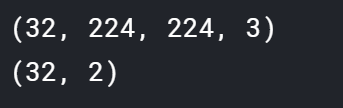

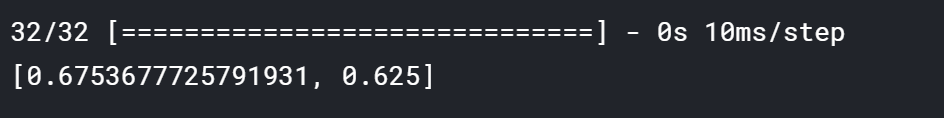

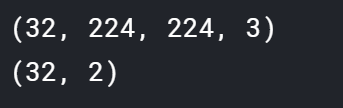

output_x = (validation_generator[0][0])

output_y = validation_generator[0][1]

print (output_x.shape)

print (output_y.shape)

print(classifier.evaluate(output_x, output_y))
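The cell above evaluates the classifier on a single validation batch, so the reported accuracy is the share of that one batch classified correctly. The sketch below shows how accuracy follows from one-hot labels and predicted probabilities. The arrays are made up for illustration and `categorical_accuracy` is a hypothetical helper.

```python
import numpy as np


def categorical_accuracy(y_true, y_pred):
    """Fraction of rows whose predicted class (argmax) matches the one-hot label."""
    return float(np.mean(np.argmax(y_true, axis=1) == np.argmax(y_pred, axis=1)))


# Made-up labels and predictions for four images.
y_true = np.array([[0, 1], [1, 0], [0, 1], [1, 0]])
y_pred = np.array([[0.3, 0.7], [0.6, 0.4], [0.55, 0.45], [0.9, 0.1]])
print(categorical_accuracy(y_true, y_pred))  # 0.75
```

With a batch size of 32, each misclassified image shifts a single-batch accuracy by about three percentage points, so an estimate over the full validation set would be steadier.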

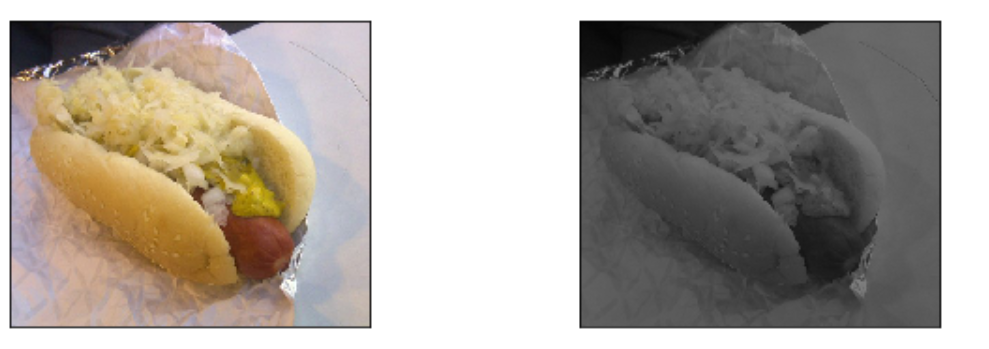

img_size = 224

threshold = 0.99

border = 8

index = np.random.randint(len(train_data_hd))

path = train_data_hd[index]

img = Image.open(path).convert("RGB")  # ensure 3 channels for the model and for Image.composite

data = np.array(img.resize((img_size, img_size)))/255.0

patches = patch_model.predict(data[np.newaxis])

masks = patches[0].transpose(2, 0, 1)

masks = masks[1] - masks[0]

masks = np.clip(masks, threshold, 1.0)

masks = 255.0 * (masks - threshold) / (1.0 - threshold)

masks = Image.fromarray(masks.astype(np.uint8)).resize(img.size, Image.BICUBIC)

grayscale = img.convert("L").convert("RGB").point(lambda p: p * 0.5)

composite = Image.composite(img, grayscale, masks)

# canvas = Image.new(mode="RGB", size=(img.width * 2 + border, img.height),

# color="white")

# canvas.paste(img, (0,0))

# canvas.paste(composite, (img.width + border, 0))

# canvas

fig = plt.figure(figsize = (10, 3))

ax1 = fig.add_subplot(1, 2, 1)

ax1.imshow(img)

ax1.set_xticks([])

ax1.set_yticks([])

ax2 = fig.add_subplot(1, 2, 2)

ax2.imshow(composite)

ax2.set_xticks([])

ax2.set_yticks([])

plt.show()
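The mask arithmetic in the cell above can be checked on a tiny array. The sketch below repeats the transpose, subtract, clip and rescale steps in a hypothetical helper named `patches_to_mask`. It uses a 2×2 patch map and a threshold of 0.5 (the notebook uses 0.99) so that the floating-point arithmetic is exact.

```python
import numpy as np


def patches_to_mask(patches, threshold):
    """Map per-patch class probabilities of shape (h, w, 2) to a uint8 mask of shape (h, w)."""
    per_class = patches.transpose(2, 0, 1)      # (2, h, w): one map per class
    diff = per_class[1] - per_class[0]          # hot_dog minus not_hot_dog, in [-1, 1]
    clipped = np.clip(diff, threshold, 1.0)     # everything below the threshold collapses to it
    scaled = 255.0 * (clipped - threshold) / (1.0 - threshold)
    return scaled.astype(np.uint8)


# Hypothetical 2x2 patch map: (not_hot_dog, hot_dog) probabilities per cell.
patches = np.array([[[0.0, 1.0], [0.125, 0.875]],
                    [[0.5, 0.5], [1.0, 0.0]]])
print(patches_to_mask(patches, threshold=0.5))
```

The confident hot dog cell maps to 255, the 0.875 cell to 127, and the two remaining cells to 0. Those zero cells are the regions `Image.composite` fills from the darkened grayscale copy.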

ref

def patch_infer(img):

    # Resize to the network input size, scale to [0, 1] and add a batch axis.

    data = np.array(img.resize((img_size, img_size)))/255.0

    patches = patch_model.predict(data[np.newaxis])

    return patches

def overlay(img, patches, threshold=0.99):

    # transpose separates the patches by class.

    patches = patches[0].transpose(2, 0, 1)

    # hot_dog patches minus not_hot_dog patches

    patches = patches[1] - patches[0]

    # drop the ambiguous (low-confidence) patches

    patches = np.clip(patches, threshold, 1.0)

    patches = 255.0 * (patches - threshold) / (1.0 - threshold)

    # turn the numbers into an image

    patches = Image.fromarray(patches.astype(np.uint8)).resize(img.size, Image.BICUBIC)

    # convert the original image to a darkened grayscale copy

    grayscale = img.convert("L").convert("RGB").point(lambda p: p * 0.5)

    # use the patches as a mask to combine the original and grayscale images

    composite = Image.composite(img, grayscale, patches)

    return composite

def process_image(path, border=8):

    # Convert to RGB so the model input and Image.composite both get 3 channels.

    img = Image.open(path).convert("RGB")

    patches = patch_infer(img)

    result = overlay(img, patches)

    # place the original and the transformed image side by side on a canvas

    canvas = Image.new(

        mode="RGB",

        size=(img.width * 2 + border, img.height),

        color="white")

    canvas.paste(img, (0,0))

    canvas.paste(result, (img.width + border, 0))

    return canvas