tensorlfowに慣れてきたので、株価をdeeplearningで予測してみる

基準日を作り、その過去90日(営業日で)の株価とその30日後の株価を使って、株価が上がる可能性が高い銘柄を出す

30日後の正解データをその基準に対して、x%上がったか、下がったか、その間にいたかという3択にして、

分類問題として解くことにした。

まずは、隠れ層2つにして、必要な関数を作る

import tensorflow as tf

import numpy as np

sess = tf.InteractiveSession()

def inference(x_placeholder,SCORE_SIZE,NUM_HIDDEN1,NUM_OUTPUT,keep_prob):

with tf.name_scope('hidden1') as scope:

hidden1_weight = tf.Variable(tf.truncated_normal([SCORE_SIZE, NUM_HIDDEN1], stddev=0.1), name="hidden1_weight")

hidden1_bias = tf.Variable(tf.constant(0.1, shape=[NUM_HIDDEN1]), name="hidden1_bias")

mean1, variance1 = tf.nn.moments(x_placeholder,[0])

bn1 = tf.nn.batch_normalization(x_placeholder, mean1, variance1, None, None, 1e-5)

hidden1_output = tf.nn.relu(tf.matmul(bn1, hidden1_weight) + hidden1_bias)

with tf.name_scope('hidden2'):

hidden2_weight = tf.Variable(tf.truncated_normal([NUM_HIDDEN1, NUM_HIDDEN2], stddev=0.01), name='hidden2_weight')

hidden2_bias = tf.Variable(tf.constant(0.1, shape=[NUM_HIDDEN2]), name="hidden2_bias")

mean2, variance2 = tf.nn.moments(hidden1_output,[0])

bn2 = tf.nn.batch_normalization(hidden1_output, mean2, variance2, None, None, 1e-5)

hidden2_output = tf.nn.relu(tf.matmul(bn2, hidden2_weight) + hidden2_bias)

dropout = tf.nn.dropout(hidden1_output, keep_prob)

with tf.name_scope('output') as scope:

output_weight = tf.Variable(tf.truncated_normal([NUM_HIDDEN1, NUM_OUTPUT], stddev=0.1), name="output_weight")

output_bias = tf.Variable(tf.constant(0.1, shape=[NUM_OUTPUT]), name="output_bias")

mean_out, variance_out = tf.nn.moments(dropout,[0])

bn_out = tf.nn.batch_normalization(dropout, mean_out, variance_out, None, None, 1e-5)

output = tf.nn.softmax(tf.matmul(bn_out, output_weight) + output_bias)

return output

def loss(output, y_placeholder, loss_label_placeholder):

with tf.name_scope('loss') as scope:

loss = tf.reduce_mean(-tf.reduce_sum(y_placeholder * tf.log(tf.clip_by_value(output,1e-10,1.0)), reduction_indices=[1]))

return loss

def training(loss,rate):

with tf.name_scope('training') as scope:

train_step = tf.train.GradientDescentOptimizer(rate).minimize(loss)

return train_step

ここで、気をつけたのが、batch normalizationをしたほうが良いというので、すべての層に突っ込んでみた

隠れ層の数や学習率は適当、、、今後調整していく

実行!

rate=0.08

SCORE_SIZE=90

NUM_OUTPUT=3

NUM_HIDDEN1=90

NUM_HIDDEN2=45

y_placeholder = tf.placeholder("float", [None, NUM_OUTPUT], name="y_placeholder")

x_placeholder = tf.placeholder("float", [None, SCORE_SIZE], name="x_placeholder")

loss_label_placeholder = tf.placeholder("string", name="loss_label_placeholder")

keep_prob = tf.placeholder("float")

output = inference(x_placeholder,SCORE_SIZE,NUM_HIDDEN1,NUM_OUTPUT,keep_prob)

loss1 = loss(output, y_placeholder, loss_label_placeholder)

training_op = training(loss1,rate)

correct_prediction = tf.equal(tf.argmax(output,1), tf.argmax(y_placeholder,1))

accuracy = tf.reduce_mean(tf.cast(correct_prediction, tf.float32))

best_loss = float("inf")

sess.run(tf.initialize_all_variables())

for step in range(1001):

x_train,y_train,c_train=train_data_make(SCORE_SIZE,200)

feed_dict_train={y_placeholder: y_train,x_placeholder: x_train,keep_prob: 0.8}

feed_dict_test={y_placeholder: y_test,x_placeholder: x_test, keep_prob: 1.0}

if step%100 == 0:print "size %d,step %d,rate %g loss %g,test accuracy %g"%(num_long,step,rate,loss1.eval(feed_dict=feed_dict_train),accuracy.eval(feed_dict=feed_dict_test))

sess.run(training_op, feed_dict=feed_dict_train)

# test_result

print "final_result : size %d,step %d, test accuracy %g"%(num_long,step,accuracy.eval(feed_dict=feed_dict_test))

結果、9割当てれている、、、、まあ、1ヶ月では、株価が変わらない株がほとんどだから、変化無しによっているのかも。というかテストデータの9割が、変化無しに分類されるものだった、、、

size 90,step 0,rate 0.08 loss 1.31044,test accuracy 0.257594

size 90,step 100,rate 0.08 loss 0.369047,test accuracy 0.91373

size 90,90,step 200,rate 0.08 loss 0.249306,test accuracy 0.90887

・・・

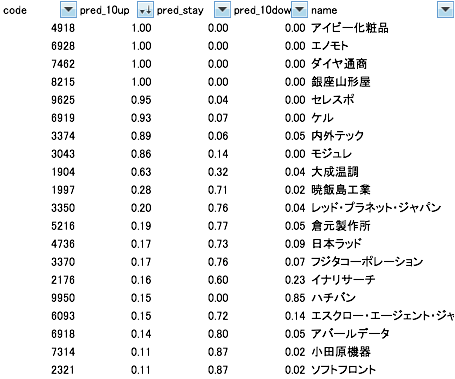

ちょっと、それぞれの株に対して、どのような数値が出ているのかを見てみた。

やっぱり、基本的には、変わらないという予想が多かったが、、、、

あれ?上がる可能性が高い順に並べてみると、そう予想されている銘柄もある

株価が上がる前の独特な動きでもあるのかな?

しばらく様子を見て、本当に上がるのか観察していきたい。

以下によると、バッチサイズはもっと小さくしたほうがいいみたい

http://postd.cc/26-things-i-learned-in-the-deep-learning-summer-school/

(株への投資はご自身の責任で行いください。等記事は一切の責任を持ちません)