Introduction

I am working through the following book using Google Colaboratory.

https://www.oreilly.co.jp/books/9784873118345/

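Chapter 9, "Up and Running with TensorFlow," introduces TensorBoard. I wanted to run that entirely inside Colaboratory as well, so I looked into how to do it.

Implementation

This time I use the code from the "Using TensorBoard" part of the notebook below, which corresponds to section 9.9, "Visualizing the Graph and Training Curves Using TensorBoard" (p. 240), pieced together so that it runs on its own.

https://github.com/ageron/handson-ml/blob/master/09_up_and_running_with_tensorflow.ipynb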

import tensorflow as tf  # TensorFlow 1.x API (tf.placeholder, tf.Session)

from sklearn.datasets import fetch_california_housing

from datetime import datetime

import numpy as np

from sklearn.preprocessing import StandardScaler

housing = fetch_california_housing()

m, n = housing.data.shape

scaler = StandardScaler()

scaled_housing_data = scaler.fit_transform(housing.data)

scaled_housing_data_plus_bias = np.c_[np.ones((m, 1)), scaled_housing_data]

n_epochs = 1000  # overridden by n_epochs = 10 further down

learning_rate = 0.01

X = tf.placeholder(tf.float32, shape=(None, n + 1), name="X")

y = tf.placeholder(tf.float32, shape=(None, 1), name="y")

theta = tf.Variable(tf.random_uniform([n + 1, 1], -1.0, 1.0, seed=42), name="theta")

y_pred = tf.matmul(X, theta, name="predictions")

error = y_pred - y

mse = tf.reduce_mean(tf.square(error), name="mse")

optimizer = tf.train.GradientDescentOptimizer(learning_rate=learning_rate)

training_op = optimizer.minimize(mse)

init = tf.global_variables_initializer()

now = datetime.utcnow().strftime("%Y%m%d%H%M%S")

root_logdir = "tf_logs"

logdir = "{}/run-{}/".format(root_logdir, now)

mse_summary = tf.summary.scalar('MSE', mse)

file_writer = tf.summary.FileWriter(logdir, tf.get_default_graph())

n_epochs = 10

batch_size = 100

n_batches = int(np.ceil(m / batch_size))

def fetch_batch(epoch, batch_index, batch_size):
    np.random.seed(epoch * n_batches + batch_index)  # not shown in the book
    indices = np.random.randint(m, size=batch_size)  # not shown
    X_batch = scaled_housing_data_plus_bias[indices]  # not shown
    y_batch = housing.target.reshape(-1, 1)[indices]  # not shown
    return X_batch, y_batch

with tf.Session() as sess:
    sess.run(init)

    for epoch in range(n_epochs):
        for batch_index in range(n_batches):
            X_batch, y_batch = fetch_batch(epoch, batch_index, batch_size)
            if batch_index % 10 == 0:
                summary_str = mse_summary.eval(feed_dict={X: X_batch, y: y_batch})
                step = epoch * n_batches + batch_index
                file_writer.add_summary(summary_str, step)
            sess.run(training_op, feed_dict={X: X_batch, y: y_batch})

    best_theta = theta.eval()

file_writer.close()

best_theta
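Before starting TensorBoard, it can help to confirm that the run above actually wrote an event file. The following is a minimal sketch and not part of the book's code: `list_event_files` is a hypothetical helper name, and it assumes the writer used TensorBoard's usual `events.out.tfevents.*` file naming somewhere under `root_logdir`.

```python
import glob
import os


def list_event_files(root_logdir):
    """Return sorted paths of TensorBoard event files found under root_logdir."""
    # Assumption: event files are named "events.out.tfevents.<timestamp>.<hostname>".
    pattern = os.path.join(root_logdir, "**", "events.out.tfevents.*")
    # recursive=True lets "**" match any depth, including run-<timestamp>/ subfolders.
    return sorted(glob.glob(pattern, recursive=True))


if __name__ == "__main__":
    # "tf_logs" matches root_logdir in the training code above.
    for path in list_event_files("tf_logs"):
        print(path, os.path.getsize(path), "bytes")
```

An empty result suggests the writer was never closed or flushed, or that `root_logdir` points somewhere else.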

Installing localtunnel

! npm install -g localtunnel

https://github.com/localtunnel/localtunnel

localtunnel is a tool that exposes a local server to the internet under an arbitrarily assigned URL.

A similar service called ngrok is also available.

https://ngrok.com/

Either one should work.

Running localtunnel

get_ipython().system_raw(
    'tensorboard --logdir {} --host 0.0.0.0 --port 6006 &'
    .format(logdir)
)

get_ipython().system_raw('lt --port 6006 >> url.txt 2>&1 &')

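The first command starts TensorBoard in the background, pointing it at the directory that contains the logs (logdir). The second opens a tunnel to port 6006 and appends localtunnel's output to url.txt.

Opening the URL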
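Because both commands are launched in the background, nothing reports whether TensorBoard has actually come up. One way to check is to poll the port until a TCP connection succeeds. This is only a sketch: `wait_for_port` is a hypothetical helper, and the timeout values are arbitrary choices rather than anything TensorBoard or localtunnel requires.

```python
import socket
import time


def wait_for_port(port, host="127.0.0.1", timeout=30.0, interval=0.5):
    """Return True once a TCP connection to host:port succeeds, False on timeout."""
    deadline = time.monotonic() + timeout
    while True:
        try:
            # A successful connect means some process is listening on the port.
            with socket.create_connection((host, port), timeout=interval):
                return True
        except OSError:
            # Connection refused or timed out: retry until the deadline passes.
            if time.monotonic() >= deadline:
                return False
            time.sleep(interval)
```

Calling `wait_for_port(6006)` in a cell right after launching TensorBoard returns `True` as soon as something is listening on that port. It does not verify that the listener is TensorBoard itself.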

!cat url.txt

-> your url is: https://****.localtunnel.me
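The tunnel can take a moment to come up, so url.txt may still be empty when it is first read. As a rough sketch, the file can be polled and the URL extracted with a regular expression. `read_tunnel_url` is a hypothetical helper, and the pattern assumes localtunnel keeps printing a line of the form `your url is: https://...` as shown above.

```python
import re
import time

# Assumption: localtunnel prints "your url is: <URL>" once the tunnel is ready.
URL_PATTERN = re.compile(r"your url is:\s*(https?://\S+)")


def read_tunnel_url(path="url.txt", timeout=10.0, interval=0.5):
    """Poll the file at path until a tunnel URL appears; return it, or None on timeout."""
    deadline = time.monotonic() + timeout
    while True:
        try:
            with open(path) as f:
                matches = URL_PATTERN.findall(f.read())
        except FileNotFoundError:
            matches = []
        if matches:
            # The file is appended to (>>), so the last match is the newest tunnel.
            return matches[-1]
        if time.monotonic() >= deadline:
            return None
        time.sleep(interval)
```

Taking the last match matters when the cell has been run more than once, since each run appends a new URL to the same file.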

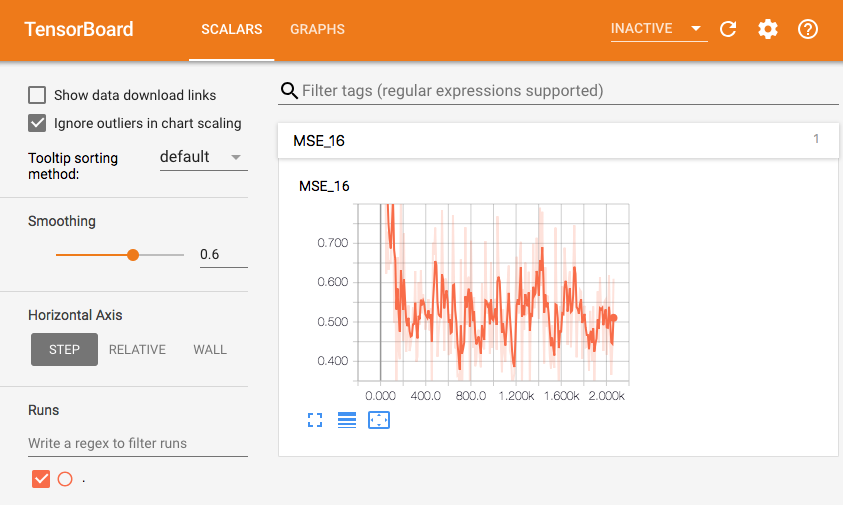

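Result

That's all.

The URL returns a 404 once the Colaboratory instance has shut down.

Caveats

With localtunnel, anyone who knows the URL can view the page.

For that reason, I would avoid using it for anything other than practice.

Try it at your own risk.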