This article is day 15 of the [Wano Group Advent Calendar](https://qiita.com/advent-calendar/2019/wano-group).

What is rclone

rclone is a handy tool for working with many different cloud storage services from the command line.

https://github.com/rclone/rclone

Installation

With Homebrew

brew install rclone
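
After installing, it can be useful to confirm that the binary is actually on the PATH. The following is a minimal sketch; `check_rclone` is a hypothetical helper name introduced here, and it relies only on the `rclone version` subcommand.

```shell
# Hypothetical helper: print the installed rclone version,
# or a hint on stderr if rclone cannot be found on PATH.
check_rclone() {
  if command -v rclone >/dev/null 2>&1; then
    rclone version
  else
    echo "rclone not found on PATH" >&2
    return 1
  fi
}

# Usage (not run here):
#   check_rclone
```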

Using a prebuilt binary

Installing from source

Configuration

rclone config

2019/12/09 11:42:44 NOTICE: Config file "/Users/xxxx/.config/rclone/rclone.conf" not found - using defaults

No remotes found - make a new one

n) New remote

s) Set configuration password

q) Quit config

n/s/q> n

name> gdrive

This is a new remote, so choose n first and then enter a name.
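
The NOTICE line in the output above shows where rclone looks for its config file. As a small sketch, the path can also be printed on demand with `rclone config file`; `rclone_config_path` is a hypothetical helper name, and it assumes the path is the last line of that command's output.

```shell
# Hypothetical helper: print only the path of the active rclone config file.
# `rclone config file` prints a header line followed by the path,
# so the last line of output is taken as the path (an assumption).
rclone_config_path() {
  rclone config file | tail -n 1
}

# Usage (not run here):
#   rclone_config_path
```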

Type of storage to configure.

Enter a string value. Press Enter for the default ("").

Choose a number from below, or type in your own value

1 / 1Fichier

\ "fichier"

2 / Alias for an existing remote

\ "alias"

3 / Amazon Drive

\ "amazon cloud drive"

4 / Amazon S3 Compliant Storage Provider (AWS, Alibaba, Ceph, Digital Ocean, Dreamhost, IBM COS, Minio, etc)

\ "s3"

5 / Backblaze B2

\ "b2"

6 / Box

\ "box"

7 / Cache a remote

\ "cache"

8 / Citrix Sharefile

\ "sharefile"

9 / Dropbox

\ "dropbox"

10 / Encrypt/Decrypt a remote

\ "crypt"

11 / FTP Connection

\ "ftp"

12 / Google Cloud Storage (this is not Google Drive)

\ "google cloud storage"

13 / Google Drive

\ "drive"

14 / Google Photos

\ "google photos"

15 / Hubic

\ "hubic"

16 / JottaCloud

\ "jottacloud"

17 / Koofr

\ "koofr"

18 / Local Disk

\ "local"

19 / Mail.ru Cloud

\ "mailru"

20 / Mega

\ "mega"

21 / Microsoft Azure Blob Storage

\ "azureblob"

22 / Microsoft OneDrive

\ "onedrive"

23 / OpenDrive

\ "opendrive"

24 / Openstack Swift (Rackspace Cloud Files, Memset Memstore, OVH)

\ "swift"

25 / Pcloud

\ "pcloud"

26 / Put.io

\ "putio"

27 / QingCloud Object Storage

\ "qingstor"

28 / SSH/SFTP Connection

\ "sftp"

29 / Transparently chunk/split large files

\ "chunker"

30 / Union merges the contents of several remotes

\ "union"

31 / Webdav

\ "webdav"

32 / Yandex Disk

\ "yandex"

33 / http Connection

\ "http"

34 / premiumize.me

\ "premiumizeme"

Storage> 13

Next, choose the remote type (34 types were available as of December 2019). We start with Google Drive.

Google Application Client Id

Setting your own is recommended.

See https://rclone.org/drive/#making-your-own-client-id for how to create your own.

If you leave this blank, it will use an internal key which is low performance.

Enter a string value. Press Enter for the default ("").

client_id>

Google Application Client Secret

Setting your own is recommended.

Enter a string value. Press Enter for the default ("").

client_secret>

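client_id and client_secret can be left blank, so for now just press Enter twice. As the prompt notes, rclone then uses its internal key, which is lower performance.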

Scope that rclone should use when requesting access from drive.

Enter a string value. Press Enter for the default ("").

Choose a number from below, or type in your own value

1 / Full access all files, excluding Application Data Folder.

\ "drive"

2 / Read-only access to file metadata and file contents.

\ "drive.readonly"

/ Access to files created by rclone only.

3 | These are visible in the drive website.

| File authorization is revoked when the user deauthorizes the app.

\ "drive.file"

/ Allows read and write access to the Application Data folder.

4 | This is not visible in the drive website.

\ "drive.appfolder"

/ Allows read-only access to file metadata but

5 | does not allow any access to read or download file content.

\ "drive.metadata.readonly"

scope> 2

This sets the access level for Google Drive. Drive is the copy source this time, so we choose 2 (read-only); pick whichever scope fits your use case.

ID of the root folder

Leave blank normally.

Fill in to access "Computers" folders (see docs), or for rclone to use

a non root folder as its starting point.

Note that if this is blank, the first time rclone runs it will fill it

in with the ID of the root folder.

Enter a string value. Press Enter for the default ("").

root_folder_id>

Service Account Credentials JSON file path

Leave blank normally.

Needed only if you want use SA instead of interactive login.

Enter a string value. Press Enter for the default ("").

service_account_file>

Leave root_folder_id and service_account_file blank as well for now: press Enter twice.

Edit advanced config? (y/n)

y) Yes

n) No

y/n> n

We skip the advanced settings this time.

Remote config

Use auto config?

* Say Y if not sure

* Say N if you are working on a remote or headless machine

y) Yes

n) No

y/n> n

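This asks whether to use auto config for the authorization step.

This time we choose n and authorize through the browser using the link and verification code that rclone prints.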

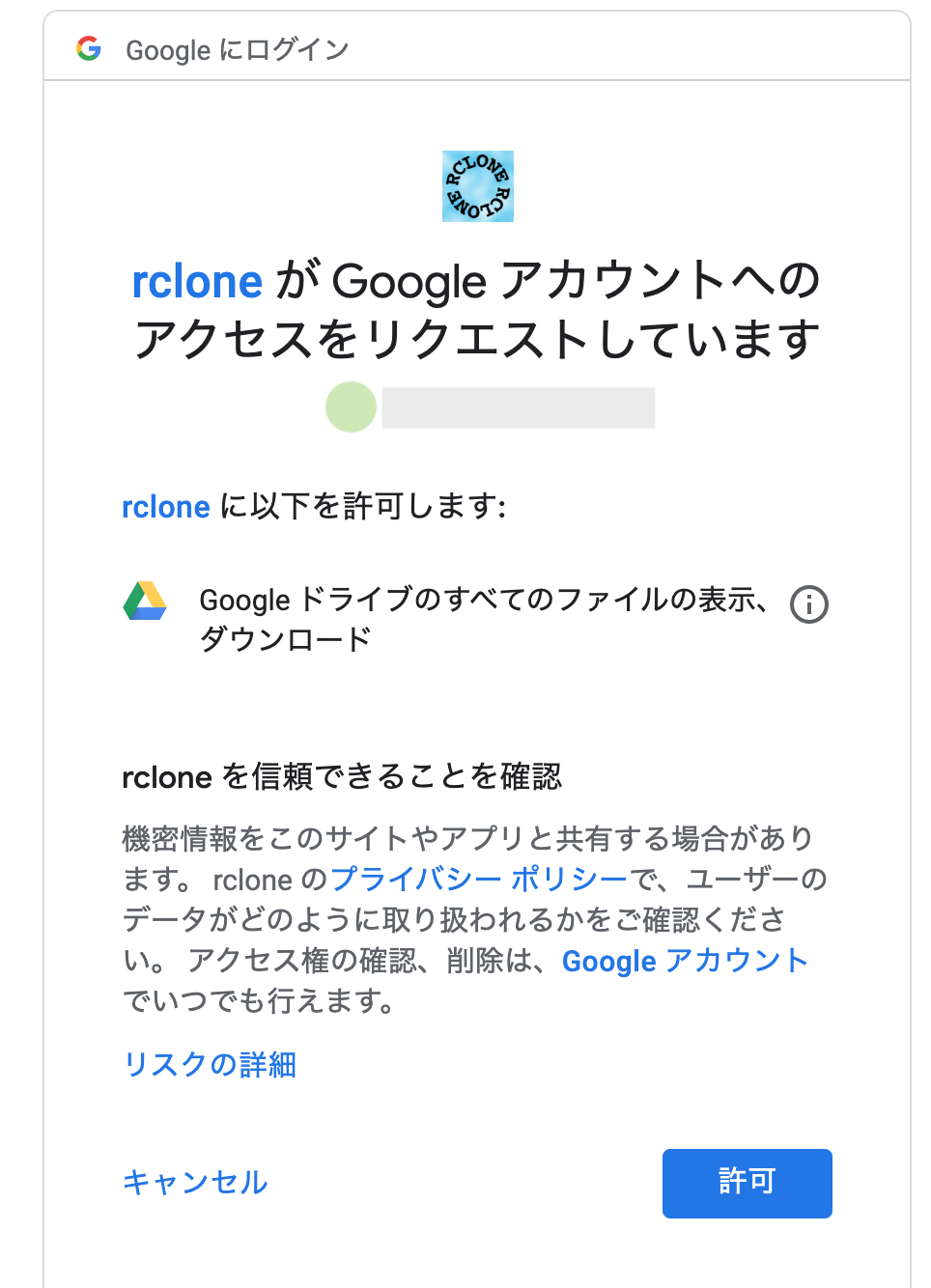

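At this point the browser shows the Google sign-in page (if it does not open automatically, open the link that rclone prints). Sign in.

After signing in, a screen asks you to grant access; click "Allow".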

If your browser doesn't open automatically go to the following link:

xxxxx

Log in and authorize rclone for access

Enter verification code> XXXXXXXXXXXX

Copy the code shown in the browser and paste it at the prompt.

Configure this as a team drive?

y) Yes

n) No

y/n> n

We skip the team drive setting for now (choose n).

[gdrive]

type = drive

scope = drive.readonly

token = {"access_token":"xxx"}

--------------------

y) Yes this is OK

e) Edit this remote

d) Delete this remote

y/e/d> y

Finally, review the settings and choose y if everything is correct.
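
Once the remote is saved, its presence can also be checked from a script. This is a minimal sketch: `remote_exists` is a hypothetical helper name, and it relies on `rclone listremotes` printing one remote per line with a trailing colon.

```shell
# Hypothetical helper: succeeds if a remote with the given name is configured.
# `rclone listremotes` prints one remote per line, each ending in ":".
remote_exists() {
  rclone listremotes | grep -qxF "$1:"
}

# Usage (not run here):
#   remote_exists gdrive && echo "gdrive is configured"
```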

Current remotes:

Name Type

==== ====

gdrive drive

e) Edit existing remote

n) New remote

d) Delete remote

r) Rename remote

c) Copy remote

s) Set configuration password

q) Quit config

e/n/d/r/c/s/q> n

name> aws_s3

Type of storage to configure.

Enter a string value. Press Enter for the default ("").

Choose a number from below, or type in your own value

1 / 1Fichier

\ "fichier"

2 / Alias for an existing remote

\ "alias"

3 / Amazon Drive

\ "amazon cloud drive"

4 / Amazon S3 Compliant Storage Provider (AWS, Alibaba, Ceph, Digital Ocean, Dreamhost, IBM COS, Minio, etc)

\ "s3"

(remaining options omitted)

.

.

.

Storage> 4

Next comes the AWS setup. Choose n (New remote) again and enter a name and remote type in the same way, this time choosing 4.

** See help for s3 backend at: https://rclone.org/s3/ **

Choose your S3 provider.

Enter a string value. Press Enter for the default ("").

Choose a number from below, or type in your own value

1 / Amazon Web Services (AWS) S3

\ "AWS"

2 / Alibaba Cloud Object Storage System (OSS) formerly Aliyun

\ "Alibaba"

3 / Ceph Object Storage

\ "Ceph"

4 / Digital Ocean Spaces

\ "DigitalOcean"

5 / Dreamhost DreamObjects

\ "Dreamhost"

6 / IBM COS S3

\ "IBMCOS"

7 / Minio Object Storage

\ "Minio"

8 / Netease Object Storage (NOS)

\ "Netease"

9 / Wasabi Object Storage

\ "Wasabi"

10 / Any other S3 compatible provider

\ "Other"

provider> 1

For provider, choose 1 (AWS).

Get AWS credentials from runtime (environment variables or EC2/ECS meta data if no env vars).

Only applies if access_key_id and secret_access_key is blank.

Enter a boolean value (true or false). Press Enter for the default ("false").

Choose a number from below, or type in your own value

1 / Enter AWS credentials in the next step

\ "false"

2 / Get AWS credentials from the environment (env vars or IAM)

\ "true"

env_auth> 1

AWS Access Key ID.

Leave blank for anonymous access or runtime credentials.

Enter a string value. Press Enter for the default ("").

access_key_id> xxxxxxx

AWS Secret Access Key (password)

Leave blank for anonymous access or runtime credentials.

Enter a string value. Press Enter for the default ("").

secret_access_key> xxxxxxx

Enter the AWS credentials. If a credentials file (~/.aws/credentials) already exists, option 2 is fine; this time we choose 1

and enter access_key_id and secret_access_key.
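
For comparison, option 2 (env_auth = true) takes the credentials from the runtime environment instead of storing them in rclone.conf. A minimal sketch using the standard AWS environment variables follows; the values are placeholders, not real credentials.

```shell
# Placeholder values only; substitute your own key pair.
export AWS_ACCESS_KEY_ID="xxxxxxx"
export AWS_SECRET_ACCESS_KEY="xxxxxxx"

# With env_auth set to true for the remote, and access_key_id /
# secret_access_key left blank, rclone reads the variables above.
# Usage (not run here):
#   rclone lsd aws_s3:
```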

Region to connect to.

Enter a string value. Press Enter for the default ("").

Choose a number from below, or type in your own value

/ The default endpoint - a good choice if you are unsure.

1 | US Region, Northern Virginia or Pacific Northwest.

| Leave location constraint empty.

\ "us-east-1"

/ US East (Ohio) Region

2 | Needs location constraint us-east-2.

\ "us-east-2"

/ US West (Oregon) Region

3 | Needs location constraint us-west-2.

\ "us-west-2"

/ US West (Northern California) Region

4 | Needs location constraint us-west-1.

\ "us-west-1"

/ Canada (Central) Region

5 | Needs location constraint ca-central-1.

\ "ca-central-1"

/ EU (Ireland) Region

6 | Needs location constraint EU or eu-west-1.

\ "eu-west-1"

/ EU (London) Region

7 | Needs location constraint eu-west-2.

\ "eu-west-2"

/ EU (Stockholm) Region

8 | Needs location constraint eu-north-1.

\ "eu-north-1"

/ EU (Frankfurt) Region

9 | Needs location constraint eu-central-1.

\ "eu-central-1"

/ Asia Pacific (Singapore) Region

10 | Needs location constraint ap-southeast-1.

\ "ap-southeast-1"

/ Asia Pacific (Sydney) Region

11 | Needs location constraint ap-southeast-2.

\ "ap-southeast-2"

/ Asia Pacific (Tokyo) Region

12 | Needs location constraint ap-northeast-1.

\ "ap-northeast-1"

/ Asia Pacific (Seoul)

13 | Needs location constraint ap-northeast-2.

\ "ap-northeast-2"

/ Asia Pacific (Mumbai)

14 | Needs location constraint ap-south-1.

\ "ap-south-1"

/ South America (Sao Paulo) Region

15 | Needs location constraint sa-east-1.

\ "sa-east-1"

region> 12

Choose the region that suits your setup; this time we choose 12 (Tokyo).

Endpoint for S3 API.

Leave blank if using AWS to use the default endpoint for the region.

Enter a string value. Press Enter for the default ("").

endpoint>

Location constraint - must be set to match the Region.

Used when creating buckets only.

Enter a string value. Press Enter for the default ("").

Choose a number from below, or type in your own value

1 / Empty for US Region, Northern Virginia or Pacific Northwest.

\ ""

2 / US East (Ohio) Region.

\ "us-east-2"

3 / US West (Oregon) Region.

\ "us-west-2"

4 / US West (Northern California) Region.

\ "us-west-1"

5 / Canada (Central) Region.

\ "ca-central-1"

6 / EU (Ireland) Region.

\ "eu-west-1"

7 / EU (London) Region.

\ "eu-west-2"

8 / EU (Stockholm) Region.

\ "eu-north-1"

9 / EU Region.

\ "EU"

10 / Asia Pacific (Singapore) Region.

\ "ap-southeast-1"

11 / Asia Pacific (Sydney) Region.

\ "ap-southeast-2"

12 / Asia Pacific (Tokyo) Region.

\ "ap-northeast-1"

13 / Asia Pacific (Seoul)

\ "ap-northeast-2"

14 / Asia Pacific (Mumbai)

\ "ap-south-1"

15 / South America (Sao Paulo) Region.

\ "sa-east-1"

location_constraint> 12

Choose the location_constraint that matches the region; this time we again choose 12 (Tokyo).

Canned ACL used when creating buckets and storing or copying objects.

This ACL is used for creating objects and if bucket_acl isn't set, for creating buckets too.

For more info visit https://docs.aws.amazon.com/AmazonS3/latest/dev/acl-overview.html#canned-acl

Note that this ACL is applied when server side copying objects as S3

doesn't copy the ACL from the source but rather writes a fresh one.

Enter a string value. Press Enter for the default ("").

Choose a number from below, or type in your own value

1 / Owner gets FULL_CONTROL. No one else has access rights (default).

\ "private"

2 / Owner gets FULL_CONTROL. The AllUsers group gets READ access.

\ "public-read"

/ Owner gets FULL_CONTROL. The AllUsers group gets READ and WRITE access.

3 | Granting this on a bucket is generally not recommended.

\ "public-read-write"

4 / Owner gets FULL_CONTROL. The AuthenticatedUsers group gets READ access.

\ "authenticated-read"

/ Object owner gets FULL_CONTROL. Bucket owner gets READ access.

5 | If you specify this canned ACL when creating a bucket, Amazon S3 ignores it.

\ "bucket-owner-read"

/ Both the object owner and the bucket owner get FULL_CONTROL over the object.

6 | If you specify this canned ACL when creating a bucket, Amazon S3 ignores it.

\ "bucket-owner-full-control"

acl>

The server-side encryption algorithm used when storing this object in S3.

Enter a string value. Press Enter for the default ("").

Choose a number from below, or type in your own value

1 / None

\ ""

2 / AES256

\ "AES256"

3 / aws:kms

\ "aws:kms"

server_side_encryption>

If using KMS ID you must provide the ARN of Key.

Enter a string value. Press Enter for the default ("").

Choose a number from below, or type in your own value

1 / None

\ ""

2 / arn:aws:kms:*

\ "arn:aws:kms:us-east-1:*"

sse_kms_key_id>

Next are acl, server_side_encryption, and sse_kms_key_id; leave them at their defaults for now.

The storage class to use when storing new objects in S3.

Enter a string value. Press Enter for the default ("").

Choose a number from below, or type in your own value

1 / Default

\ ""

2 / Standard storage class

\ "STANDARD"

3 / Reduced redundancy storage class

\ "REDUCED_REDUNDANCY"

4 / Standard Infrequent Access storage class

\ "STANDARD_IA"

5 / One Zone Infrequent Access storage class

\ "ONEZONE_IA"

6 / Glacier storage class

\ "GLACIER"

7 / Glacier Deep Archive storage class

\ "DEEP_ARCHIVE"

8 / Intelligent-Tiering storage class

\ "INTELLIGENT_TIERING"

storage_class>

Edit advanced config? (y/n)

y) Yes

n) No

y/n> n

Last are storage_class and the advanced config; leave these as they are for now.

Remote config

--------------------

[aws_s3]

type = s3

provider = AWS

access_key_id = xxxxxxxxxxxxx

secret_access_key = xxxxxxxxxxxxxxxxxxxxxxxxxx

region = ap-northeast-1

--------------------

y) Yes this is OK

e) Edit this remote

d) Delete this remote

y/e/d> y

Once you have confirmed the settings, the setup is complete. Let's check it right away.

rclone ls gdrive:

209162 testaws/testfile.png

-1 xxxx

219015 xxxxxx

24349627 xxxxxxxxxxxxx

rclone ls aws_s3:

227 xxxxxxxxxxxxx

15 xxxxxx
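
`rclone ls` lists every object recursively, which can be slow on large remotes. As a lighter check, `rclone lsd` lists only the top-level directories (buckets, in the case of S3). The sketch below wraps it in a hypothetical helper, `list_top_level`, that accepts any number of remotes.

```shell
# Hypothetical helper: list the top-level directories (or buckets)
# of each remote passed as an argument.
list_top_level() {
  for remote in "$@"; do
    rclone lsd "$remote"
  done
}

# Usage (not run here):
#   list_top_level gdrive: aws_s3:
```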

Copying looks like this:

rclone -P copy gdrive:tmp_folder/testfile.jpg aws_s3:/tmp-bucket
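
Before running a real copy, it may be worth previewing what would be transferred. The sketch below uses rclone's `--dry-run` flag, which reports the planned operations without writing anything; `preview_copy` is a hypothetical helper name.

```shell
# Hypothetical helper: show what `rclone copy` would transfer
# from source ($1) to destination ($2) without copying anything.
preview_copy() {
  rclone copy --dry-run "$1" "$2"
}

# Usage (not run here), with the same paths as the command above:
#   preview_copy gdrive:tmp_folder/testfile.jpg aws_s3:/tmp-bucket
```

If the preview looks right, run the original command; `-P` shows transfer progress while it copies.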