目的

Titanicコンペで素振りをした後、もうひと素振りしてみる用。

データ分析・探索編:その1はコチラ

参考にさせていただいたのは、こちらのNotebook

https://www.kaggle.com/gpreda/santander-eda-and-prediction

特徴量エンジニアリング

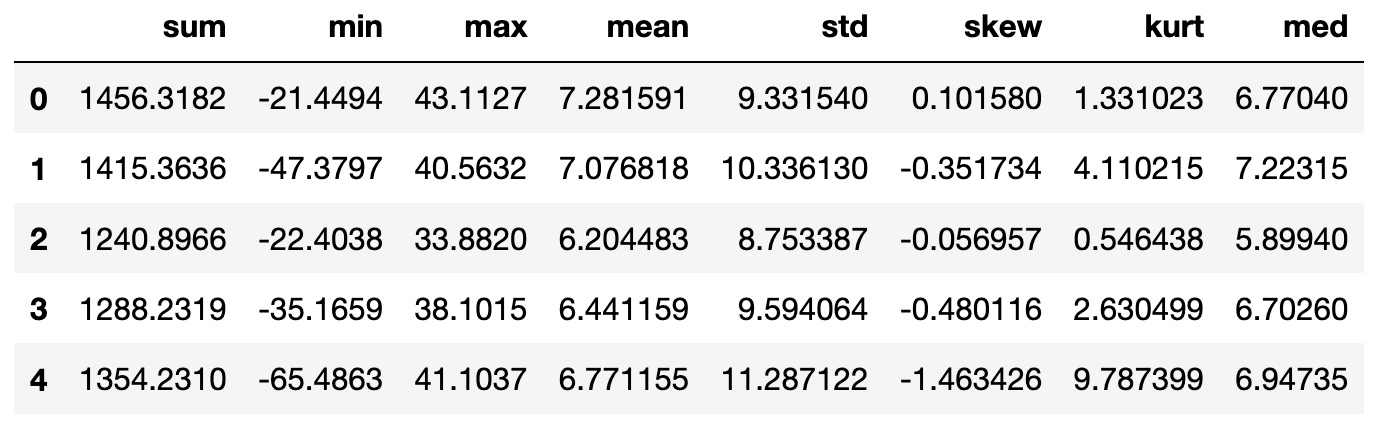

各統計量を特徴量として追加する

var_XXについて

sum:合計値

min:最小値

max:最大値

mean:平均

std:標準偏差

skew:歪度

kurt:尖度

med:中央値

idx = features = train_df.columns.values[2:202]

for df in [test_df, train_df]:

df['sum'] = df[idx].sum(axis=1)

df['min'] = df[idx].min(axis=1)

df['max'] = df[idx].max(axis=1)

df['mean'] = df[idx].mean(axis=1)

df['std'] = df[idx].std(axis=1)

df['skew'] = df[idx].skew(axis=1)

df['kurt'] = df[idx].kurtosis(axis=1)

df['med'] = df[idx].median(axis=1)

実際に値を確認してみる

train_df[train_df.columns[202:]].head()

test_df[test_df.columns[201:]].head()

これらの新しい特徴量の分布を可視化してみる。

Targetの値ごとで確認してみる

Seabornのkdeplotで可視化してみる。

def plot_new_feature_distribution(df1, df2, label1, label2, features):

i = 0

sns.set_style('whitegrid')

plt.figure()

fig, ax = plt.subplots(2,4,figsize=(18,8))

for feature in features:

i += 1

plt.subplot(2,4,i)

sns.kdeplot(df1[feature], bw=0.5,label=label1)

sns.kdeplot(df2[feature], bw=0.5,label=label2)

plt.xlabel(feature, fontsize=11)

locs, labels = plt.xticks()

plt.tick_params(axis='x', which='major', labelsize=8)

plt.tick_params(axis='y', which='major', labelsize=8)

plt.show();

t0 = train_df.loc[train_df['target'] == 0]

t1 = train_df.loc[train_df['target'] == 1]

features = train_df.columns.values[202:]

plot_new_feature_distribution(t0, t1, 'target: 0', 'target: 1', features)

次は、trainデータとtestデータ毎で

features = train_df.columns.values[202:]

plot_new_feature_distribution(train_df, test_df, 'train', 'test', features)

※これらの追加した特徴量はまだWorking Nowとのことでいくつかは削除されるかもしれないらしい。Try and Errorかな。

features = [c for c in train_df.columns if c not in ['ID_code', 'target']]

for feature in features:

train_df['r2_'+feature] = np.round(train_df[feature], 2)

test_df['r2_'+feature] = np.round(test_df[feature], 2)

train_df['r1_'+feature] = np.round(train_df[feature], 1)

test_df['r1_'+feature] = np.round(test_df[feature], 1)

モデリング

LightGBMで学習・評価している。

学習で用いる特徴量と目的変数をそれぞれ指定する

features = [c for c in train_df.columns if c not in ['ID_code', 'target']]

target = train_df['target']

続いてLightGBMで用いるハイパーパラメータを指定

param = {

'bagging_freq': 5,

'bagging_fraction': 0.4,

'boost_from_average':'false',

'boost': 'gbdt',

'feature_fraction': 0.05,

'learning_rate': 0.01,

'max_depth': -1,

'metric':'auc',

'min_data_in_leaf': 80,

'min_sum_hessian_in_leaf': 10.0,

'num_leaves': 13,

'num_threads': 8,

'tree_learner': 'serial',

'objective': 'binary',

'verbosity': 1

}

KFoldのCross Validationでデータ分割

それぞれのターンで、トレーニング、バリデーションデータのそれぞれの精度(auc)を算出。

folds = StratifiedKFold(n_splits=10, shuffle=False, random_state=44000)

oof = np.zeros(len(train_df))

predictions = np.zeros(len(test_df))

feature_importance_df = pd.DataFrame()

for fold_, (trn_idx, val_idx) in enumerate(folds.split(train_df.values, target.values)):

print("Fold {}".format(fold_))

trn_data = lgb.Dataset(train_df.iloc[trn_idx][features], label=target.iloc[trn_idx])

val_data = lgb.Dataset(train_df.iloc[val_idx][features], label=target.iloc[val_idx])

num_round = 1000000

clf = lgb.train(param, trn_data, num_round, valid_sets = [trn_data, val_data], verbose_eval=1000, early_stopping_rounds = 3000)

oof[val_idx] = clf.predict(train_df.iloc[val_idx][features], num_iteration=clf.best_iteration)

fold_importance_df = pd.DataFrame()

fold_importance_df["Feature"] = features

fold_importance_df["importance"] = clf.feature_importance()

fold_importance_df["fold"] = fold_ + 1

feature_importance_df = pd.concat([feature_importance_df, fold_importance_df], axis=0)

predictions += clf.predict(test_df[features], num_iteration=clf.best_iteration) / folds.n_splits

print("CV score: {:<8.5f}".format(roc_auc_score(target, oof)))```

次に特徴量の重要度について可視化する

cols = (feature_importance_df[["Feature", "importance"]]

.groupby("Feature")

.mean()

.sort_values(by="importance", ascending=False)[:150].index)

best_features = feature_importance_df.loc[feature_importance_df.Feature.isin(cols)]

plt.figure(figsize=(14,28))

sns.barplot(x="importance", y="Feature", data=best_features.sort_values(by="importance",ascending=False))

plt.title('Features importance (averaged/folds)')

plt.tight_layout()

plt.savefig('FI.png')

サブミッション

sub_df = pd.DataFrame({"ID_code":test_df["ID_code"].values})

sub_df["target"] = predictions

sub_df.to_csv("submission.csv", index=False)

作成されたcsvをKaggleに投稿