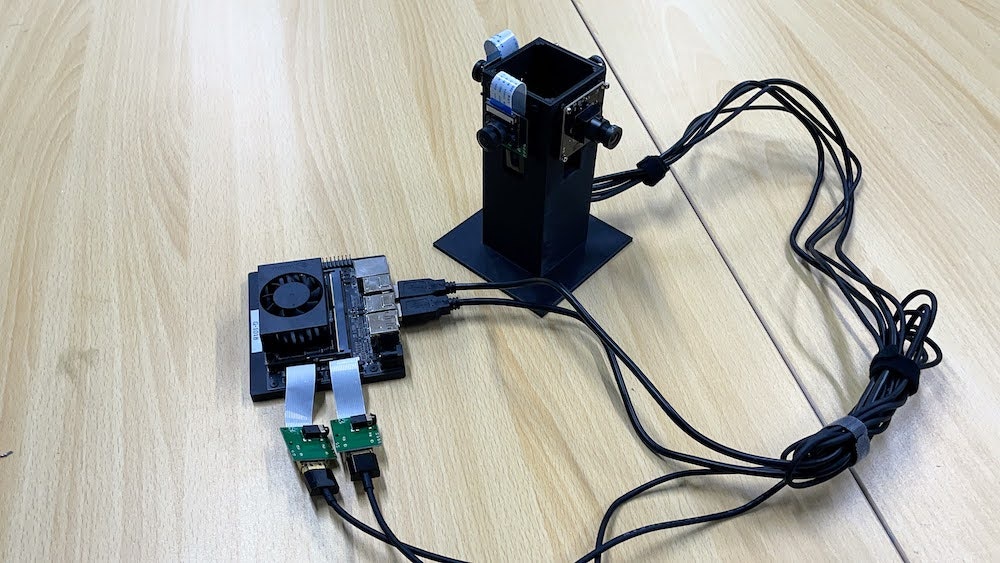

A summary of how to capture video from four cameras on a standard Jetson Nano (4GB) Developer Kit or a Jetson Xavier NX Developer Kit and process all four streams with DeepStream.

Supported devices

- Jetson Nano (4GB)

- Jetson Xavier NX

Creating the image

Flash JetPack 4.5.1 onto an SD card.

https://developer.nvidia.com/jetpack-sdk-45-archive

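Setting up Docker

Start the DeepStream L4T container (`nvcr.io/nvidia/deepstream-l4t`). The options pass the following through to the container:

- the X11 socket and `DISPLAY`, for on-screen output;
- `/tmp/argus_socket`, for the CSI cameras (Argus);
- `/dev` together with `--privileged`, for the USB cameras.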

```shell
sudo docker run \
    --runtime=nvidia \
    -it \
    -v /tmp/.X11-unix:/tmp/.X11-unix \
    -v $HOME/data:/home/jetson/data \
    -e DISPLAY \
    -e QT_GRAPHICSSYSTEM=native \
    -e QT_X11_NO_MITSHM=1 \
    -v /var/run/dbus/system_bus_socket:/var/run/dbus/system_bus_socket:ro \
    -v /etc/localtime:/etc/localtime:ro \
    -v /tmp/argus_socket:/tmp/argus_socket \
    -v /dev/:/dev/ \
    --privileged \
    --network=host \
    nvcr.io/nvidia/deepstream-l4t:5.0.1-20.09-samples
```

Update the package lists and install the required packages:

```shell
apt update
apt install libgstrtspserver-1.0-dev v4l-utils vim python3-pip python3-gi python3-dev python3-gst-1.0 -y
```

Clone the DeepStream Python bindings with git:

```shell
cd /opt/nvidia/deepstream/deepstream-5.0/sources
git clone https://github.com/NVIDIA-AI-IOT/deepstream_python_apps
cd deepstream_python_apps/apps/deepstream-test3
```

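Running the sample

On the host Ubuntu (not inside Docker), run `xhost +` to allow access to the display:

```shell
xhost +
```

Back in the Docker container, set the display to send output to. Use the same value that `echo $DISPLAY` prints on the host; here it is `:1`. Then run the sample against the bundled video file:

```shell
export DISPLAY=:1
python3 deepstream_test_3.py file:///opt/nvidia/deepstream/deepstream-5.0/samples/streams/sample_1080p_h264.mp4
```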
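The stock `deepstream_test_3.py` takes URIs because it builds each source with `uridecodebin`. That is why the sample is run with `file:///...`. The modified version later in this article takes `/dev/videoN` device paths instead.

A small sketch for turning a local path into the URI form follows. `to_uri` is a hypothetical helper, not part of DeepStream. It leaves anything that already looks like a URI, or a device node, untouched:

```python
import os
from pathlib import Path


def to_uri(source):
    """Hypothetical helper: turn a local file path into a file:// URI.

    Strings that already contain a scheme (file://, rtsp://, ...) and
    /dev/ device nodes are returned unchanged.
    """
    if "://" in source or source.startswith("/dev/"):
        return source
    # as_uri() requires an absolute path, so normalise first
    return Path(os.path.abspath(source)).as_uri()


if __name__ == "__main__":
    print(to_uri("/opt/nvidia/deepstream/deepstream-5.0/samples/streams/sample_1080p_h264.mp4"))
```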

If you get the error `Unable to create NvStreamMux`, delete the GStreamer registry cache:

```shell
rm ~/.cache/gstreamer-1.0/registry*
```

Reference: [Unable to create NvStreamMux ,pgie, nvvidconv, nvosd](https://forums.developer.nvidia.com/t/unable-to-create-nvstreammux-pgie-nvvidconv-nvosd/170649)
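If you hit this error often, the same cleanup can be scripted. This is a minimal sketch, assuming the default cache location `~/.cache/gstreamer-1.0` used by the `rm` command above. `clear_gst_registry` is a hypothetical helper name, not part of DeepStream or GStreamer:

```python
import glob
import os
from pathlib import Path


def clear_gst_registry(cache_dir=None):
    """Hypothetical helper: delete GStreamer registry cache files.

    Equivalent to `rm ~/.cache/gstreamer-1.0/registry*`.
    Returns the sorted list of removed paths.
    """
    if cache_dir is None:
        cache_dir = Path.home() / ".cache" / "gstreamer-1.0"
    removed = []
    for path in glob.glob(os.path.join(str(cache_dir), "registry*")):
        os.remove(path)
        removed.append(path)
    return sorted(removed)
```

GStreamer rebuilds the registry automatically the next time it starts, so deleting these files is safe.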

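Attaching four cameras

The board has two CSI ports, and connecting three or more USB cameras did not work reliably. This setup therefore uses two CSI cameras and two USB cameras, four in total. List the attached devices: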

```shell
v4l2-ctl --list-devices
```

```
vi-output, imx219 9-0010 (platform:15c10000.vi:0):
    /dev/video0

vi-output, imx219 10-0010 (platform:15c10000.vi:2):
    /dev/video1

HD USB Camera (usb-3610000.xhci-2.1):
    /dev/video3

HD USB Camera (usb-3610000.xhci-2.3):
    /dev/video2
```
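To pick the device nodes programmatically rather than by eye, the listing can be parsed. This sketch assumes, based on the output above, that CSI sensors appear as `vi-output, ...` entries and that everything else is a USB camera. `parse_list_devices` and `split_csi_usb` are hypothetical helpers:

```python
def parse_list_devices(output):
    """Hypothetical helper: parse `v4l2-ctl --list-devices` text into
    {device name: [device nodes]}, preserving the listed order."""
    devices = {}
    current = None
    for line in output.splitlines():
        if not line.strip():
            continue  # blank separator line between devices
        if line[0].isspace():
            # Indented lines are the nodes of the device above them
            if current is not None:
                devices[current].append(line.strip())
        else:
            # Header line, e.g. "HD USB Camera (usb-3610000.xhci-2.1):"
            current = line.rstrip().rstrip(":")
            devices[current] = []
    return devices


def split_csi_usb(devices):
    """Assumption: names starting with 'vi-output' are CSI sensors."""
    csi, usb = [], []
    for name, nodes in devices.items():
        video_nodes = [n for n in nodes if n.startswith("/dev/video")]
        (csi if name.startswith("vi-output") else usb).extend(video_nodes)
    return csi, usb
```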

The profile of `/dev/video3` (a USB camera) is shown below. Note down the codec, resolution, and FPS of the camera you are using.

```shell
v4l2-ctl -d /dev/video3 --list-formats-ext
```

```
ioctl: VIDIOC_ENUM_FMT
    Index       : 0
    Type        : Video Capture
    Pixel Format: 'MJPG' (compressed)
    Name        : Motion-JPEG
        Size: Discrete 640x360
            Interval: Discrete 0.004s (260.004 fps)
        Size: Discrete 1280x720
            Interval: Discrete 0.008s (120.000 fps)
        Size: Discrete 1920x1080
            Interval: Discrete 0.017s (60.000 fps)
```

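For the two USB cameras, rewrite the GStreamer part of the sample program so that the resolution, FPS, and codec match the specs of the USB cameras you attach. For example, the camera above delivers MJPEG at 1280x720 at up to 120 fps.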
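The values to plug into the caps can be read from the `--list-formats-ext` output. This is a minimal sketch assuming the text layout shown above: one `Pixel Format`, `Size: Discrete` and `Interval: Discrete` line per entry. Stepwise or continuous size ranges are not handled, and `parse_formats` and `max_fps` are hypothetical helper names:

```python
import re


def parse_formats(output):
    """Hypothetical helper: return (fourcc, width, height, fps) tuples
    from `v4l2-ctl --list-formats-ext` output (discrete sizes only)."""
    modes = []
    fourcc = size = None
    for raw in output.splitlines():
        line = raw.strip()
        m = re.match(r"Pixel Format:\s*'(\w+)'", line)
        if m:
            fourcc = m.group(1)
            continue
        m = re.match(r"Size: Discrete (\d+)x(\d+)", line)
        if m:
            size = (int(m.group(1)), int(m.group(2)))
            continue
        m = re.match(r"Interval: Discrete [\d.]+s \(([\d.]+) fps\)", line)
        if m and fourcc and size:
            modes.append((fourcc, size[0], size[1], float(m.group(1))))
    return modes


def max_fps(modes, fourcc, width, height):
    """Highest listed frame rate for a format/size, or None if not listed."""
    rates = [fps for f, w, h, fps in modes if (f, w, h) == (fourcc, width, height)]
    return max(rates) if rates else None
```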

Source code

Replace deepstream_test_3.py with the following code.

```python
#!/usr/bin/env python3

################################################################################
# Copyright (c) 2020, NVIDIA CORPORATION. All rights reserved.
#
# Permission is hereby granted, free of charge, to any person obtaining a
# copy of this software and associated documentation files (the "Software"),
# to deal in the Software without restriction, including without limitation
# the rights to use, copy, modify, merge, publish, distribute, sublicense,
# and/or sell copies of the Software, and to permit persons to whom the
# Software is furnished to do so, subject to the following conditions:
#
# The above copyright notice and this permission notice shall be included in
# all copies or substantial portions of the Software.
#
# THE SOFTWARE IS PROVIDED "AS IS", WITHOUT WARRANTY OF ANY KIND, EXPRESS OR
# IMPLIED, INCLUDING BUT NOT LIMITED TO THE WARRANTIES OF MERCHANTABILITY,
# FITNESS FOR A PARTICULAR PURPOSE AND NONINFRINGEMENT. IN NO EVENT SHALL
# THE AUTHORS OR COPYRIGHT HOLDERS BE LIABLE FOR ANY CLAIM, DAMAGES OR OTHER
# LIABILITY, WHETHER IN AN ACTION OF CONTRACT, TORT OR OTHERWISE, ARISING
# FROM, OUT OF OR IN CONNECTION WITH THE SOFTWARE OR THE USE OR OTHER
# DEALINGS IN THE SOFTWARE.
################################################################################

import sys
sys.path.append('../')
import gi
import configparser
gi.require_version('Gst', '1.0')
from gi.repository import GObject, Gst
from gi.repository import GLib
from ctypes import *
import time
import math
import platform
from common.is_aarch_64 import is_aarch64
from common.bus_call import bus_call
from common.FPS import GETFPS

import pyds

fps_streams={}

MAX_DISPLAY_LEN=64
PGIE_CLASS_ID_VEHICLE = 0
PGIE_CLASS_ID_BICYCLE = 1
PGIE_CLASS_ID_PERSON = 2
PGIE_CLASS_ID_ROADSIGN = 3
MUXER_OUTPUT_WIDTH=1280
MUXER_OUTPUT_HEIGHT=720
MUXER_BATCH_TIMEOUT_USEC=4000000
TILED_OUTPUT_WIDTH=1280 * 2
TILED_OUTPUT_HEIGHT=720 * 2
GST_CAPS_FEATURES_NVMM="memory:NVMM"
OSD_PROCESS_MODE= 0
OSD_DISPLAY_TEXT= 0
pgie_classes_str= ["Vehicle", "TwoWheeler", "Person","RoadSign"]

# tiler_src_pad_buffer_probe extracts the batch metadata and counts the
# detected objects per class for every frame (attached to the pgie src pad in main()).
def tiler_src_pad_buffer_probe(pad,info,u_data):
    frame_number=0
    num_rects=0
    gst_buffer = info.get_buffer()
    if not gst_buffer:
        print("Unable to get GstBuffer ")
        return

    # Retrieve batch metadata from the gst_buffer
    # Note that pyds.gst_buffer_get_nvds_batch_meta() expects the
    # C address of gst_buffer as input, which is obtained with hash(gst_buffer)
    batch_meta = pyds.gst_buffer_get_nvds_batch_meta(hash(gst_buffer))
    l_frame = batch_meta.frame_meta_list
    while l_frame is not None:
        try:
            # Note that l_frame.data needs a cast to pyds.NvDsFrameMeta
            # The casting is done by pyds.NvDsFrameMeta.cast()
            # The casting also keeps ownership of the underlying memory
            # in the C code, so the Python garbage collector will leave
            # it alone.
            frame_meta = pyds.NvDsFrameMeta.cast(l_frame.data)
        except StopIteration:
            break

        '''
        print("Frame Number is ", frame_meta.frame_num)
        print("Source id is ", frame_meta.source_id)
        print("Batch id is ", frame_meta.batch_id)
        print("Source Frame Width ", frame_meta.source_frame_width)
        print("Source Frame Height ", frame_meta.source_frame_height)
        print("Num object meta ", frame_meta.num_obj_meta)
        '''
        frame_number=frame_meta.frame_num
        l_obj=frame_meta.obj_meta_list
        num_rects = frame_meta.num_obj_meta
        obj_counter = {
            PGIE_CLASS_ID_VEHICLE:0,
            PGIE_CLASS_ID_PERSON:0,
            PGIE_CLASS_ID_BICYCLE:0,
            PGIE_CLASS_ID_ROADSIGN:0
        }
        while l_obj is not None:
            try:
                # Casting l_obj.data to pyds.NvDsObjectMeta
                obj_meta=pyds.NvDsObjectMeta.cast(l_obj.data)
            except StopIteration:
                break
            obj_counter[obj_meta.class_id] += 1
            try:
                l_obj=l_obj.next
            except StopIteration:
                break

        # On-screen text overlay (disabled; kept from the sample for reference)
        """display_meta=pyds.nvds_acquire_display_meta_from_pool(batch_meta)
        display_meta.num_labels = 1
        py_nvosd_text_params = display_meta.text_params[0]
        py_nvosd_text_params.display_text = "Frame Number={} Number of Objects={} Vehicle_count={} Person_count={}".format(frame_number, num_rects, vehicle_count, person)
        py_nvosd_text_params.x_offset = 10;
        py_nvosd_text_params.y_offset = 12;
        py_nvosd_text_params.font_params.font_name = "Serif"
        py_nvosd_text_params.font_params.font_size = 10
        py_nvosd_text_params.font_params.font_color.red = 1.0
        py_nvosd_text_params.font_params.font_color.green = 1.0
        py_nvosd_text_params.font_params.font_color.blue = 1.0
        py_nvosd_text_params.font_params.font_color.alpha = 1.0
        py_nvosd_text_params.set_bg_clr = 1
        py_nvosd_text_params.text_bg_clr.red = 0.0
        py_nvosd_text_params.text_bg_clr.green = 0.0
        py_nvosd_text_params.text_bg_clr.blue = 0.0
        py_nvosd_text_params.text_bg_clr.alpha = 1.0
        #print("Frame Number=", frame_number, "Number of Objects=",num_rects,"Vehicle_count=",vehicle_count,"Person_count=",person)
        pyds.nvds_add_display_meta_to_frame(frame_meta, display_meta)"""
        print("Frame Number=", frame_number, "Number of Objects=",num_rects,"Vehicle_count=",obj_counter[PGIE_CLASS_ID_VEHICLE],"Person_count=",obj_counter[PGIE_CLASS_ID_PERSON])

        # Get frame rate through this probe
        fps_streams["stream{0}".format(frame_meta.pad_index)].get_fps()
        try:
            l_frame=l_frame.next
        except StopIteration:
            break

    return Gst.PadProbeReturn.OK

# cb_newpad / decodebin_child_added are only used with uridecodebin (kept from the sample)
def cb_newpad(decodebin, decoder_src_pad,data):
    print("In cb_newpad\n")
    caps=decoder_src_pad.get_current_caps()
    gststruct=caps.get_structure(0)
    gstname=gststruct.get_name()
    source_bin=data
    features=caps.get_features(0)

    # Need to check if the pad created by the decodebin is for video and not
    # audio.
    print("gstname=",gstname)
    if(gstname.find("video")!=-1):
        # Link the decodebin pad only if decodebin has picked nvidia
        # decoder plugin nvdec_*. We do this by checking if the pad caps contain
        # NVMM memory features.
        print("features=",features)
        if features.contains("memory:NVMM"):
            # Get the source bin ghost pad
            bin_ghost_pad=source_bin.get_static_pad("src")
            if not bin_ghost_pad.set_target(decoder_src_pad):
                sys.stderr.write("Failed to link decoder src pad to source bin ghost pad\n")
        else:
            sys.stderr.write(" Error: Decodebin did not pick nvidia decoder plugin.\n")

def decodebin_child_added(child_proxy,Object,name,user_data):
    print("Decodebin child added:", name, "\n")
    if(name.find("decodebin") != -1):
        Object.connect("child-added",decodebin_child_added,user_data)
    if(is_aarch64() and name.find("nvv4l2decoder") != -1):
        print("Setting bufapi_version\n")
        Object.set_property("bufapi-version",True)

def create_source_bin(index,uri):
    print("Creating source bin")
    # /dev/video0 and /dev/video1 are the CSI cameras; everything else is USB
    if uri == "/dev/video0" or uri == "/dev/video1":
        cam_type = 'CSI'
    else:
        cam_type = 'USB'

    # we need a jpegparser
    print("Creating JPEGParser \n")
    jpegparser = Gst.ElementFactory.make("jpegparse", "jpeg-parser")
    if not jpegparser:
        sys.stderr.write(" Unable to create jpeg parser \n")

    # jpegdec decodes the MJPEG stream from the USB camera in software (CPU)
    print("Creating Decoder \n")
    decoder = Gst.ElementFactory.make("jpegdec", "jpeg-decoder")
    if not decoder:
        sys.stderr.write(" Unable to create JPEG Decoder \n")

    if cam_type == 'CSI':
        nvv4l2decoder = Gst.ElementFactory.make("nvv4l2decoder", "nvv4l2")

    # Create a source GstBin to abstract this bin's content from the rest of the
    # pipeline
    bin_name="source-bin-%02d" %index
    print(bin_name)
    nbin=Gst.Bin.new(bin_name)
    if not nbin:
        sys.stderr.write(" Unable to create source bin \n")

    # Source element for reading from the uri.
    # We will use decodebin and let it figure out the container format of the
    # stream and the codec and plug the appropriate demux and decode plugins.
    # uri_decode_bin=Gst.ElementFactory.make("uridecodebin", "uri-decode-bin")
    # if not uri_decode_bin:
    #     sys.stderr.write(" Unable to create uri decode bin \n")
    # # We set the input uri to the source element
    # uri_decode_bin.set_property("uri",uri)
    print("Creating Source \n ")
    if cam_type == 'CSI':
        source = Gst.ElementFactory.make("nvarguscamerasrc", "src-elem")
    elif cam_type == 'USB':
        source = Gst.ElementFactory.make("v4l2src", "usb-cam-source")
    if not source:
        sys.stderr.write(" Unable to create source \n")

    if cam_type == 'USB':
        caps_v4l2src = Gst.ElementFactory.make("capsfilter", "v4l2src_caps")
        if not caps_v4l2src:
            sys.stderr.write("Could not create caps_v4l2src")

    # videoconvert to make sure a superset of raw formats are supported
    vidconvsrc = Gst.ElementFactory.make("videoconvert", "convertor_src1")
    if not vidconvsrc:
        sys.stderr.write(" Unable to create videoconvert \n")

    if cam_type == 'CSI':
        # Converter to scale the image
        nvvidconv_src = Gst.ElementFactory.make("nvvideoconvert", "convertor_src")
        if not nvvidconv_src:
            sys.stderr.write(" Unable to create nvvidconv_src \n")

    # nvvideoconvert to convert incoming raw buffers to NVMM Mem (NvBufSurface API)
    nvvidconvsrc = Gst.ElementFactory.make("nvvideoconvert", "convertor_src2")
    if not nvvidconvsrc:
        sys.stderr.write(" Unable to create Nvvideoconvert \n")

    caps_vidconvsrc = Gst.ElementFactory.make("capsfilter", "nvmm_caps")
    if not caps_vidconvsrc:
        sys.stderr.write(" Unable to create capsfilter \n")

    if cam_type == 'CSI':
        # Caps for NVMM and resolution scaling
        caps_nvvidconv_src = Gst.ElementFactory.make("capsfilter", "nvmm_caps2")
        if not caps_nvvidconv_src:
            sys.stderr.write(" Unable to create capsfilter \n")

    if cam_type == 'CSI':
        # nvarguscamerasrc -> nvvideoconvert -> caps(1280x720) -> videoconvert -> nvvideoconvert -> caps(NVMM)
        caps_nvvidconv_src.set_property('caps', Gst.Caps.from_string('video/x-raw(memory:NVMM), width=1280, height=720, framerate=60/1'))
        caps_vidconvsrc.set_property('caps', Gst.Caps.from_string("video/x-raw(memory:NVMM)"))
        print("_________________________________")
        print(f"{cam_type} Camera")
        source.set_property('bufapi-version', True)
        if uri == "/dev/video0":
            source.set_property('sensor-id', 1)
        if uri == "/dev/video1":
            source.set_property('sensor-id', 0)
        nbin.add(source)
        nbin.add(nvvidconv_src)
        nbin.add(caps_nvvidconv_src)
        nbin.add(vidconvsrc)
        nbin.add(nvvidconvsrc)
        nbin.add(caps_vidconvsrc)
        source.link(nvvidconv_src)
        nvvidconv_src.link(caps_nvvidconv_src)
        caps_nvvidconv_src.link(vidconvsrc)
        vidconvsrc.link(nvvidconvsrc)
        nvvidconvsrc.link(caps_vidconvsrc)
    elif cam_type == 'USB':
        # v4l2src -> caps(MJPEG) -> jpegparse -> jpegdec -> videoconvert -> nvvideoconvert -> caps(NVMM)
        caps_v4l2src.set_property('caps', Gst.Caps.from_string("image/jpeg, width=1280, height=720,framerate=120/1"))
        caps_vidconvsrc.set_property('caps', Gst.Caps.from_string("video/x-raw(memory:NVMM)"))
        print("_________________________________")
        print(f"{cam_type} Camera")
        source.set_property('device', uri)
        nbin.add(source)
        nbin.add(caps_v4l2src)
        nbin.add(jpegparser)
        nbin.add(decoder)
        nbin.add(vidconvsrc)
        nbin.add(nvvidconvsrc)
        nbin.add(caps_vidconvsrc)
        source.link(caps_v4l2src)
        caps_v4l2src.link(jpegparser)
        jpegparser.link(decoder)
        decoder.link(vidconvsrc)
        vidconvsrc.link(nvvidconvsrc)
        nvvidconvsrc.link(caps_vidconvsrc)

    # Connect to the "pad-added" signal of the decodebin which generates a
    # callback once a new pad for raw data has been created by the decodebin
    # uri_decode_bin.connect("pad-added",cb_newpad,nbin)
    # uri_decode_bin.connect("child-added",decodebin_child_added,nbin)

    # We need to create a ghost pad for the source bin which will act as a proxy
    # for the video decoder src pad. The ghost pad will not have a target right
    # now. Once the decode bin creates the video decoder and generates the
    # cb_newpad callback, we will set the ghost pad target to the video decoder
    # src pad.
    # Gst.Bin.add(nbin,uri_decode_bin)
    # Here the ghost pad simply exposes the last capsfilter's src pad.
    srcpad = caps_vidconvsrc.get_static_pad("src")
    bin_pad=nbin.add_pad(Gst.GhostPad.new("src",srcpad))
    if not bin_pad:
        sys.stderr.write(" Failed to add ghost pad in source bin \n")
        return None
    return nbin

# For reference here is the code for setting up the pipelines and the linking for the app:
def main(args):
    # Check input arguments
    if len(args) < 2:
        sys.stderr.write("usage: %s <uri1> [uri2] ... [uriN]\n" % args[0])
        sys.exit(1)

    for i in range(0,len(args)-1):
        fps_streams["stream{0}".format(i)]=GETFPS(i)
        print(GETFPS(i))
    number_sources=len(args)-1

    # Standard GStreamer initialization
    GObject.threads_init()
    Gst.init(None)

    # Create gstreamer elements
    # Create Pipeline element that will form a connection of other elements
    print("Creating Pipeline \n ")
    pipeline = Gst.Pipeline()
    is_live = False

    if not pipeline:
        sys.stderr.write(" Unable to create Pipeline \n")
    print("Creating streamux \n ")

    # Create nvstreammux instance to form batches from one or more sources.
    streammux = Gst.ElementFactory.make("nvstreammux", "Stream-muxer")
    if not streammux:
        sys.stderr.write(" Unable to create NvStreamMux \n")

    pipeline.add(streammux)
    for i in range(number_sources):
        print("=========================== Creating source_bin ",i," \n ")
        uri=args[i+1]
        # if uri_name.find("rtsp://") == 0 :
        # All sources in this setup are live cameras
        is_live = True
        source_bin=create_source_bin(i, uri)
        if not source_bin:
            sys.stderr.write("Unable to create source bin \n")
        pipeline.add(source_bin)
        padname="sink_%u" %i
        sinkpad= streammux.get_request_pad(padname)
        if not sinkpad:
            sys.stderr.write("Unable to create sink pad bin \n")
        srcpad=source_bin.get_static_pad("src")
        if not srcpad:
            sys.stderr.write("Unable to create src pad bin \n")
        srcpad.link(sinkpad)

    queue1=Gst.ElementFactory.make("queue","queue1")
    queue2=Gst.ElementFactory.make("queue","queue2")
    queue3=Gst.ElementFactory.make("queue","queue3")
    queue4=Gst.ElementFactory.make("queue","queue4")
    queue5=Gst.ElementFactory.make("queue","queue5")
    pipeline.add(queue1)
    pipeline.add(queue2)
    pipeline.add(queue3)
    pipeline.add(queue4)
    pipeline.add(queue5)

    print("Creating Pgie \n ")
    pgie = Gst.ElementFactory.make("nvinfer", "primary-inference")
    if not pgie:
        sys.stderr.write(" Unable to create pgie \n")
    print("Creating tiler \n ")
    tiler=Gst.ElementFactory.make("nvmultistreamtiler", "nvtiler")
    if not tiler:
        sys.stderr.write(" Unable to create tiler \n")
    print("Creating nvvidconv \n ")
    nvvidconv = Gst.ElementFactory.make("nvvideoconvert", "convertor")
    if not nvvidconv:
        sys.stderr.write(" Unable to create nvvidconv \n")
    print("Creating nvosd \n ")
    nvosd = Gst.ElementFactory.make("nvdsosd", "onscreendisplay")
    if not nvosd:
        sys.stderr.write(" Unable to create nvosd \n")
    nvosd.set_property('process-mode',OSD_PROCESS_MODE)
    nvosd.set_property('display-text',OSD_DISPLAY_TEXT)
    if(is_aarch64()):
        print("Creating transform \n ")
        transform=Gst.ElementFactory.make("nvegltransform", "nvegl-transform")
        if not transform:
            sys.stderr.write(" Unable to create transform \n")

    print("Creating EGLSink \n")
    sink = Gst.ElementFactory.make("nveglglessink", "nvvideo-renderer")
    #sink = Gst.ElementFactory.make("nvoverlaysink", "nvvideo-renderer")
    if not sink:
        sys.stderr.write(" Unable to create egl sink \n")
    sink.set_property('sync', 0)

    if is_live:
        print("At least one of the sources is live")
        streammux.set_property('live-source', 1)

    streammux.set_property('width', 1280)
    streammux.set_property('height', 720)
    streammux.set_property('batch-size', number_sources)
    streammux.set_property('batched-push-timeout', 4000000)
    pgie.set_property('config-file-path', "dstest3_pgie_config.txt")
    pgie_batch_size=pgie.get_property("batch-size")
    if(pgie_batch_size != number_sources):
        print("WARNING: Overriding infer-config batch-size",pgie_batch_size," with number of sources ", number_sources," \n")
        pgie.set_property("batch-size",number_sources)
    # Fixed 2x2 grid for four cameras
    #tiler_rows=int(math.sqrt(number_sources))
    tiler_rows=2
    #tiler_columns=int(math.ceil((1.0*number_sources)/tiler_rows))
    tiler_columns=2
    tiler.set_property("rows",tiler_rows)
    tiler.set_property("columns",tiler_columns)
    tiler.set_property("width", TILED_OUTPUT_WIDTH)
    tiler.set_property("height", TILED_OUTPUT_HEIGHT)
    sink.set_property("qos",0)

    print("Adding elements to Pipeline \n")
    pipeline.add(pgie)
    pipeline.add(tiler)
    pipeline.add(nvvidconv)
    pipeline.add(nvosd)
    if is_aarch64():
        pipeline.add(transform)
    pipeline.add(sink)

    print("Linking elements in the Pipeline \n")
    streammux.link(queue1)
    queue1.link(pgie)
    pgie.link(queue2)
    queue2.link(tiler)
    tiler.link(queue3)
    queue3.link(nvvidconv)
    nvvidconv.link(queue4)
    queue4.link(nvosd)
    if is_aarch64():
        nvosd.link(queue5)
        queue5.link(transform)
        transform.link(sink)
    else:
        nvosd.link(queue5)
        queue5.link(sink)

    # create an event loop and feed gstreamer bus messages to it
    loop = GObject.MainLoop()
    bus = pipeline.get_bus()
    bus.add_signal_watch()
    bus.connect ("message", bus_call, loop)
    # The probe is attached to the pgie src pad (variable name kept from the sample)
    tiler_src_pad=pgie.get_static_pad("src")
    if not tiler_src_pad:
        sys.stderr.write(" Unable to get src pad \n")
    else:
        tiler_src_pad.add_probe(Gst.PadProbeType.BUFFER, tiler_src_pad_buffer_probe, 0)

    # List the sources
    print("Now playing...")
    for i, source in enumerate(args):
        if (i != 0):
            print(i, ": ", source)

    print("Starting pipeline \n")
    # start playback and listen to events
    pipeline.set_state(Gst.State.PLAYING)
    try:
        loop.run()
    except:
        pass
    # cleanup
    print("Exiting app\n")
    pipeline.set_state(Gst.State.NULL)

if __name__ == '__main__':
    sys.exit(main(sys.argv))
```
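The script hard-codes a 2x2 tiler with a 2560x1440 output. The commented-out lines in `main` give the general formula. The pure-Python sketch below shows how the grid, and the tiled output size, follow from the number of sources. It assumes each tile keeps the 1280x720 muxer resolution; `tiler_grid` and `tiled_output_size` are hypothetical helper names:

```python
import math


def tiler_grid(number_sources):
    """Rows/columns for nvmultistreamtiler, using the same formula as the
    commented-out lines in main()."""
    if number_sources < 1:
        raise ValueError("need at least one source")
    rows = int(math.sqrt(number_sources))
    columns = int(math.ceil((1.0 * number_sources) / rows))
    return rows, columns


def tiled_output_size(tile_width, tile_height, number_sources):
    """Assumption: every tile is shown at tile_width x tile_height."""
    rows, columns = tiler_grid(number_sources)
    return tile_width * columns, tile_height * rows
```

With four sources this gives a 2x2 grid and 2560x1440, the same values the script hard-codes. With three sources the formula produces one row of three tiles instead.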

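Tuning the USB camera parameters

Adjust the following part of the code to match the parameters of your USB camera:

```python
elif cam_type == 'USB':
    caps_v4l2src.set_property('caps', Gst.Caps.from_string("image/jpeg, width=1280, height=720,framerate=120/1"))
```

For example, to capture from a camera that outputs MJPEG at 1280x720 and 30 fps, change it to `framerate=30/1`:

```python
elif cam_type == 'USB':
    caps_v4l2src.set_property('caps', Gst.Caps.from_string("image/jpeg, width=1280, height=720,framerate=30/1"))
```

The combination must be one that the camera lists in `v4l2-ctl --list-formats-ext`; otherwise v4l2src fails to negotiate caps.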
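Rather than editing the string by hand, the caps can be built from the three values noted earlier. The sketch below uses a hypothetical `usb_caps_string` helper. It only formats the string that `Gst.Caps.from_string` receives, and it assumes an integer frame rate (GStreamer expresses the rate as a fraction, here `N/1`):

```python
def usb_caps_string(width, height, fps, media_type="image/jpeg"):
    """Hypothetical helper: build the v4l2src caps string used in
    create_source_bin(). fps must be an integer (written as fps/1)."""
    for value in (width, height, fps):
        if not isinstance(value, int) or value <= 0:
            raise ValueError("width, height and fps must be positive integers")
    return f"{media_type}, width={width}, height={height}, framerate={fps}/1"


if __name__ == "__main__":
    # Same value as the 30 fps example above
    print(usb_caps_string(1280, 720, 30))
```

In the script it would be used as `caps_v4l2src.set_property('caps', Gst.Caps.from_string(usb_caps_string(1280, 720, 30)))`.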

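Running

If GStreamer opens the CSI cameras first, only CSI cameras can be read from then on, so pass the USB cameras first. Note that `create_source_bin` treats `/dev/video0` and `/dev/video1` as the CSI cameras and every other device as a USB camera.

```shell
python3 deepstream_test_3.py /dev/video2 /dev/video3 /dev/video0 /dev/video1
```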
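Because the order matters, a small wrapper can reorder the arguments so that USB devices always come first. This sketch assumes the same rule as `create_source_bin`: `/dev/video0` and `/dev/video1` are CSI, everything else is USB. The script name `order_devices.py` is hypothetical:

```python
import sys

# Same assumption as create_source_bin(): these two nodes are the CSI cameras
CSI_DEVICES = ("/dev/video0", "/dev/video1")


def order_usb_first(devices, csi_devices=CSI_DEVICES):
    """Return the device list with USB cameras first, keeping relative order."""
    usb = [d for d in devices if d not in csi_devices]
    csi = [d for d in devices if d in csi_devices]
    return usb + csi


if __name__ == "__main__":
    print(" ".join(order_usb_first(sys.argv[1:])))
```

Usage: `python3 deepstream_test_3.py $(python3 order_devices.py /dev/video0 /dev/video1 /dev/video2 /dev/video3)`.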