"""

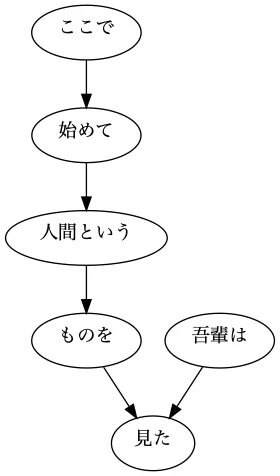

## 44. 係り受け木の可視化[Permalink](https://nlp100.github.io/ja/ch05.html#44-係り受け木の可視化)

与えられた文の係り受け木を有向グラフとして可視化せよ.可視化には,[Graphviz](http://www.graphviz.org/)等を用いるとよい.

"""

from collections import defaultdict

from typing import List, Tuple

import pydot

def read_file(fpath: str) -> List[List[str]]:

"""Get clear format of parsed sentences.

Args:

fpath (str): File path.

Returns:

List[List[str]]: List of sentences, and each sentence contains a word list.

e.g. result[1]:

['* 0 2D 0/0 -0.764522',

'\u3000\t記号,空白,*,*,*,*,\u3000,\u3000,\u3000',

'* 1 2D 0/1 -0.764522',

'吾輩\t名詞,代名詞,一般,*,*,*,吾輩,ワガハイ,ワガハイ',

'は\t助詞,係助詞,*,*,*,*,は,ハ,ワ',

'* 2 -1D 0/2 0.000000',

'猫\t名詞,一般,*,*,*,*,猫,ネコ,ネコ',

'で\t助動詞,*,*,*,特殊・ダ,連用形,だ,デ,デ',

'ある\t助動詞,*,*,*,五段・ラ行アル,基本形,ある,アル,アル',

'。\t記号,句点,*,*,*,*,。,。,。']

"""

with open(fpath, mode="rt", encoding="utf-8") as f:

sentences = f.read().split("EOS\n")

return [sent.strip().split("\n") for sent in sentences if sent.strip() != ""]

class Morph:

"""Morph information for each token.

Args:

data (dict): A dictionary contains necessary information.

Attributes:

surface (str): 表層形(surface)

base (str): 基本形(base)

pos (str): 品詞(base)

pos1 (str): 品詞細分類1(pos1

"""

def __init__(self, data):

self.surface = data["surface"]

self.base = data["base"]

self.pos = data["pos"]

self.pos1 = data["pos1"]

def __repr__(self):

return f"Morph({self.surface})"

def __str__(self):

return "surface[{}]\tbase[{}]\tpos[{}]\tpos1[{}]".format(

self.surface, self.base, self.pos, self.pos1

)

class Chunk:

"""Containing information for Clause/phrase.

Args:

data (dict): A dictionary contains necessary information.

Attributes:

chunk_id (str): The number of clause chunk (文節番号).

morphs List[Morph]: Morph (形態素) list.

dst (str): The index of dependency target (係り先文節インデックス番号).

srcs (List[str]): The index list of dependency source. (係り元文節インデックス番号).

"""

def __init__(self, chunk_id, dst):

self.id = chunk_id

self.morphs = []

self.dst = dst

self.srcs = []

def __repr__(self):

return "Chunk( id: {}, dst: {}, srcs: {}, morphs: {} )".format(

self.id, self.dst, self.srcs, self.morphs

)

def get_surface(self) -> str:

"""Concatenate morph surfaces in a chink.

Args:

chunk (Chunk): e.g. Chunk( id: 0, dst: 5, srcs: [], morphs: [Morph(吾輩), Morph(は)]

Return:

e.g. '吾輩は'

"""

morphs = self.morphs

res = ""

for morph in morphs:

if morph.pos != "記号":

res += morph.surface

return res

def validate_pos(self, pos: str) -> bool:

"""Return Ture if '名詞' or '動詞' in chunk's morphs. Otherwise, return False."""

morphs = self.morphs

return any([morph.pos == pos for morph in morphs])

def convert_sent_to_chunks(sent: List[str]) -> List[Morph]:

"""Extract word and convert to morph.

Args:

sent (List[str]): A sentence contains a word list.

e.g. sent:

['* 0 1D 0/1 0.000000',

'吾輩\t名詞,代名詞,一般,*,*,*,吾輩,ワガハイ,ワガハイ',

'は\t助詞,係助詞,*,*,*,*,は,ハ,ワ',

'* 1 -1D 0/2 0.000000',

'猫\t名詞,一般,*,*,*,*,猫,ネコ,ネコ',

'で\t助動詞,*,*,*,特殊・ダ,連用形,だ,デ,デ',

'ある\t助動詞,*,*,*,五段・ラ行アル,基本形,ある,アル,アル',

'。\t記号,句点,*,*,*,*,。,。,。']

Parsing format:

e.g. "* 0 1D 0/1 0.000000"

| カラム | 意味 |

| :----: | :----------------------------------------------------------- |

| 1 | 先頭カラムは`*`。係り受け解析結果であることを示す。 |

| 2 | 文節番号(0から始まる整数) |

| 3 | 係り先番号+`D` |

| 4 | 主辞/機能語の位置と任意の個数の素性列 |

| 5 | 係り関係のスコア。係りやすさの度合で、一般に大きな値ほど係りやすい。 |

Returns:

List[Chunk]: List of chunks.

"""

chunks = []

chunk = None

srcs = defaultdict(list)

for i, word in enumerate(sent):

if word[0] == "*":

# Add chunk to chunks

if chunk is not None:

chunks.append(chunk)

# eNw Chunk beggin

chunk_id = word.split(" ")[1]

dst = word.split(" ")[2].rstrip("D")

chunk = Chunk(chunk_id, dst)

srcs[dst].append(chunk_id) # Add target->source to mapping list

else: # Add Morch to chunk.morphs

features = word.split(",")

dic = {

"surface": features[0].split("\t")[0],

"base": features[6],

"pos": features[0].split("\t")[1],

"pos1": features[1],

}

chunk.morphs.append(Morph(dic))

if i == len(sent) - 1: # Add the last chunk

chunks.append(chunk)

# Add srcs to each chunk

for chunk in chunks:

chunk.srcs = list(srcs[chunk.id])

return chunks

def get_edges(chunks: List[Chunk]) -> List[Tuple[str, str]]:

"""Get edges from sentence chunks.

Args:

chunks (List[Chunk]): A sentence contains many chunks.

e.g. [Chunk( id: 0, dst: 5, srcs: [], morphs: [Morph(吾輩), Morph(は)] ),

Chunk( id: 1, dst: 2, srcs: [], morphs: [Morph(ここ), Morph(で)] ),

Chunk( id: 2, dst: 3, srcs: ['1'], morphs: [Morph(始め), Morph(て)] ),

Chunk( id: 3, dst: 4, srcs: ['2'], morphs: [Morph(人間), Morph(という)] ),

Chunk( id: 4, dst: 5, srcs: ['3'], morphs: [Morph(もの), Morph(を)] ),

Chunk( id: 5, dst: -1, srcs: ['0', '4'], morphs: [Morph(見), Morph(た), Morph(。)] )]

Returns:

List[Tuple[str, str]]: Edges.

e.g. [('ここで', '始めて'),

('始めて', '人間という'),

('人間という', 'ものを'),

('吾輩は', '見た'),

('ものを', '見た')]

"""

edges = []

for chunk in chunks:

if len(chunk.srcs) == 0:

continue

post_node = chunk.get_surface()

for src in chunk.srcs:

src_chunk = chunks[int(src)]

pre_node = src_chunk.get_surface()

edges.append((pre_node, post_node))

return edges

def draw_graph(edges):

graph = pydot.Dot(graph_type="digraph")

for edge in edges:

graph.add_edge(pydot.Edge(edge[0], edge[1]))

graph.write_png("demo_graph.png")

fpath = "neko.txt.cabocha"

sentences = read_file(fpath)

sentences = [convert_sent_to_chunks(sent) for sent in sentences] # ans41

# ans44

edges = get_edges(sentences[5]) # May return empty list

draw_graph(edges)

# edges:

# [('ここで', '始めて'),

# ('始めて', '人間という'),

# ('人間という', 'ものを'),

# ('吾輩は', '見た'),

# ('ものを', '見た')]

More than 5 years have passed since last update.

Register as a new user and use Qiita more conveniently

- You get articles that match your needs

- You can efficiently read back useful information

- You can use dark theme