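Automated Canary Releases

Linkerd's traffic split feature lets you shift traffic between services dynamically.

You can use it to implement blue-green deployments, canary releases, and similar rollout strategies.

With Linkerd you are not limited to moving traffic from one version of a service to the next: you can combine traffic splitting with golden-metrics telemetry and let the metrics decide automatically how traffic is shifted.

For example, you can shift traffic gradually from the old deployment to the new one while continuously monitoring the success rate.

If the success rate drops at any point, traffic can be shifted back to the original deployment.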
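
The idea described above can be sketched as a small control loop. This is a simplified illustration, not Flagger's or Linkerd's actual implementation: the function name `run_canary` and its default values (a 99% minimum success rate, 10-point weight steps, rollback after 5 failed checks) are assumptions chosen for the example.

```python
from typing import Iterable, List, Tuple


def run_canary(
    success_rates: Iterable[float],
    min_success_rate: float = 99.0,  # assumed minimum acceptable success rate (%)
    step_weight: int = 10,           # assumed weight increase per healthy check
    max_failed_checks: int = 5,      # assumed number of failed checks before rollback
) -> Tuple[str, List[int]]:
    """Consume one success-rate sample per check; return the outcome and weight history."""
    weight = 0
    failed = 0
    history: List[int] = []
    for rate in success_rates:
        if rate < min_success_rate:
            # Unhealthy check: hold the current weight and count the failure.
            failed += 1
            if failed >= max_failed_checks:
                history.append(0)  # all traffic goes back to the old version
                return "rolled back", history
        else:
            # Healthy check: send a bit more traffic to the new version.
            weight = min(100, weight + step_weight)
        history.append(weight)
        if weight == 100:
            return "promoted", history
    return "in progress", history


if __name__ == "__main__":
    print(run_canary([100.0] * 10))
    print(run_canary([100.0, 100.0, 50.0, 50.0, 50.0, 50.0, 50.0]))
```

The first call models a rollout in which every check is healthy; the second models a success rate that drops after two steps and stays low until the rollback limit is reached.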

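Installing Flagger (Kubernetes v1.14 or later)

Linkerd manages the actual traffic routing, while Flagger automates creating the new Kubernetes resources, watching the metrics, and gradually sending users to the new version.

To add Flagger to the cluster and configure it to work with Linkerd, run: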

# kubectl apply -k github.com/weaveworks/flagger/kustomize/linkerd

customresourcedefinition.apiextensions.k8s.io/canaries.flagger.app created

serviceaccount/flagger created

clusterrole.rbac.authorization.k8s.io/flagger created

clusterrolebinding.rbac.authorization.k8s.io/flagger created

deployment.apps/flagger created

Deploying the sample application

# kubectl create ns test

namespace/test created

# kubectl apply -f https://run.linkerd.io/flagger.yml

deployment.apps/load created

configmap/frontend created

deployment.apps/frontend created

service/frontend created

deployment.apps/podinfo created

service/podinfo created

# kubectl -n test get all

NAME READY STATUS RESTARTS AGE

pod/frontend-66db854c46-vwhbg 2/2 Running 0 57s

pod/load-7fbb5f6f84-8mb99 2/2 Running 0 57s

pod/podinfo-6664bccb6-88rgd 2/2 Running 0 57s

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE

service/frontend ClusterIP 10.100.200.132 <none> 8080/TCP 57s

service/podinfo ClusterIP 10.100.200.12 <none> 9898/TCP 57s

NAME READY UP-TO-DATE AVAILABLE AGE

deployment.apps/frontend 1/1 1 1 57s

deployment.apps/load 1/1 1 1 57s

deployment.apps/podinfo 1/1 1 1 57s

NAME DESIRED CURRENT READY AGE

replicaset.apps/frontend-66db854c46 1 1 1 57s

replicaset.apps/load-7fbb5f6f84 1 1 1 57s

replicaset.apps/podinfo-6664bccb6 1 1 1 57s

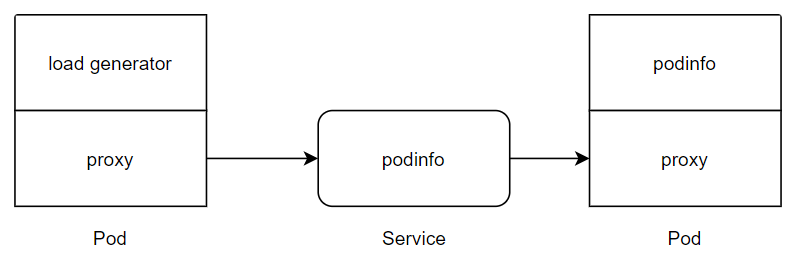

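The image above shows the current state.

Next, set up port forwarding and fetch some information from the pod (run curl from a second terminal while the port-forward is active).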

# kubectl -n test port-forward svc/podinfo 9898

Forwarding from 127.0.0.1:9898 -> 9898

# curl 127.0.0.1:9898

{

"hostname": "podinfo-6664bccb6-88rgd",

"version": "1.7.0",

"revision": "4fc593f42c7cd2e7319c83f6bfd3743c05523883",

"color": "blue",

"message": "greetings from podinfo v1.7.0",

"goos": "linux",

"goarch": "amd64",

"runtime": "go1.11.2",

"num_goroutine": "7",

"num_cpu": "2"

}

The reported version is 1.7.0.
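
If you want to check the version from a script rather than by eye, the JSON body can be parsed directly. The helper name `podinfo_version` is hypothetical, and the sample payload is a shortened copy of the response shown above.

```python
import json


def podinfo_version(body: str) -> str:
    """Return the "version" field from a podinfo JSON response body."""
    return json.loads(body)["version"]


# Shortened copy of the response shown above.
sample = """
{
  "hostname": "podinfo-6664bccb6-88rgd",
  "version": "1.7.0",
  "color": "blue",
  "message": "greetings from podinfo v1.7.0"
}
"""

if __name__ == "__main__":
    print(podinfo_version(sample))  # prints 1.7.0
```

With the port-forward running, the body could instead be read with `urllib.request.urlopen("http://127.0.0.1:9898").read().decode()` and passed to the same function.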

Defining the canary release policy

Next, apply a policy that automates the canary release.

apiVersion: flagger.app/v1alpha3
kind: Canary
metadata:
  name: podinfo
  namespace: test
spec:
  targetRef:
    apiVersion: apps/v1
    kind: Deployment
    name: podinfo
  service:
    port: 9898
  canaryAnalysis:
    interval: 10s
    threshold: 5
    stepWeight: 10
    metrics:
    - name: request-success-rate
      threshold: 99
      interval: 1m
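
As a rough reading of the canaryAnalysis settings above, the arithmetic below estimates how long a rollout takes. It follows the rule of thumb commonly given for Flagger (minimum promotion time is interval × (maxWeight / stepWeight), rollback time is interval × threshold). The function name `analysis_timing` is hypothetical, and the maximum weight of 100 is an assumption, since the manifest does not set it.

```python
import math
from typing import Dict


def analysis_timing(
    interval_s: int,
    step_weight: int,
    threshold: int,
    max_weight: int = 100,  # assumption: not set in the manifest above
) -> Dict[str, int]:
    """Estimate step count and timings (in seconds) for a canary analysis."""
    steps = math.ceil(max_weight / step_weight)
    return {
        "steps": steps,
        # Every step must pass one check, and checks run once per interval.
        "min_promotion_s": interval_s * steps,
        # Rollback happens after `threshold` consecutive-interval failed checks.
        "rollback_s": interval_s * threshold,
    }


if __name__ == "__main__":
    # Values from the manifest: interval 10s, stepWeight 10, threshold 5.
    print(analysis_timing(interval_s=10, step_weight=10, threshold=5))
    # prints {'steps': 10, 'min_promotion_s': 100, 'rollback_s': 50}
```

Under these assumptions the canary needs at least ten healthy checks, about 100 seconds, to reach full weight, and about 50 seconds of failing checks to be rolled back.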

Save this manifest as canary.yaml, apply it, and watch what happens.

# kubectl apply -f canary.yaml

canary.flagger.app/podinfo created

# kubectl -n test get ev --watch

<omitted>

24s Warning Synced canary/podinfo Halt advancement podinfo-primary.test waiting for rollout to finish: observed deployment generation less then desired generation

4s Warning Synced canary/podinfo Halt advancement podinfo-primary.test waiting for rollout to finish: 0 of 1 updated replicas are available

0s Warning Synced canary/podinfo Halt advancement podinfo-primary.test waiting for rollout to finish: 0 of 1 updated replicas are available

0s Normal Pulled pod/podinfo-primary-8c85f78c4-99bpn Successfully pulled image "quay.io/stefanprodan/podinfo:1.7.0"

0s Normal Created pod/podinfo-primary-8c85f78c4-99bpn Created container podinfod

0s Normal Started pod/podinfo-primary-8c85f78c4-99bpn Started container podinfod

0s Normal Pulled pod/podinfo-primary-8c85f78c4-99bpn Container image "gcr.io/linkerd-io/proxy:stable-2.6.0" already present on machine

0s Normal Created pod/podinfo-primary-8c85f78c4-99bpn Created container linkerd-proxy

0s Normal Started pod/podinfo-primary-8c85f78c4-99bpn Started container linkerd-proxy

0s Warning Synced canary/podinfo Halt advancement podinfo-primary.test waiting for rollout to finish: 0 of 1 updated replicas are available

0s Normal ScalingReplicaSet deployment/podinfo Scaled down replica set podinfo-6664bccb6 to 0

0s Normal SuccessfulDelete replicaset/podinfo-6664bccb6 Deleted pod: podinfo-6664bccb6-88rgd

0s Normal Killing pod/podinfo-6664bccb6-88rgd Stopping container linkerd-proxy

0s Normal Killing pod/podinfo-6664bccb6-88rgd Stopping container podinfod

0s Normal Synced canary/podinfo Initialization done! podinfo.test

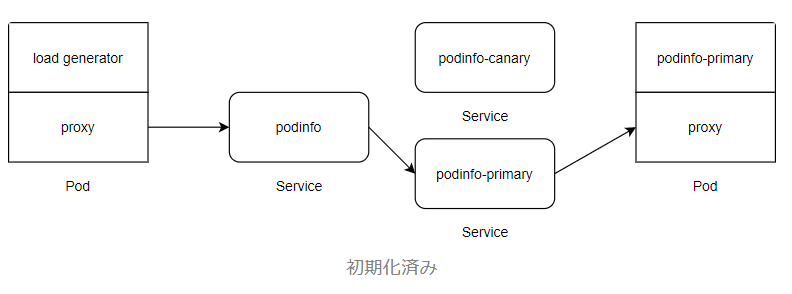

Initialization has finished.

Let's look at the resources.

# kubectl -n test get all

NAME READY STATUS RESTARTS AGE

pod/frontend-66db854c46-vwhbg 2/2 Running 0 24m

pod/load-7fbb5f6f84-8mb99 2/2 Running 0 24m

pod/podinfo-primary-8c85f78c4-99bpn 2/2 Running 0 6m38s

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE

service/frontend ClusterIP 10.100.200.132 <none> 8080/TCP 24m

service/podinfo ClusterIP 10.100.200.12 <none> 9898/TCP 24m

service/podinfo-canary ClusterIP 10.100.200.167 <none> 9898/TCP 6m38s

service/podinfo-primary ClusterIP 10.100.200.23 <none> 9898/TCP 6m38s

NAME READY UP-TO-DATE AVAILABLE AGE

deployment.apps/frontend 1/1 1 1 24m

deployment.apps/load 1/1 1 1 24m

deployment.apps/podinfo 0/0 0 0 24m

deployment.apps/podinfo-primary 1/1 1 1 6m38s

NAME DESIRED CURRENT READY AGE

replicaset.apps/frontend-66db854c46 1 1 1 24m

replicaset.apps/load-7fbb5f6f84 1 1 1 24m

replicaset.apps/podinfo-6664bccb6 0 0 0 24m

replicaset.apps/podinfo-primary-8c85f78c4 1 1 1 6m38s

NAME STATUS WEIGHT LASTTRANSITIONTIME

canary.flagger.app/podinfo Initialized 0 2019-12-14T07:41:25Z

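A podinfo-primary deployment and the podinfo-primary and podinfo-canary services have been added, and the original podinfo deployment has been scaled down to zero.

The application is now in the following state.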

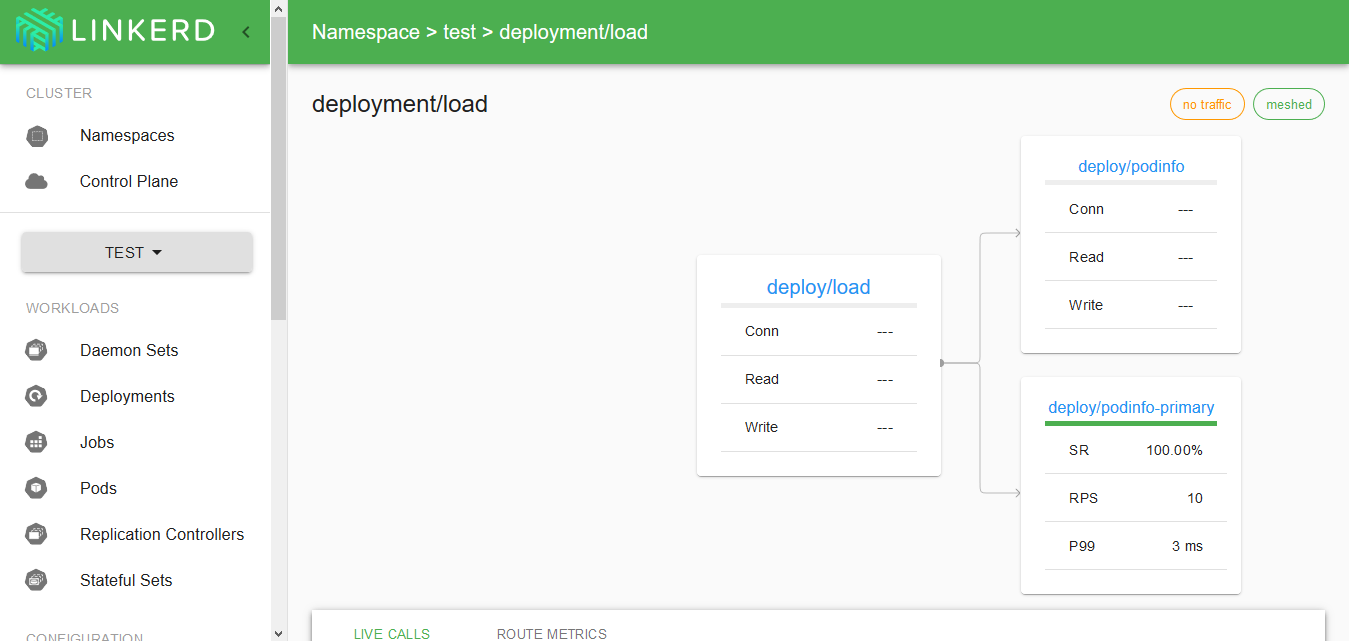

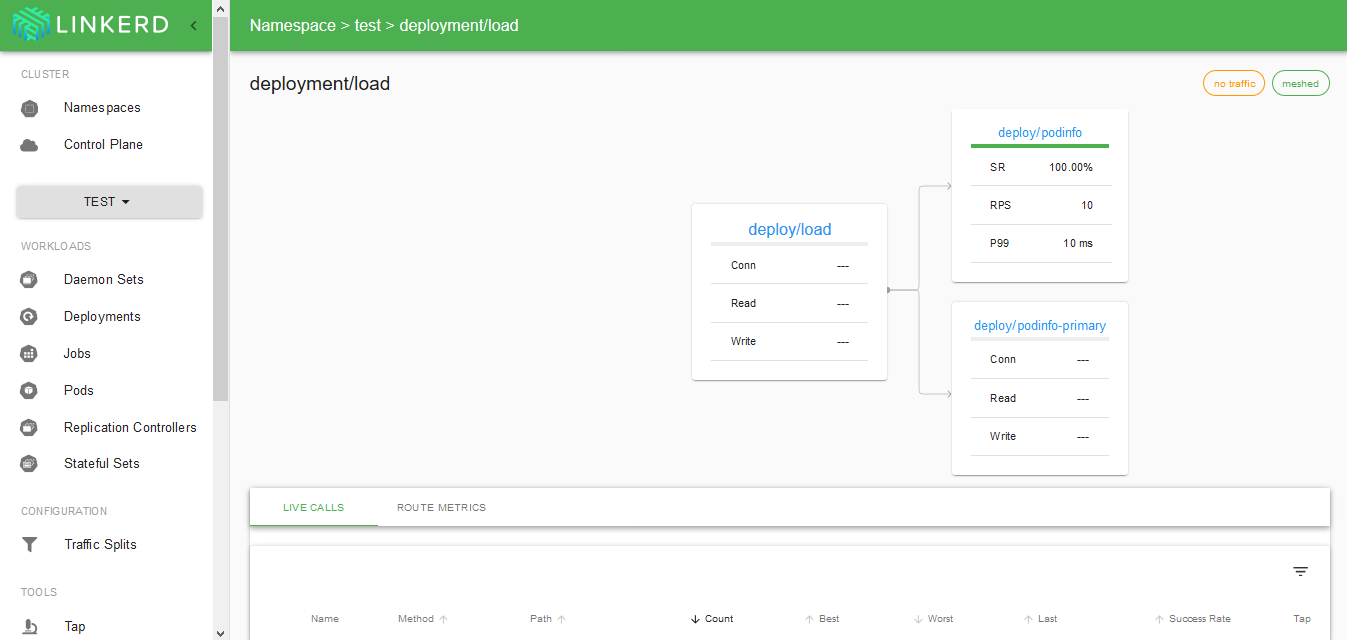

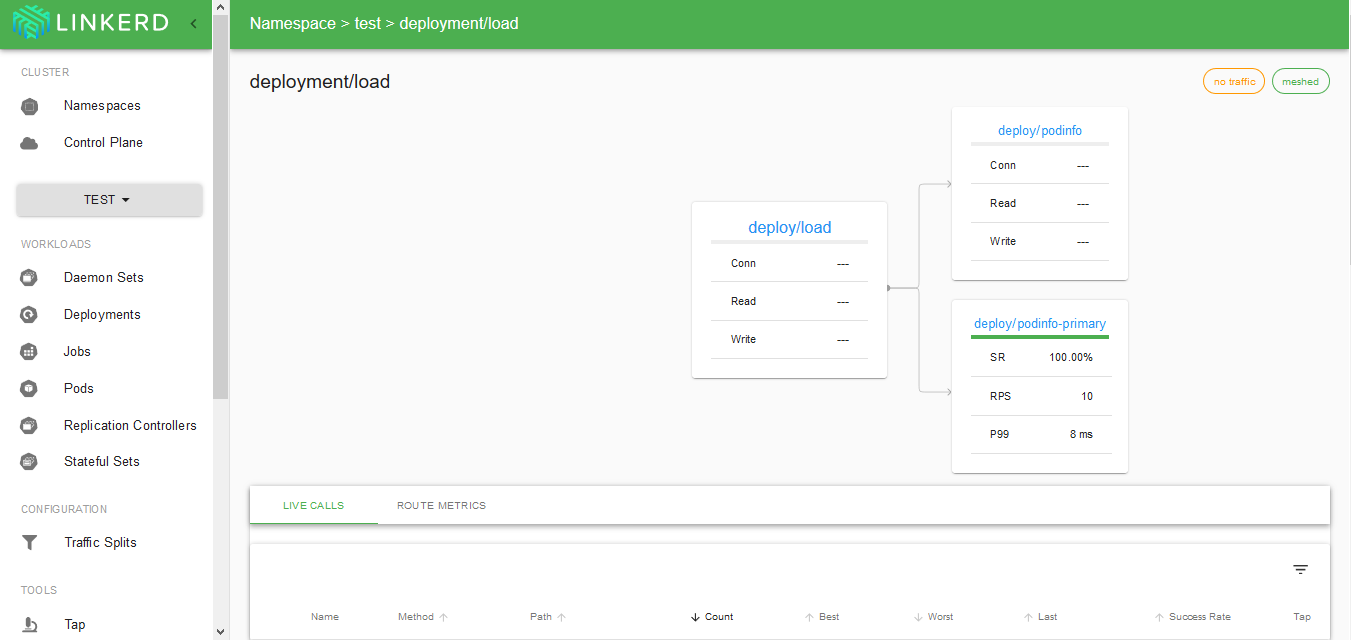

Let's also check it from Linkerd.

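Starting the rollout

The kubectl set image command updates the container image in a Deployment's pod template, which triggers a rolling update of its pods.

Usage:

kubectl set image deployment/<deployment-name> <container-name>=<new-image>[:<tag>]

Use it to deploy the new version.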

# kubectl -n test set image deployment/podinfo podinfod=quay.io/stefanprodan/podinfo:1.7.1
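
When the rollout is triggered from a script, the same command can be assembled as an argument list, which avoids the stray spaces around `=` that break the `<container>=<image>` pair. The helper name `set_image_command` is hypothetical. The block only builds and prints the command and does not contact a cluster.

```python
from typing import List


def set_image_command(namespace: str, deployment: str, container: str, image: str) -> List[str]:
    """Build the argv for `kubectl set image` without running it."""
    return [
        "kubectl", "-n", namespace,
        "set", "image",
        f"deployment/{deployment}",
        f"{container}={image}",  # no spaces around '='
    ]


cmd = set_image_command("test", "podinfo", "podinfod", "quay.io/stefanprodan/podinfo:1.7.1")

if __name__ == "__main__":
    print(" ".join(cmd))
```

To execute it against a cluster, the list could be passed to `subprocess.run(cmd, check=True)`.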

Let's watch how the canary behaves.

# kubectl -n test get ev --watch

1s Normal Synced canary/podinfo New revision detected! Scaling up podinfo.test

1s Normal ScalingReplicaSet deployment/podinfo Scaled up replica set podinfo-5b549f6b9c to 1

0s Normal Pulling pod/podinfo-5b549f6b9c-xmvcw Pulling image "quay.io/stefanprodan/podinfo:1.7.1"

0s Warning Synced canary/podinfo Halt advancement podinfo.test waiting for rollout to finish: 0 of 1 updated replicas are available

0s Warning Synced canary/podinfo Halt advancement podinfo.test waiting for rollout to finish: 0 of 1 updated replicas are available

0s Warning Synced canary/podinfo Halt advancement podinfo.test waiting for rollout to finish: 0 of 1 updated replicas are available

0s Normal Pulled pod/podinfo-5b549f6b9c-xmvcw Successfully pulled image "quay.io/stefanprodan/podinfo:1.7.1"

0s Normal Created pod/podinfo-5b549f6b9c-xmvcw Created container podinfod

0s Normal Started pod/podinfo-5b549f6b9c-xmvcw Started container podinfod

0s Normal Pulled pod/podinfo-5b549f6b9c-xmvcw Container image "gcr.io/linkerd-io/proxy:stable-2.6.0" already present on machine

0s Normal Created pod/podinfo-5b549f6b9c-xmvcw Created container linkerd-proxy

0s Normal Started pod/podinfo-5b549f6b9c-xmvcw Started container linkerd-proxy

0s Normal Synced canary/podinfo Starting canary analysis for podinfo.test

0s Normal Synced canary/podinfo Advance podinfo.test canary weight 10

0s Warning Synced canary/podinfo Halt advancement no values found for metric request-success-rate probably podinfo.test is not receiving traffic

0s Normal Synced canary/podinfo Advance podinfo.test canary weight 20

0s Normal Synced canary/podinfo Advance podinfo.test canary weight 30

0s Normal Synced canary/podinfo Advance podinfo.test canary weight 40

0s Normal Synced canary/podinfo Advance podinfo.test canary weight 50

0s Normal Synced canary/podinfo Advance podinfo.test canary weight 60

0s Normal Synced canary/podinfo Advance podinfo.test canary weight 70

0s Normal Synced canary/podinfo (combined from similar events): Advance podinfo.test canary weight 80

0s Normal Synced canary/podinfo (combined from similar events): Advance podinfo.test canary weight 90

0s Normal Synced canary/podinfo (combined from similar events): Advance podinfo.test canary weight 100

0s Normal Synced canary/podinfo (combined from similar events): Copying podinfo.test template spec to podinfo-primary.test

0s Normal ScalingReplicaSet deployment/podinfo-primary Scaled up replica set podinfo-primary-694659d58d to 1

0s Normal Injected deployment/podinfo-primary Linkerd sidecar proxy injected

0s Normal SuccessfulCreate replicaset/podinfo-primary-694659d58d Created pod: podinfo-primary-694659d58d-lzlkx

0s Normal Scheduled pod/podinfo-primary-694659d58d-lzlkx Successfully assigned test/podinfo-primary-694659d58d-lzlkx to b5db1b15-ec4c-4ae6-a8da-4a2ffb3e4446

0s Normal Pulled pod/podinfo-primary-694659d58d-lzlkx Container image "gcr.io/linkerd-io/proxy-init:v1.2.0" already present on machine

0s Normal Created pod/podinfo-primary-694659d58d-lzlkx Created container linkerd-init

0s Normal Started pod/podinfo-primary-694659d58d-lzlkx Started container linkerd-init

0s Normal Pulling pod/podinfo-primary-694659d58d-lzlkx Pulling image "quay.io/stefanprodan/podinfo:1.7.1"

0s Warning Synced canary/podinfo Halt advancement podinfo-primary.test waiting for rollout to finish: 1 old replicas are pending termination

0s Warning Synced canary/podinfo Halt advancement podinfo-primary.test waiting for rollout to finish: 1 old replicas are pending termination

0s Warning Synced canary/podinfo Halt advancement podinfo-primary.test waiting for rollout to finish: 1 old replicas are pending termination

0s Normal Pulled pod/podinfo-primary-694659d58d-lzlkx Successfully pulled image "quay.io/stefanprodan/podinfo:1.7.1"

0s Normal Created pod/podinfo-primary-694659d58d-lzlkx Created container podinfod

0s Normal Started pod/podinfo-primary-694659d58d-lzlkx Started container podinfod

0s Normal Pulled pod/podinfo-primary-694659d58d-lzlkx Container image "gcr.io/linkerd-io/proxy:stable-2.6.0" already present on machine

0s Normal Created pod/podinfo-primary-694659d58d-lzlkx Created container linkerd-proxy

0s Normal Started pod/podinfo-primary-694659d58d-lzlkx Started container linkerd-proxy

0s Warning Synced canary/podinfo Halt advancement podinfo-primary.test waiting for rollout to finish: 1 old replicas are pending termination

0s Normal ScalingReplicaSet deployment/podinfo-primary Scaled down replica set podinfo-primary-8c85f78c4 to 0

0s Normal SuccessfulDelete replicaset/podinfo-primary-8c85f78c4 Deleted pod: podinfo-primary-8c85f78c4-99bpn

0s Normal Killing pod/podinfo-primary-8c85f78c4-99bpn Stopping container linkerd-proxy

0s Normal Killing pod/podinfo-primary-8c85f78c4-99bpn Stopping container podinfod

0s Normal Synced canary/podinfo (combined from similar events): Routing all traffic to primary

0s Normal ScalingReplicaSet deployment/podinfo Scaled down replica set podinfo-5b549f6b9c to 0

0s Normal Synced canary/podinfo (combined from similar events): Promotion completed! Scaling down podinfo.test

0s Normal SuccessfulDelete replicaset/podinfo-5b549f6b9c Deleted pod: podinfo-5b549f6b9c-xmvcw

0s Normal Killing pod/podinfo-5b549f6b9c-xmvcw Stopping container linkerd-proxy

0s Normal Killing pod/podinfo-5b549f6b9c-xmvcw Stopping container podinfod
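
The weight progression is easy to lose among the pod events. As a small sketch, the lines containing "Advance ... canary weight N" can be filtered out of the event stream with a regular expression. The function name `canary_weights` is hypothetical, and the sample lines are copied from the log above.

```python
import re
from typing import Iterable, List

# Matches event messages such as "Advance podinfo.test canary weight 10".
WEIGHT_RE = re.compile(r"Advance \S+ canary weight (\d+)")


def canary_weights(event_lines: Iterable[str]) -> List[int]:
    """Return the canary weights in the order they appear in the event lines."""
    weights: List[int] = []
    for line in event_lines:
        match = WEIGHT_RE.search(line)
        if match:
            weights.append(int(match.group(1)))
    return weights


events = [
    "0s Normal Synced canary/podinfo Advance podinfo.test canary weight 10",
    "0s Warning Synced canary/podinfo Halt advancement no values found for metric "
    "request-success-rate probably podinfo.test is not receiving traffic",
    "0s Normal Synced canary/podinfo Advance podinfo.test canary weight 20",
    "0s Normal Synced canary/podinfo (combined from similar events): "
    "Advance podinfo.test canary weight 80",
]

if __name__ == "__main__":
    print(canary_weights(events))  # prints [10, 20, 80]
```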

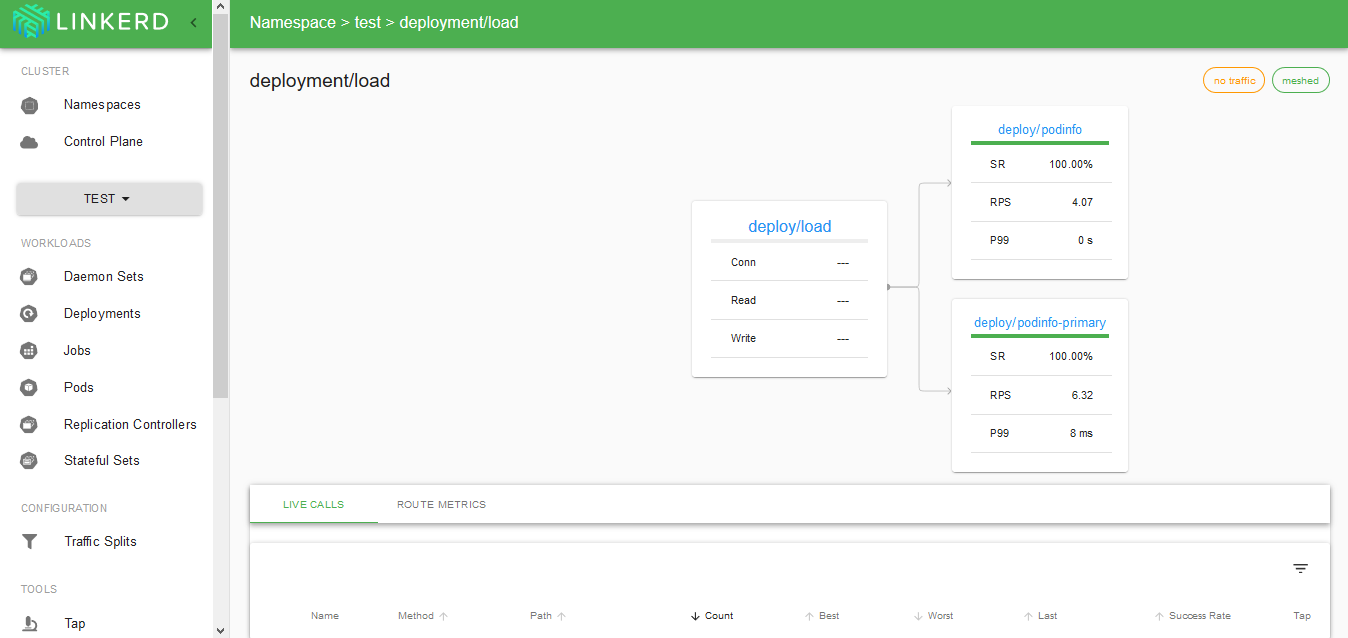

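Let's monitor the behavior with Linkerd.

- Traffic starts flowing to the updated deploy/podinfo as well.

- All traffic goes to the new version only.

- The new version is redeployed as the primary pod, and deploy/podinfo is scaled back down.

This is the sequence that was observed.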

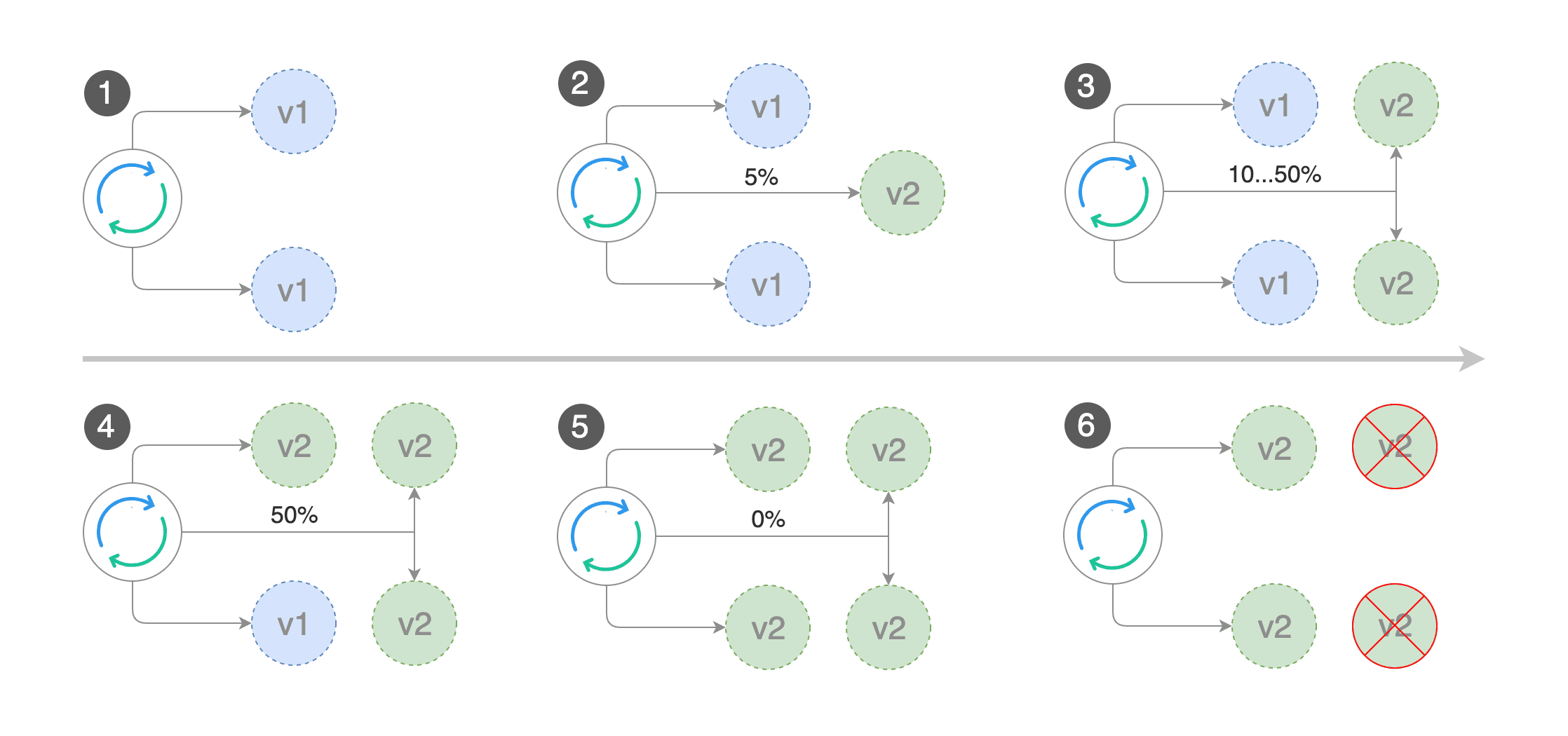

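With a larger number of replicas, for example, the rollout appears to behave as shown in the accompanying image.

The following command also confirms that the canary release succeeded.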

# watch kubectl -n test get canary

Every 2.0s: kubectl -n test get canary Sat Dec 14 17:28:16 2019

NAME STATUS WEIGHT LASTTRANSITIONTIME

podinfo Succeeded 0 2019-12-14T08:20:55Z
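
For an automated check, the table printed by `kubectl -n test get canary` can be turned into dictionaries keyed by column name. This is a minimal sketch that assumes whitespace-separated columns with no spaces inside values, as in the output above. The function name `parse_canary_table` is hypothetical, and the header line added by `watch` must not be included in the input.

```python
from typing import Dict, List


def parse_canary_table(text: str) -> List[Dict[str, str]]:
    """Parse `kubectl get canary` table output into one dict per row."""
    lines = [line for line in text.splitlines() if line.strip()]
    header = lines[0].split()
    return [dict(zip(header, line.split())) for line in lines[1:]]


# Output copied from the transcript above.
table = """
NAME STATUS WEIGHT LASTTRANSITIONTIME
podinfo Succeeded 0 2019-12-14T08:20:55Z
"""

rows = parse_canary_table(table)

if __name__ == "__main__":
    for row in rows:
        print(row["NAME"], row["STATUS"])  # prints podinfo Succeeded
```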

Now set up port forwarding as before and check the result.

# kubectl -n test port-forward svc/podinfo 9898

Forwarding from 127.0.0.1:9898 -> 9898

# curl 127.0.0.1:9898

{

"hostname": "podinfo-primary-694659d58d-lzlkx",

"version": "1.7.1",

"revision": "c9dc78f29c5087e7c181e58a56667a75072e6196",

"color": "blue",

"message": "greetings from podinfo v1.7.1",

"goos": "linux",

"goarch": "amd64",

"runtime": "go1.11.12",

"num_goroutine": "7",

"num_cpu": "2"

}

The version has indeed been upgraded to 1.7.1.