Overview

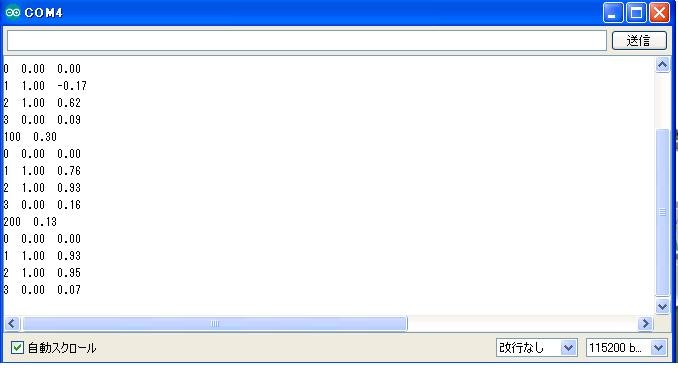

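I tried solving the XOR problem on a Wemos D1 with a small neural network (2 inputs, 8 tanh hidden units, 1 linear output) trained by backpropagation.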

Photo

Sample code
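
As a reading aid, the computation performed by `forward`, `WeightChangesHO`, and `WeightChangesIH` in the listing can be summarized as follows. The symbols are my own notation, not the author's: $x_j$ is an input, $t$ the target, $w^{IH}$ and $w^{HO}$ stand for `wih` and `who`, and $\eta_{IH}$, $\eta_{HO}$ stand for `LR_IH` (0.7) and `LR_HO` (0.07).

```latex
\begin{aligned}
h_i &= \tanh\Big(\sum_{j=1}^{2} x_j\, w^{IH}_{ji}\Big), \qquad i = 1,\dots,8 \\
\hat{y} &= \sum_{i=1}^{8} h_i\, w^{HO}_i \\
e &= \hat{y} - t, \qquad E = \tfrac{1}{2}\, e^2 \\
w^{HO}_i &\leftarrow w^{HO}_i - \eta_{HO}\, e\, h_i \\
w^{IH}_{ji} &\leftarrow w^{IH}_{ji} - \eta_{IH}\, e\, w^{HO}_i\, (1 - h_i^2)\, x_j
\end{aligned}
```

Two details of the listing are not captured by these formulas: each `who` weight is clipped to the range [-5, 5] after its update, and the input-to-hidden update runs second, so it sees the already updated `who`. The network has no bias terms, so the input (0, 0) always produces an output of 0.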

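#include <math.h>
#include <stdlib.h>  // rand()

#define NPATTERNS 4
#define NHIDDEN 8
// Pseudo-random value in [-1, 1); the outer parentheses keep the macro safe inside larger expressions.
#define randomdef ((double) ((rand() % 2000) - 1000) / 1000)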

// XOR input patterns.
double trainInputs[NPATTERNS][2] = {
  {0, 0},
  {1, 0},
  {0, 1},
  {1, 1}
};

// Expected XOR output for each pattern above.
static double trainOutputs[] = {
  0,
  1,
  1,
  0
};

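static int numEpochs = 200;
static int numInputs = 2;
static int numPatterns = NPATTERNS;
static double LR_IH = 0.7;      // learning rate, input -> hidden
static double LR_HO = 0.07;     // learning rate, hidden -> output
static double h[NHIDDEN];       // hidden-layer activations
static double wih[4][NHIDDEN];  // input -> hidden weights (only the first numInputs rows are used)
static double who[NHIDDEN];     // hidden -> output weights
static int patNum;              // index of the pattern currently being processed
static double error;
static double outPred;
static double RMSerror;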

// Forward pass: tanh hidden layer followed by a linear output unit.
double forward(double state[])
{
  int i, j;
  for (i = 0; i < NHIDDEN; i++)
  {
    h[i] = 0.0;
    for (j = 0; j < numInputs; j++)
    {
      h[i] = h[i] + (state[j] * wih[j][i]);
    }
    h[i] = tanh(h[i]);
  }
  double sum = 0.0;
  for (i = 0; i < NHIDDEN; i++)
  {
    sum += h[i] * who[i];
  }
  return sum;
}

// Update the hidden -> output weights and clip them to [-5, 5].
void WeightChangesHO(double error)
{
  int k;
  for (k = 0; k < NHIDDEN; k++)
  {
    double weightChange = LR_HO * error * h[k];
    who[k] = who[k] - weightChange;
    if (who[k] < -5) who[k] = -5;
    else if (who[k] > 5) who[k] = 5;
  }
}

// Update the input -> hidden weights; (1 - h * h) is the derivative of tanh.
void WeightChangesIH(double error)
{
  int i, k;
  for (i = 0; i < NHIDDEN; i++)
  {
    double gradient = (1 - (h[i]) * h[i]) * who[i] * error * LR_IH;
    for (k = 0; k < numInputs; k++)
    {
      double weightChange = gradient * trainInputs[patNum][k];
      wih[k][i] = wih[k][i] - weightChange;
    }
  }
}

// One backpropagation step for the current pattern.
void backprop(double push, double target)
{
  error = push - target;
  WeightChangesHO(error);
  WeightChangesIH(error);
}

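// Hand-written tanh that saturates for |x| > 20.
// It has the same name and signature as the tanh declared in math.h.
double tanh(double x)
{
  if (x > 20) return 1;
  else if (x < -20) return -1;
  else
  {
    double a = exp(x);
    double b = exp(-x);
    return (a - b) / (a + b);
  }
}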

// Run the network on one training pattern and return its output.
double test(int patternNumber)
{
  patNum = patternNumber;
  return forward(trainInputs[patNum]);
}

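// Root-mean-square error over all training patterns.
void calcOverallError()
{
  int i;
  RMSerror = 0.0;
  for (i = 0; i < numPatterns; i++)
  {
    patNum = i;
    // Compute this pattern's own error rather than reusing the last training error.
    double e = forward(trainInputs[patNum]) - trainOutputs[patNum];
    RMSerror = RMSerror + (e * e);
  }
  RMSerror = RMSerror / numPatterns;
  RMSerror = sqrt(RMSerror);
}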

// Print "pattern target prediction" for every training pattern.
void testAll()
{
  int i;
  double push;
  for (i = 0; i < numPatterns; i++)
  {
    push = test(i);
    Serial.print(patNum);
    Serial.print(" ");
    Serial.print(trainOutputs[patNum]);
    Serial.print(" ");
    Serial.println(push);
  }
}

static void train()
{
  int i, j;
  double push;
  // j runs from 0 to numEpochs inclusive so that the final epoch is also reported.
  for (j = 0; j <= numEpochs; j++)
  {
    for (i = 0; i < numPatterns; i++)
    {
      // Pick a random training pattern; the index must stay in [0, numPatterns).
      patNum = rand() % numPatterns;
      push = forward(trainInputs[patNum]);
      backprop(push, trainOutputs[patNum]);
    }
    calcOverallError();
    if (j % 100 == 0)
    {
      Serial.print(j);
      Serial.print(" ");
      Serial.println(RMSerror);
      testAll();
    }
    delay(1);  // brief pause once per epoch
  }
}

// Initialize all weights with small pseudo-random values.
void initWeights()
{
  int i, j;
  for (j = 0; j < NHIDDEN; j++)
  {
    who[j] = (randomdef - 0.5) / 2;
    for (i = 0; i < numInputs; i++)
    {
      wih[i][j] = (randomdef - 0.5) / 5;
    }
  }
}

void setup()
{
  Serial.begin(115200);
  while (!Serial) delay(250);
  Serial.print("ok");
  // Print a few random samples as a quick sanity check.
  Serial.println(randomdef);
  Serial.println(randomdef);
  Serial.println(randomdef);
  Serial.println(randomdef);
  initWeights();
  train();
}

// Training runs once in setup(); nothing is left to do here.
void loop()
{
  delay(5000);
}
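
The update rules in `WeightChangesHO` and `WeightChangesIH` can be checked on a desktop compiler without the board. The following is a minimal sketch, not part of the original program: it assumes the loss for one pattern is E = 0.5 * (output - target)^2, and the names `Net`, `forwardPass`, `loss`, `makeTestNet`, and `maxGradientMismatch` as well as the fixed test weights are hypothetical. It compares the analytic gradients used in the listing against central finite differences.

```cpp
#include <algorithm>
#include <cmath>

// Hypothetical host-side mirror of the sketch's 2-input, 8-hidden, 1-output network.
const int kInputs = 2;
const int kHidden = 8;

struct Net {
    double wih[kInputs][kHidden];  // input -> hidden weights
    double who[kHidden];           // hidden -> output weights
};

// Same structure as forward(): tanh hidden layer, linear output, no biases.
double forwardPass(const Net &net, const double x[kInputs], double h[kHidden]) {
    double out = 0.0;
    for (int i = 0; i < kHidden; i++) {
        double sum = 0.0;
        for (int j = 0; j < kInputs; j++) sum += x[j] * net.wih[j][i];
        h[i] = std::tanh(sum);
        out += h[i] * net.who[i];
    }
    return out;
}

// Assumed per-pattern loss: E = 0.5 * (y - t)^2.
double loss(const Net &net, const double x[kInputs], double target) {
    double h[kHidden];
    double e = forwardPass(net, x, h) - target;
    return 0.5 * e * e;
}

// Arbitrary fixed weights, chosen only to make the check deterministic.
Net makeTestNet() {
    Net net;
    for (int i = 0; i < kHidden; i++) {
        net.who[i] = 0.1 * (i + 1) - 0.45;
        for (int j = 0; j < kInputs; j++) net.wih[j][i] = 0.05 * (i - 3) + 0.2 * j;
    }
    return net;
}

// Largest absolute gap between the analytic gradients and central differences.
double maxGradientMismatch(const double x[kInputs], double target) {
    Net net = makeTestNet();
    double h[kHidden];
    double e = forwardPass(net, x, h) - target;
    const double eps = 1e-6;
    double worst = 0.0;
    for (int i = 0; i < kHidden; i++) {
        // Hidden -> output: dE/dwho[i] = e * h[i]
        Net plus = net, minus = net;
        plus.who[i] += eps;
        minus.who[i] -= eps;
        double numeric = (loss(plus, x, target) - loss(minus, x, target)) / (2 * eps);
        worst = std::max(worst, std::fabs(numeric - e * h[i]));
        for (int j = 0; j < kInputs; j++) {
            // Input -> hidden: dE/dwih[j][i] = e * who[i] * (1 - h[i]^2) * x[j]
            plus = net;
            minus = net;
            plus.wih[j][i] += eps;
            minus.wih[j][i] -= eps;
            numeric = (loss(plus, x, target) - loss(minus, x, target)) / (2 * eps);
            double analytic = e * net.who[i] * (1 - h[i] * h[i]) * x[j];
            worst = std::max(worst, std::fabs(numeric - analytic));
        }
    }
    return worst;
}
```

Calling `maxGradientMismatch` from a `main` function with an XOR pattern such as `{1.0, 1.0}` and target `0.0` should return a value very close to zero if the formulas agree with the assumed loss. The learning rates are left out on purpose, since they only scale the step and do not affect the gradient itself.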

That's all.