Overview

I built a simple autoencoder on MNIST using only the Keras backend (`K`) functions, without the `Model` or layer APIs.

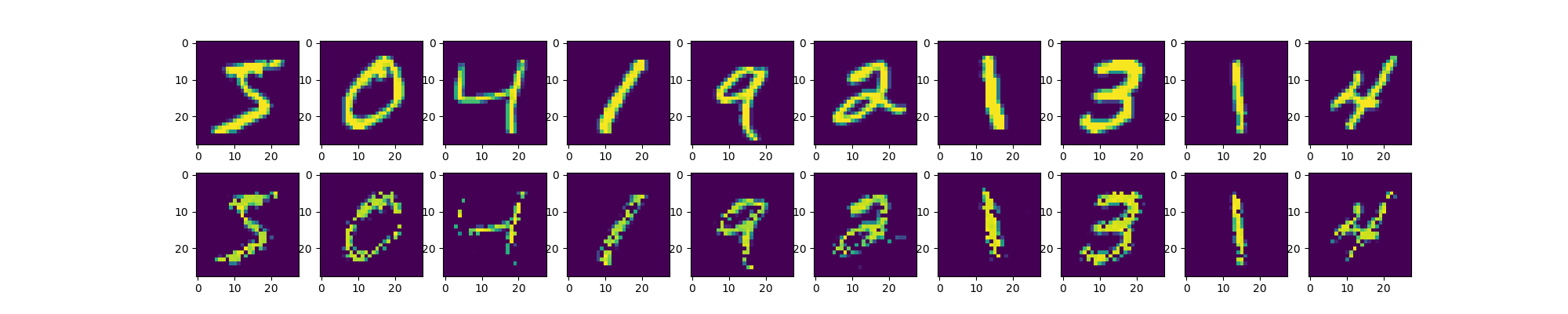

実行結果

Sample code

# NOTE: tensorflow.contrib exists only in TensorFlow 1.x; it was removed in TensorFlow 2.0.
from tensorflow.contrib.keras.python.keras import backend as K
from tensorflow.contrib.keras.python.keras.optimizers import SGD, Adam, RMSprop, Adagrad, Adadelta
from tensorflow.contrib.keras.python.keras.datasets import mnist
import numpy as np
import matplotlib.pyplot as plt

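# Load MNIST; the labels are discarded because an autoencoder reconstructs its own input.
(x_train, _), (x_test, _) = mnist.load_data()
# Scale pixel values from [0, 255] to [0, 1].
x_train = x_train.astype('float32') / 255.
x_test = x_test.astype('float32') / 255.
# Flatten each 28x28 image into a 784-dimensional vector.
x_train = x_train.reshape((len(x_train), np.prod(x_train.shape[1:])))
x_test = x_test.reshape((len(x_test), np.prod(x_test.shape[1:])))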

input_dim = 784
output_dim = 784
hidden_dim = 32

# Placeholders for a batch of inputs and the reconstruction targets.
x = K.placeholder(shape=(None, input_dim), name="x")
ytrue = K.placeholder(shape=(None, output_dim), name="y")

# Weights and biases, initialised uniformly between 0 and 1.
W1 = K.random_uniform_variable((input_dim, hidden_dim), 0, 1, name="W1")
W2 = K.random_uniform_variable((hidden_dim, output_dim), 0, 1, name="W2")
b1 = K.random_uniform_variable((hidden_dim,), 0, 1, name="b1")
b2 = K.random_uniform_variable((output_dim,), 0, 1, name="b2")
params = [W1, b1, W2, b2]

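# Encoder and decoder: one dense layer each, both followed by ReLU.
hidden = K.relu(K.dot(x, W1) + b1)
ypred = K.relu(K.dot(hidden, W2) + b2)
# Per-sample mean squared error (one value per row of the batch).
loss = K.mean(K.square(ypred - ytrue), axis=-1)
opt = RMSprop()
# The empty list is the constraints argument of the older
# get_updates(params, constraints, loss) signature.
updates = opt.get_updates(params, [], loss)
# train runs one optimisation step and returns the loss; test returns the reconstruction.
train = K.function(inputs=[x, ytrue], outputs=[loss], updates=updates)
test = K.function(inputs=[x], outputs=[ypred])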

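# Train on the first 10 training images only, one sample per step.
for ep in range(10000):
    for i in range(10):
        st = train([[x_train[i]], [x_train[i]]])
    # Every 1000 epochs, print the loss of the last sample trained.
    if ep % 1000 == 0:
        print(ep, st[0])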

n = 10
plt.figure(figsize=(20, 4))
for i in range(n):
    # Top row: original image.
    ax = plt.subplot(2, n, i + 1)
    plt.imshow(x_train[i].reshape(28, 28))
    # Bottom row: reconstruction produced by the autoencoder.
    ax = plt.subplot(2, n, i + 1 + n)
    img = test([[x_train[i]]])
    plt.imshow(img[0].reshape(28, 28))
plt.savefig("auto0.png")
plt.show()
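For anyone who wants to check the shapes without a TensorFlow 1.x install, here is a rough NumPy-only sketch of the same forward pass and loss. It is not the code I ran above: the helper names `relu`, `forward` and `per_sample_mse` are my own, and random data stands in for MNIST as an assumption so the snippet runs offline. It covers only the forward computation, not the RMSprop updates.

```python
import numpy as np


def relu(z):
    """Element-wise max(z, 0), the NumPy counterpart of K.relu."""
    return np.maximum(z, 0.0)


def forward(x, W1, b1, W2, b2):
    """Encode a batch into the hidden layer, then decode it back."""
    hidden = relu(x @ W1 + b1)      # shape (batch, hidden_dim)
    ypred = relu(hidden @ W2 + b2)  # shape (batch, output_dim)
    return hidden, ypred


def per_sample_mse(ypred, ytrue):
    """Mean squared error over the last axis: one loss value per sample."""
    return np.mean(np.square(ypred - ytrue), axis=-1)


# Hypothetical stand-in data (assumption): random values in place of MNIST.
rng = np.random.default_rng(0)
input_dim, hidden_dim, output_dim = 784, 32, 784
x = rng.uniform(0.0, 1.0, size=(4, input_dim)).astype("float32")

# Same initialisation range as the script: uniform between 0 and 1.
W1 = rng.uniform(0.0, 1.0, size=(input_dim, hidden_dim))
b1 = rng.uniform(0.0, 1.0, size=(hidden_dim,))
W2 = rng.uniform(0.0, 1.0, size=(hidden_dim, output_dim))
b2 = rng.uniform(0.0, 1.0, size=(output_dim,))

hidden, ypred = forward(x, W1, b1, W2, b2)
loss = per_sample_mse(ypred, x)  # the autoencoder target is its own input

print(hidden.shape, ypred.shape, loss.shape)  # (4, 32) (4, 784) (4,)
```

Because the mean is taken over the last axis only, the loss has one entry per sample. In the training loop above each step feeds a single image, so `st[0]` is a one-element array rather than a scalar.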

That's all.