import numpy as np

f = lambda x: x**2 + 2 * x + 1

g = lambda x: x**4 - 4 * x**3 - 36 * x**2

%matplotlib inline

import matplotlib.pyplot as plt

x_latent = np.linspace(-5, 5, 100)

plt.plot(x_latent, f(x_latent))

plt.grid()

def numerical_differentiation(f, x):
    # Central-difference approximation of f'(x) with a fixed step h.
    h = 1e-4
    return (f(x + h) - f(x - h)) / (2 * h)
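As a quick sanity check on the central-difference formula, the sketch below compares it with the derivative of `f` worked out by hand, f'(x) = 2x + 2. The helper `analytic_df` and the sample points in `xs` are assumptions introduced here for illustration; they are not part of the original notebook.

```python
import numpy as np

f = lambda x: x**2 + 2 * x + 1

def numerical_differentiation(f, x):
    # Central difference, same form as in the notebook.
    h = 1e-4
    return (f(x + h) - f(x - h)) / (2 * h)

# Hypothetical helper: the exact derivative of f, derived by hand.
analytic_df = lambda x: 2 * x + 2

# Arbitrary sample points chosen for this check.
xs = np.array([-3.0, -1.0, 0.0, 2.5])
max_abs_error = np.max(np.abs(numerical_differentiation(f, xs) - analytic_df(xs)))
print(max_abs_error)
```

For a quadratic, the central difference has no truncation error, so the only discrepancy should be floating-point rounding.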

grad_history = []

x_history = []

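def gradient_decent(f, init_x, lr=0.01, step_num=300):
    # Plain gradient descent from init_x. Each step is recorded in the
    # module-level grad_history and x_history lists.
    x = init_x
    for i in range(step_num):
        grad = numerical_differentiation(f, x)
        grad_history.append(grad)
        x = x - lr * grad
        x_history.append(x)
    return x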
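The function above appends to global lists, which is why those lists are reset before each run. A minimal sketch of an alternative that returns its own histories is shown below. The name `gradient_descent_with_history` and its return shape are assumptions made for this example, not something defined in the notebook.

```python
def numerical_differentiation(f, x):
    # Central difference, same form as in the notebook.
    h = 1e-4
    return (f(x + h) - f(x - h)) / (2 * h)

def gradient_descent_with_history(f, init_x, lr=0.01, step_num=300):
    # Hypothetical variant: keeps the histories local and returns them,
    # so repeated runs cannot mix their records.
    x = init_x
    x_hist, grad_hist = [], []
    for _ in range(step_num):
        grad = numerical_differentiation(f, x)
        x = x - lr * grad
        grad_hist.append(grad)
        x_hist.append(x)
    return x, x_hist, grad_hist

f = lambda x: x**2 + 2 * x + 1
x_opt, x_hist, grad_hist = gradient_descent_with_history(f, 0.0)
print(x_opt, f(x_opt))
```

With this shape, each call produces fresh lists that can be passed straight to `plt.plot`.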

grad_history = []

x_history = []

x_optimum = gradient_decent(f, 0)

minimum = f(x_optimum)

x_optimum, minimum

(-0.997667494332044, 5.4405826910297606e-06)

plt.plot(grad_history)

plt.grid()

plt.plot(x_history)

plt.grid()

grad_history = []

x_history = []

x_optimum = gradient_decent(f, 0, lr=0.1)

minimum = f(x_optimum)

x_optimum, minimum

(-1.0000000000000564, 0.0)

plt.plot(grad_history)

plt.grid()

plt.plot(x_history)

plt.grid()

x_latent = np.linspace(-8, 10, 100)

plt.plot(x_latent, g(x_latent))

plt.grid()

grad_history = []

x_history = []

x_optimum = gradient_decent(g, 0)

minimum = g(x_optimum)

x_optimum, minimum

(5.106003984774603, -791.3346632735172)

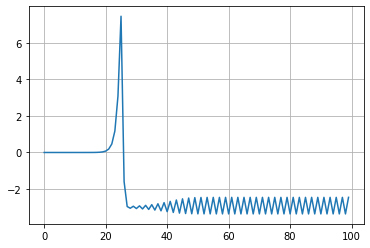

plt.plot(grad_history)

plt.grid()

plt.plot(x_history)

plt.grid()

grad_history = []

x_history = []

x_optimum = gradient_decent(g, 0, lr=0.02, step_num=100)

minimum = g(x_optimum)

x_optimum, minimum

(-2.4538370070007725, -121.40981830661639)

plt.plot(grad_history)

plt.grid()

plt.plot(x_history)

plt.grid()
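Neither value returned for `g` above sits at a stationary point. Differentiating by hand gives g'(x) = 4x(x - 6)(x + 3), so the local minima are at x = -3 (g = -135) and x = 6 (g = -864). The sketch below checks this with `np.poly1d`. It also applies the standard local stability condition for fixed-step gradient descent near a minimum, `lr * g''(x) < 2`. Using that condition as a diagnostic is an assumption of this example, not something the notebook does.

```python
import numpy as np

# g(x) = x^4 - 4x^3 - 36x^2 as a polynomial object (coefficients high to low).
g = np.poly1d([1, -4, -36, 0, 0])
dg = g.deriv()    # g'(x)  = 4x^3 - 12x^2 - 72x
d2g = dg.deriv()  # g''(x) = 12x^2 - 24x - 72

# Stationary points are the real roots of g'.
critical_points = np.sort(dg.roots.real)

for lr in (0.01, 0.02):
    for x in critical_points:
        curvature = d2g(x)
        if curvature > 0:  # positive curvature marks a local minimum
            # Near a minimum the update behaves like x <- x - lr * g''(x*) * (x - x*),
            # which contracts only if lr * g''(x*) < 2.
            stable = lr * curvature < 2
            print(f"lr={lr}: x*={x:.3f}, g(x*)={g(x):.1f}, "
                  f"lr*g''={lr * curvature:.2f}, stable={stable}")
```

Under this check, `lr=0.01` is too large to settle at x = 6 and `lr=0.02` is too large for either minimum. That is consistent with the reported results lying near, but not at, a minimum. A smaller learning rate would likely be needed for the iterates to converge.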