Reference

This post tests downloading from IBM Cloud Object Storage (S3), focusing on the tools listed in the following article.

Moving data from COS to File or Block Storage - developerWorks Recipes

Overview

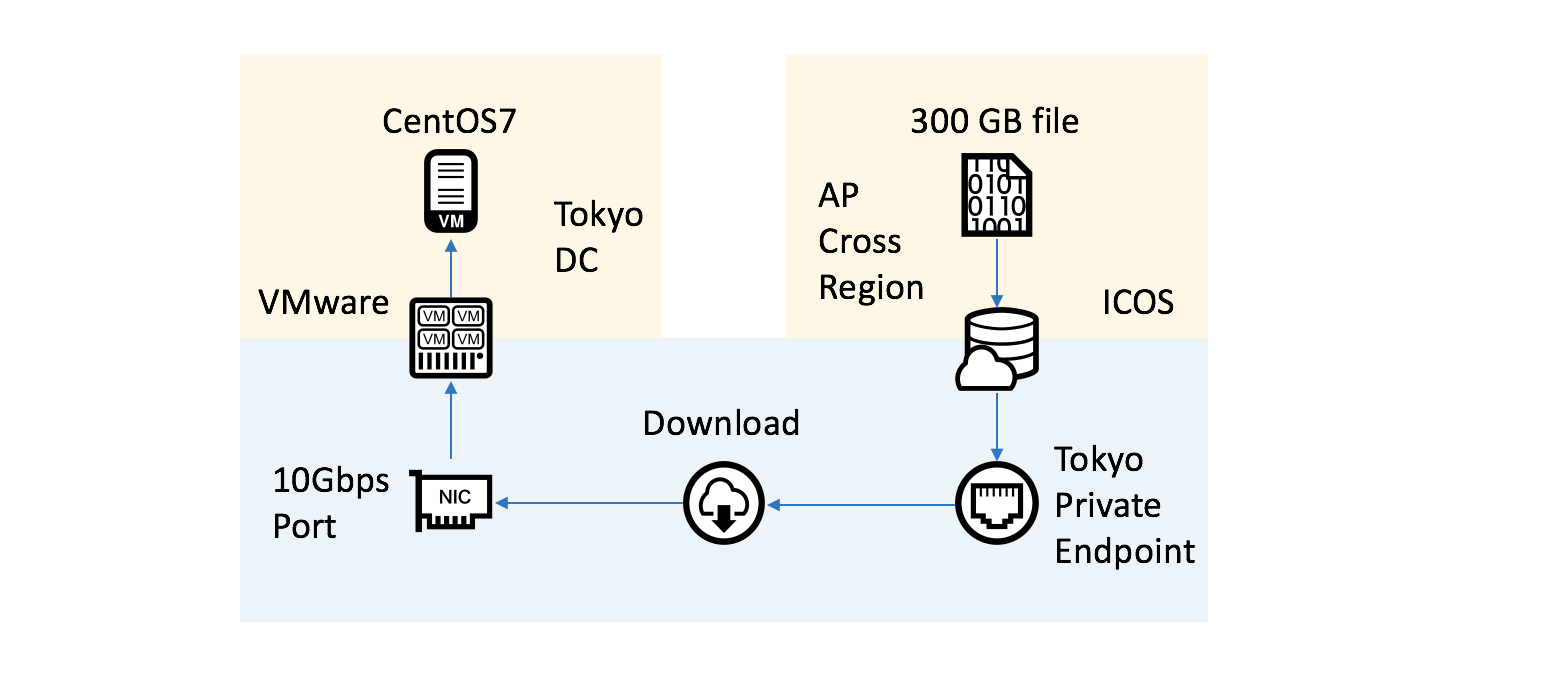

The downloads run on a CentOS 7 host on bare metal (VMware) in the Tokyo data center, and the goal is to see how fast each tool can transfer a 300 GB file.
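The measurement idea can be sketched as a small shell wrapper. The article itself reads the rates from each tool's own output, so this is only an illustrative alternative: `measure_throughput` is a hypothetical helper name, and it assumes GNU `date` and GNU `stat` as found on CentOS 7.

```shell
# Hypothetical helper: run a download command, then report the average rate
# in MB/s (10^6 bytes per second) based on the size of the resulting file.
# Usage: measure_throughput FILE COMMAND [ARGS...]
measure_throughput() {
  local file="$1" start end size
  shift
  start=$(date +%s.%N)                     # GNU date: seconds with nanoseconds
  "$@" >&2 || return 1                     # send the tool's own output to stderr
  end=$(date +%s.%N)
  size=$(stat -c %s "$file") || return 1   # GNU stat: file size in bytes
  awk -v b="$size" -v s="$start" -v e="$end" 'BEGIN {
    d = e - s
    if (d <= 0) d = 0.000001               # guard against a zero-length interval
    printf "%.2f MB/s\n", b / d / 1000000
  }'
}

# Example (not run here), wrapping the aws cli command used later in this article:
# measure_throughput /home/temp_300GB_file \
#   aws --endpoint-url https://s3.tok-ap-geo.objectstorage.service.networklayer.com \
#   s3 cp s3://khayama-test2/temp_300GB_file /home/
```

A wrapper like this reports an average over the whole transfer, so it will not match a tool's instantaneous progress figure exactly.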

1. rclone

Version

rclone --version

rclone v1.43-116-g22ac80e8-beta

- os/arch: linux/amd64

- go version: go1.11

Configuration

cat /root/.config/rclone/rclone.conf

[icos]

type = s3

provider = IBMCOS

env_auth = false

access_key_id = ACCESS_KEY_ID

secret_access_key = SECRET_ACCESS_KEY

endpoint = s3.tok-ap-geo.objectstorage.service.networklayer.com

location_constraint = ap-flex

acl = private

Download

rclone reported a transfer rate of about 61.036 MB/s.

rclone copy icos:khayama-test2/temp_300GB_file . -vv

2018/09/28 13:50:42 DEBUG : rclone: Version "v1.43-116-g22ac80e8-beta" starting with parameters ["rclone" "copy" "icos:khayama-test2/temp_300GB_file" "." "-vv"]

2018/09/28 13:50:42 DEBUG : Using config file from "/root/.config/rclone/rclone.conf"

2018/09/28 13:50:42 DEBUG : pacer: Reducing sleep to 0s

2018/09/28 13:50:43 DEBUG : temp_300GB_file: Couldn't find file - need to transfer

…

2018/09/28 14:01:42 INFO :

Transferred: 38.756G / 300 GBytes, 13%, 60.059 MBytes/s, ETA 1h14m14s

Errors: 0

Checks: 0 / 0, -

Transferred: 0 / 1, 0%

Elapsed time: 11m0.7s

Transferring:

temp_300GB_file: 12% /300G, 61.036M/s, 1h13m2s

Caveats

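As of 2018/10/3, rclone has a bug where downloading a file of 300 GB or larger from ICOS stalls somewhere between 10% and 20% of the way through.

If you work with large files, confirm that the bug has been fixed before relying on rclone.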
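One simple way to notice a stalled or truncated download is to compare the local file size with the expected object size. The sketch below is an assumption-laden illustration rather than anything from the rclone documentation: `size_matches` is a hypothetical helper, and it relies on GNU `stat`. A matching size does not prove the content is intact; it only catches incomplete transfers.

```shell
# Hypothetical helper: succeed only if FILE is exactly EXPECTED_BYTES long.
# Uses GNU stat (-c %s), as shipped with CentOS 7.
size_matches() {
  local file="$1" expected="$2" actual
  actual=$(stat -c %s "$file") || return 2   # 2 = file missing or unreadable
  [ "$actual" -eq "$expected" ]
}

# Example (not run here): the test object is 300 GiB = 322122547200 bytes.
# size_matches ./temp_300GB_file 322122547200 && echo "size OK" || echo "incomplete"
```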

2. s3fs

Version

s3fs --version

Amazon Simple Storage Service File System V1.84(commit:c5af62b) with OpenSSL

Copyright (C) 2010 Randy Rizun <rrizun@gmail.com>

License GPL2: GNU GPL version 2 <http://gnu.org/licenses/gpl.html>

This is free software: you are free to change and redistribute it.

There is NO WARRANTY, to the extent permitted by law.

Configuration

echo ACCESS_KEY_ID:SECRET_ACCESS_KEY > ~/.passwd-s3fs

chmod 600 ~/.passwd-s3fs

s3fs khayama-test2 /mnt/bucket -o passwd_file=~/.passwd-s3fs -o allow_other,url=https://s3.tok-ap-geo.objectstorage.service.networklayer.com

Download

The reported rate is 54.6 MiB/s, which is about 57.25 MB/s.

cp /mnt/bucket/temp_300GB_file . -v

'/mnt/bucket/temp_300GB_file' -> './temp_300GB_file'

1 files (300.0 GiB) copied in 5630.5 seconds (54.6 MiB/s).
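The MiB/s to MB/s conversion used in this article is plain arithmetic: 1 MiB is 2^20 bytes and 1 MB is 10^6 bytes. A minimal sketch using `awk` follows; the function names are hypothetical and chosen only for illustration.

```shell
# Convert a rate in MiB/s (2^20 bytes) to MB/s (10^6 bytes).
mib_to_mb() {
  awk -v v="$1" 'BEGIN { printf "%.2f\n", v * 1048576 / 1000000 }'
}

# Average rate in MiB/s for SIZE_GIB transferred in SECONDS.
gib_over_secs_to_mib() {
  awk -v g="$1" -v s="$2" 'BEGIN { printf "%.1f\n", g * 1024 / s }'
}

gib_over_secs_to_mib 300.0 5630.5   # prints 54.6, matching the summary line above
mib_to_mb 54.6                      # prints 57.25
```

The same `mib_to_mb` function applies to any other rate that a tool reports in MiB/s.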

3. aws cli

Version

aws --version

aws-cli/1.16.25 Python/2.7.5 Linux/3.10.0-862.el7.x86_64 botocore/1.12.15

Configuration

cat ~/.aws/credentials

[default]

aws_access_key_id = ACCESS_KEY_ID

aws_secret_access_key = SECRET_ACCESS_KEY

cat ~/.aws/config

[default]

output = json

region = ap-flex

Download

The reported rate is 128.1 MiB/s, which is about 134.32 MB/s.

aws --endpoint-url https://s3.tok-ap-geo.objectstorage.service.networklayer.com s3 cp s3://khayama-test2/temp_300GB_file /home/

Completed 274.8 GiB/300.0 GiB (128.1 MiB/s) with 1 file(s) remaining

4. s3cmd

Version

s3cmd --version

s3cmd version 2.0.2

Configuration

s3cmd --configure

...

New settings:

Access Key: ACCESS_KEY_ID

Secret Key: SECRET_ACCESS_KEY

Default Region: ap-flex

S3 Endpoint: s3.tok-ap-geo.objectstorage.service.networklayer.com

DNS-style bucket+hostname:port template for accessing a bucket: %(bucket).s3.tok-ap-geo.objectstorage.service.networklayer.com

Encryption password: ******

Path to GPG program: /usr/bin/gpg

Use HTTPS protocol: True

HTTP Proxy server name:

HTTP Proxy server port: 0

Test access with supplied credentials? [Y/n] y

Please wait, attempting to list all buckets...

Success. Your access key and secret key worked fine :-)

Now verifying that encryption works...

Success. Encryption and decryption worked fine :-)

Save settings? [y/N] y

Configuration saved to '/root/.s3cfg'

Download

A retry occurred partway through, but s3cmd reported 61.67 MB/s for the completed download.

s3cmd get s3://khayama-test2/temp_300GB_file

download: 's3://khayama-test2/temp_300GB_file' -> './temp_300GB_file' [1 of 1]

47244640256 of 322122547200 14% in 750s 60.02 MB/s failed

WARNING: Retrying failed request: /temp_300GB_file (('The read operation timed out',))

WARNING: Waiting 3 sec...

download: 's3://khayama-test2/temp_300GB_file' -> './temp_300GB_file' [1 of 1]

322122547200 of 322122547200 100% in 4250s 61.67 MB/s done
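The two progress lines above can be cross-checked with a little arithmetic. The sketch below rests on two assumptions that the s3cmd documentation does not confirm here: that the 4250 s on the final line covers only the bytes fetched after the retry, and that the "MB/s" label means 2^20 bytes per second. Under those assumptions the computed rates land close to the reported 60.02 and 61.67; the small differences would be consistent with the elapsed times being rounded to whole seconds.

```shell
# Byte counts and timings copied from the s3cmd output above.
total=322122547200         # full object size in bytes (300 GiB)
before_retry=47244640256   # bytes received when the read timed out (44 GiB)

# Rate in units of 2^20 bytes per second for BYTES transferred in SECONDS.
rate_mib() {
  awk -v b="$1" -v s="$2" 'BEGIN { printf "%.1f\n", b / s / 1048576 }'
}

rate_mib "$before_retry" 750                 # first attempt: prints 60.1
rate_mib "$((total - before_retry))" 4250    # after the retry: prints 61.7
```

If these assumptions hold, the s3cmd figure is not directly comparable with the converted MB/s values quoted for s3fs and aws cli.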

Summary

For downloading from IBM Cloud Object Storage (S3), aws cli turned out to be the fastest of the four tools tested.

I hope these results serve as a useful reference.

| Tool | Speed |
|---|---|
| aws cli | 134.32 MB/s |
| s3cmd | 61.67 MB/s |
| rclone | 61.036 MB/s |
| s3fs | 57.25 MB/s |

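Similar results have been reported elsewhere, so aws cli appears to be the recommended choice.

If you want to experiment with the various aws cli options, the following article is a good reference.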