Trying to predict cherry blossom bloom dates with xgboost

Using data from March of the previous year through February of the current year

1. Purpose

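There is a service called AI Sakura Forecast (AIさくら予想), and an article reported that it uses xgboost, so I tried predicting cherry blossom bloom dates with xgboost myself.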

https://www.businessinsider.jp/post-186528

2. Conclusion

The results were mediocre. If anything, this showed me how good the AI Sakura Forecast mentioned above really is.

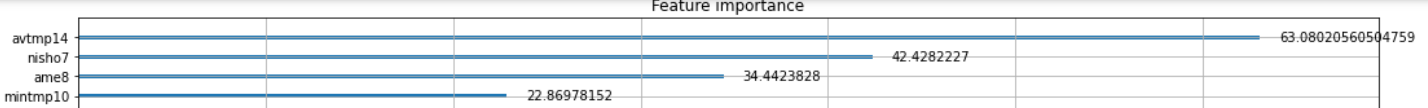

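Ranked by gain, the features with the largest influence on bloom timing were annual mean temperature, July sunshine hours, August precipitation, and October minimum temperature.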

Annual mean temperature makes sense, but July sunshine hours, August precipitation, and October minimum temperature came as a surprise.

3. Data source

https://www.data.jma.go.jp/gmd/risk/obsdl/index.php

I processed the Japan Meteorological Agency (JMA) data above and used it for this analysis.
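The article does not document the column names. Judging from the subtitle (March of the previous year to February of the current year) and the suffixes 3 to 14, each suffix appears to be one month, with 13 and 14 standing for January and February of the bloom year. The prefixes look like romanized Japanese abbreviations (ame = rain, nisho = sunshine, joki = vapor, kumo = cloud). Both readings are assumptions, and `decode_column` below is a hypothetical helper for illustration, not part of the original code.

```python
import re

# Assumed meaning of each column prefix (inferred from the romanized Japanese names)
PREFIXES = {
    "avtmp": "mean temperature",
    "maxtmp": "max temperature",
    "mintmp": "min temperature",
    "ame": "precipitation",
    "nisho": "sunshine hours",
    "joki": "vapor pressure",
    "kumo": "cloud cover",
}


def decode_column(name):
    """Split a name like 'nisho7' into (quantity, calendar month, year offset).

    Assumes suffixes 3..12 are March..December of the year before bloom
    and 13..14 are January..February of the bloom year.
    """
    m = re.fullmatch(r"([a-z]+)(\d+)", name)
    if m is None or m.group(1) not in PREFIXES:
        raise ValueError(f"unrecognized column: {name}")
    prefix, suffix = m.group(1), int(m.group(2))
    if not 3 <= suffix <= 14:
        raise ValueError(f"month suffix out of range: {name}")
    month = suffix if suffix <= 12 else suffix - 12
    year_offset = 0 if suffix <= 12 else 1  # 0 = year before bloom, 1 = bloom year
    return PREFIXES[prefix], month, year_offset


print(decode_column("nisho7"))   # ('sunshine hours', 7, 0)
print(decode_column("avtmp14"))  # ('mean temperature', 2, 1)
```

Under this reading, the features named in the conclusion correspond to `nisho7`, `ame8` and `mintmp10`.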

4. Code walkthrough

import numpy as np

import pandas as pd

import matplotlib.pyplot as plt

from xgboost import XGBRegressor, plot_importance

from sklearn.model_selection import GridSearchCV, KFold

from IPython.display import display  # display() is predefined in Jupyter; importing it also lets the code run as a script

path="./"

train = pd.read_csv(path+"kikou5train.csv")

x_test = pd.read_csv(path+"kikou5test.csv").drop("kaika", axis=1)  # the test file also contains the target kaika; keep it out of the features

y_train = train.kaika.copy()

x_train = train.drop("kaika", axis=1)

train_id = x_train.Id

x_train.head()

date avtmp3 maxtmp3 mintmp3 ame3 nisho3 joki3 kumo3 avtmp4 maxtmp4 ... kumo13 avtmp14 maxtmp14 mintmp14 ame14 nisho14 joki14 kumo14 kaika TotalInc

Id

1 1961 8.2 21.9 -0.4 106.6 181.1 6.7 6.3 14.9 26.0 ... 3.8 5.9 24.5 -2.6 13.5 195.0 4.5 4.1 NaN 193.6

2 1962 8.2 18.8 -0.8 65.5 189.8 6.3 4.7 14.1 24.5 ... 2.0 4.8 15.3 -4.1 21.3 199.9 4.1 4.9 NaN 182.3

Concatenate the training and test features

df = pd.concat([x_train, x_test])

df.head()

Feature engineering: add an annual mean temperature feature (TotalInc)

df["TotalInc"] = df[[f"avtmp{m}" for m in range(3, 15)]].sum(axis=1, skipna=False)  # sum of the monthly mean temperatures, avtmp3 through avtmp14

df.head()

date avtmp3 maxtmp3 mintmp3 ame3 nisho3 joki3 kumo3 avtmp4 maxtmp4 ... kumo13 avtmp14 maxtmp14 mintmp14 ame14 nisho14 joki14 kumo14 kaika TotalInc

0 1980 8.2 21.2 1.3 173.5 157.5 6 6.2 13.6 24 ... 2.9 5.3 17.2 -3.5 38 157.3 4.6 5.5 NaN 183.4

1 rows × 87 columns
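Strictly speaking, TotalInc is the sum of the twelve monthly mean temperatures, i.e. 12 times their average rather than the annual mean itself. For a tree model such as XGBoost this makes no difference: splits depend only on the ordering of values, and multiplying by a positive constant preserves that ordering. The sketch below builds the column from a list of names and checks the 12× relationship on a small made-up frame (the numbers are random, not JMA data).

```python
import numpy as np
import pandas as pd

# Monthly mean-temperature columns: suffix 3 (March) through 14 (following February)
AVG_COLS = [f"avtmp{m}" for m in range(3, 15)]

# Illustrative random values only, not real observations
rng = np.random.default_rng(0)
df = pd.DataFrame(rng.uniform(0, 30, size=(2, len(AVG_COLS))), columns=AVG_COLS)

# skipna=False mirrors chained "+": one missing month makes the total NaN
df["TotalInc"] = df[AVG_COLS].sum(axis=1, skipna=False)
df["AnnualMean"] = df[AVG_COLS].mean(axis=1, skipna=False)

# The sum is 12x the annual mean, so both carry the same information for a tree model
print(np.allclose(df["TotalInc"], 12 * df["AnnualMean"]))  # True
```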

x_train = df[df.Id.isin(train_id)].set_index("Id")

x_test = df[~df.Id.isin(train_id)].set_index("Id")
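Concatenating train and test before feature engineering guarantees both end up with identical columns; the rows are then split back by `Id`. One caveat: `kikou5test.csv` also contains the target `kaika` (it is read again later as the source of the true labels), so it should be dropped from the test features before concatenating. The miniature frames below use made-up values, and `avtmp3_sq` is a hypothetical stand-in for any derived feature.

```python
import pandas as pd

# Hypothetical miniature versions of the two CSV files
train = pd.DataFrame({"Id": [1, 2, 3], "avtmp3": [8.2, 8.2, 9.0], "kaika": [25, 27, 24]})
test = pd.DataFrame({"Id": [4, 5], "avtmp3": [9.6, 7.9], "kaika": [30, 26]})

y_train = train["kaika"].copy()
x_train = train.drop(columns="kaika")
x_test = test.drop(columns="kaika")  # keep the target out of the test features
train_id = x_train["Id"]

# Engineer features once on the combined frame so train and test stay consistent
df = pd.concat([x_train, x_test], ignore_index=True)
df["avtmp3_sq"] = df["avtmp3"] ** 2  # hypothetical derived feature

# Split back using the Id column
x_train = df[df["Id"].isin(train_id)].set_index("Id")
x_test = df[~df["Id"].isin(train_id)].set_index("Id")

print(list(x_train.index), list(x_test.index))  # [1, 2, 3] [4, 5]
print("kaika" in x_test.columns)                 # False
```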

Search for the best hyperparameters

random_state = 0

params = {

"learning_rate": [0.01, 0.05, 0.1],

"min_child_weight": [0.1],

"gamma": [0],

"reg_alpha": [0],

"reg_lambda": [1],

"max_depth": [3, 5, 7],

"max_delta_step": [0],

"random_state": [random_state],

"n_estimators": [50, 100, 200],

}

reg = XGBRegressor()

cv = KFold(n_splits=3, shuffle=True, random_state=random_state)

reg_gs = GridSearchCV(reg, params, cv=cv)

reg_gs.fit(x_train, y_train)

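Only three parameters actually vary in this grid (learning_rate, max_depth and n_estimators, three values each); the rest are fixed single values. GridSearchCV therefore tries 3 × 3 × 3 = 27 combinations on 3 folds each, i.e. 81 fits, followed by one refit of the best combination on the full training data (refit=True is the default). The count can be checked in plain Python:

```python
from itertools import product
from math import prod

# Same grid as above
params = {
    "learning_rate": [0.01, 0.05, 0.1],
    "min_child_weight": [0.1],
    "gamma": [0],
    "reg_alpha": [0],
    "reg_lambda": [1],
    "max_depth": [3, 5, 7],
    "max_delta_step": [0],
    "random_state": [0],
    "n_estimators": [50, 100, 200],
}
n_splits = 3

# Every combination the grid search will evaluate, as a list of dicts
combos = [dict(zip(params, values)) for values in product(*params.values())]
n_combos = prod(len(v) for v in params.values())

print(len(combos), n_combos)  # 27 27
print(n_combos * n_splits)    # 81 cross-validation fits
```

With only about a hundred yearly rows, enlarging the grid quickly multiplies the fit count while each validation fold stays small.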

display(reg_gs.best_params_)

display(reg_gs.best_score_)

ax = plot_importance(reg_gs.best_estimator_, importance_type="gain")

ax.figure.set_size_inches(18, 18)  # enlarge the figure so the long list of feature names stays readable

plt.show()

{'gamma': 0,

'learning_rate': 0.1,

'max_delta_step': 0,

'max_depth': 5,

'min_child_weight': 0.1,

'n_estimators': 50,

'random_state': 0,

'reg_alpha': 0,

'reg_lambda': 1}

0.36250088820449333
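Since no `scoring` argument is passed, GridSearchCV falls back to the regressor's default `score`, the coefficient of determination R². So 0.3625 is the mean R² over the three validation folds: the best model explains roughly 36% of the variance in bloom dates. R² = 1 − SS_res / SS_tot; a minimal NumPy version on made-up numbers:

```python
import numpy as np


def r2(y_true, y_pred):
    """Coefficient of determination: 1 - SS_res / SS_tot."""
    y_true = np.asarray(y_true, dtype=float)
    y_pred = np.asarray(y_pred, dtype=float)
    ss_res = np.sum((y_true - y_pred) ** 2)           # residual sum of squares
    ss_tot = np.sum((y_true - y_true.mean()) ** 2)    # total sum of squares
    return 1.0 - ss_res / ss_tot


# Illustrative values only
y = [24, 27, 30, 22]
print(r2(y, y))                      # 1.0 (perfect prediction)
print(r2(y, [np.mean(y)] * len(y)))  # 0.0 (always predicting the mean)
```

R² can also go negative when a model does worse than predicting the mean, which is worth keeping in mind when folds are small.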

Prediction

y_pred3 = reg_gs.predict(x_test)

Evaluate the error against the true labels

y_true = pd.read_csv(path+"kikou5test.csv")

preds = pd.DataFrame({"pred3": y_pred3})

df_out = pd.concat([y_true, preds], axis=1)

df_out.head()

Id date avtmp3 maxtmp3 mintmp3 ame3 nisho3 joki3 kumo3 avtmp4 ... avtmp14 maxtmp14 mintmp14 ame14 nisho14 joki14 kumo14 kaika pred3 loss3

0 100 1966 9.6 21.6 1.2 99.9 150.4 7.0 6.6 13.6 ... 4.9 19.1 -4.0 43.8 162.6 5.1 5.0 30 29.816103 0.033818
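`pd.concat(..., axis=1)` lines rows up by index label, not by position. It works here because `y_true` (freshly read from the CSV) and `preds` both have a default 0..n-1 index in the same row order as `x_test`. If one side had a different index, for example `Id` after `set_index`, the rows would not match and would be padded with NaN. The sketch uses made-up values to show both cases, plus `assign` with a NumPy array as an alignment-free alternative.

```python
import numpy as np
import pandas as pd

y_true = pd.DataFrame({"Id": [100, 101], "kaika": [30, 25]})  # default index 0, 1
y_pred = np.array([29.8, 26.1])                               # illustrative predictions

# Same 0..n-1 index on both sides: rows line up
ok = pd.concat([y_true, pd.DataFrame({"pred3": y_pred})], axis=1)

# Index labels differ (Id vs 0..n-1): the outer join yields NaN-padded rows
bad = pd.concat([y_true.set_index("Id"), pd.DataFrame({"pred3": y_pred})], axis=1)

# Assigning a NumPy array bypasses index alignment entirely
safe = y_true.assign(pred3=y_pred)

print(ok.shape, bad.shape, safe.shape)  # (2, 3) (4, 2) (2, 3)
```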

RMSE

df_out["loss3"] = (df_out.kaika - df_out.pred3)**2

df_out.iloc[:, -3:].mean()

kaika 24.909091

pred3 26.849123

loss3 23.966188

dtype: float64

from sklearn.metrics import mean_squared_error, mean_absolute_error

# RMSE

rmse_kaika = np.sqrt(mean_squared_error(df_out.kaika, df_out.pred3))

rmse_kaika

4.895527368155607
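The two numbers above agree: the mean of `loss3` is the mean squared error, and RMSE is its square root, √23.966 ≈ 4.896 days. `mean_absolute_error` is imported but not used; MAE is less sensitive to a few large misses than RMSE and would make a natural second metric. A NumPy-only sketch of both, with a check against the reported MSE and a made-up example of one large miss:

```python
import numpy as np


def rmse(y_true, y_pred):
    """Root mean squared error, in the same unit as the target (days)."""
    diff = np.asarray(y_true, dtype=float) - np.asarray(y_pred, dtype=float)
    return float(np.sqrt(np.mean(diff ** 2)))


def mae(y_true, y_pred):
    """Mean absolute error."""
    diff = np.asarray(y_true, dtype=float) - np.asarray(y_pred, dtype=float)
    return float(np.mean(np.abs(diff)))


# Reported mean of loss3 (the MSE) -> RMSE
print(round(float(np.sqrt(23.966188)), 4))  # 4.8955

# Illustrative values: a single 9-day miss inflates RMSE more than MAE
y = [30, 25, 24]
p = [30, 25, 33]
print(round(rmse(y, p), 3), mae(y, p))  # 5.196 3.0
```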

The bloom-date predictions are off by just under 5 days (RMSE ≈ 4.9 days). That is better than I expected, but still mediocre.