Mastering the game of Go without human knowledge

Much progress towards artificial intelligence has been made using supervised learning systems that are trained to replicate the decisions of human experts1–4.

However, expert data sets are often expensive, unreliable or simply unavailable. Even when reliable data sets are available, they may impose a ceiling on the performance of systems trained in this manner5.

By contrast, reinforcement learning systems are trained from their own experience, in principle allowing them to exceed human capabilities, and to operate in domains where human expertise is lacking.

Recently, there has been rapid progress towards this goal, using deep neural networks trained by reinforcement learning.

These systems have outperformed humans in computer games, such as Atari6,7 and 3D virtual environments8–10.

However, the most challenging domains in terms of human intellect—such as the game of Go, widely viewed as a grand challenge for artificial intelligence11—require a precise and sophisticated lookahead in vast search spaces.

Fully general methods have not previously achieved human-level performance in these domains.

AlphaGo was the first program to achieve superhuman performance in Go.

The published version12, which we refer to as AlphaGo Fan, defeated the European champion Fan Hui in October 2015.

AlphaGo Fan used two deep neural networks: a policy network that outputs move probabilities and a value network that outputs a position evaluation.

The policy network was trained initially by supervised learning to accurately predict human expert moves, and was subsequently refined by policy-gradient reinforcement learning.

The value network was trained to predict the winner of games played by the policy network against itself.

Once trained, these networks were combined with a Monte Carlo tree search (MCTS)13–15 to provide a lookahead search, using the policy network to narrow down the search to high-probability moves, and using the value network (in conjunction with Monte Carlo rollouts using a fast rollout policy) to evaluate positions in the tree.

A subsequent version, which we refer to as AlphaGo Lee, used a similar approach (see Methods), and defeated Lee Sedol, the winner of 18 international titles, in March 2016.

Our program, AlphaGo Zero, differs from AlphaGo Fan and AlphaGo Lee12 in several important aspects.

First and foremost, it is trained solely by self-play reinforcement learning, starting from random play, without any supervision or use of human data. Second, it uses only the black and white stones from the board as input features.

Third, it uses a single neural network, rather than separate policy and value networks.

Finally, it uses a simpler tree search that relies upon this single neural network to evaluate positions and sample moves, without performing any Monte Carlo rollouts.

To achieve these results, we introduce a new reinforcement learning algorithm that incorporates lookahead search inside the training loop, resulting in rapid improvement and precise and stable learning.

Further technical differences in the search algorithm, training procedure and network architecture are described in Methods.

Reinforcement learning in AlphaGo Zero

Our new method uses a deep neural network fθ with parameters θ.

This neural network takes as an input the raw board representation s of the position and its history, and outputs both move probabilities and a value, (p, v) = fθ(s).

The vector of move probabilities p represents the probability of selecting each move a (including pass), $p_a = \Pr(a \mid s)$.
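
To make "each move a (including pass)" concrete, here is a minimal sketch of one possible action encoding, assuming a 19 × 19 board and a single extra index for pass, which gives 362 entries in p. The names `BOARD_SIZE`, `PASS`, `NUM_MOVES`, `move_to_index` and `index_to_move` are hypothetical and are not taken from the paper.

```python
BOARD_SIZE = 19                  # standard Go board (assumption for this sketch)
PASS = BOARD_SIZE * BOARD_SIZE   # index 361 is reserved for the pass move
NUM_MOVES = PASS + 1             # 362 entries in the move-probability vector p


def move_to_index(move):
    """Map a (row, col) intersection, or None for pass, to an index into p."""
    if move is None:
        return PASS
    row, col = move
    return row * BOARD_SIZE + col


def index_to_move(index):
    """Inverse of move_to_index: returns (row, col), or None for pass."""
    if index == PASS:
        return None
    return divmod(index, BOARD_SIZE)
```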

The value v is a scalar evaluation, estimating the probability of the current player winning from position s.

This neural network combines the roles of both policy network and value network into a single architecture.
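
The idea of one network with two outputs can be illustrated with a toy model: a shared feature vector feeds a softmax policy head that yields p and a scalar value head that yields v. This is a hypothetical sketch with made-up linear heads, not the paper's architecture; it assumes a tanh value head so that v lies in [−1, 1], matching game outcomes z ∈ {−1, +1}.

```python
import math


def softmax(logits):
    """Numerically stable softmax: returns a probability vector."""
    m = max(logits)
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def dual_head(features, policy_weights, value_weights):
    """Toy f_theta: one shared feature vector -> (p, v).

    policy_weights: one weight row per move (policy head).
    value_weights: a single weight row (value head).
    """
    logits = [sum(w * f for w, f in zip(row, features)) for row in policy_weights]
    p = softmax(logits)  # move probabilities, sum to 1
    v = math.tanh(sum(w * f for w, f in zip(value_weights, features)))  # value in [-1, 1]
    return p, v
```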

The neural network consists of many residual blocks of convolutional layers with batch normalization and rectifier nonlinearities (see Methods).
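
A residual block adds its input back to the output of a small stack of layers, so each block only has to learn a correction to its input. The sketch below shows that pattern on a single-channel 1D signal in plain Python: convolution, batch normalization, rectifier, convolution, batch normalization, skip connection, rectifier. The 1D single-channel setting, the kernel values and the absence of learned scale and shift parameters in batch normalization are simplifying assumptions; the layer order follows the common residual-block design and is not claimed to reproduce every detail in Methods.

```python
import math


def conv1d_same(x, kernel):
    """Single-channel 1D convolution (cross-correlation) with zero padding.
    Assumes an odd kernel length, so the output has the same length as x."""
    pad = len(kernel) // 2
    padded = [0.0] * pad + list(x) + [0.0] * pad
    return [sum(k * padded[i + j] for j, k in enumerate(kernel)) for i in range(len(x))]


def batch_norm(batch, eps=1e-5):
    """Normalize with the mean and variance over the whole batch and all positions
    (training-mode statistics for one channel; no learned scale or shift)."""
    values = [v for row in batch for v in row]
    mean = sum(values) / len(values)
    var = sum((v - mean) ** 2 for v in values) / len(values)
    scale = 1.0 / math.sqrt(var + eps)
    return [[(v - mean) * scale for v in row] for row in batch]


def relu(batch):
    """Rectifier nonlinearity applied elementwise."""
    return [[max(0.0, v) for v in row] for row in batch]


def residual_block(batch, kernel1, kernel2):
    """conv -> BN -> ReLU -> conv -> BN -> add input (skip connection) -> ReLU."""
    h = relu(batch_norm([conv1d_same(x, kernel1) for x in batch]))
    h = batch_norm([conv1d_same(x, kernel2) for x in h])
    return relu([[a + b for a, b in zip(h_row, x_row)] for h_row, x_row in zip(h, batch)])
```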

The neural network in AlphaGo Zero is trained from games of self-play by a novel reinforcement learning algorithm.

In each position s, an MCTS search is executed, guided by the neural network fθ.

The MCTS search outputs probabilities π of playing each move.

These search probabilities usually select much stronger moves than the raw move probabilities p of the neural network fθ(s); MCTS may therefore be viewed as a powerful policy improvement operator.

Self-play with search—using the improved MCTS-based policy to select each move, then using the game winner z as a sample of the value—may be viewed as a powerful policy evaluation operator.

The main idea of our reinforcement learning algorithm is to use these search operators repeatedly in a policy iteration procedure: the neural network’s parameters are updated to make the move probabilities and value (p, v) = fθ(s) more closely match the improved search probabilities and self-play winner (π, z); these new parameters are used in the next iteration of self-play to make the search even stronger.
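
The policy iteration procedure can be summarized as a skeleton in which self-play (search with the current parameters) produces (s, π, z) examples and training fits the network to them. The callables `self_play` and `train` are hypothetical placeholders, and for simplicity the sketch runs the two phases one after the other, whereas the pipeline in Fig. 1 runs them in parallel.

```python
def policy_iteration(theta0, self_play, train, iterations):
    """Alternate search-based policy evaluation/improvement with network fitting.

    self_play(theta) -> list of (s, pi, z) examples generated with f_theta.
    train(theta, examples) -> new parameters fitted to those examples.
    Returns the sequence of parameters theta_0, theta_1, ..., theta_iterations.
    """
    history = [theta0]
    theta = theta0
    for _ in range(iterations):
        examples = self_play(theta)     # search guided by the previous parameters
        theta = train(theta, examples)  # make (p, v) match (pi, z) more closely
        history.append(theta)
    return history
```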

Figure 1 illustrates the self-play training pipeline.

The MCTS uses the neural network fθ to guide its simulations (see Fig. 2).

Each edge (s, a) in the search tree stores a prior probability P(s, a), a visit count N(s, a), and an action value Q(s, a). Each simulation starts from the root state and iteratively selects moves that maximize an upper confidence bound Q(s, a) + U(s, a), where U(s, a) ∝ P(s, a) / (1 + N(s, a)) (refs 12, 24), until a leaf node s′ is encountered.
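
The selection rule can be sketched as follows. Each edge keeps P(s, a), N(s, a) and Q(s, a), and a simulation follows the edge with the largest Q(s, a) + U(s, a). Because the text only states U(s, a) ∝ P(s, a) / (1 + N(s, a)), the proportionality constant `c_puct` is an assumption here, and any additional scaling factors Methods may use are left out. `EdgeStats` and `select_action` are hypothetical names.

```python
from dataclasses import dataclass


@dataclass
class EdgeStats:
    prior: float        # P(s, a)
    visits: int = 0     # N(s, a)
    value: float = 0.0  # Q(s, a)


def select_action(edges, c_puct=1.0):
    """Return the action maximizing Q(s, a) + U(s, a), with U = c_puct * P / (1 + N)."""
    def score(action):
        e = edges[action]
        return e.value + c_puct * e.prior / (1 + e.visits)
    return max(edges, key=score)
```

An unvisited move with a moderate prior can outrank a frequently visited one, because U(s, a) shrinks as N(s, a) grows; this is what pushes the search to explore before it settles on the moves with the highest Q(s, a).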

This leaf position is expanded and evaluated only once by the network to generate both prior probabilities and evaluation, (P(s′, ·), V(s′)) = fθ(s′).
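
Expansion can be sketched as a single network call per new leaf: the returned priors initialize the leaf's outgoing edges, and the returned value is what gets backed up, with no rollout. The dictionary-based tree, the `network` callable standing in for fθ, and the name `expand_and_evaluate` are hypothetical.

```python
def expand_and_evaluate(tree, leaf, network):
    """Expand `leaf` with one call to network(leaf) -> (priors, value).

    priors: dict mapping each legal move a to P(s', a).
    value: scalar V(s') used for the backup; no Monte Carlo rollout is run.
    """
    priors, value = network(leaf)
    tree[leaf] = {a: {"P": p, "N": 0, "Q": 0.0} for a, p in priors.items()}
    return value
```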

Each edge (s, a) traversed in the simulation is updated to increment its visit count N(s, a), and to update its action value to the mean evaluation over these simulations,

$$Q(s, a) = \frac{1}{N(s, a)} \sum_{s' \mid s, a \to s'} V(s')$$

where s, a → s′ indicates that a simulation eventually reached s′ after taking move a from position s.
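
Keeping a running sum W(s, a) alongside N(s, a) lets Q(s, a) stay equal to the mean of the leaf evaluations without storing them individually. In this minimal sketch every edge on the path receives the same V(s′); two-player implementations usually store values from the perspective of the player to move and flip the sign at alternate plies, a detail not spelled out here and omitted from the sketch. `Edge` and `backup` are hypothetical names.

```python
class Edge:
    """Statistics for one edge (s, a) of the search tree."""

    def __init__(self):
        self.N = 0    # visit count N(s, a)
        self.W = 0.0  # sum of V(s') over simulations through this edge

    @property
    def Q(self):
        """Mean evaluation W / N, or 0 for an unvisited edge."""
        return self.W / self.N if self.N else 0.0


def backup(path, leaf_value):
    """Increment N and accumulate V(s') on every edge traversed by a simulation."""
    for edge in path:
        edge.N += 1
        edge.W += leaf_value
```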

MCTS may be viewed as a self-play algorithm that, given neural network parameters θ and a root position s, computes a vector of search probabilities recommending moves to play, $\pi = \alpha_\theta(s)$, proportional to the exponentiated visit count for each move, $\pi_a \propto N(s, a)^{1/\tau}$, where τ is a temperature parameter.
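
Turning visit counts into π with a temperature can be sketched as below: τ = 1 reproduces the visit distribution, smaller τ sharpens it, and the τ → 0 limit is handled as an argmax (ties go to the first move). The function name is hypothetical; special-casing the limit also avoids floating-point overflow of N^(1/τ) for very small positive τ.

```python
def search_probabilities(visit_counts, tau):
    """pi_a proportional to N(s, a)^(1/tau); tau == 0 is treated as the argmax limit."""
    if tau == 0:
        best = max(range(len(visit_counts)), key=lambda i: visit_counts[i])
        return [1.0 if i == best else 0.0 for i in range(len(visit_counts))]
    powered = [n ** (1.0 / tau) for n in visit_counts]
    total = sum(powered)
    return [x / total for x in powered]
```

For example, visit counts [1, 3] give π = [0.25, 0.75] at τ = 1 and π = [0.1, 0.9] at τ = 0.5.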

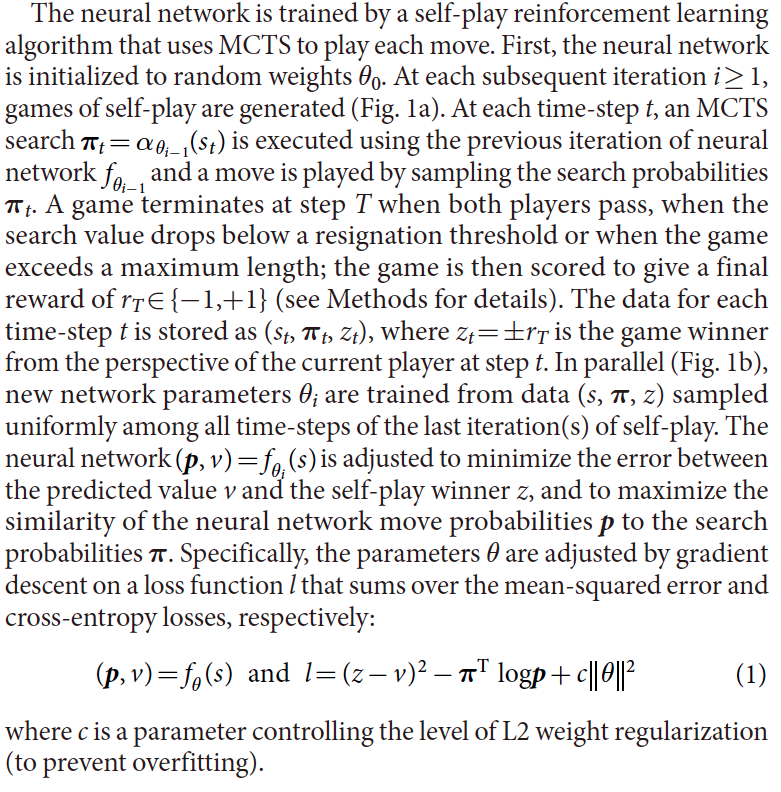

The neural network is trained by a self-play reinforcement learning algorithm that uses MCTS to play each move.

First, the neural network is initialized to random weights θ0.

At each subsequent iteration i ≥ 1, games of self-play are generated (Fig. 1a).

At each time-step t, an MCTS search $\pi_t = \alpha_{\theta_{i-1}}(s_t)$ is executed using the previous iteration of neural network $f_{\theta_{i-1}}$, and a move is played by sampling the search probabilities $\pi_t$.
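
Playing a move by sampling from πt amounts to a weighted random draw. A minimal sketch using Python's standard `random.choices`; passing a seeded `random.Random` instance as `rng` makes self-play reproducible. The function name `play_move` is hypothetical.

```python
import random


def play_move(moves, pi, rng=random):
    """Sample one move, choosing moves[i] with probability pi[i]."""
    return rng.choices(moves, weights=pi, k=1)[0]
```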

A game terminates at step T when both players pass, when the search value drops below a resignation threshold or when the game exceeds a maximum length; the game is then scored to give a final reward of $r_T \in \{-1, +1\}$ (see Methods for details).

The data for each time-step t is stored as $(s_t, \pi_t, z_t)$, where $z_t = \pm r_T$ is the game winner from the perspective of the current player at step t.
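
The end-of-game rules and the labelling of stored positions can be sketched together: `game_over` checks the three stopping conditions, and `label_positions` turns the final result into per-step targets z_t. Both names are hypothetical, the threshold and maximum length are passed in as free parameters, and the convention that r_T is expressed from Black's point of view (+1 if Black wins) is an assumption made for this sketch.

```python
def game_over(both_passed, root_value, move_count, resign_threshold, max_length):
    """True when both players passed, the search value fell below the
    resignation threshold, or the game reached its maximum length."""
    return both_passed or root_value < resign_threshold or move_count >= max_length


def label_positions(records, r_T):
    """records: list of (s_t, pi_t, player_t) with player_t = +1 (Black) or -1 (White).
    r_T: final reward from Black's perspective, +1 or -1.
    Returns (s_t, pi_t, z_t), where z_t is r_T seen from the player to move at step t."""
    return [(s, pi, r_T * player) for s, pi, player in records]
```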

In parallel (Fig. 1b), new network parameters $\theta_i$ are trained from data (s, π, z) sampled uniformly among all time-steps of the last iteration(s) of self-play.
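
Sampling uniformly from recent self-play can be sketched with a bounded buffer: new positions push out the oldest ones, and each mini-batch draws positions uniformly at random. The class name, the capacity, and drawing with replacement are assumptions for illustration; the text only specifies uniform sampling over the most recent self-play data.

```python
import random
from collections import deque


class ReplayBuffer:
    """Holds the most recent (s, pi, z) examples and samples them uniformly."""

    def __init__(self, capacity):
        self.data = deque(maxlen=capacity)  # oldest examples are dropped first

    def add(self, examples):
        self.data.extend(examples)

    def sample(self, batch_size, rng=random):
        """Draw batch_size examples uniformly at random, with replacement."""
        return [rng.choice(self.data) for _ in range(batch_size)]
```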

The neural network $(p, v) = f_{\theta_i}(s)$ is adjusted to minimize the error between the predicted value v and the self-play winner z, and to maximize the similarity of the neural network move probabilities p to the search probabilities π.

Specifically, the parameters θ are adjusted by gradient descent on a loss function l that sums the mean-squared error between v and z and the cross-entropy between π and p:

$$(p, v) = f_\theta(s), \qquad l = (z - v)^2 - \pi^{\top} \log p + c \lVert \theta \rVert^2 \qquad (1)$$

where c is a parameter controlling the level of L2 weight regularization (to prevent overfitting).
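
Equation (1) can be evaluated for a single training example as below. The policy term skips moves with πa = 0, using the convention 0 · log 0 = 0; treating θ as a flat list of weights and computing the loss for one example rather than averaging over a mini-batch are simplifications assumed for this sketch, and the name `loss` is hypothetical.

```python
import math


def loss(z, v, pi, p, weights, c):
    """l = (z - v)^2 - pi^T log p + c * ||theta||^2 for one example.

    z: self-play outcome, v: predicted value,
    pi: search probabilities, p: network move probabilities,
    weights: flat list of parameters theta, c: L2 coefficient.
    """
    value_loss = (z - v) ** 2
    policy_loss = -sum(pa * math.log(qa) for pa, qa in zip(pi, p) if pa > 0)
    l2 = c * sum(w * w for w in weights)
    return value_loss + policy_loss + l2
```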

Empirical analysis of AlphaGo Zero training

We applied our reinforcement learning pipeline to train our program AlphaGo Zero.

Training started from completely random behaviour and continued without human intervention for approximately three days.

Over the course of training, 4.9 million games of self-play were generated, using 1,600 simulations for each MCTS, which corresponds to approximately 0.4 s thinking time per move.

Parameters were updated from 700,000 mini-batches of 2,048 positions.
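
As a rough consistency check, the figures above imply the following (our own arithmetic on the stated numbers, not additional reported results; positions drawn for different mini-batches may repeat):

$$\frac{1{,}600\ \text{simulations}}{0.4\ \text{s}} \approx 4{,}000\ \text{simulations per second per search}, \qquad 700{,}000 \times 2{,}048 = 1{,}433{,}600{,}000 \approx 1.4 \times 10^{9}\ \text{sampled positions}$$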

The neural network contained 20 residual blocks (see Methods for further details).

Figure 3a shows the performance of AlphaGo Zero during self-play reinforcement learning, as a function of training time, on an Elo scale25.
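
For reading Elo differences, the standard Elo model maps a rating gap to an expected score. This is a sketch of that textbook formula with the conventional 400-point scale; the paper's own rating computation is described in Methods and may differ in detail, and `expected_score` is a hypothetical name.

```python
def expected_score(rating_gap):
    """Expected score of a player rated `rating_gap` points above its opponent
    under the standard logistic Elo model."""
    return 1.0 / (1.0 + 10.0 ** (-rating_gap / 400.0))
```

Under this model a 400-point gap corresponds to an expected score of 10/11 (about 0.91), and a 600-point gap to about 0.97.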

Learning progressed smoothly throughout training, and did not suffer from the oscillations or catastrophic forgetting that have been suggested in previous literature26–28.

Surprisingly, AlphaGo Zero outperformed AlphaGo Lee after just 36 h.

In comparison, AlphaGo Lee was trained over several months.

After 72 h, we evaluated AlphaGo Zero against the exact version of AlphaGo Lee that defeated Lee Sedol, under the same 2 h time controls and match conditions that were used in the man–machine match in Seoul (see Methods).

AlphaGo Zero used a single machine with 4 tensor processing units (TPUs)29, whereas AlphaGo Lee was distributed over many machines and used 48 TPUs.

AlphaGo Zero defeated AlphaGo Lee by 100 games to 0 (see Extended Data Fig. 1 and Supplementary Information).

To assess the merits of self-play reinforcement learning, compared to learning from human data, we trained a second neural network (using the same architecture) to predict expert moves in the KGS Server dataset; this achieved state-of-the-art prediction accuracy compared to previous work12,30–33 (see Extended Data Tables 1 and 2 for current and previous results, respectively).

Supervised learning achieved a better initial performance, and was better at predicting human professional moves (Fig. 3).

Notably, although supervised learning achieved higher move prediction accuracy, the self-learned player performed much better overall, defeating the human-trained player within the first 24 h of training.

This suggests that AlphaGo Zero may be learning a strategy that is qualitatively different to human play.

To separate the contributions of architecture and algorithm, we compared the performance of the neural network architecture in AlphaGo Zero with the previous neural network architecture used in AlphaGo Lee (see Fig. 4).

Four neural networks were created, using either separate policy and value networks, as were used in AlphaGo Lee, or combined policy and value networks, as used in AlphaGo Zero; and using either the convolutional network architecture from AlphaGo Lee or the residual network architecture from AlphaGo Zero.

Each network was trained to minimize the same loss function (equation (1)), using a fixed dataset of self-play games generated by AlphaGo Zero after 72 h of self-play training.

Using a residual network was more accurate, achieved lower error and improved performance in AlphaGo by over 600 Elo.

Combining policy and value together into a single network slightly reduced the move prediction accuracy, but reduced the value error and boosted playing performance in AlphaGo by around another 600 Elo.

This is partly due to improved computational efficiency, but more importantly the dual objective regularizes the network to a common representation that supports multiple use cases.

Knowledge learned by AlphaGo Zero

AlphaGo Zero discovered a remarkable level of Go knowledge during its self-play training process.

This included not only fundamental elements of human Go knowledge, but also non-standard strategies beyond the scope of traditional Go knowledge.

Figure 5 shows a timeline indicating when professional joseki (corner sequences) were discovered (Fig. 5a and Extended Data Fig. 2); ultimately AlphaGo Zero preferred new joseki variants that were previously unknown (Fig. 5b and Extended Data Fig. 3).

Figure 5c shows several fast self-play games played at different stages of training (see Supplementary Information).

Tournament-length games played at regular intervals throughout training are shown in Extended Data Fig. 4 and in the Supplementary Information.

AlphaGo Zero rapidly progressed from entirely random moves towards a sophisticated understanding of Go concepts, including fuseki (opening), tesuji (tactics), life-and-death, ko (repeated board situations), yose (endgame), capturing races, sente (initiative), shape, influence and territory, all discovered from first principles.

Surprisingly, shicho (‘ladder’ capture sequences that may span the whole board)—one of the first elements of Go knowledge learned by humans—were only understood by AlphaGo Zero much later in training.

Final performance of AlphaGo Zero

We subsequently applied our reinforcement learning pipeline to a second instance of AlphaGo Zero using a larger neural network and over a longer duration.

Training again started from completely random behaviour and continued for approximately 40 days.

Over the course of training, 29 million games of self-play were generated.

Parameters were updated from 3.1 million mini-batches of 2,048 positions each.

The neural network contained 40 residual blocks.

The learning curve is shown in Fig. 6a.

Games played at regular intervals throughout training are shown in Extended Data Fig. 5 and in the Supplementary Information.

We evaluated the fully trained AlphaGo Zero using an internal tournament against AlphaGo Fan, AlphaGo Lee and several previous Go programs.

We also played games against the strongest existing program, AlphaGo Master (a program based on the algorithm and architecture presented in this paper but using human data and features; see Methods), which defeated the strongest human professional players 60–0 in online games in January 2017 (ref. 34).

In our evaluation, all programs were allowed 5 s of thinking time per move; AlphaGo Zero and AlphaGo Master each played on a single machine with 4 TPUs; AlphaGo Fan and AlphaGo Lee were distributed over 176 GPUs and 48 TPUs, respectively.

We also included a player based solely on the raw neural network of AlphaGo Zero; this player simply selected the move with maximum probability.

Figure 6b shows the performance of each program on an Elo scale.

The raw neural network, without using any lookahead, achieved an Elo rating of 3,055.

Finally, we evaluated AlphaGo Zero head to head against AlphaGo Master in a 100-game match with 2-h time controls.

AlphaGo Zero won by 89 games to 11 (see Extended Data Fig. 6 and Supplementary Information).
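
Inverting the standard Elo model gives a rough sense of what a match score implies: a win fraction s corresponds to a gap of 400 · log10(s / (1 − s)). This sketch is our illustration rather than a rating reported in the paper, it ignores the statistical uncertainty of a 100-game sample, and `implied_rating_gap` is a hypothetical name. For an 89–11 result it gives roughly 360 Elo.

```python
import math


def implied_rating_gap(wins, losses):
    """Elo gap implied by a match score under the standard logistic Elo model."""
    if wins == 0 or losses == 0:
        raise ValueError("the implied gap is finite only if both players win at least once")
    return 400.0 * math.log10(wins / losses)
```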

Conclusion

Our results comprehensively demonstrate that a pure reinforcement learning approach is fully feasible, even in the most challenging of domains: it is possible to train to superhuman level, without human examples or guidance, given no knowledge of the domain beyond basic rules.

Furthermore, a pure reinforcement learning approach requires just a few more hours to train, and achieves much better asymptotic performance, compared to training on human expert data. Using this approach, AlphaGo Zero defeated the strongest previous versions of AlphaGo, which were trained from human data using handcrafted features, by a large margin.

Humankind has accumulated Go knowledge from millions of games played over thousands of years, collectively distilled into patterns, proverbs and books.

In the space of a few days, starting tabula rasa, AlphaGo Zero was able to rediscover much of this Go knowledge, as well as novel strategies that provide new insights into the oldest of games.
