Reference

Titanic: Machine Learning from Disaster

Notes on implementing Kaggle (Titanic) on Google Colab

Data

import numpy as np
import pandas as pd

train = pd.read_csv('/content/train.csv')
test = pd.read_csv('/content/test.csv')

# Fill missing values, then encode the categorical columns as integers.
# Series.map avoids the chained assignment train['Sex'][mask] = ...,
# which may write to a temporary copy and leaves the column as dtype object.
train['Age'] = train['Age'].fillna(train['Age'].median())
train['Embarked'] = train['Embarked'].fillna('S')
train['Sex'] = train['Sex'].map({'male': 0, 'female': 1})
train['Embarked'] = train['Embarked'].map({'S': 0, 'C': 1, 'Q': 2})
#train.head(10)

test['Age'] = test['Age'].fillna(test['Age'].median())
test['Sex'] = test['Sex'].map({'male': 0, 'female': 1})
test['Embarked'] = test['Embarked'].map({'S': 0, 'C': 1, 'Q': 2})
# Fill the missing Fare at row 152 with the median fare.
test.loc[152, 'Fare'] = test['Fare'].median()
#test.head(10)

# Source domain: labelled train.csv. Target domain: unlabelled test.csv.
features = train[['Pclass', 'Sex', 'Age', 'Fare']].values
target = train['Survived'].values
features_test = test[['Pclass', 'Sex', 'Age', 'Fare']].values

# 80/20 split of the source rows.
train_indices_s = np.random.choice(len(features), round(len(features) * 0.8), replace = False)
test_indices_s = np.array(list(set(range(len(features))) - set(train_indices_s)))

# 80/20 split of the target rows.
train_indices_t = np.random.choice(len(features_test), round(len(features_test) * 0.8), replace = False)
test_indices_t = np.array(list(set(range(len(features_test))) - set(train_indices_t)))

x_s_train = features[train_indices_s]
x_s_test = features[test_indices_s]
# One-hot encode the labels: 0 -> [1, 0], 1 -> [0, 1].
y_s_train = np.identity(2)[target[train_indices_s]]
y_s_test = np.identity(2)[target[test_indices_s]]

# The target indices refer to features_test, not features.
x_t_train = features_test[train_indices_t]
x_t_test = features_test[test_indices_t]

def normalize_cols(m):
    col_max = m.max(axis = 0)
    col_min = m.min(axis = 0)
    return (m - col_min) / (col_max - col_min)

x_s_train = normalize_cols(x_s_train)
x_s_test = normalize_cols(x_s_test)
x_t_train = normalize_cols(x_t_train)
x_t_test = normalize_cols(x_t_test)
x_input = normalize_cols(features_test)
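normalize_cols scales every split with that split's own minimum and maximum, and it divides by zero when a column is constant. As a possible alternative, the sketch below fits the scaling on one array and reuses it on another. The helper names fit_min_max and apply_min_max and the toy arrays are assumptions made for illustration; they are not part of the original notes.

```python
import numpy as np

def fit_min_max(m):
    """Return the per-column minimum and range; zero ranges become 1."""
    col_min = m.min(axis=0)
    col_range = m.max(axis=0) - col_min
    # A constant column has range 0; use 1 so the division stays defined.
    col_range = np.where(col_range == 0, 1.0, col_range)
    return col_min, col_range

def apply_min_max(m, col_min, col_range):
    """Scale m with statistics that were computed on another array."""
    return (m - col_min) / col_range

# Toy data: the third column is constant on purpose.
train_part = np.array([[1.0, 10.0, 5.0],
                       [3.0, 30.0, 5.0]])
held_out = np.array([[2.0, 20.0, 5.0]])

col_min, col_range = fit_min_max(train_part)
train_scaled = apply_min_max(train_part, col_min, col_range)
held_out_scaled = apply_min_max(held_out, col_min, col_range)
print(train_scaled)
print(held_out_scaled)
```

With this variant a held-out value can fall outside [0, 1] if it lies beyond the range seen when fitting; whether that matters for this model is untested here.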

Sample Code

import matplotlib.pyplot as plt
import tensorflow as tf  # the code below uses the TensorFlow 1.x graph API

# Domain Adaptation
class DA():

    def __init__(self):
        pass

    def weight_variable(self, name, shape):
        initializer = tf.truncated_normal_initializer(mean = 0.0, stddev = 0.01, dtype = tf.float32)
        return tf.get_variable(name, shape, initializer = initializer)

    def bias_variable(self, name, shape):
        initializer = tf.constant_initializer(value = 0.0, dtype = tf.float32)
        return tf.get_variable(name, shape, initializer = initializer)

    def f_extractor(self, x, n_in, n_units, keep_prob, reuse = False):
        # Shared feature extractor: dense layer, batch normalization, ReLU.
        with tf.variable_scope('f_extractor', reuse = reuse):
            w = self.weight_variable('w', [n_in, n_units])
            b = self.bias_variable('b', [n_units])
            f = tf.matmul(x, w) + b

            # batch norm
            batch_mean, batch_var = tf.nn.moments(f, [0])
            f = (f - batch_mean) / (tf.sqrt(batch_var) + 1e-10)

            # dropout
            #f = tf.nn.dropout(f, keep_prob)

            # relu
            f = tf.nn.relu(f)

            # leaky relu
            #f = tf.maximum(0.2 * f, f)

        return f

    def classifier_d(self, x, n_units_f, n_units_d, n_domains, keep_prob, reuse = False):
        # Domain classifier: predicts whether a feature vector is source or target.
        with tf.variable_scope('classifier_d', reuse = reuse):
            w_1 = self.weight_variable('w_1', [n_units_f, n_units_d])
            b_1 = self.bias_variable('b_1', [n_units_d])
            d = tf.matmul(x, w_1) + b_1

            # batch norm
            #batch_mean, batch_var = tf.nn.moments(d, [0])
            #d = (d - batch_mean) / (tf.sqrt(batch_var) + 1e-10)

            # relu
            d = tf.nn.relu(d)

            # dropout
            #d = tf.nn.dropout(d, keep_prob)

            w_2 = self.weight_variable('w_2', [n_units_d, n_domains])
            b_2 = self.bias_variable('b_2', [n_domains])
            d = tf.matmul(d, w_2) + b_2

            logits = d

        return logits

    def classifier_c(self, x, n_units_f, n_units_c, n_classes, keep_prob, reuse = False):
        # Class classifier: predicts Survived from the extracted features.
        with tf.variable_scope('classifier_c', reuse = reuse):
            w_1 = self.weight_variable('w_1', [n_units_f, n_units_c])
            b_1 = self.bias_variable('b_1', [n_units_c])
            l = tf.matmul(x, w_1) + b_1

            # batch norm
            #batch_mean, batch_var = tf.nn.moments(l, [0])
            #l = (l - batch_mean) / (tf.sqrt(batch_var) + 1e-10)

            # relu
            l = tf.nn.relu(l)

            # dropout
            #l = tf.nn.dropout(l, keep_prob)

            w_2 = self.weight_variable('w_2', [n_units_c, n_classes])
            b_2 = self.bias_variable('b_2', [n_classes])
            l = tf.matmul(l, w_2) + b_2

            logits = l

        return logits

    def loss_cross_entropy(self, y, t):
        # y: predicted probabilities, t: one-hot targets.
        cross_entropy = - tf.reduce_mean(tf.reduce_sum(t * tf.log(tf.clip_by_value(y, 1e-10, 1.0)), axis = 1))
        return cross_entropy

    def loss_entropy(self, p):
        # Entropy is -sum(p * log p); the leading minus sign was missing.
        entropy = - tf.reduce_mean(tf.reduce_sum(p * tf.log(tf.clip_by_value(p, 1e-10, 1.0)), axis = 1))
        return entropy

    def accuracy(self, y, t):
        correct_preds = tf.equal(tf.argmax(y, axis = 1), tf.argmax(t, axis = 1))
        accuracy = tf.reduce_mean(tf.cast(correct_preds, tf.float32))
        return accuracy

    def training(self, loss, learning_rate, var_list):
        # Only the variables in var_list are updated by this step.
        optimizer = tf.train.AdamOptimizer(learning_rate = learning_rate)
        train_step = optimizer.minimize(loss, var_list = var_list)
        return train_step

    def fit(self, x_s_train, x_s_test, y_s_train, y_s_test, x_t_train, x_t_test,
            n_in, n_units_f, n_units_d, n_domains,
            n_units_c, n_classes, lam,
            learning_rate, n_iter, batch_size, show_step, is_saving, model_path):

        tf.reset_default_graph()

        # Placeholders: source (s) and target (t) inputs, class labels, domain labels.
        x_s = tf.placeholder(shape = [None, n_in], dtype = tf.float32)
        y_s = tf.placeholder(shape = [None, n_classes], dtype = tf.float32)
        d_s = tf.placeholder(shape = [None, n_domains], dtype = tf.float32)
        x_t = tf.placeholder(shape = [None, n_in], dtype = tf.float32)
        d_t = tf.placeholder(shape = [None, n_domains], dtype = tf.float32)
        keep_prob = tf.placeholder(shape = [], dtype = tf.float32)

        # The same feature extractor is applied to both domains.
        feat_s = self.f_extractor(x_s, n_in, n_units_f, keep_prob, reuse = False)
        feat_t = self.f_extractor(x_t, n_in, n_units_f, keep_prob, reuse = True)
        feat = tf.concat([feat_s, feat_t], axis = 0)
        d = tf.concat([d_s, d_t], axis = 0)

        logits_d = self.classifier_d(feat, n_units_f, n_units_d, n_domains, keep_prob, reuse = False)
        probs_d = tf.nn.softmax(logits_d)
        loss_d = self.loss_cross_entropy(probs_d, d)

        logits_c_s = self.classifier_c(feat_s, n_units_f, n_units_c, n_classes, keep_prob, reuse = False)
        probs_c_s = tf.nn.softmax(logits_c_s)
        loss_c_s = self.loss_cross_entropy(probs_c_s, y_s)

        # The feature extractor is trained to increase the domain loss.
        loss_f = - lam * loss_d

        var_list_f = tf.trainable_variables('f_extractor')
        var_list_d = tf.trainable_variables('classifier_d')
        var_list_c = tf.trainable_variables('classifier_c')
        var_list_f_c = var_list_f + var_list_c

        train_step_f = self.training(loss_f, learning_rate, var_list_f)
        train_step_d = self.training(loss_d, learning_rate, var_list_d)
        train_step_f_c = self.training(loss_c_s, learning_rate, var_list_f_c)

        acc_d = self.accuracy(probs_d, d)
        acc_c_s = self.accuracy(probs_c_s, y_s)

        init = tf.global_variables_initializer()
        saver = tf.train.Saver()

        with tf.Session() as sess:

            sess.run(init)

            history_loss_c_s_train = []
            history_loss_c_s_test = []
            history_acc_c_s_train = []
            history_acc_c_s_test = []
            history_loss_d_train = []
            history_loss_d_test = []
            history_acc_d_train = []
            history_acc_d_test = []
            history_loss_f_train = []
            history_loss_f_test = []

            for i in range(n_iter):

                # Training
                # class classification for f and c
                rand_index = np.random.choice(len(x_s_train), size = batch_size)
                x_batch = x_s_train[rand_index]
                y_batch = y_s_train[rand_index]

                feed_dict = {x_s: x_batch, y_s: y_batch, keep_prob: 1.0}
                sess.run(train_step_f_c, feed_dict = feed_dict)

                temp_loss_c_s = sess.run(loss_c_s, feed_dict = feed_dict)
                temp_acc_c_s = sess.run(acc_c_s, feed_dict = feed_dict)

                history_loss_c_s_train.append(temp_loss_c_s)
                history_acc_c_s_train.append(temp_acc_c_s)

                if (i + 1) % show_step == 0:
                    print('-' * 100)
                    print('Iteration: ' + str(i + 1) +
                          ' Loss_c: ' + str(temp_loss_c_s) + ' Accuracy_c: ' + str(temp_acc_c_s))

                # domain classification for d
                rand_index = np.random.choice(len(x_s_train), size = batch_size//2)
                x_batch_s = x_s_train[rand_index]
                d_batch_s = np.ones(shape = [batch_size//2], dtype = np.int32) * 0
                d_batch_s = np.identity(n_domains)[d_batch_s]

                rand_index = np.random.choice(len(x_t_train), size = batch_size//2)
                x_batch_t = x_t_train[rand_index]
                d_batch_t = np.ones(shape = [batch_size//2], dtype = np.int32) * 1
                d_batch_t = np.identity(n_domains)[d_batch_t]

                feed_dict = {x_s: x_batch_s, d_s: d_batch_s, x_t: x_batch_t, d_t: d_batch_t, keep_prob: 1.0}
                sess.run(train_step_f, feed_dict = feed_dict)
                sess.run(train_step_d, feed_dict = feed_dict)

                temp_loss_f = sess.run(loss_f, feed_dict = feed_dict)
                temp_loss_d = sess.run(loss_d, feed_dict = feed_dict)
                temp_acc_d = sess.run(acc_d, feed_dict = feed_dict)

                history_loss_f_train.append(temp_loss_f)
                history_loss_d_train.append(temp_loss_d)
                history_acc_d_train.append(temp_acc_d)

                if (i + 1) % show_step == 0:
                    print('-' * 100)
                    print('Iteration: ' + str(i + 1) +
                          ' Loss_d: ' + str(temp_loss_d) + ' Accuracy_d: ' + str(temp_acc_d))

                # Test
                # class classification for s
                rand_index = np.random.choice(len(x_s_test), size = batch_size)
                x_batch = x_s_test[rand_index]
                y_batch = y_s_test[rand_index]

                feed_dict = {x_s: x_batch, y_s: y_batch, keep_prob: 1.0}
                temp_loss_c_s = sess.run(loss_c_s, feed_dict = feed_dict)
                temp_acc_c_s = sess.run(acc_c_s, feed_dict = feed_dict)

                history_loss_c_s_test.append(temp_loss_c_s)
                history_acc_c_s_test.append(temp_acc_c_s)

                # domain classification for f and d
                rand_index = np.random.choice(len(x_s_test), size = batch_size//2)
                x_batch_s = x_s_test[rand_index]
                d_batch_s = np.ones(shape = [batch_size//2], dtype = np.int32) * 0
                d_batch_s = np.identity(n_domains)[d_batch_s]

                rand_index = np.random.choice(len(x_t_test), size = batch_size//2)
                x_batch_t = x_t_test[rand_index]
                d_batch_t = np.ones(shape = [batch_size//2], dtype = np.int32) * 1
                d_batch_t = np.identity(n_domains)[d_batch_t]

                feed_dict = {x_s: x_batch_s, d_s: d_batch_s, x_t: x_batch_t, d_t: d_batch_t, keep_prob: 1.0}
                temp_loss_f = sess.run(loss_f, feed_dict = feed_dict)
                temp_loss_d = sess.run(loss_d, feed_dict = feed_dict)
                temp_acc_d = sess.run(acc_d, feed_dict = feed_dict)

                history_loss_f_test.append(temp_loss_f)
                history_loss_d_test.append(temp_loss_d)
                history_acc_d_test.append(temp_acc_d)

            if is_saving:
                model_path = saver.save(sess, model_path)
                print('done saving at ', model_path)

            print('-' * 100)

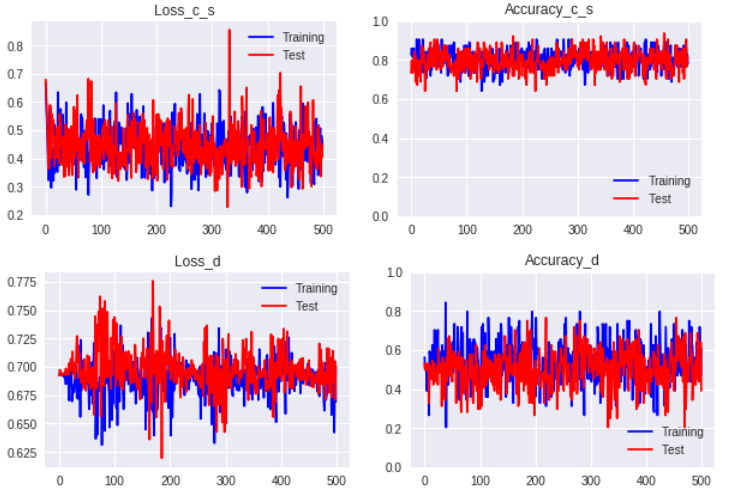

fig = plt.figure(figsize = (10, 3))

ax1 = fig.add_subplot(1, 2, 1)

ax1.plot(range(n_iter), history_loss_c_s_train, 'b-', label = 'Training')

ax1.plot(range(n_iter), history_loss_c_s_test, 'r-', label = 'Test')

ax1.set_title('Loss_c_s')

ax1.legend(loc = 'upper right')

ax2 = fig.add_subplot(1, 2, 2)

ax2.plot(range(n_iter), history_acc_c_s_train, 'b-', label = 'Training')

ax2.plot(range(n_iter), history_acc_c_s_test, 'r-', label = 'Test')

ax2.set_ylim(0.0, 1.0)

ax2.set_title('Accuracy_c_s')

ax2.legend(loc = 'lower right')

fig = plt.figure(figsize = (10, 3))

ax1 = fig.add_subplot(1, 2, 1)

ax1.plot(range(n_iter), history_loss_d_train, 'b-', label = 'Training')

ax1.plot(range(n_iter), history_loss_d_test, 'r-', label = 'Test')

ax1.set_title('Loss_d')

ax1.legend(loc = 'upper right')

ax2 = fig.add_subplot(1, 2, 2)

ax2.plot(range(n_iter), history_acc_d_train, 'b-', label = 'Training')

ax2.plot(range(n_iter), history_acc_d_test, 'r-', label = 'Test')

ax2.set_ylim(0.0, 1.0)

ax2.set_title('Accuracy_d')

ax2.legend(loc = 'lower right')

plt.show()

def predict(self, x_input, n_in, n_units_f, n_units_c, n_classes, model_path):

x = tf.placeholder(shape = [None, n_in], dtype = tf.float32)

keep_prob = tf.placeholder(shape = [], dtype = tf.float32)

feat = self.f_extractor(x, n_in, n_units_f, keep_prob, reuse = True)

logits = self.classifier_c(feat, n_units_f, n_units_c, n_classes, keep_prob, reuse = True)

probs = tf.nn.softmax(logits)

preds = tf.argmax(probs, axis = 1)

saver = tf.train.Saver()

with tf.Session() as sess:

saver.restore(sess, model_path)

feed_dict = {x: x_input, keep_prob: 1.0}

prediction = sess.run(preds, feed_dict = feed_dict)

return prediction
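In fit, the domain classifier minimizes loss_d while the feature extractor minimizes loss_f = - lam * loss_d, so the two pull in opposite directions. The NumPy sketch below only illustrates how those two numbers behave for a "confused" and a "confident" domain classifier; the logits are made-up values, and the helper functions are stand-ins written for this example rather than the TensorFlow ops used in the class.

```python
import numpy as np

def softmax(logits):
    """Row-wise softmax with the usual max subtraction for stability."""
    shifted = logits - logits.max(axis=1, keepdims=True)
    e = np.exp(shifted)
    return e / e.sum(axis=1, keepdims=True)

def cross_entropy(probs, one_hot):
    """Mean cross entropy, clipped the same way as loss_cross_entropy."""
    return -np.mean(np.sum(one_hot * np.log(np.clip(probs, 1e-10, 1.0)), axis=1))

lam = 1.0
# Two source rows (domain 0) and two target rows (domain 1), one-hot encoded.
domain_labels = np.identity(2)[np.array([0, 0, 1, 1])]

# Hypothetical logits: equal scores mean the domains cannot be told apart.
confused = softmax(np.zeros((4, 2)))
# Hypothetical logits: the classifier separates the domains almost perfectly.
confident = softmax(np.array([[5.0, -5.0], [5.0, -5.0], [-5.0, 5.0], [-5.0, 5.0]]))

loss_d_confused = cross_entropy(confused, domain_labels)    # log(2), about 0.693
loss_d_confident = cross_entropy(confident, domain_labels)  # close to 0

loss_f_confused = -lam * loss_d_confused
loss_f_confident = -lam * loss_d_confident
print(loss_d_confused, loss_d_confident)
print(loss_f_confused, loss_f_confident)
```

loss_f is lower in the confused case, which is the direction the feature extractor is pushed: toward features from which the domain is hard to predict.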

Parameters

n_in = 4
n_units_f = 64
n_units_d = 64
n_units_c = 64
n_domains = 2
n_classes = 2
lam = 1.0
learning_rate = 0.01
n_iter = 500
batch_size = 64
show_step = 100
model_path = 'datalab/model'

Output

# predict() restores the checkpoint from model_path, so fit() has to save one.
is_saving = True

da = DA()

da.fit(x_s_train, x_s_test, y_s_train, y_s_test, x_t_train, x_t_test,
       n_in, n_units_f, n_units_d, n_domains, n_units_c, n_classes, lam,
       learning_rate, n_iter, batch_size, show_step, is_saving, model_path)

prediction = da.predict(x_input, n_in, n_units_f, n_units_c, n_classes, model_path)

# Write the submission file: PassengerId as the index, Survived as the only column.
PassengerId = np.array(test['PassengerId']).astype(int)
solution = pd.DataFrame(prediction, PassengerId, columns = ['Survived'])
solution.to_csv('/content/solution.csv', index_label = ['PassengerId'])
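Before uploading solution.csv it can help to check the arrays that go into it. The sketch below is one possible check; check_submission is a hypothetical helper and the IDs and predictions are toy values, not output from the model above.

```python
import numpy as np

def check_submission(passenger_ids, predictions):
    """Validate IDs and 0/1 predictions and return them as an (n, 2) int array."""
    passenger_ids = np.asarray(passenger_ids)
    predictions = np.asarray(predictions)
    if passenger_ids.shape != predictions.shape:
        raise ValueError('PassengerId and prediction lengths differ')
    if len(np.unique(passenger_ids)) != len(passenger_ids):
        raise ValueError('duplicate PassengerId values')
    if not np.isin(predictions, [0, 1]).all():
        raise ValueError('predictions must be 0 or 1')
    return np.column_stack([passenger_ids, predictions]).astype(int)

# Toy values for illustration only.
rows = check_submission([892, 893, 894], [0, 1, 0])
print(rows)
```

In the notes above, the same call could take PassengerId and prediction as its two arguments.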