Reference page

Let's build a facial expression analysis app with the AI component Microsoft Cognitive Services! (Emotion API × JavaScript edition) - Qiita

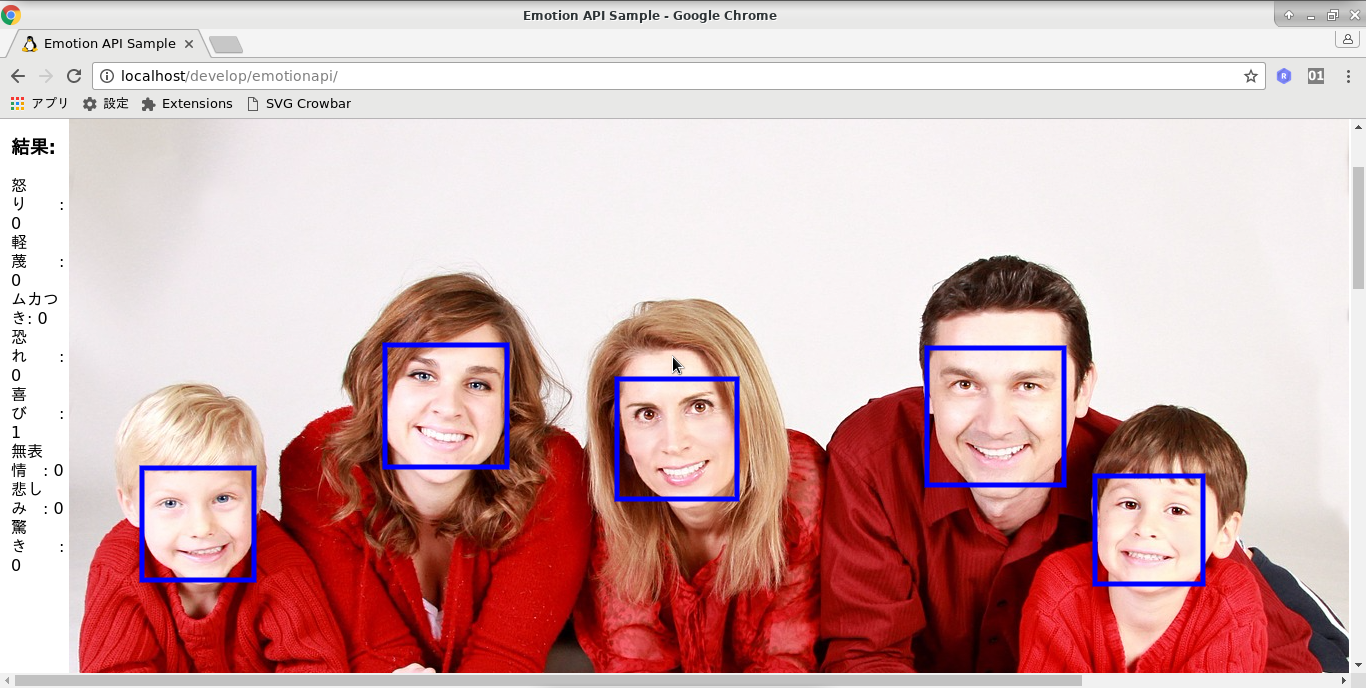

Result

To run it, enter an image URL that begins with http or https.

Replace YOUR_SUBSCRIPTION_KEY in the JavaScript with your own subscription key.
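The http/https requirement could also be checked in code before the API is called. The following is only a minimal sketch: `isHttpUrl` is a hypothetical helper that does not exist in the original script, and it relies on the standard `URL` constructor available in current browsers and Node.js.

```javascript
// Hypothetical helper (not part of the original script): returns true only
// for absolute URLs whose scheme is http or https.
function isHttpUrl(text) {
  try {
    const parsed = new URL(text)
    return parsed.protocol === "http:" || parsed.protocol === "https:"
  } catch (e) {
    // new URL() throws for strings it cannot parse as an absolute URL
    return false
  }
}

console.log(isHttpUrl("https://example.com/face.jpg")) // true
console.log(isHttpUrl("file:///tmp/face.jpg"))         // false
console.log(isHttpUrl("face.jpg"))                     // false
```

One possible use would be to call it at the top of getFaceInfo and show the "enter an image URL" message when it returns false.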

index.html

<!DOCTYPE html>

<html lang="ja">

<head>

<meta http-equiv="Content-Type" content="text/html; charset=utf-8"/>

<script src="/js/jquery-3.2.1.min.js"></script>

<script src="js/emotionapi.js"></script>

<link rel="stylesheet" href="css/emotionapi.css">

<title>Emotion API Sample</title>

</head>

<body>

<div>

<h1>Microsoft Cognitive Services</h1>

<h2>Emotion API Sample bbb</h2>

<input type="url" id="imageUrlTextbox" class="urlinput">

</div>

<table>

<tr>

<td class="OutputTh">

<div id="OutputDiv">Output</div>

</td>

<td>

<div id="PhotoDiv">

<canvas id="area_aa" height="1600" width="1600"></canvas>

</div>

</td>

</tr>

</table>

<hr />

<div id="outarea_aa">outarea_aa</div>

<div id="outarea_bb">outarea_bb</div>

<div id="outarea_cc">outarea_cc</div>

<div id="outarea_dd">outarea_dd</div>

<div id="outarea_ee">outarea_ee</div>

<div id="outarea_ff">outarea_ff</div>

<div id="outarea_gg">outarea_gg</div>

<div id="outarea_hh">outarea_hh</div>

<hr />

<a href="js/html_src/">js</a><p />

Jun/20/2017<p />

</body>

</html>
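The canvas above is fixed at 1600 × 1600, and the script draws the image at its natural size with `ctx.drawImage(img, 0, 0)`, so anything beyond that area is cut off. If larger images need to fit, one option is to scale both the image and the face rectangles by the same factor. This is only a sketch under that assumption; `fitScale` and `scaleRect` are hypothetical helpers that are not in the original code.

```javascript
// Hypothetical helper: scale factor that fits an image inside the canvas
// without ever enlarging it (the factor is capped at 1).
function fitScale(imageWidth, imageHeight, canvasWidth, canvasHeight) {
  return Math.min(1, canvasWidth / imageWidth, canvasHeight / imageHeight)
}

// Hypothetical helper: apply the same factor to an object shaped like
// the faceRectangle values the script reads (left, top, width, height).
function scaleRect(rect, scale) {
  return {
    left: rect.left * scale,
    top: rect.top * scale,
    width: rect.width * scale,
    height: rect.height * scale
  }
}

const scale = fitScale(3200, 2400, 1600, 1600)
console.log(scale) // 0.5
console.log(scaleRect({ left: 400, top: 200, width: 300, height: 300 }, scale))
```

With such a factor, the image could be drawn with the five-argument form `ctx.drawImage(img, 0, 0, img.width * scale, img.height * scale)`, and the scaled rectangle passed to `ctx.strokeRect`.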

emotionapi.js

// ----------------------------------------------------------------------

//

// emotionapi.js

//

// Jun/20/2017

//

// ----------------------------------------------------------------------

jQuery(function ()

{

jQuery ("#outarea_aa").text ("*** start *** ddd ***")

// When the URL changes, display and analyze the image again

jQuery("#imageUrlTextbox").change(function () {

showImage()

getFaceInfo()

})

showImage() // Draw the image on the canvas

getFaceInfo() // Analyze the image

jQuery ("#outarea_hh").text ("*** end ***")

})

// ----------------------------------------------------------------------

// [4]:

var showImage = function ()

{

var canvas = document.getElementById ("area_aa")

var ctx = canvas.getContext('2d')

ctx.clearRect(0, 0, canvas.width, canvas.height)

var img = new Image()

const imageUrl = jQuery("#imageUrlTextbox").val();

if (imageUrl) {

// Register the handler before setting src so the load event is not missed

img.onload = function()

{

ctx.drawImage(img, 0, 0);

}

img.src = imageUrl

}

}

// ----------------------------------------------------------------------

// [6]:

var getFaceInfo = function ()

{

const subscriptionKey = "YOUR_SUBSCRIPTION_KEY"

const imageUrl = jQuery("#imageUrlTextbox").val()

const webSvcUrl = "https://api.projectoxford.ai/emotion/v1.0/recognize"

if(document.getElementById('imageUrlTextbox').value=="")

{

jQuery("#OutputDiv").html("Please enter an image URL<br />")

// Nothing to analyze, so skip the API call

return

}

else{

jQuery("#OutputDiv").text("Analyzing...");

}

jQuery.ajax({

type: "POST",

url: webSvcUrl,

headers: { "Ocp-Apim-Subscription-Key": subscriptionKey },

contentType: "application/json",

data: JSON.stringify({ "Url": imageUrl }) // escapes quotes and backslashes in the URL

}).done(function (data)

{

data_process (data)

// Error handling

}).fail(function (err) {

if(document.getElementById('imageUrlTextbox').value!="")

{

jQuery("#OutputDiv").text("ERROR!" + err.responseText);

}

})

}

// ----------------------------------------------------------------------

// [6-4]:

function data_process (data)

{

jQuery ("#outarea_bb").text ("data.length = " + data.length)

if (0 < data.length) {

var canvas = document.getElementById ("area_aa")

var ctx = canvas.getContext('2d')

ctx.lineWidth = 5

ctx.strokeStyle = "rgb(0, 0, 255)"

var str_tmp = ""

for (var it=0; it< data.length; it++)

{

const faceRectangle = data[it].faceRectangle;

const faceWidth = faceRectangle.width;

const faceHeight = faceRectangle.height;

const faceLeft = faceRectangle.left;

const faceTop = faceRectangle.top;

str_tmp += "left = " + faceLeft + "<br />"

str_tmp += "top = " + faceTop + "<br />"

str_tmp += "width = " + faceWidth + "<br />"

str_tmp += "height = " + faceHeight + "<br />"

ctx.strokeRect (faceLeft,faceTop,faceWidth,faceHeight)

}

jQuery ("#outarea_cc").html (str_tmp)

const outputText = show_score_proc (data)

jQuery("#OutputDiv").html(outputText)

}

// When no face data was returned

else {

jQuery("#OutputDiv").text("No face could be detected")

}

}

// ----------------------------------------------------------------------

// [6-4-8]:

function show_score_proc (data)

{

// Get the detected emotion scores (first face only)

const faceScore = data[0].scores

const faceAnger = floatFormat(faceScore.anger)

const faceContempt = floatFormat(faceScore.contempt)

const faceDisgust = floatFormat(faceScore.disgust)

const faceFear = floatFormat(faceScore.fear)

const faceHappiness = floatFormat(faceScore.happiness)

const faceNeutral = floatFormat(faceScore.neutral)

const faceSadness = floatFormat(faceScore.sadness)

const faceSurprise = floatFormat(faceScore.surprise)

// Build the emotion score display

var outputText = ""

outputText += "<h3>" + "Result:" + "</h3>"

outputText += "Anger : " + faceAnger + "<br>"

outputText += "Contempt : " + faceContempt + "<br>"

outputText += "Disgust : " + faceDisgust + "<br>"

outputText += "Fear : " + faceFear + "<br>"

outputText += "Happiness : " + faceHappiness + "<br>"

outputText += "Neutral : " + faceNeutral + "<br>"

outputText += "Sadness : " + faceSadness + "<br>"

outputText += "Surprise : " + faceSurprise + "<br>"

return outputText

}

// ----------------------------------------------------------------------

// Rounds to 6 decimal places (used to round the emotion scores)

// [6-4-8-4]:

function floatFormat( number )

{

return Math.round( number * Math.pow(10,6) ) / Math.pow(10,6)

}

// ----------------------------------------------------------------------
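Note that data_process draws a rectangle for every detected face, while show_score_proc reads only `data[0].scores`. If a line for each face were wanted, something along the following lines might work. It is a sketch, not the original author's method: `dominantEmotion` and `summarizeFaces` are hypothetical helpers, and `sampleData` is made-up data that only mimics the `faceRectangle` and `scores` fields the script reads.

```javascript
// Made-up sample shaped like the fields the script reads; not real API output.
const sampleData = [
  { faceRectangle: { left: 10, top: 20, width: 50, height: 50 },
    scores: { anger: 0.01, contempt: 0, disgust: 0, fear: 0,
              happiness: 0.9, neutral: 0.08, sadness: 0, surprise: 0.01 } },
  { faceRectangle: { left: 120, top: 30, width: 40, height: 40 },
    scores: { anger: 0.05, contempt: 0.05, disgust: 0, fear: 0,
              happiness: 0.1, neutral: 0.2, sadness: 0.6, surprise: 0 } }
]

// Hypothetical helper: name of the highest-scoring emotion for one face.
function dominantEmotion(scores) {
  let best = null
  for (const name of Object.keys(scores)) {
    if (best === null || scores[name] > scores[best]) {
      best = name
    }
  }
  return best
}

// Hypothetical helper: one summary line per detected face.
function summarizeFaces(data) {
  return data.map(function (face, index) {
    return "face " + (index + 1) + ": " + dominantEmotion(face.scores)
  })
}

console.log(summarizeFaces(sampleData))
// [ 'face 1: happiness', 'face 2: sadness' ]
```

The returned lines could be joined with `<br>` and written to one of the output divs in the same way the script writes `str_tmp`.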