Environment

GeForce GTX 1070 (8GB)

ASRock Z170M Pro4S [Intel Z170 chipset]

Ubuntu 16.04 LTS desktop amd64

TensorFlow v1.1.0

cuDNN v5.1 for Linux

CUDA v8.0

Python 3.5.2

IPython 6.0.0 -- An enhanced Interactive Python.

gcc (Ubuntu 5.4.0-6ubuntu1~16.04.4) 5.4.0 20160609

GNU bash, version 4.3.48(1)-release (x86_64-pc-linux-gnu)

I am trying to train a TensorFlow model to learn a five-dimensional interpolation.

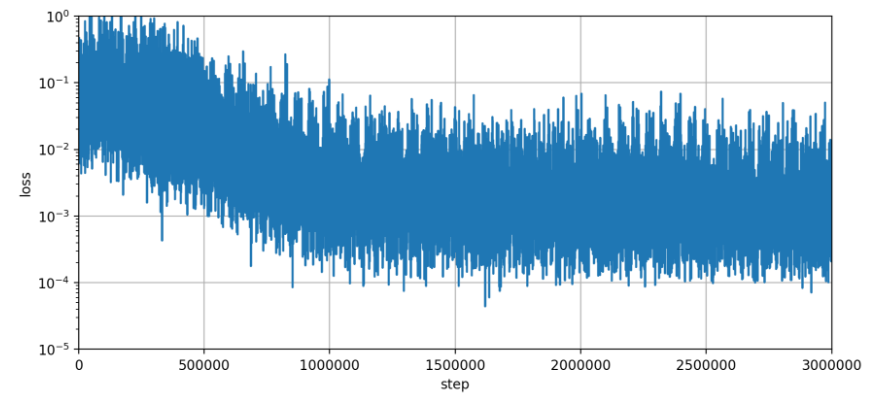

Loss history

Below is one example of the loss history.

Jupyter code.

check_result_170722.ipynb

%matplotlib inline

# learning [Exr,Exi,Eyr,Eyi,Ezr,Ezi] from ADDA

# Jul. 28, 2017

import numpy as np

import matplotlib.pyplot as plt

# data1 = np.loadtxt('RES_170728_t2119/log_learn.170728_t0720', delimiter=',')

data1 = np.loadtxt('RES_170728_t2119/log_learn.170728_t0751', delimiter=',')

input1 = data1[:,0]

output1 = data1[:,1]

#

fig = plt.figure(figsize=(10,10),dpi=200)

ax1 = fig.add_subplot(2,1,1)

ax1.plot(input1, output1)

ax1.set_xlabel('step')

ax1.set_ylabel('loss')

ax1.set_yscale('log')

ax1.set_xlim([0, 3000000])

ax1.set_ylim([1e-5, 1.0])

ax1.grid(True)

# fig.show()

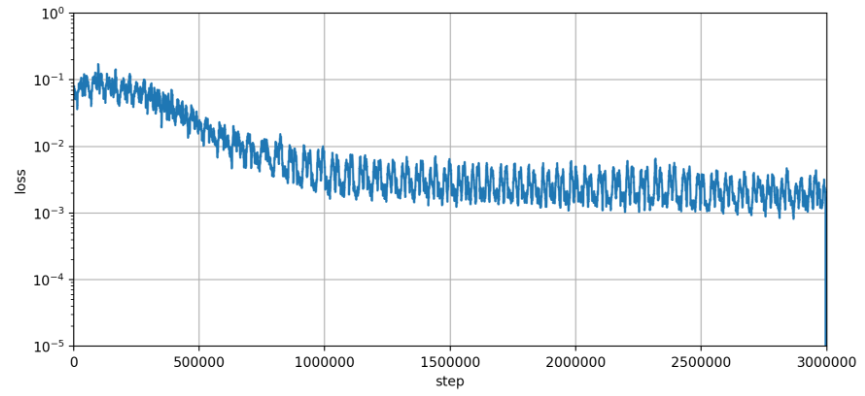

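Loss history > with moving average

I added a moving average.

Reference: https://stackoverflow.com/questions/14313510/how-to-calculate-moving-average-using-numpy

pandas is not installed yet and I have no plans to use it for now, so I followed the plain function implementation from that answer.

The moving average shortens the array by NUM_AVERAGE - 1 elements, so I appended dummy data to keep its length equal to that of the step array.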
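The length change is easy to check on a small array. The sketch below reuses the cumulative-sum `moving_average` from the Stack Overflow answer and shows one possible alternative to padding: trimming the step axis so that both arrays have the same length. The names `steps`, `loss`, and `steps_trimmed` and the sample values are made up for illustration; this is not what the notebook does.

```python
import numpy as np


def moving_average(a, n=3):
    # Cumulative-sum moving average (from the Stack Overflow answer cited above).
    ret = np.cumsum(a, dtype=float)
    ret[n:] = ret[n:] - ret[:-n]
    return ret[n - 1:] / n


# Hypothetical sample data, for illustration only.
steps = np.arange(10)
loss = np.array([1.0, 0.8, 0.9, 0.6, 0.7, 0.4, 0.5, 0.3, 0.35, 0.2])

n = 3
smoothed = moving_average(loss, n=n)

# The averaged array is shorter than the input by n - 1 elements.
print(len(loss), len(smoothed))

# Instead of appending dummy values, drop the first n - 1 steps so that
# each averaged value sits at the last step of its window.
steps_trimmed = steps[n - 1:]
print(len(steps_trimmed) == len(smoothed))
```

With this alignment, plotting `steps_trimmed` against `smoothed` would need no dummy points at the tail of the curve.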

check_result_170722.ipynb

%matplotlib inline

# learning [Exr,Exi,Eyr,Eyi,Ezr,Ezi] from ADDA

# Jul. 28, 2017

import numpy as np

import matplotlib.pyplot as plt

# from

# https://stackoverflow.com/questions/14313510/how-to-calculate-moving-average-using-numpy

def moving_average(a, n=3):

    ret = np.cumsum(a, dtype=float)

    ret[n:] = ret[n:] - ret[:-n]

    return ret[n - 1:] / n

# data1 = np.loadtxt('RES_170728_t2119/log_learn.170728_t0720', delimiter=',')

data1 = np.loadtxt('RES_170728_t2119/log_learn.170728_t0751', delimiter=',')

input1 = data1[:,0]

output1 = data1[:,1]

# moving average

NUM_AVERAGE = 50

output1 = moving_average(output1, n=NUM_AVERAGE)

for loop in range(NUM_AVERAGE - 1):

    output1 = np.append(output1, 1e-7)  # dummy

#

fig = plt.figure(figsize=(10,10),dpi=200)

ax1 = fig.add_subplot(2,1,1)

ax1.plot(input1, output1)

ax1.set_xlabel('step')

ax1.set_ylabel('loss')

ax1.set_yscale('log')

ax1.set_xlim([0, 3000000])

ax1.set_ylim([1e-5, 1.0])

ax1.grid(True)

# fig.show()

The curve only becomes this thin when about 50 samples are used for the average.

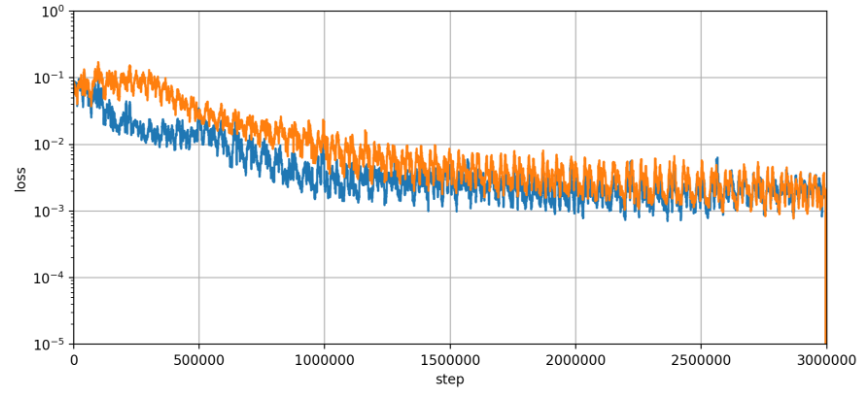
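As a rough check of why a window of about 50 is needed, the sketch below applies the same moving average to synthetic noise. The noise model (independent Gaussian samples around a constant level), the seed, and the window sizes are assumptions for illustration, not the actual loss log. For noise of this kind, the scatter of the averaged curve shrinks roughly as 1/sqrt(n).

```python
import numpy as np


def moving_average(a, n=3):
    # Cumulative-sum moving average (same function as in the notebook).
    ret = np.cumsum(a, dtype=float)
    ret[n:] = ret[n:] - ret[:-n]
    return ret[n - 1:] / n


# Synthetic stand-in for a noisy loss curve (assumption, not real data).
rng = np.random.RandomState(0)
noisy = 1.0 + 0.1 * rng.randn(100000)

# Standard deviation of the smoothed curve for several window sizes.
scatter = {}
for n in (1, 5, 50):
    scatter[n] = moving_average(noisy, n=n).std()
    print(n, scatter[n])
```

A small window such as 5 only reduces the scatter by a factor of about 2, while a window of 50 reduces it by a factor of about 7, which fits the observation that the curve stays thick for small windows.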

Loss history > plotting multiple runs

check_result_170722.ipynb

%matplotlib inline

# learning [Exr,Exi,Eyr,Eyi,Ezr,Ezi] from ADDA

# Jul. 28, 2017

import numpy as np

import matplotlib.pyplot as plt

def moving_average(a, n=3):

    # from

    # https://stackoverflow.com/questions/14313510/how-to-calculate-moving-average-using-numpy

    ret = np.cumsum(a, dtype=float)

    ret[n:] = ret[n:] - ret[:-n]

    return ret[n - 1:] / n

def add_lineplot(filepath, ax1):

    data1 = np.loadtxt(filepath, delimiter=',')

    input1 = data1[:,0]

    output1 = data1[:,1]

    # moving average

    NUM_AVERAGE = 50

    output1 = moving_average(output1, n=NUM_AVERAGE)

    for loop in range(NUM_AVERAGE - 1):

        output1 = np.append(output1, 1e-7)  # dummy

    ax1.plot(input1, output1)

fig = plt.figure(figsize=(10,10),dpi=200)

ax1 = fig.add_subplot(2,1,1)

ax1.grid(True)

ax1.set_xlabel('step')

ax1.set_ylabel('loss')

ax1.set_yscale('log')

ax1.set_xlim([0, 3000000])

ax1.set_ylim([1e-5, 1.0])

FILE_PATH1 = 'RES_170728_t0716/log_learn.170727_t2308'

FILE_PATH2 = 'RES_170728_t2119/log_learn.170728_t0951'

add_lineplot(FILE_PATH1, ax1)

add_lineplot(FILE_PATH2, ax1)

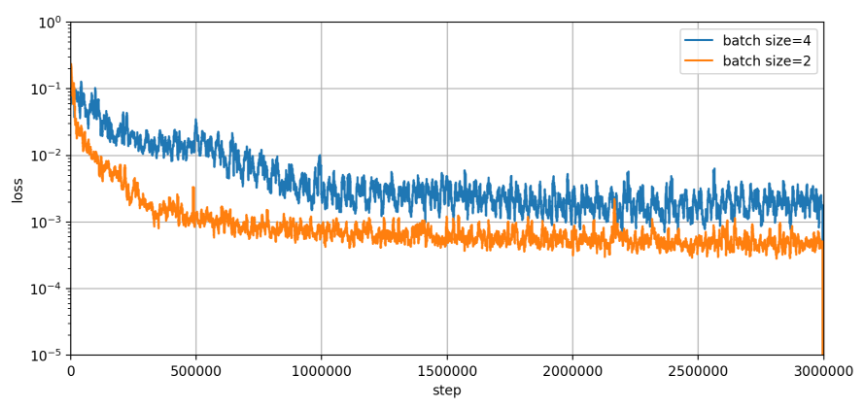

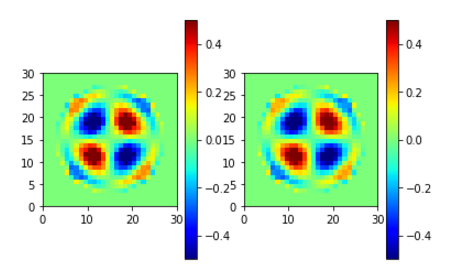

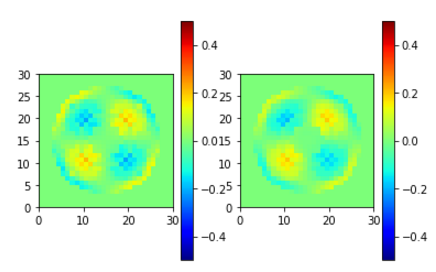

batch size 2

Too good.

What's the catch?

The run with batch size 20 had a large error.

Regret, as in "ホワイトアウト" (Whiteout) by regret girl.